TinyML Diffusion Models

Modern AI models are becoming increasingly large, demanding substantial computational resources and memory. This creates a gap between what these models require and what the available hardware can deliver. The efficiency techniques covered here narrow that gap by reducing model size, memory footprint, and ultimately energy consumption.

🧩 I. Introduction

This article synthesizes information from Lecture 18 of MIT’s EfficientML.ai course and supporting materials. Diffusion models, popularized by tools like Midjourney and OpenAI’s Sora, generate high-quality images and videos but are computationally demanding. The focus is on techniques that make these models more efficient, faster, and cheaper to run on hardware.

🌟 II. Key Concepts: Diffusion Model Basics

🔍 Generative vs. Discriminative Models:

- Discriminative Models: Predict decision boundaries (e.g., classifying an image).

- Generative Models: Predict the distribution of data (e.g., generating new images).

Diffusion models fall under the generative category.

💬 “Different from discriminative models which predict decision boundaries, generative models try to predict the distribution of the data.”

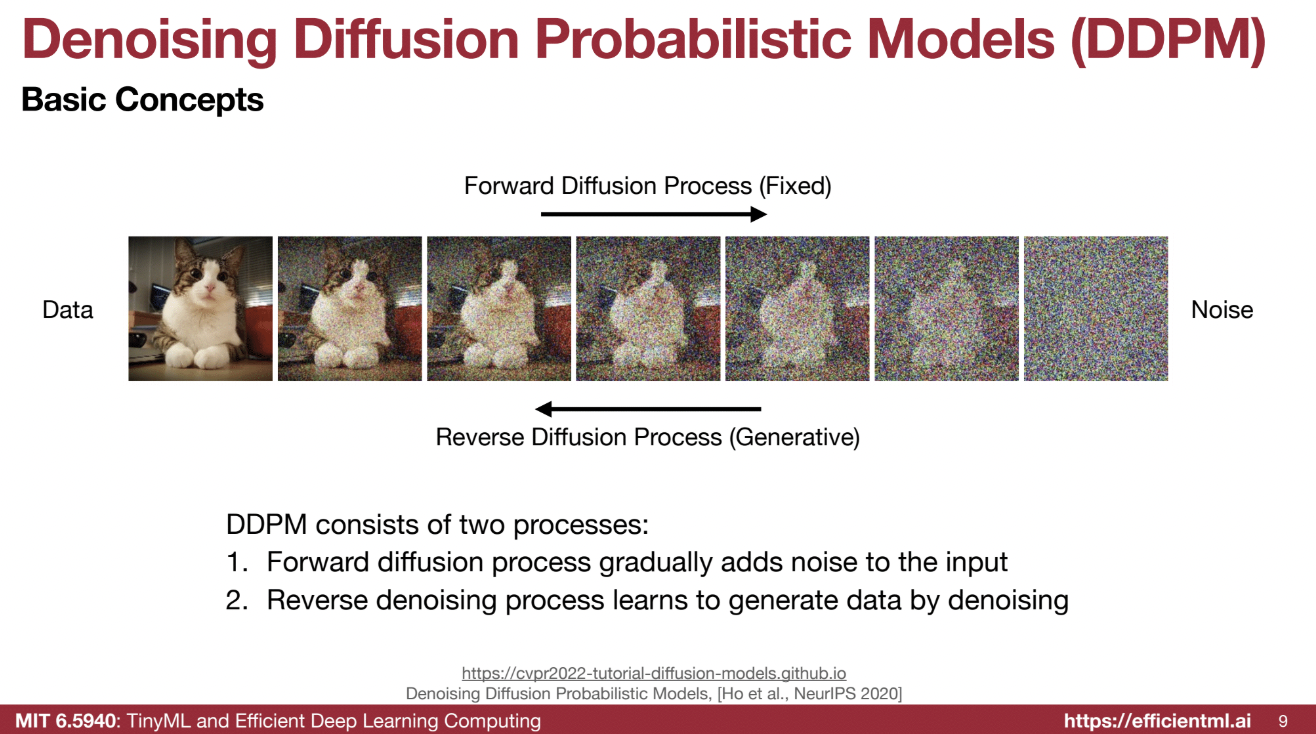

🔄 Forward and Reverse Diffusion Processes:

1️⃣ Forward Process (Noising):

- Gradually adds Gaussian noise to data over multiple steps (T), eventually transforming it into pure noise.

- Each step adds a small amount of noise based on a predefined beta (β) value.

💬 “During the forward diffusion process, we try to add noise to the data so the data becomes noise.”

- Mathematics:

$$ q(x_t \mid x_{t-1}) := \mathcal{N}\left(x_t;\ \sqrt{1 - \beta_t}\, x_{t-1},\ \beta_t I\right) $$

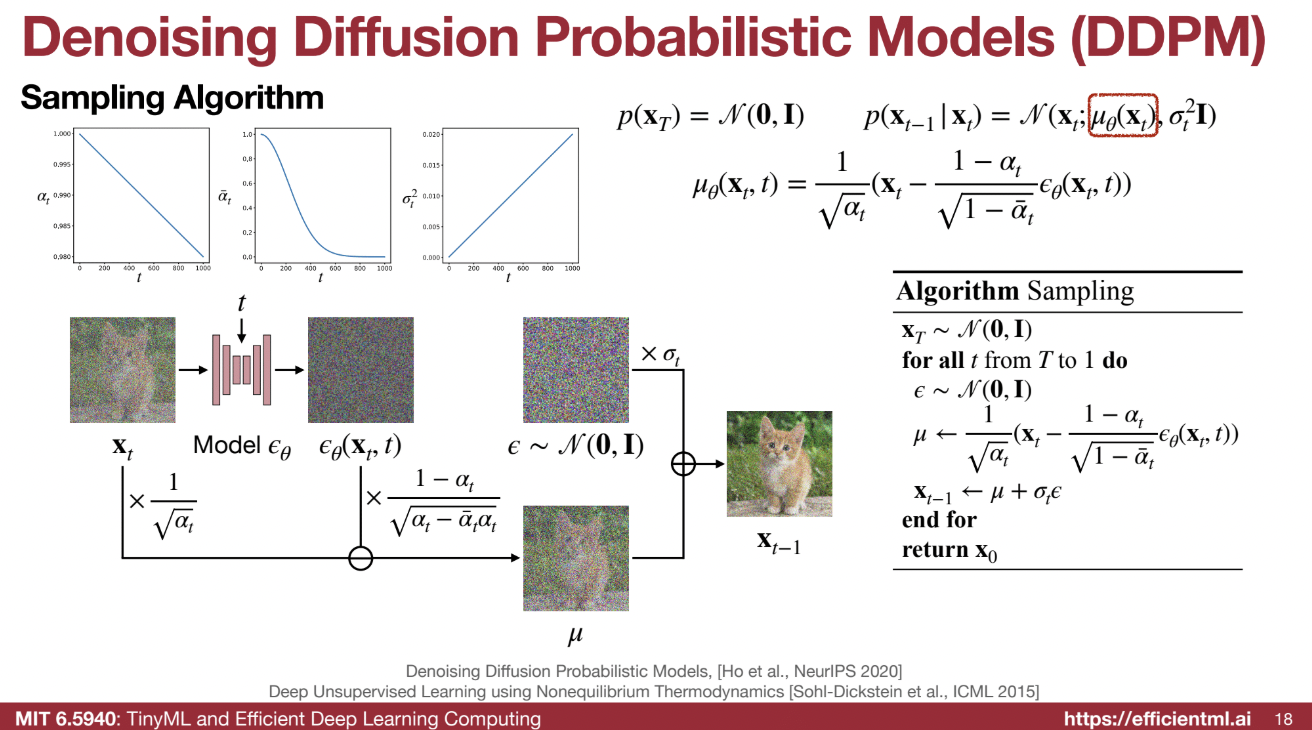
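As a rough illustration of the noising step above, here is a minimal NumPy sketch that applies it repeatedly. The linear β schedule, T = 1000, and the all-ones stand-in "image" are assumptions chosen for the example, not values from the lecture.

```python
import numpy as np

def forward_step(x_prev, beta_t, rng):
    """Sample x_t ~ N(sqrt(1 - beta_t) * x_prev, beta_t * I)."""
    noise = rng.standard_normal(x_prev.shape)
    return np.sqrt(1.0 - beta_t) * x_prev + np.sqrt(beta_t) * noise

rng = np.random.default_rng(0)
betas = np.linspace(1e-4, 0.02, 1000)  # assumed linear schedule, T = 1000
x = np.ones((64, 64))                  # stand-in "image" (assumption)

# Apply the Markov chain one step at a time.
for beta_t in betas:
    x = forward_step(x, beta_t, rng)

# After T steps the signal is almost gone: x looks like standard Gaussian noise.
print(x.mean(), x.std())
```

The printed mean should sit near 0 and the standard deviation near 1, which is what "the data becomes noise" means in practice.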

2️⃣ Reverse Process (Denoising):

- Gradually removes noise from noisy data, revealing the original data through iterative denoising.

💬 “In the reverse process, we gradually remove the noise and reveal the data.”

- Modeled as a Gaussian:

$$ p(x_{t-1} \mid x_t) = \mathcal{N}\left(x_{t-1};\ \mu_\theta(x_t),\ \sigma_t^2 I\right) $$

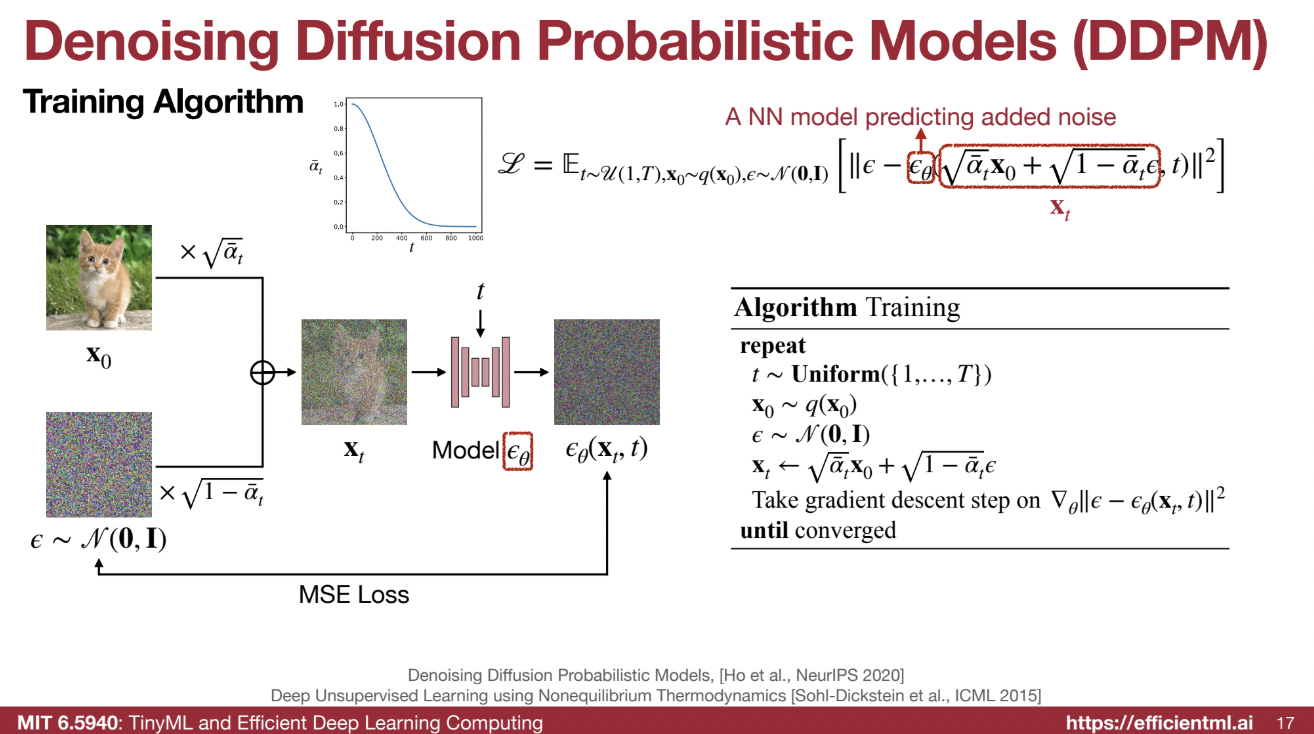

🧮 Mathematical Foundation:

- Forward Process: The forward process is a Markov chain, where each state depends only on the previous one. Composing the individual steps gives a closed form for any step $t$:

$$ x_t = \sqrt{\bar{\alpha}_t}\, x_0 + \sqrt{1 - \bar{\alpha}_t}\, \epsilon $$

where $\bar{\alpha}_t = \prod_{s=1}^{t} (1 - \beta_s)$ is the cumulative product of $(1 - \beta_t)$, and $\epsilon \sim \mathcal{N}(0, I)$ is Gaussian noise.

- The goal is for the distribution at time $T$ to approximate a standard Gaussian:

$$ q(x_T \mid x_0) \approx \mathcal{N}(0, I) $$
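The closed form means any $x_t$ can be drawn in one shot, without looping over earlier steps. The sketch below shows this under an assumed linear β schedule with T = 1000; the function name `q_sample` and the 0-based step index are conventions picked for the example.

```python
import numpy as np

def q_sample(x0, t, alpha_bar, rng):
    """Draw x_t directly from x_0; t is a 0-based step index."""
    eps = rng.standard_normal(x0.shape)
    x_t = np.sqrt(alpha_bar[t]) * x0 + np.sqrt(1.0 - alpha_bar[t]) * eps
    return x_t, eps

betas = np.linspace(1e-4, 0.02, 1000)   # assumed schedule
alpha_bar = np.cumprod(1.0 - betas)     # cumulative product of (1 - beta_t)

rng = np.random.default_rng(0)
x0 = np.ones((64, 64))                  # stand-in data (assumption)
x_T, _ = q_sample(x0, len(betas) - 1, alpha_bar, rng)

# Fraction of the original signal that survives at the last step.
signal_left = np.sqrt(alpha_bar[-1])
print(signal_left)
```

With this schedule `signal_left` is well below 0.01, so $x_T$ is almost entirely noise.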

🏋️ Training Process:

- A neural network, $\epsilon_\theta$, is trained to predict the noise added during the forward process.

💬 “The training part is trying to predict the noise and ensure it matches the noise we inserted.”

- Loss Function: Mean Squared Error (MSE) between predicted and actual noise.

💬 “We try to match the predicted noise with the original added noise to calculate an MSE loss.”
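A minimal sketch of that loss, assuming flattened data of shape `(batch, dim)` and a linear β schedule. The `zero_model` below is a hypothetical stand-in for $\epsilon_\theta$ that always predicts zero noise; a real network would be trained to drive this value down.

```python
import numpy as np

def ddpm_loss(eps_model, x0, alpha_bar, rng):
    """One Monte Carlo estimate of the noise-prediction MSE for a batch x0."""
    batch = x0.shape[0]
    t = rng.integers(0, len(alpha_bar), size=batch)   # random step per sample
    eps = rng.standard_normal(x0.shape)               # the noise we insert
    a = np.sqrt(alpha_bar[t])[:, None]
    b = np.sqrt(1.0 - alpha_bar[t])[:, None]
    x_t = a * x0 + b * eps                            # noised input
    eps_pred = eps_model(x_t, t)                      # the model's guess
    return np.mean((eps_pred - eps) ** 2)

betas = np.linspace(1e-4, 0.02, 1000)                 # assumed schedule
alpha_bar = np.cumprod(1.0 - betas)
rng = np.random.default_rng(0)
x0 = rng.standard_normal((256, 16))                   # toy data (assumption)

def zero_model(x_t, t):
    """Hypothetical untrained model: predicts no noise at all."""
    return np.zeros_like(x_t)

loss = ddpm_loss(zero_model, x0, alpha_bar, rng)
print(loss)
```

Because the inserted noise has unit variance, a model that predicts zero scores a loss close to 1; that is the baseline a trained network has to beat.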

🔄 Inference Process:

- Starts with a pure noise image at time $T$.

- The neural network predicts and subtracts noise iteratively to recover the image.

💬 “Given a noisy input at $x_T$, we pass it through the model $\theta$ to predict $x_{T-1}$.”

- Computationally intensive due to its iterative nature and pixel-wise predictions.

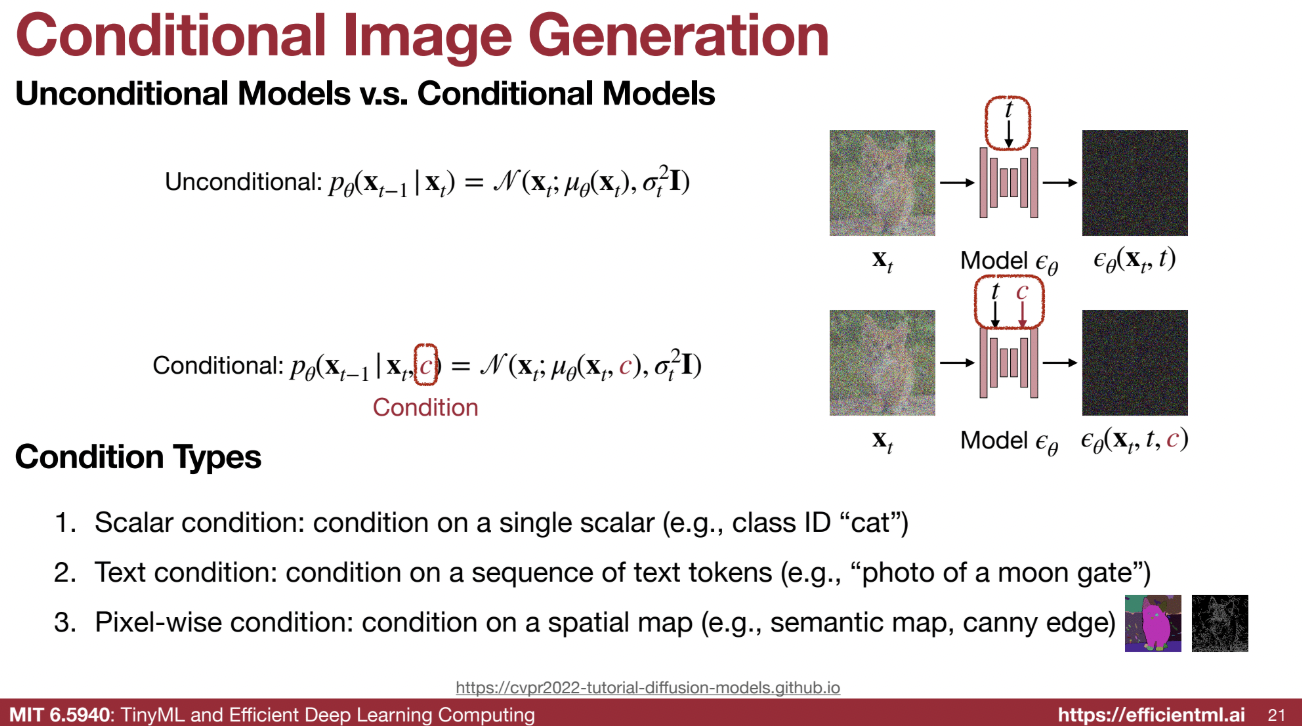
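The iterative loop can be sketched as follows. This is a simplified DDPM-style sampler that assumes $\sigma_t^2 = \beta_t$ (one common choice) and a linear schedule. To keep it checkable without a trained network, `oracle_eps` is a hypothetical noise predictor for a toy dataset whose only sample is the constant image `c`.

```python
import numpy as np

def ddpm_sample(eps_model, shape, betas, rng):
    """Run the reverse process from pure noise down to step 0."""
    alphas = 1.0 - betas
    alpha_bar = np.cumprod(alphas)
    x = rng.standard_normal(shape)                       # x_T: pure noise
    for t in range(len(betas) - 1, -1, -1):
        eps = eps_model(x, t)                            # predicted noise
        mean = (x - betas[t] / np.sqrt(1.0 - alpha_bar[t]) * eps) / np.sqrt(alphas[t])
        noise = rng.standard_normal(shape) if t > 0 else 0.0  # no noise on last step
        x = mean + np.sqrt(betas[t]) * noise
    return x

betas = np.linspace(1e-4, 0.02, 1000)                    # assumed schedule
alpha_bar = np.cumprod(1.0 - betas)
c = np.full((4, 4), 2.0)                                 # the only "image" in the toy dataset

def oracle_eps(x, t):
    """Exact noise for a dataset that contains only c (toy assumption)."""
    return (x - np.sqrt(alpha_bar[t]) * c) / np.sqrt(1.0 - alpha_bar[t])

sample = ddpm_sample(oracle_eps, c.shape, betas, np.random.default_rng(0))
print(np.abs(sample - c).max())
```

The loop calls the model once per step, so a 1000-step schedule costs 1000 network evaluations per image. That cost is what the fast sampling techniques later in the article attack.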

🎨 III. Conditional Diffusion Models

📌 Concept:

Generating images based on specific conditions instead of random samples.

💬 “We don’t want to just generate random images, but we want to condition the generation.”

📊 Types of Conditions:

- Scalar Condition (e.g., class ID):

- Incorporates conditions like “cat” using addition or adaptive normalization with an MLP.

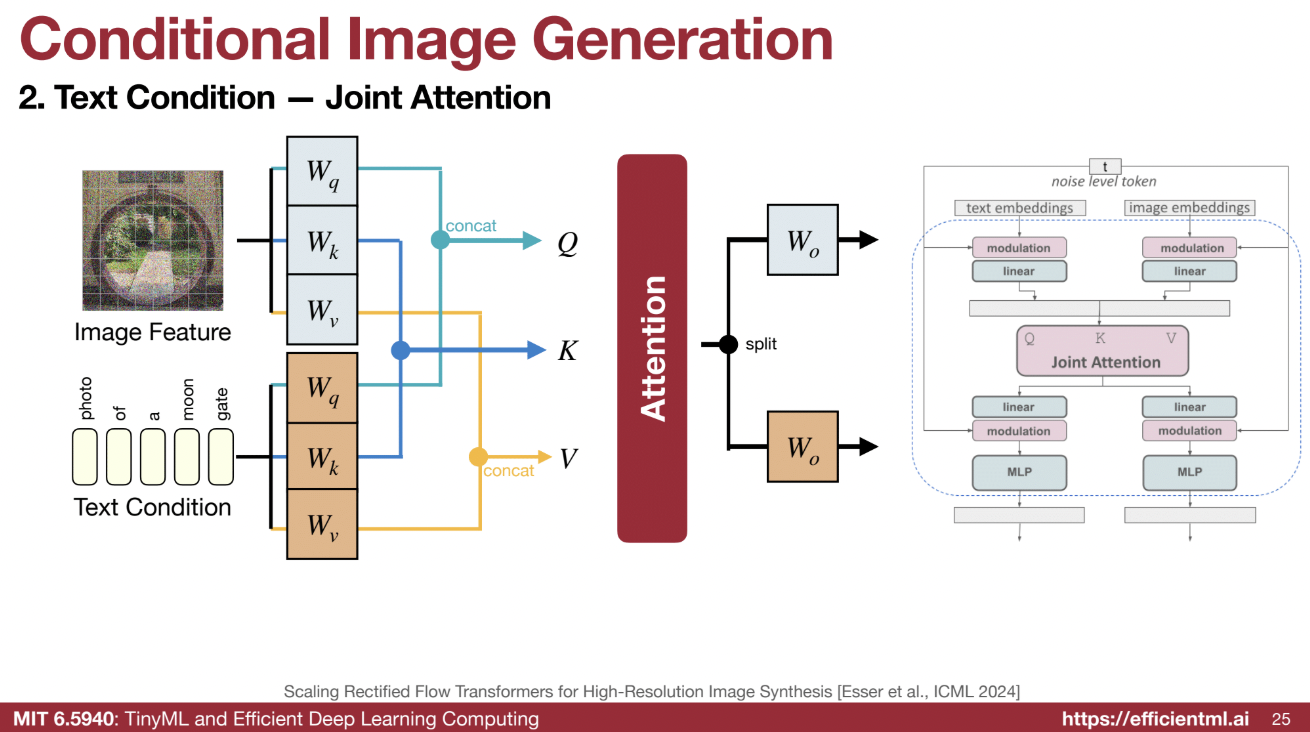

- Text Condition (e.g., text tokens):

- Uses cross-attention mechanisms to combine text and image features.

Treats the image as a query and text as key-value pairs.

- Uses cross-attention mechanisms to combine text and image features.

- Pixel-wise Condition (e.g., semantic maps):

- Uses ControlNet to condition the network on pixel-wise spatial maps.
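For the text condition, a single-head cross-attention layer might look like the sketch below. The projection matrices `Wq`, `Wk`, `Wv`, the feature sizes, and the random inputs are all illustrative assumptions; real models use learned weights and multiple heads.

```python
import numpy as np

def softmax(z, axis=-1):
    z = z - z.max(axis=axis, keepdims=True)   # subtract max for numerical stability
    e = np.exp(z)
    return e / e.sum(axis=axis, keepdims=True)

def cross_attention(image_feats, text_feats, Wq, Wk, Wv):
    """Image tokens are the queries; text tokens supply keys and values."""
    Q = image_feats @ Wq                      # (n_img, d)
    K = text_feats @ Wk                       # (n_txt, d)
    V = text_feats @ Wv                       # (n_txt, d)
    weights = softmax(Q @ K.T / np.sqrt(Q.shape[-1]))   # (n_img, n_txt)
    return weights @ V, weights

rng = np.random.default_rng(0)
n_img, n_txt, d_img, d_txt, d = 16, 5, 32, 24, 8       # assumed sizes
image_feats = rng.standard_normal((n_img, d_img))
text_feats = rng.standard_normal((n_txt, d_txt))
Wq = rng.standard_normal((d_img, d))
Wk = rng.standard_normal((d_txt, d))
Wv = rng.standard_normal((d_txt, d))

out, weights = cross_attention(image_feats, text_feats, Wq, Wk, Wv)
print(out.shape, weights.shape)
```

Each image token ends up as a weighted mix of text-token values, which is how the prompt steers individual image regions.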

🧭 Classifier Guidance:

- Naive Guidance:

  - Trains a separate classifier to guide the generated image toward an intended class.

- Classifier-Free Guidance:

  - Rewrites classifier guidance using Bayes’ rule to avoid training separate networks.

  💬 “Can we just use one network and avoid training a separate classifier network?”

  - Balances quality and diversity by combining conditional and unconditional models during inference.

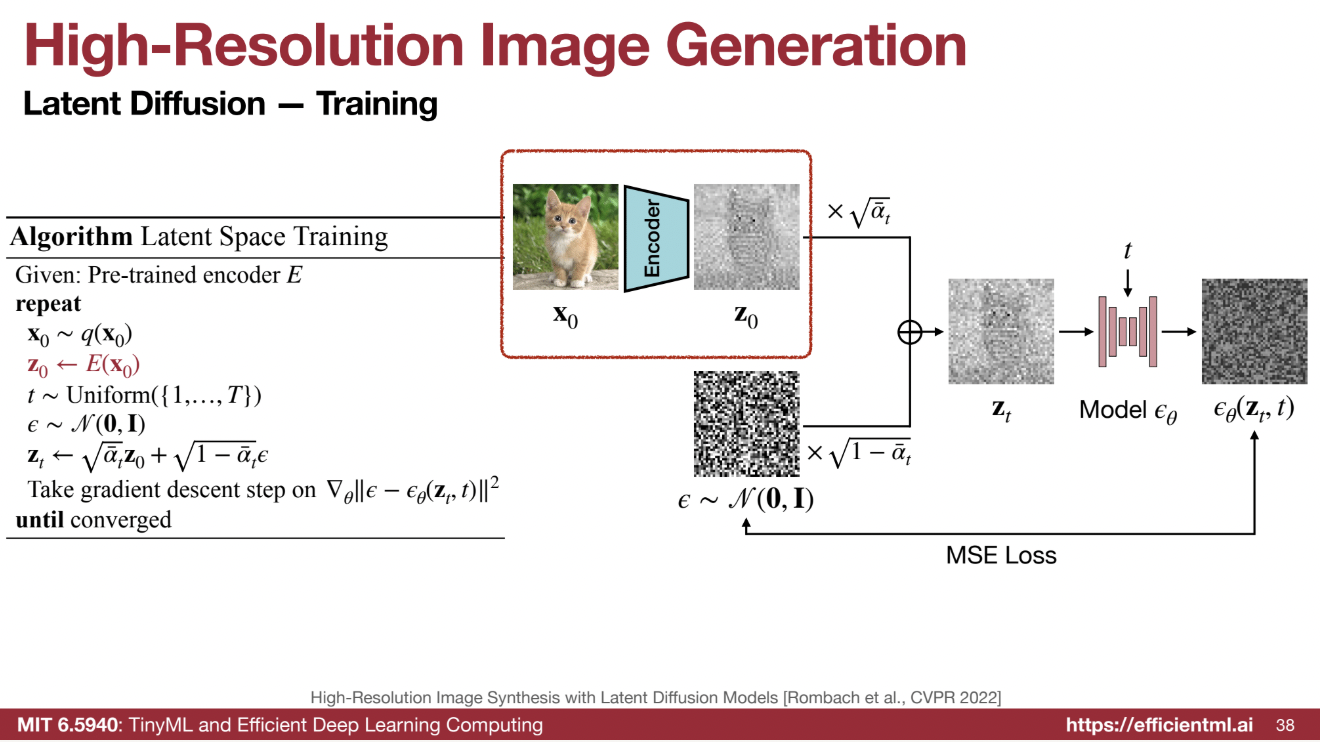

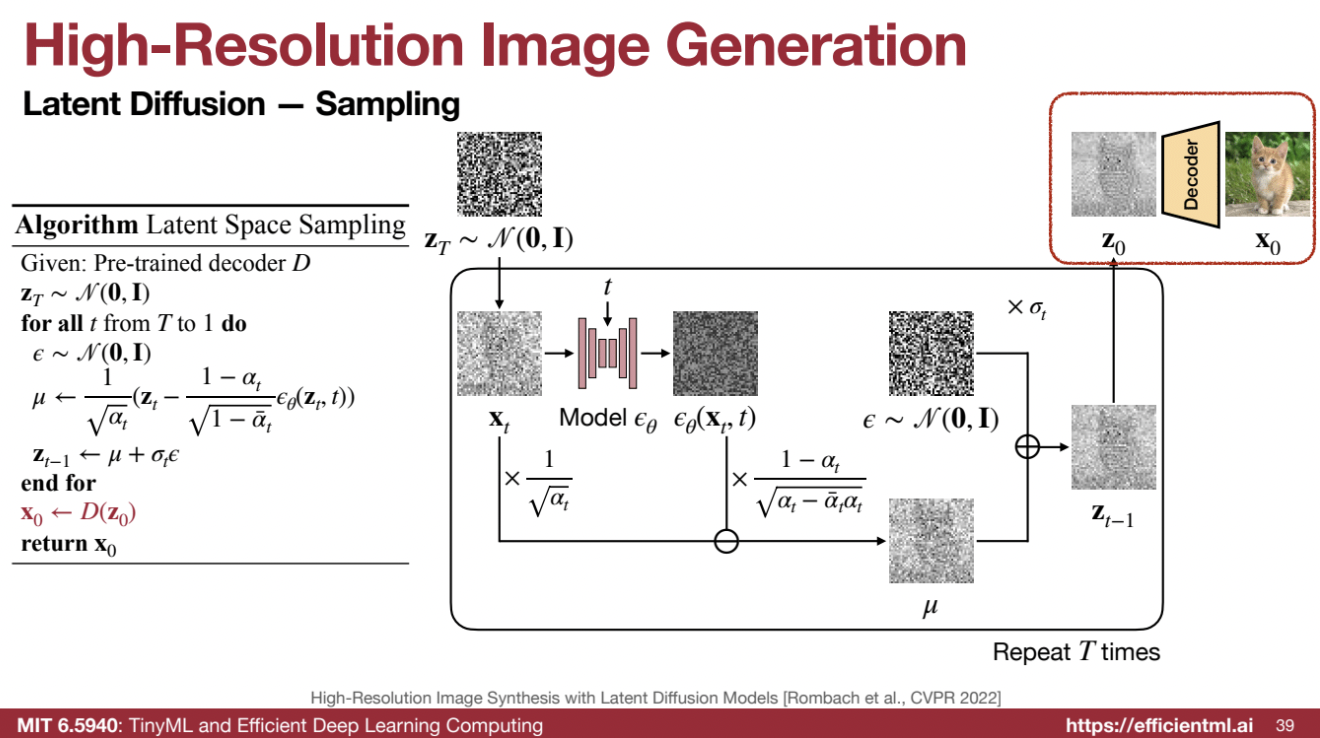
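One common way to write the classifier-free combination is sketched below. The parameterization is an assumption: here `w = 1` recovers the purely conditional prediction and `w > 1` pushes further toward the condition, while some papers write the same idea with a `1 + w` scale instead.

```python
import numpy as np

def cfg_noise(eps_cond, eps_uncond, w):
    """Blend conditional and unconditional noise predictions with guidance scale w."""
    return eps_uncond + w * (eps_cond - eps_uncond)

# Toy predictions standing in for two forward passes of the same network.
eps_cond = np.array([1.0, 2.0, 3.0])
eps_uncond = np.array([0.5, 0.5, 0.5])

guided = cfg_noise(eps_cond, eps_uncond, w=2.0)
print(guided)
```

Larger `w` tends to favor fidelity to the condition at the cost of sample diversity, which is the quality and diversity trade-off mentioned above.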

🔑 IV. Latent Diffusion Models

📌 Concept:

- Applies diffusion models in a compressed latent space instead of the high-resolution pixel space.

💬 “Rather than directly performing diffusion on a high-resolution image, we first pass it through an encoder and then denoise it at a smaller resolution.”

🔄 Process:

- Encoder: A pre-trained Variational Autoencoder (VAE) compresses the input image into a lower-resolution latent representation.

- Diffusion: The diffusion model operates in this latent space.

- Decoder: The latent representation is decoded back to the original resolution.

🌟 Benefits:

- Reduced computational costs by operating in a smaller space.

- Faster synthesis due to simplified denoising in a compressed space.

🔧 Deep Compression Autoencoder (DC-AE):

- A novel approach for compressing latent space beyond traditional VAEs.

- 64x compression (compared to 8x in Stable Diffusion’s VAE) achieved through:

  - Residual autoencoding techniques.

  - Three-stage adaptation process:

    - Low-resolution full training.

    - High-resolution latent adaptation, applied to the middle layers only.

    - GAN-loss refinement for the final decoder layer.

- Advantages:

  - Significantly improves speed.

  - Reduces memory consumption.

  - Decreases the number of tokens needed for diffusion.

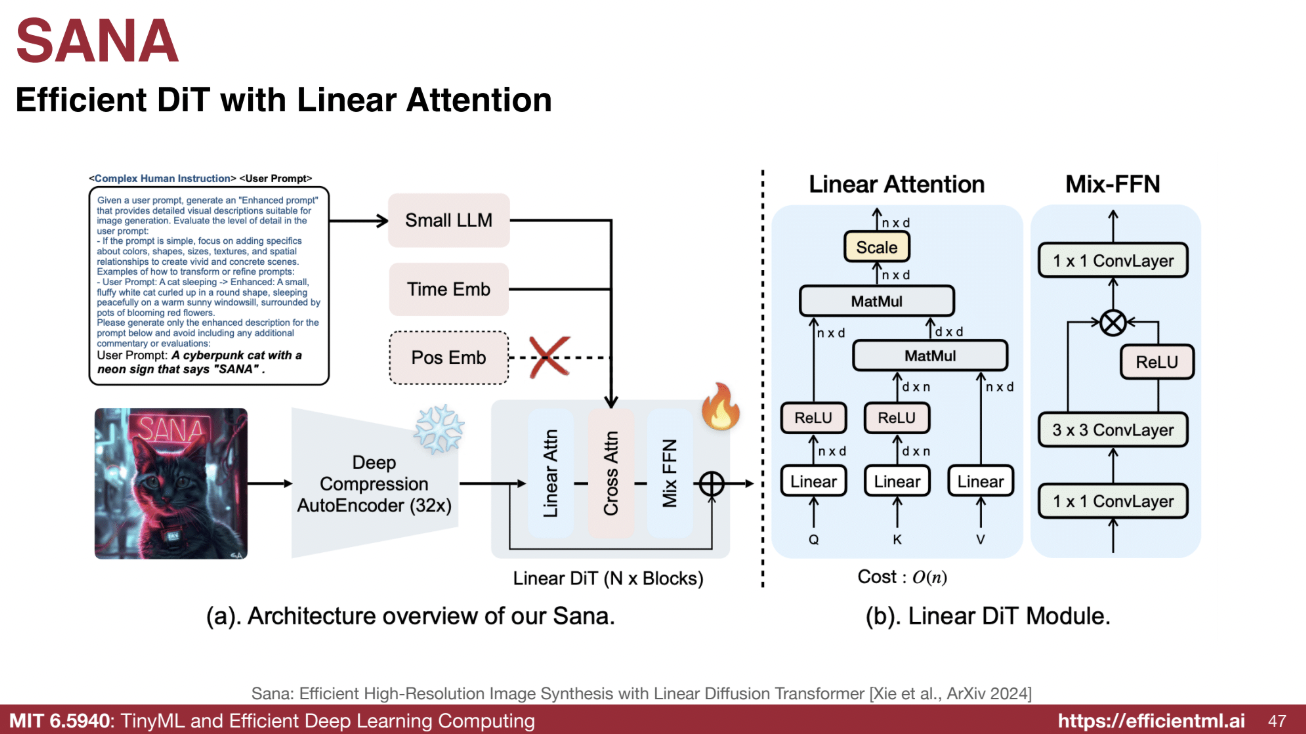
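To get a feel for why higher compression reduces the token count, here is a small back-of-the-envelope calculation. It assumes the compression factor applies to each spatial side and that every latent position becomes one token (`patch_size=1`); both are assumptions for illustration, and `latent_tokens` is a hypothetical helper.

```python
def latent_tokens(image_size, compression, patch_size=1):
    """Number of latent positions for a square image (per-side compression assumed)."""
    side = image_size // (compression * patch_size)
    return side * side

for factor in (8, 64):
    print(factor, latent_tokens(1024, factor))
# 8 16384
# 64 256
```

Under these assumptions a 1024x1024 image drops from 16,384 latent positions to 256, a 64-fold reduction in what the diffusion model has to process.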

🚀 SANA:

- An efficient image generation diffusion model built using DC-AE and linear attention modules.

- Features:

  - Uses a decoder-only large language model as the text encoder, guided by complex human instructions so that it produces better prompts for image generation.

  - Incorporates a VLM (Visual Language Model) to re-caption images and refine captions for training, creating a positive feedback loop.

Deep Compression Autoencoder (DC-AE)

- High Compression Rate: Compresses images by a factor of 64x, far exceeding the 8x compression of standard Variational Autoencoders (VAEs).

- Techniques: Utilizes residual autoencoding and a three-stage adaptation process for high resolution.

- Efficiency: Significantly reduces the number of tokens and computational complexity by operating in a smaller latent space.

- Quality Maintenance: Designed to preserve image quality even at high compression ratios.

Linear Diffusion Transformer

- Efficient Attention: Implements a linear attention mechanism, which is highly efficient for high-resolution images with large numbers of tokens.

- Scalability: Reduces the quadratic computational growth typically associated with resolution increases.
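The sketch below shows why linear attention avoids the quadratic cost. It assumes a ReLU feature map as the kernel (one common choice, picked here for illustration) and checks the result against the explicit N x N version of the same kernel attention.

```python
import numpy as np

def relu_linear_attention(Q, K, V, eps=1e-6):
    """Kernel attention in O(N * d^2): never forms the N x N score matrix."""
    phi_q, phi_k = np.maximum(Q, 0.0), np.maximum(K, 0.0)
    kv = phi_k.T @ V                    # (d, d_v), summed over all tokens once
    k_sum = phi_k.sum(axis=0)           # (d,)
    num = phi_q @ kv                    # (N, d_v)
    den = phi_q @ k_sum + eps           # (N,)
    return num / den[:, None]

def relu_quadratic_attention(Q, K, V, eps=1e-6):
    """Same kernel attention computed the O(N^2 * d) way, for comparison."""
    scores = np.maximum(Q, 0.0) @ np.maximum(K, 0.0).T   # (N, N)
    return (scores @ V) / (scores.sum(axis=1, keepdims=True) + eps)

rng = np.random.default_rng(0)
N, d = 256, 16                          # assumed token count and head size
Q, K, V = (rng.standard_normal((N, d)) for _ in range(3))

fast = relu_linear_attention(Q, K, V)
slow = relu_quadratic_attention(Q, K, V)
print(np.abs(fast - slow).max())
```

Reordering the matrix products is the whole trick: `phi_k.T @ V` is a small d x d matrix, so doubling the number of tokens doubles the work instead of quadrupling it.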

Small LLM as Text Encoder

- Model Choice: Uses a decoder-only LLM (e.g., Google’s Gemma) instead of traditional encoder-decoder models like T5.

- Enhanced Prompts: Prepends complex human instructions to user prompts for detailed visual descriptions.

- Example:

  - Simple Prompt: “A man is walking.”

  - Enhanced Prompt: “A man in a worn leather jacket walks briskly down a cobblestone street. He has dark hair and is silhouetted by the setting sun. He wears a faded blue scarf around his neck.”

Visual Language Model (VLM) for Image Re-captioning

- Detailed Captions: Generates descriptive captions for training images, offering more detail than human labelers while avoiding hallucination.

- Example:

- Simple Caption: “Top view of the written ‘HAPPY VALENTINE’ on a tart chocolate cake.”

- Expanded Caption: “The image captures a delightful scene of a Valentine’s Day celebration. At the center of the image is a round chocolate cake, rich and inviting…”

Co-Design of VLM and Diffusion Model

- Synergy: The VLM generates detailed training data for the diffusion model, and the diffusion model’s outputs are used to refine the VLM. This iterative process improves both models simultaneously.

Speed and Quality

- Acceleration:

- 1K image generation: 25x faster.

- 4K image generation: 100x faster.

- Quality: Produces images comparable in quality to leading diffusion models like FLUX.

By integrating these features, Sana sets a new benchmark for efficient, high-quality image generation. Its combination of compression, advanced attention mechanisms, and detailed text-to-image capabilities makes it a powerful tool in the field of generative modeling.

🎨 V. Image Editing and Personalization

🖌️ Image Editing:

- Uses diffusion models to edit images by:

  - Adding noise to the image together with the user’s edit strokes.

  - Applying the reverse diffusion process to generate the modified image.
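The two editing steps can be sketched as "noise up to an intermediate step, then denoise from there". The intermediate step `t0`, the linear schedule, and the choice $\sigma_t^2 = \beta_t$ are assumptions; `oracle_eps` is again a hypothetical noise predictor for a toy dataset containing only the image `c`, used so the example runs without a trained model.

```python
import numpy as np

def edit_by_diffusion(x_edit, eps_model, betas, t0, rng):
    """Noise a stroke-edited image to step t0, then run the reverse process."""
    alphas = 1.0 - betas
    alpha_bar = np.cumprod(alphas)
    # 1) Add noise to the edited image (partial forward process).
    eps = rng.standard_normal(x_edit.shape)
    x = np.sqrt(alpha_bar[t0]) * x_edit + np.sqrt(1.0 - alpha_bar[t0]) * eps
    # 2) Denoise from t0 back to 0 (partial reverse process).
    for t in range(t0, -1, -1):
        eps_hat = eps_model(x, t)
        mean = (x - betas[t] / np.sqrt(1.0 - alpha_bar[t]) * eps_hat) / np.sqrt(alphas[t])
        noise = rng.standard_normal(x.shape) if t > 0 else 0.0
        x = mean + np.sqrt(betas[t]) * noise
    return x

betas = np.linspace(1e-4, 0.02, 1000)            # assumed schedule
alpha_bar = np.cumprod(1.0 - betas)
c = np.full((4, 4), 2.0)                         # the only image the toy model knows

def oracle_eps(x, t):
    return (x - np.sqrt(alpha_bar[t]) * c) / np.sqrt(1.0 - alpha_bar[t])

rough_edit = np.zeros((4, 4))                    # stand-in for an image with strokes
result = edit_by_diffusion(rough_edit, oracle_eps, betas, t0=400,
                           rng=np.random.default_rng(0))
print(np.abs(result - c).max())
```

In this sketch a smaller `t0` keeps the output closer to the edited input, while a larger `t0` gives the model more freedom to repaint it.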

🎭 Personalized Diffusion Models (DreamBooth):

- Aims to generate personalized images of specific subjects or styles.

- Fine-tunes a text-to-image model using a few images of the desired subject.

- Note: This process is subject-specific and must be repeated for each new subject.

⚡ VI. Fast Sampling Techniques

🌀 Denoising Diffusion Implicit Models (DDIM):

- Reduces sampling steps by using a non-Markovian forward process that shares the same diffusion kernel and loss as DDPM.

- Larger time steps are allowed, reducing computation without retraining models.
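A deterministic DDIM-style sampler (the $\eta = 0$ case) can be sketched as below. The evenly spaced sub-schedule, the linear β schedule, and the toy `oracle_eps` predictor for a dataset containing only `c` are assumptions made so the example is self-contained.

```python
import numpy as np

def ddim_sample(eps_model, shape, alpha_bar, num_steps, rng):
    """Deterministic DDIM sampling on a strided subset of the training steps."""
    T = len(alpha_bar)
    steps = np.linspace(T - 1, 0, num_steps).round().astype(int)  # e.g. 50 of 1000
    x = rng.standard_normal(shape)
    for i, t in enumerate(steps):
        eps = eps_model(x, t)
        # Estimate the clean image implied by the current noise prediction.
        x0_pred = (x - np.sqrt(1.0 - alpha_bar[t]) * eps) / np.sqrt(alpha_bar[t])
        # Jump straight to the next (earlier) step in the sub-schedule.
        a_prev = alpha_bar[steps[i + 1]] if i + 1 < len(steps) else 1.0
        x = np.sqrt(a_prev) * x0_pred + np.sqrt(1.0 - a_prev) * eps
    return x

betas = np.linspace(1e-4, 0.02, 1000)            # assumed training schedule
alpha_bar = np.cumprod(1.0 - betas)
c = np.full((4, 4), 2.0)

def oracle_eps(x, t):
    return (x - np.sqrt(alpha_bar[t]) * c) / np.sqrt(1.0 - alpha_bar[t])

sample = ddim_sample(oracle_eps, c.shape, alpha_bar, num_steps=50,
                     rng=np.random.default_rng(0))
print(np.abs(sample - c).max())
```

The same noise-prediction network is reused unchanged; only the sampling schedule differs, which is why no retraining is needed.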

🏋️ Progressive Distillation:

- Reduces sampling steps through a distillation process:

  - A “student” model learns to perform multiple denoising steps in one iteration.

  - Successive distillations with new student models further reduce steps.

- ⚠️ May slightly impact quality but offers faster inference.
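If each student matches two teacher steps with one of its own, the step count halves every round. The tiny helper below tabulates that; the halving rule follows the summary table at the end of this article, while the starting and target step counts are just example numbers.

```python
def distillation_schedule(teacher_steps, target_steps):
    """Sampling steps after each round, assuming each round halves the count."""
    if target_steps < 1:
        raise ValueError("target_steps must be at least 1")
    schedule = [teacher_steps]
    while schedule[-1] > target_steps:
        schedule.append(max(schedule[-1] // 2, 1))
    return schedule

print(distillation_schedule(1024, 4))
# [1024, 512, 256, 128, 64, 32, 16, 8, 4]
```

Eight rounds take a 1024-step sampler down to 4 steps, with each round's student becoming the next round's teacher.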

🚀 VII. Acceleration Techniques

🧩 Sparsity:

- Spatial Sparsity:

  - Exploits the fact that only edited regions of images need resynthesizing.

  💬 “We can just change whatever we edited; for unedited regions, we reuse the feature maps from the original model.”

  - Uses Sparse Incremental Generative Engine (SIGE) to compute convolution only over edited blocks.

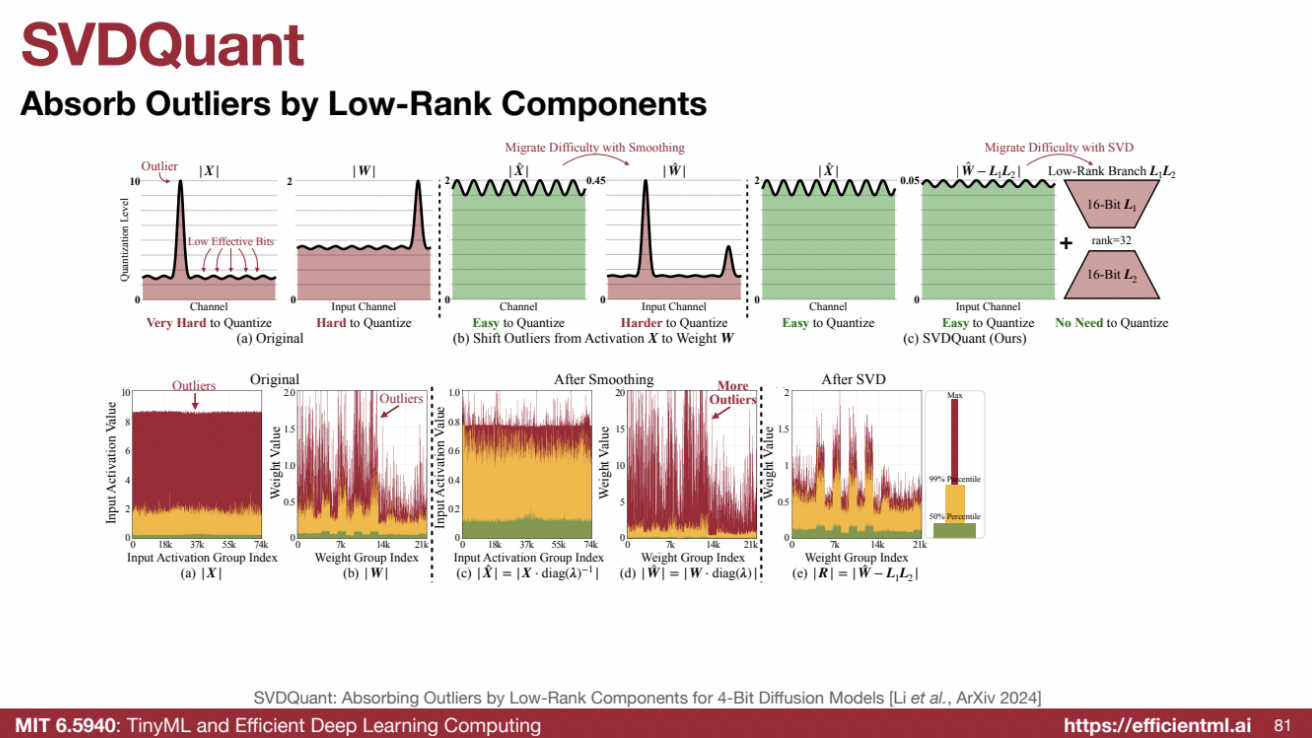
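The block-reuse idea can be illustrated with a deliberately simplified sketch. It uses a pointwise operation in place of a convolution (a real convolution also needs a halo of neighboring pixels around each block), and `sparse_update` is a hypothetical helper, not the SIGE API.

```python
import numpy as np

def sparse_update(original, edited, cached_out, op, block=8):
    """Recompute `op` only on blocks that differ; reuse cached output elsewhere."""
    out = cached_out.copy()
    height, width = original.shape
    recomputed = 0
    for i in range(0, height, block):
        for j in range(0, width, block):
            sl = (slice(i, i + block), slice(j, j + block))
            if not np.array_equal(original[sl], edited[sl]):
                out[sl] = op(edited[sl])       # only edited blocks cost compute
                recomputed += 1
    return out, recomputed

def op(x):
    """Pointwise stand-in for a layer (assumption): ReLU followed by scaling."""
    return np.maximum(x, 0.0) * 2.0

rng = np.random.default_rng(0)
original = rng.standard_normal((32, 32))
cached_out = op(original)                      # feature map from the original image

edited = original.copy()
edited[4:10, 4:10] += 1.0                      # a small user edit

out, recomputed = sparse_update(original, edited, cached_out, op)
print(recomputed, "of", (32 // 8) ** 2, "blocks recomputed")
```

The edit touches 4 of the 16 blocks, so only a quarter of the work is redone while the output matches a full recomputation.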

🛠️ Quantization:

- SVDQuant:

  - Solves issues with outliers in diffusion models that prevent efficient quantization of weights and activations.

  - Techniques:

    - Smoothing methods to shift quantization difficulty from activations to weights.

    - Low-rank branches to absorb weight outliers, enabling 4-bit quantization for both weights and activations.

  - Results:

    - Significant speedup and memory savings.

    - Fuses kernels to eliminate low-rank component overhead, further improving performance.

The Problem with Quantization

- Challenge: Diffusion models contain outliers (extreme values far from the mean) in weights and activations, making direct quantization to 4-bit challenging.

- Impact: Outliers cause significant accuracy loss when quantized without proper handling.

Smoothing

- Objective: Migrate quantization difficulty from activations to weights by smoothing activations.

- Effect: Smoothing makes activations easier to quantize but shifts outliers to the weights, requiring further processing.
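A minimal sketch of the smoothing step: divide each activation channel by a factor and multiply the matching weight row by the same factor, so the layer output is unchanged. The specific formula for the factor (a SmoothQuant-style balance with `alpha=0.5`) and the toy data are assumptions, not taken from the lecture.

```python
import numpy as np

def smooth(X, W, alpha=0.5):
    """Rescale per input channel so that X @ W is unchanged but X is flatter."""
    act_max = np.abs(X).max(axis=0)            # per input channel, over tokens
    w_max = np.abs(W).max(axis=1)              # per input channel, over outputs
    s = act_max ** alpha / w_max ** (1.0 - alpha)
    return X / s, W * s[:, None], s

rng = np.random.default_rng(0)
X = rng.standard_normal((32, 16))              # activations: (tokens, in_channels)
X[:, 5] *= 50.0                                # one outlier channel
W = 0.1 * rng.standard_normal((16, 8))         # weights: (in_channels, out_channels)

X_s, W_s, s = smooth(X, W)
print(np.abs(X).max(), "->", np.abs(X_s).max())   # activation range shrinks
print(np.abs(W).max(), "->", np.abs(W_s).max())   # weight range grows
```

The activation range shrinks while the weight range grows, which is exactly the "shifted outliers" that the low-rank branch has to handle next.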

Singular Value Decomposition (SVD) and Low-Rank Approximation

- Decomposition: Applies SVD to split the weights into:

  - Low-Rank Component: Absorbs the outliers. It is kept in high precision (e.g., 16-bit) but is computationally inexpensive because its rank is small.

  - Residual Component: What remains after subtracting the low-rank component. Its value range is much narrower, which makes it far easier to quantize.

- Goal: Simplify quantization by isolating outliers in the low-rank branch.

Quantization

- Process: Quantizes both weights and activations to 4-bit precision after handling outliers.

- Result: Achieves efficient quantization without significant quality degradation.
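The effect of the low-rank branch can be seen on weights alone in the toy sketch below. It uses simulated (quantize then dequantize) symmetric per-tensor 4-bit quantization, a rank-1 branch, and a synthetic weight matrix with one outlier row; all of these are simplifying assumptions, and activations are left out entirely.

```python
import numpy as np

def quantize_int4(M):
    """Symmetric per-tensor 4-bit fake quantization (quantize, then dequantize)."""
    scale = np.abs(M).max() / 7.0
    q = np.clip(np.round(M / scale), -8, 7)
    return q * scale

def svd_split(W, rank):
    """Split W into a low-rank product L1 @ L2 and a residual R."""
    U, S, Vt = np.linalg.svd(W, full_matrices=False)
    L1 = U[:, :rank] * S[:rank]                # (d_in, rank)
    L2 = Vt[:rank]                             # (rank, d_out)
    return L1, L2, W - L1 @ L2

rng = np.random.default_rng(0)
W = 0.02 * rng.standard_normal((64, 64))
W[3, :] += 5.0 * rng.standard_normal(64)       # one row of large outliers

L1, L2, R = svd_split(W, rank=1)
W_direct = quantize_int4(W)                    # naive: quantize everything
W_svdq = L1 @ L2 + quantize_int4(R)            # low-rank kept in full precision

err_direct = np.linalg.norm(W - W_direct)
err_svdq = np.linalg.norm(W - W_svdq)
print(err_direct, err_svdq)
```

With the outlier row moved into the low-rank branch, the residual has a much narrower range, so the 4-bit grid is far finer and the reconstruction error drops sharply.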

Kernel Fusion

- Technique: Combines computations of the low-rank branch with the main branch to minimize computational overhead.

- Implementation:

  - Down Projection: Fused with the quantization kernel that precedes it, since both read the same input.

  - Up Projection: Fused with the 4-bit computation kernel, since both write to the same output.

Benefits of SVDQuant

- Speedup:

  - Achieves over 3x speedup by utilizing 4-bit arithmetic.

  - Demonstrated 3.5x speedup on a 4090 GPU.

- Memory Savings:

  - Reduces memory usage from 23 GB to approximately 6 GB.

  - Enables running diffusion models on lower-resource hardware.

- Compatibility:

  - Compatible with LoRA (Low-Rank Adaptation) models, allowing integration without re-quantizing weights.

- Quality:

  - Maintains high-quality output compared to naive quantization methods that degrade image quality.

By combining smoothing, low-rank decomposition, 4-bit quantization, and kernel fusion, SVDQuant offers a robust solution for accelerating diffusion models while reducing memory consumption and maintaining output fidelity.

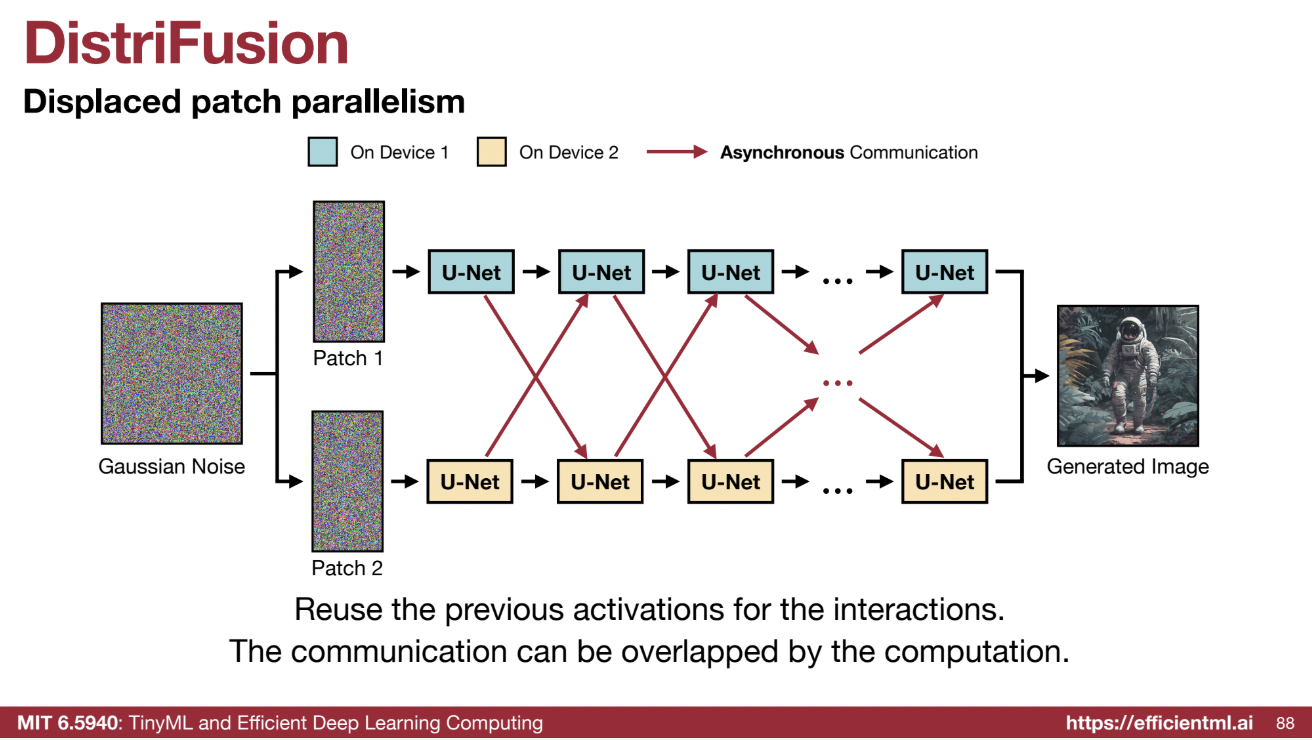

🤝 Parallelism:

- DistriFusion:

  - Exploits similarity between inputs in adjacent time steps.

  - Reuses slightly stale activations from the previous time step, so GPUs do not have to wait on each other for fresh ones.

  - Overlaps communication of activations with computation, enabling high-resolution image generation.

  - Benefits:

    - Significant speedups.

    - Efficient splitting of image generation across multiple GPUs.

Summary

| Model | Technique | Goal/Problem Solved | Real-World Application |
|---|---|---|---|
| Denoising Diffusion Probabilistic Models (DDPM) | Forward process: Gradually add Gaussian noise to data. Reverse process: Gradually denoise from a noisy distribution to reveal the original data. | Generate data by learning to reverse the noising process. | Image generation, outperforming GANs on image datasets. |
| Conditional Diffusion Models | Condition the generation process on various inputs like class labels, text prompts, or pixel-wise conditions. | Allow for controlled generation of images based on specific conditions. | Generating images of a specific class (e.g., cat or dog), text-to-image generation, image editing. |
| Latent Diffusion Models | Apply diffusion process in a lower-dimensional latent space obtained using a Variational Autoencoder (VAE). | Reduce computational cost of diffusion by operating in a compressed latent space. | High-resolution image generation, enabling faster synthesis (e.g., Stable Diffusion). |
| Deep Compression Autoencoder (DC-AE) | Compresses images by a factor of 64x, compared to 8x by standard VAEs, using residual autoencoding and a three-stage adaptation to high resolution. | Further reduce computational cost by compressing the latent space. Optimizes high spatial compression autoencoders. | Accelerates image generation in diffusion models and enables high-resolution image generation (e.g., Sana). |
| Sana | Combines a deep compression autoencoder with a linear diffusion transformer, a small LLM as a text encoder, and a visual language model (VLM) for image re-captioning. | Achieve high-resolution, low-cost image generation with faster inference and high quality. | High-resolution image generation (e.g., 4K). Achieves 106x speedup on an A100 GPU. |
| Denoising Diffusion Implicit Models (DDIM) | Non-Markovian forward process allowing for larger time steps during sampling. | Accelerate the sampling process of diffusion models by reducing the number of steps needed to denoise an image. | Faster image generation compared to DDPM. |
| Progressive Distillation | Distills a deterministic DDIM sampler into the same model architecture, where a “student” model learns from two sampling steps of the teacher model. | Further reduces sampling steps by learning a student model from a teacher model, making the process faster. | Faster image generation with fewer steps. |
| Spatially Sparse Inference | Reuse feature maps from previously generated images to only recompute regions that have been edited, for tasks such as image inpainting. | Reduces computation by focusing only on the edited portions of an image during image editing. | Real-time, interactive photo editing applications, image inpainting, and image editing. |
| SVDQuant | Combines smoothing and Singular Value Decomposition (SVD) to quantize both weights and activations of diffusion models to 4-bit precision. Fuses low-rank components. | Reduces memory and accelerates computation by quantizing weights and activations to low-bit without compromising output quality. | Faster inference and reduced memory footprint for diffusion models. |
| DistriFusion | Exploits the similarity of inputs across adjacent time steps in diffusion models to use stale activations for communication between GPUs. | Reduces communication overhead by using stale activations, enabling parallelism and faster generation of high-resolution images. | Accelerating high-resolution image generation through parallel processing on multiple GPUs. |