TinyML MCUNet

Modern AI models keep growing, demanding substantial computational resources and memory. This creates a gap between what the models require and what the hardware can supply, and the gap is widest on microcontrollers. TinyML techniques close this gap by reducing model size, memory footprint, and ultimately energy consumption.

This document summarizes EfficientML.ai Lecture 10 - MCUNet and TinyML (MIT 6.5940, Fall 2024) and the accompanying slides, focusing on deploying deep learning on resource-constrained microcontrollers.

🤖 What is TinyML?

TinyML brings AI to billions of IoT devices by enabling deep learning on microcontrollers. Key attributes:

- Local Processing: Reduces reliance on cloud data transmission.

- Privacy & Security: Keeps data processing on-device.

🚧 Challenges

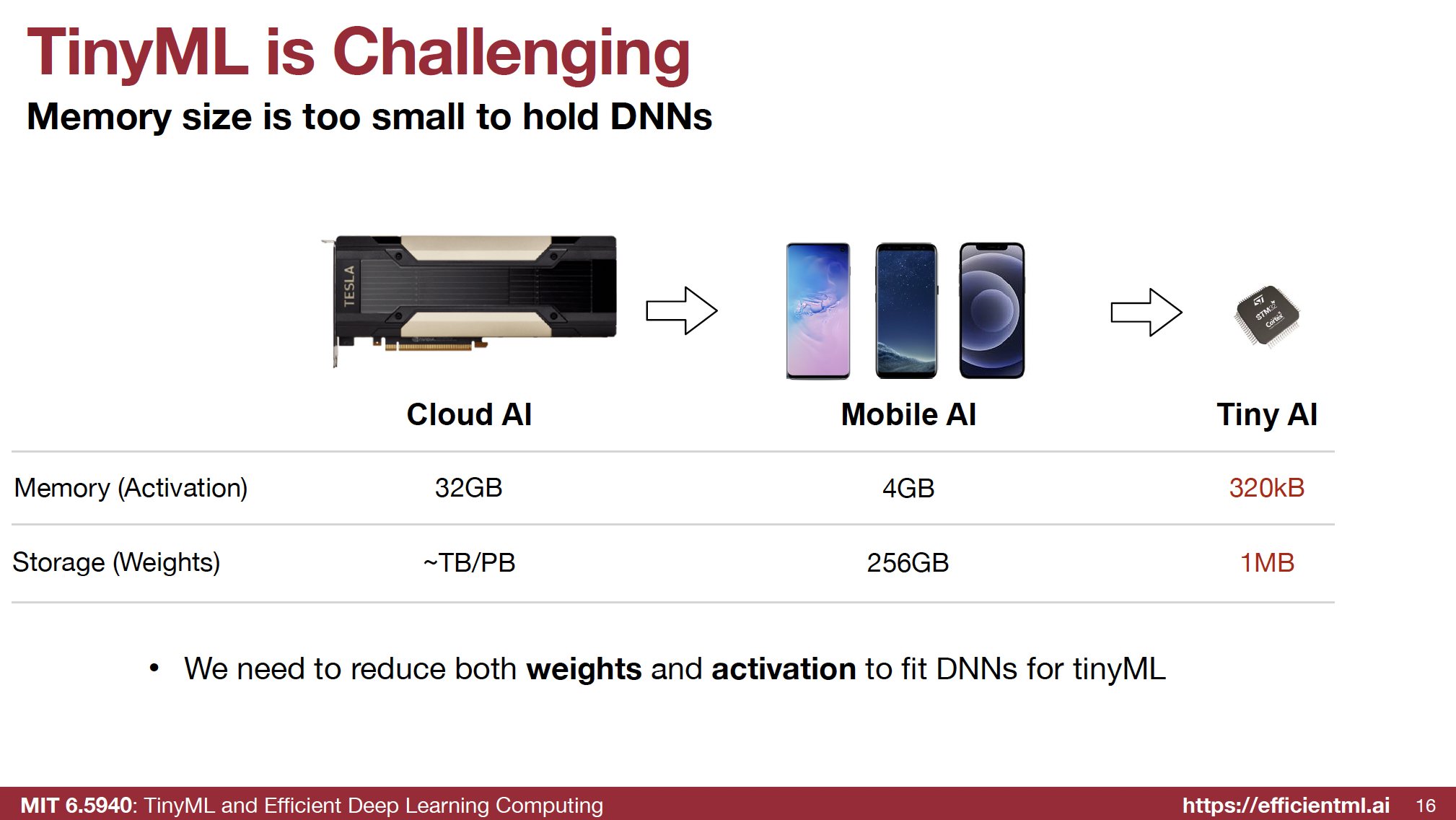

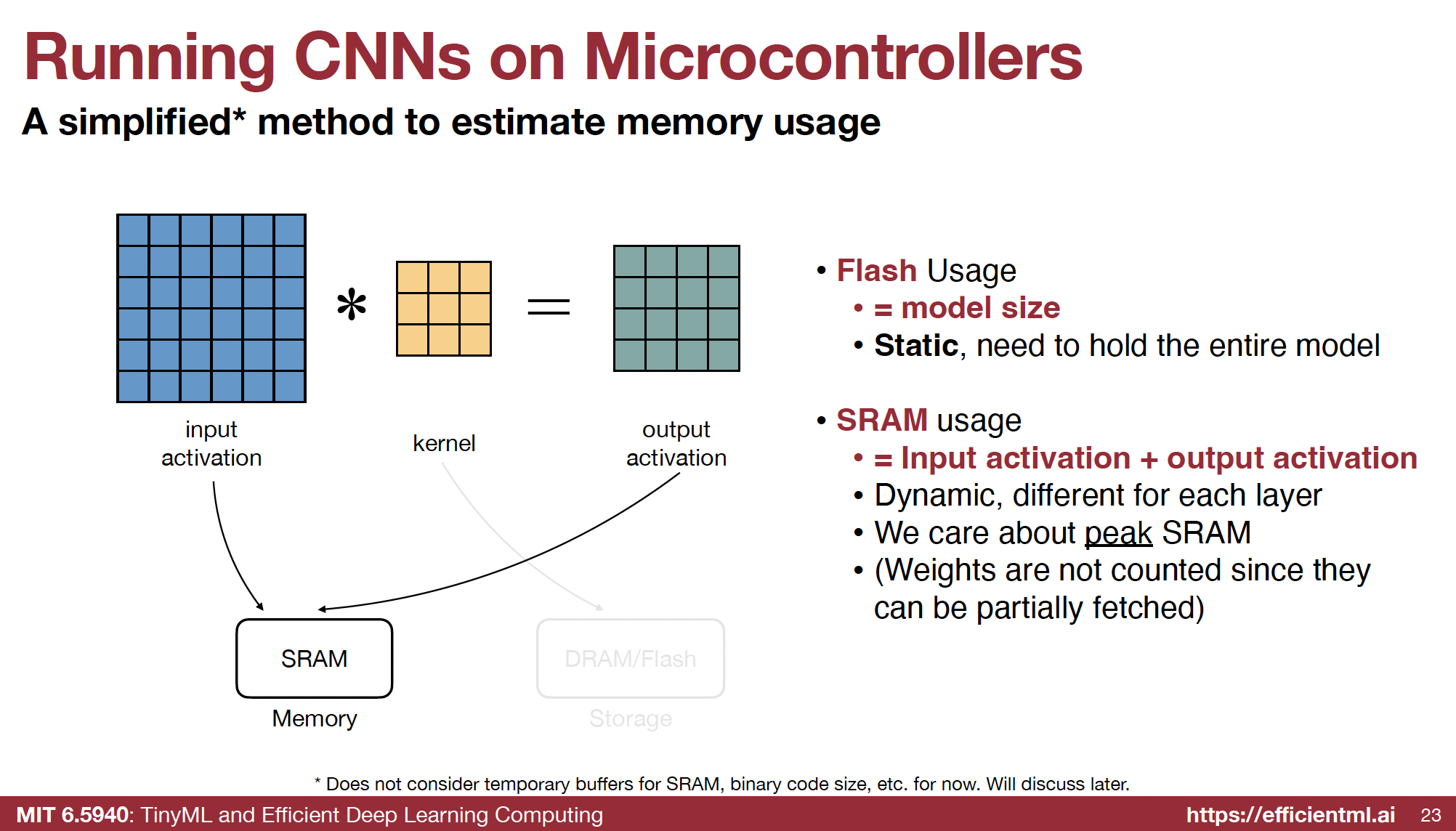

Microcontrollers face severe memory constraints, unlike mobile or cloud platforms.

- SRAM, which holds activations, and Flash, which holds weights, are both far smaller than the memory available on mobile or cloud hardware.

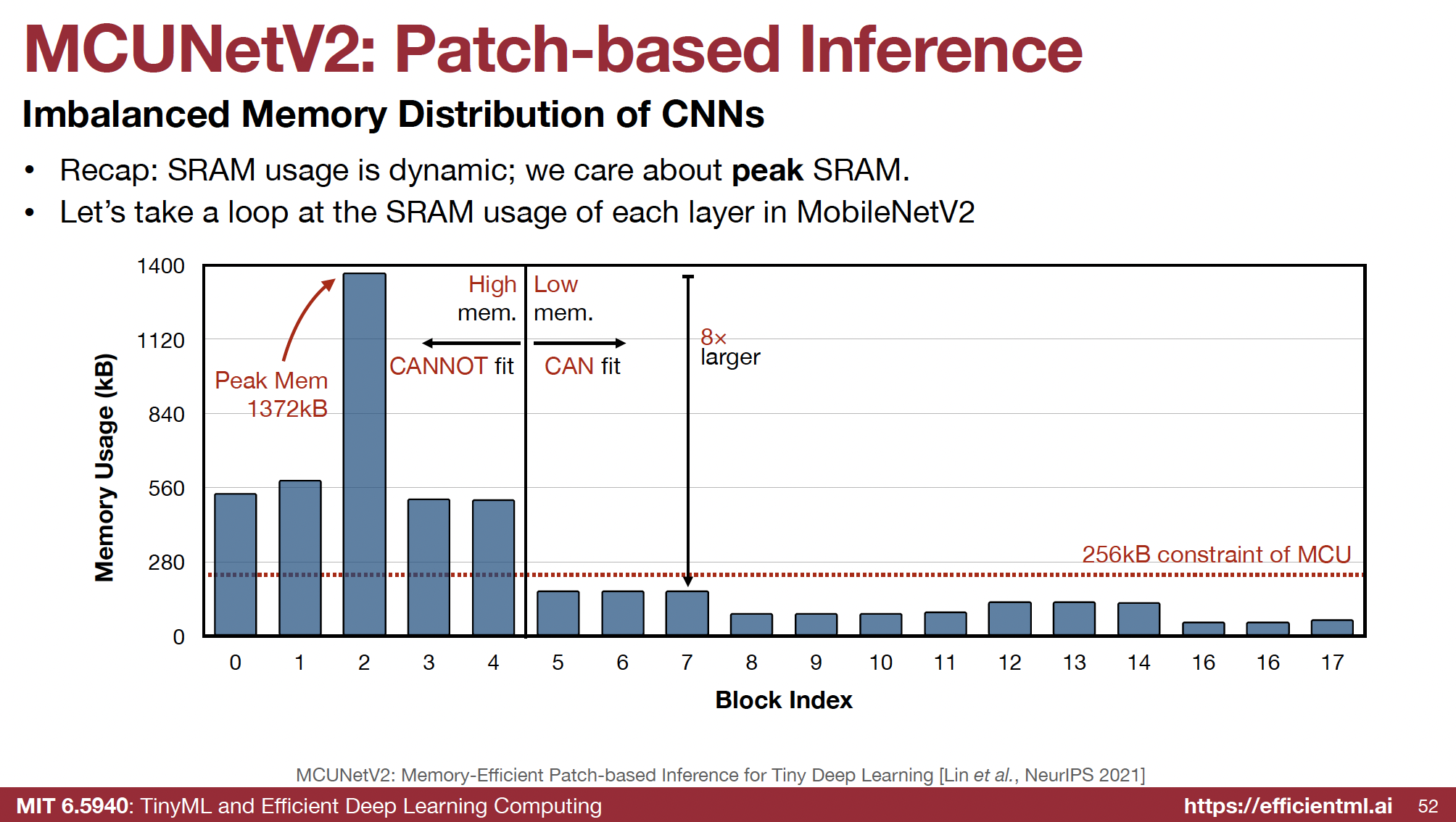

Existing efficient models like MobileNetV2 still exceed these memory limits:

“MobileNetV2 reduces only model size but not peak activation size” - Lec10-MCUNet.pdf

“TinyML is Challenging: Memory size is too small to hold DNNs… We need to reduce both weights and activation to fit DNNs for tinyML” - Lec10-MCUNet.pdf
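The two limits can be made concrete with a rough accounting sketch. The layer shapes below are hypothetical, weights and activations are assumed to be int8 (one byte each), and peak SRAM is approximated as the largest input-plus-output footprint of any single layer, which ignores residual connections and scratch buffers.

```python
from dataclasses import dataclass


@dataclass
class ConvLayer:
    """A toy convolution layer; all shapes here are illustrative assumptions."""
    in_ch: int
    out_ch: int
    kernel: int
    in_hw: int        # input height == width
    stride: int = 1

    @property
    def out_hw(self) -> int:
        # "Same" padding assumed, so only the stride shrinks the feature map.
        return -(-self.in_hw // self.stride)  # ceiling division

    def weight_bytes(self) -> int:
        # int8 weights live in Flash.
        return self.kernel * self.kernel * self.in_ch * self.out_ch

    def activation_bytes(self) -> int:
        # int8 input and output buffers must both sit in SRAM while the layer runs.
        return self.in_hw ** 2 * self.in_ch + self.out_hw ** 2 * self.out_ch


def flash_and_peak_sram(layers):
    """Flash is the sum of all weights; SRAM is set by the single worst layer."""
    flash = sum(layer.weight_bytes() for layer in layers)
    peak = max(layer.activation_bytes() for layer in layers)
    return flash, peak


layers = [
    ConvLayer(3, 16, 3, 160, stride=2),
    ConvLayer(16, 32, 3, 80, stride=2),
    ConvLayer(32, 64, 3, 40, stride=2),
    ConvLayer(64, 128, 3, 20, stride=2),
]
flash, peak = flash_and_peak_sram(layers)
print(f"Flash (weights): {flash} bytes, peak SRAM (activations): {peak} bytes")
```

In this toy network the first layer holds under 1% of the weights yet sets the SRAM peak, which is why shrinking the weights alone does not make a model fit.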

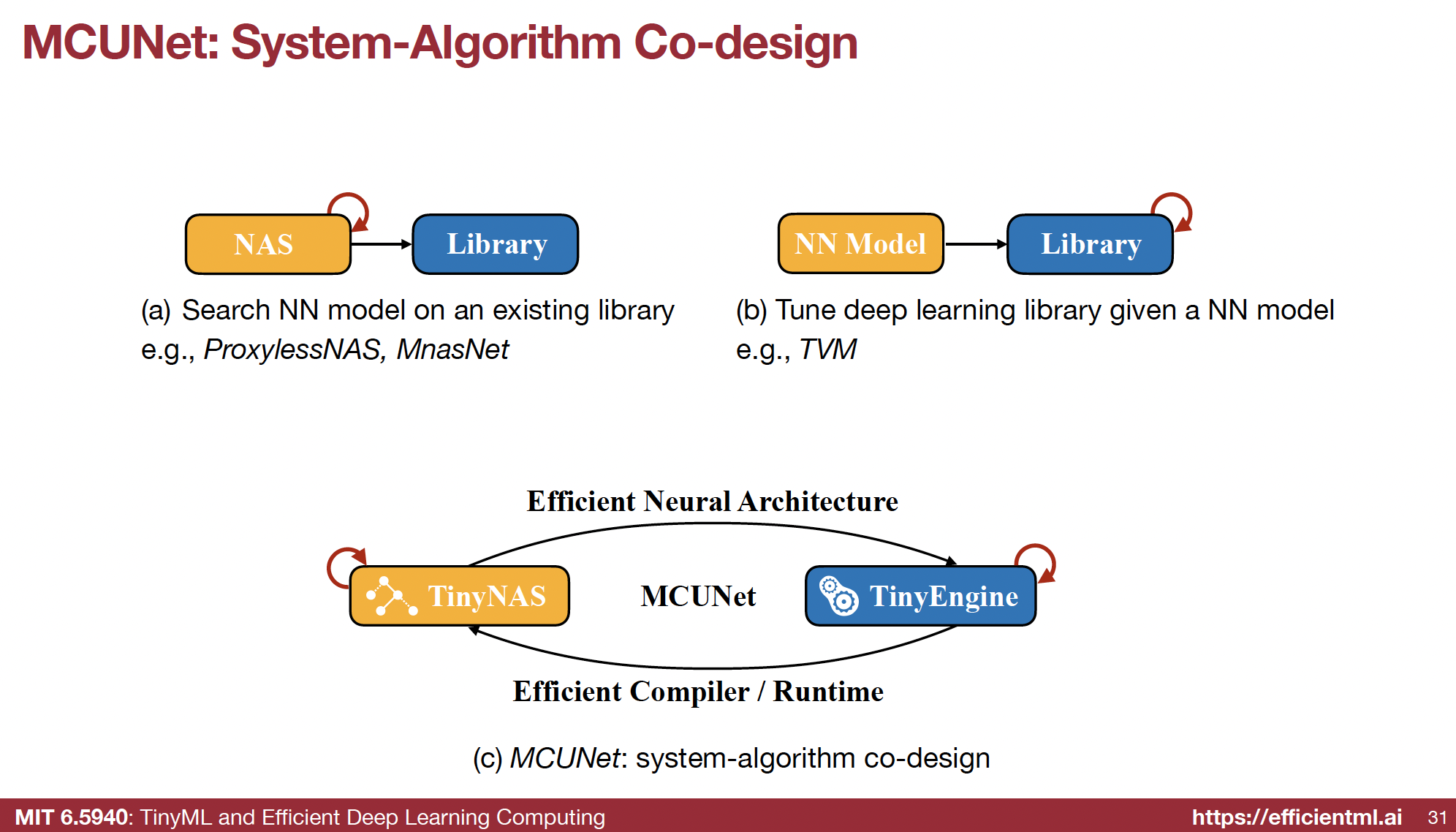

🛠️ The Solution: MCUNet - System-Algorithm Co-Design

MCUNet introduces a co-design approach combining neural architecture search (NAS) with system-level optimizations:

🧠 TinyNAS: Memory-Constrained Model Specialization

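- Automated Search Space Optimization: Scales input resolution and width multiplier to define candidate search spaces, then compares the FLOPs distribution of the models in each space that satisfy the memory constraints. A space whose feasible models carry more FLOPs is preferred, since more computation tends to mean more capacity.

- One-Shot NAS: Trains a single super network whose weights are shared by all sub-networks, then searches for the sub-network that best fits a given SRAM and Flash budget.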
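The search-space step can be sketched in a few lines. This is a toy illustration, not TinyNAS itself: the three-stage network, the cost model, and the names `sample_network`, `cost`, `score_search_space`, and `pick_search_space` are all hypothetical, and the mean FLOPs of the feasible samples stands in for a full comparison of FLOPs distributions.

```python
import random


def sample_network(width, resolution, rng):
    """Sample a toy sub-network as a list of (kernel, in_ch, out_ch, input_hw)."""
    layers, hw, in_ch = [], resolution, 3
    for base_ch in (16, 32, 64):
        out_ch = max(8, int(base_ch * width * rng.choice((0.5, 0.75, 1.0))))
        kernel = rng.choice((3, 5, 7))
        layers.append((kernel, in_ch, out_ch, hw))
        hw, in_ch = hw // 2, out_ch  # every stage is assumed to use stride 2
    return layers


def cost(layers):
    """Return (flash bytes, peak SRAM bytes, MACs) under an int8 assumption."""
    flash = peak = macs = 0
    for k, cin, cout, hw in layers:
        out_hw = hw // 2
        flash += k * k * cin * cout
        peak = max(peak, hw * hw * cin + out_hw * out_hw * cout)
        macs += k * k * cin * cout * out_hw * out_hw
    return flash, peak, macs


def score_search_space(width, resolution, sram, flash_limit, n=200, seed=0):
    """Mean MACs of the sampled networks that fit both memory budgets."""
    rng = random.Random(seed)
    feasible = []
    for _ in range(n):
        flash, peak, macs = cost(sample_network(width, resolution, rng))
        if flash <= flash_limit and peak <= sram:
            feasible.append(macs)
    return sum(feasible) / len(feasible) if feasible else 0.0


def pick_search_space(spaces, sram, flash_limit):
    """Prefer the space whose feasible networks carry the most computation."""
    return max(spaces, key=lambda s: score_search_space(*s, sram, flash_limit))


# Hypothetical budgets: 64 kB of SRAM and 1 MB of Flash.
spaces = [(1.0, 224), (0.5, 96)]
best = pick_search_space(spaces, sram=64_000, flash_limit=1_000_000)
print("chosen (width, resolution):", best)
```

With these budgets the 224-pixel space scores zero because the input image alone exceeds the SRAM limit, so the smaller space is chosen. A one-shot search would then train a super network inside the chosen space and pick the best sub-network from it.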

⚙️ TinyEngine: Optimized Inference Engine

- Works with TinyNAS to manage memory efficiently during execution.

🌟 Key Innovations: Patch-Based Inference & Network Redistribution

🧩 Patch-Based Inference

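- Breaks the memory bottleneck by running the memory-intensive early layers on one small patch of the input at a time instead of the whole image, lowering peak memory usage.

- Challenge: Neighboring patches overlap in “halo regions”, because each output pixel's receptive field extends beyond its own patch. The overlapping pixels are computed more than once, which adds computational overhead.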
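A minimal single-channel sketch shows both the memory saving and the halo cost. It stacks two unpadded 3x3 convolutions, so each output tile needs a 2-pixel halo on every side. The kernels, image, tile size, and function names are illustrative assumptions, not TinyEngine code.

```python
def conv3x3(img, k):
    """Unpadded ('valid') 3x3 cross-correlation on a 2D list of numbers."""
    h, w = len(img), len(img[0])
    return [[sum(img[y + dy][x + dx] * k[dy][dx]
                 for dy in range(3) for dx in range(3))
             for x in range(w - 2)]
            for y in range(h - 2)]


def two_layer(img, k1, k2):
    """Run both layers; also report the size of the intermediate feature map."""
    mid = conv3x3(img, k1)
    return conv3x3(mid, k2), len(mid) * len(mid[0])


def patch_inference(img, k1, k2, tile):
    """Compute the same output tile by tile, holding one small buffer at a time."""
    out_h, out_w = len(img) - 4, len(img[0]) - 4
    out = [[0] * out_w for _ in range(out_h)]
    peak_mid = 0
    for y0 in range(0, out_h, tile):
        for x0 in range(0, out_w, tile):
            th, tw = min(tile, out_h - y0), min(tile, out_w - x0)
            # Input crop = output tile plus a halo of 2 pixels per side.
            crop = [row[x0:x0 + tw + 4] for row in img[y0:y0 + th + 4]]
            res, mid_size = two_layer(crop, k1, k2)
            peak_mid = max(peak_mid, mid_size)
            for dy in range(th):
                out[y0 + dy][x0:x0 + tw] = res[dy]
    return out, peak_mid


img = [[(3 * y + 5 * x) % 7 for x in range(12)] for y in range(12)]
k1 = [[1, 0, -1], [2, 0, -2], [1, 0, -1]]
k2 = [[0, 1, 0], [1, -4, 1], [0, 1, 0]]

full_out, full_mid = two_layer(img, k1, k2)
patch_out, patch_mid = patch_inference(img, k1, k2, tile=4)
print("identical output:", patch_out == full_out)
print("intermediate buffer:", full_mid, "values vs", patch_mid, "values per patch")
```

On this 12x12 input with 4x4 output tiles, the intermediate buffer shrinks from 100 values to 36, while the first layer computes 4 x 36 = 144 values instead of 100. That extra 44% is the halo overhead described above.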

🔄 Network Redistribution

- Reduces overhead by modifying the network architecture:

  - Early Layers: Use smaller kernels or remove layers to shrink the receptive field, and with it the halo.

  - Later Layers: Compensate with added computation so that overall capacity is preserved.

Result: Minimal performance loss across tasks like image classification and object detection.
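The link between receptive field and halo overhead can be checked with standard receptive-field arithmetic. The two layer stacks below are hypothetical examples of an early stage before and after redistribution, not the configurations from the lecture.

```python
def receptive_field(layers):
    """layers is a list of (kernel, stride); returns (receptive field, total stride)."""
    rf, jump = 1, 1
    for kernel, stride in layers:
        rf += (kernel - 1) * jump   # each layer widens the field by (k - 1) input steps
        jump *= stride              # strides compound the step size of later layers
    return rf, jump


def input_side_for_patch(layers, out_side):
    """Side length of the input region needed to produce an out_side x out_side patch."""
    rf, jump = receptive_field(layers)
    return (out_side - 1) * jump + rf


def redundant_input_ratio(layers, out_side, patches_per_side):
    """Input pixels read per patch, relative to a tiling with no halo at all."""
    tile_out = out_side // patches_per_side
    _, jump = receptive_field(layers)
    with_halo = input_side_for_patch(layers, tile_out)
    without_halo = tile_out * jump
    return (with_halo / without_halo) ** 2


# Hypothetical early stage: four 3x3 convolutions, two of them strided.
original = [(3, 2), (3, 1), (3, 2), (3, 1)]
# Redistributed variant: the stride-1 layers become 1x1 convolutions.
redistributed = [(3, 2), (1, 1), (3, 2), (1, 1)]

for name, stack in (("original", original), ("redistributed", redistributed)):
    rf, _ = receptive_field(stack)
    ratio = redundant_input_ratio(stack, out_side=16, patches_per_side=2)
    print(f"{name}: receptive field {rf}, input read ratio {ratio:.2f}")
```

In this example the receptive field drops from 19 to 7 pixels, and each 8x8 output patch needs a 35-pixel-wide input region instead of 47, so far less of the input is recomputed across patches.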

“Break the memory bottleneck with patch-based inference” - Lec10-MCUNet.pdf

“Redistribute: Same performance… Negligible overhead” - Lec10-MCUNet.pdf

🌐 Impact and Applications

MCUNet enables a variety of TinyML applications:

- Tiny Vision: ImageNet-level accuracy, visual wake words, object detection.

- Tiny Audio: Efficient keyword spotting, speech recognition.

- Tiny Time Series/Anomaly Detection: Real-time anomaly detection in industrial, healthcare, and IoT scenarios.

🎯 Key Takeaways

- TinyML is transforming IoT by deploying AI on microcontrollers, enabling privacy, efficiency, and ubiquity.

- MCUNet’s co-design approach tackles memory bottlenecks by optimizing both model architecture and inference systems.

- Innovations like patch-based inference and network redistribution make efficient AI deployment feasible on constrained devices.

- Applications span vision, audio, and anomaly detection, with ongoing advancements paving the way for new capabilities.

TinyML and MCUNet exemplify how cutting-edge AI is reshaping everyday devices, blurring the boundaries between physical and digital worlds.