📚 Transformers and Autoregressive Models

This document reviews the main themes and key takeaways from Deep Learning Systems: Algorithms and Implementation at Carnegie Mellon University, taught by J. Zico Kolter and Tianqi Chen.

This document summarizes key concepts from the lecture on Transformers and attention mechanisms. The focus is on understanding how Transformers, initially developed for time series modeling, have become a dominant architecture in various deep learning applications. We explore core concepts, motivations, advantages, limitations, and applications beyond time series.

1. ⏳ Time Series Modeling: Two Approaches

🔄 Recurrent Neural Network (RNN) - Latent State Approach

- Concept:

RNNs maintain a “latent state” that summarizes past information up to a given time point.

🧩 “The latent state (h_t) acts as memory, accumulating information over time.”

- Pros:

- 📜 Potentially infinite history: Can capture long past dependencies.

- 🗜️ Compact representation: Entire history condensed into a single state.

- Cons:

- 🧮 Long compute path: Information from the distant past may vanish or explode through hidden states.

- ❌ Difficult to incorporate long-term dependencies in practice.
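
The latent-state recurrence described above can be sketched in a few lines of NumPy. This is a minimal illustration rather than the lecture's implementation: the tanh nonlinearity, the weight names `W_hh`, `W_hx`, `b`, and the zero initial state are all assumptions made for the example.

```python
import numpy as np

def rnn_forward(X, W_hh, W_hx, b):
    """Run a simple tanh RNN over X of shape (T, n); return hidden states of shape (T, d)."""
    T = X.shape[0]
    d = W_hh.shape[0]
    h = np.zeros(d)                  # assumed initial state: empty memory
    H = np.zeros((T, d))
    for t in range(T):
        # h_t depends on x_t and on h_{t-1}, which summarizes all earlier inputs
        h = np.tanh(W_hh @ h + W_hx @ X[t] + b)
        H[t] = h
    return H

rng = np.random.default_rng(0)
T, n, d = 6, 3, 4
X = rng.normal(size=(T, n))
W_hh = 0.5 * rng.normal(size=(d, d))
W_hx = 0.5 * rng.normal(size=(d, n))
b = np.zeros(d)
H = rnn_forward(X, W_hh, W_hx, b)    # H[t] is the latent state after seeing X[0..t]
```

Information from the first step reaches the last step only after passing through every intermediate iteration of the loop, which is the long compute path listed under the cons.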

🎯 Direct Prediction Approach

- Concept:

Directly maps input sequences to outputs without relying on latent states.

🧮 “Predict each y_t directly as a function of the inputs x_1, ..., x_t, without a latent state.”

- Pros:

- ⚡ Shorter compute paths: Efficient information capture.

- Cons:

- ⛔ No compact state representation: Entire history is processed for each prediction.

- 📏 Finite history: Limited by input size.

2. 🛠️ CNNs for Direct Prediction

- Concept:

Temporal Convolutional Networks (TCNs) use causal convolutions to ensure outputs depend only on past and current inputs.

- Causal Convolutions:

⏰ “Hidden states at time t depend only on states up to time t.”

- Limitations:

- 🔍 Limited receptive field: Each output sees only a short window of past inputs, so covering a long history requires deeper networks.

- Solutions:

- 📈 Dilated convolutions

- 🏊 Pooling layers

Each solution has trade-offs: larger or deeper networks add parameters, dilated convolutions skip over some inputs, and pooling reduces temporal resolution.
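
To make the causality constraint and the receptive-field limitation concrete, here is a small sketch of a single-channel causal convolution with optional dilation. The function names `causal_conv1d` and `receptive_field` are hypothetical helpers written for this example, and the loop-based implementation favors readability over speed.

```python
import numpy as np

def causal_conv1d(x, w, dilation=1):
    """y[t] = sum_k w[k] * x[t - k * dilation], treating x as zero before t = 0."""
    T, K = len(x), len(w)
    pad = (K - 1) * dilation
    xp = np.concatenate([np.zeros(pad), x])   # pad on the left only: no future inputs leak in
    y = np.zeros(T)
    for t in range(T):
        for k in range(K):
            y[t] += w[k] * xp[pad + t - k * dilation]
    return y

def receptive_field(kernel_size, dilations):
    """Number of input steps that can influence one output of a stack of causal layers."""
    return 1 + sum((kernel_size - 1) * d for d in dilations)

x = np.arange(8.0)
w = np.array([1.0, 1.0])
y_plain = causal_conv1d(x, w)                 # [0, 1, 3, 5, 7, 9, 11, 13]
y_dilated = causal_conv1d(x, w, dilation=2)   # [0, 1, 2, 4, 6, 8, 10, 12]

rf_plain = receptive_field(2, [1, 1, 1])      # 4
rf_dilated = receptive_field(2, [1, 2, 4])    # 8
```

With the same three layers and the same number of weights, doubling the dilation at each layer widens the receptive field from 4 to 8 steps, at the price of each layer skipping over intermediate inputs.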

3. 🎯 Attention Mechanisms

- Concept:

Attention assigns a weight to each state and combines the states as a weighted sum over time.

🧑🏫 “Initially used in RNNs to combine latent states over all time points.”

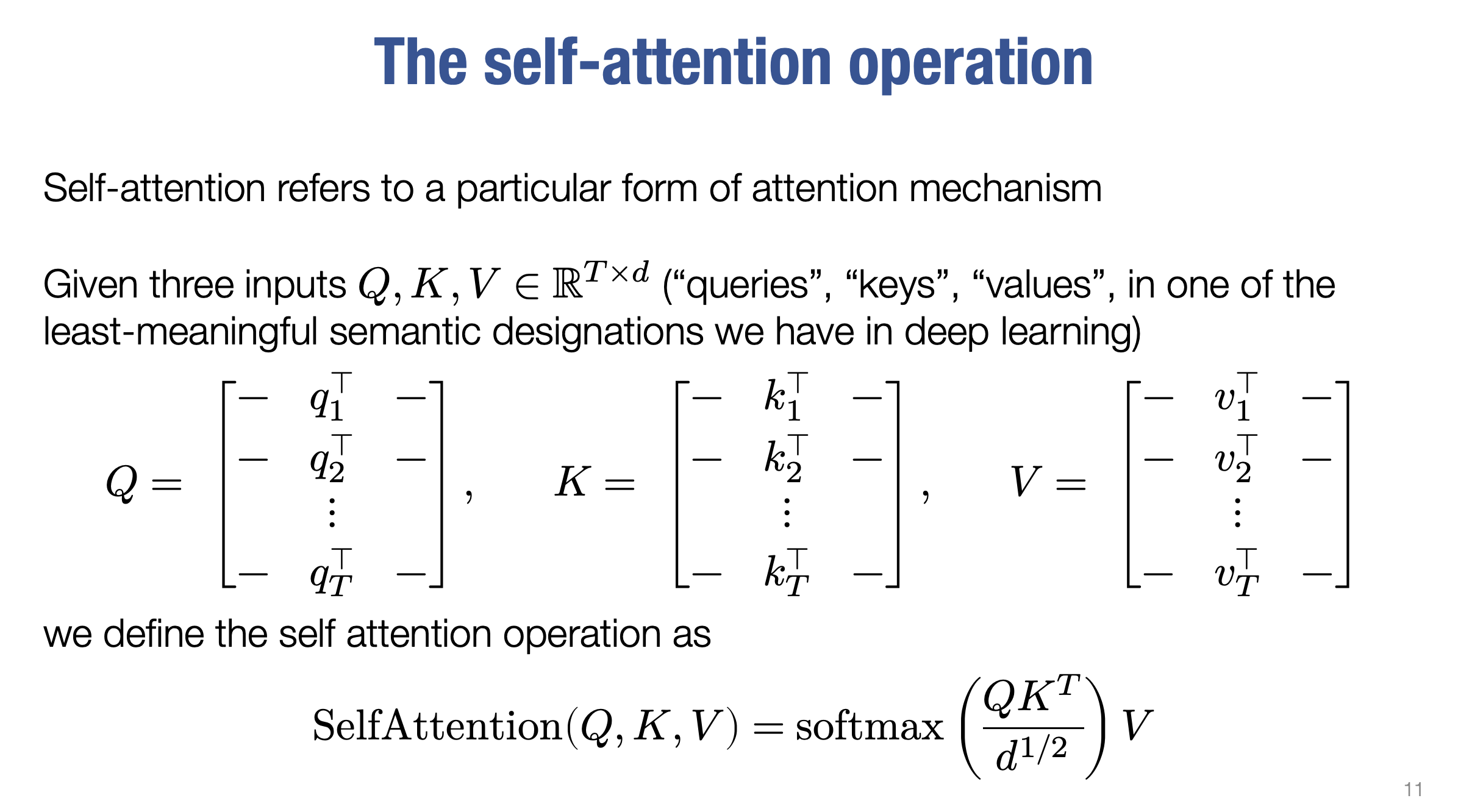
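
The weighted-sum idea can be sketched as follows. How the scores are produced is not specified in these notes, so the dot product with a query vector `q` is an assumption made for illustration, as is the helper name `attention_pool`.

```python
import numpy as np

def softmax(z):
    z = z - z.max()                  # subtract the max for numerical stability
    e = np.exp(z)
    return e / e.sum()

def attention_pool(H, q):
    """Combine hidden states H (T, d) into one vector, weighted by similarity to q (d,)."""
    scores = H @ q                   # one score per time step (assumed dot-product scoring)
    w = softmax(scores)              # non-negative weights that sum to 1
    return w @ H, w                  # weighted sum over time, and the weights themselves

rng = np.random.default_rng(0)
H = rng.normal(size=(5, 4))          # e.g. RNN latent states for 5 time steps
context, weights = attention_pool(H, rng.normal(size=4))
uniform_context, _ = attention_pool(H, np.zeros(4))   # zero query: plain average of states
```

Because every state contributes directly to the result, information from any time point reaches the output in one step rather than through a chain of recurrent updates.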

4. 🌐 Self-Attention

- Concept:

Attention where weights are determined by inputs (using queries, keys, and values).

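🗝️ “Self-attention uses Q (queries), K (keys), and V (values) matrices.”

- Operation:

SelfAttention(Q, K, V) = softmax(QK^T / sqrt(d))V

Here Q, K, and V are T × d matrices (T time steps, dimension d), and the softmax is applied to each row.

- Properties: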

- 🔄 Permutation Equivariance: Permuting the inputs permutes the outputs in the same way; the operation itself ignores order.

- 🌍 Global Influence: Considers all time steps.

- 📊 Constant parameter count: The number of parameters does not grow with the sequence length.

- Compute Cost:

- 💸 O(T²d): The T × T attention matrix makes the cost quadratic in sequence length, which is difficult to reduce.

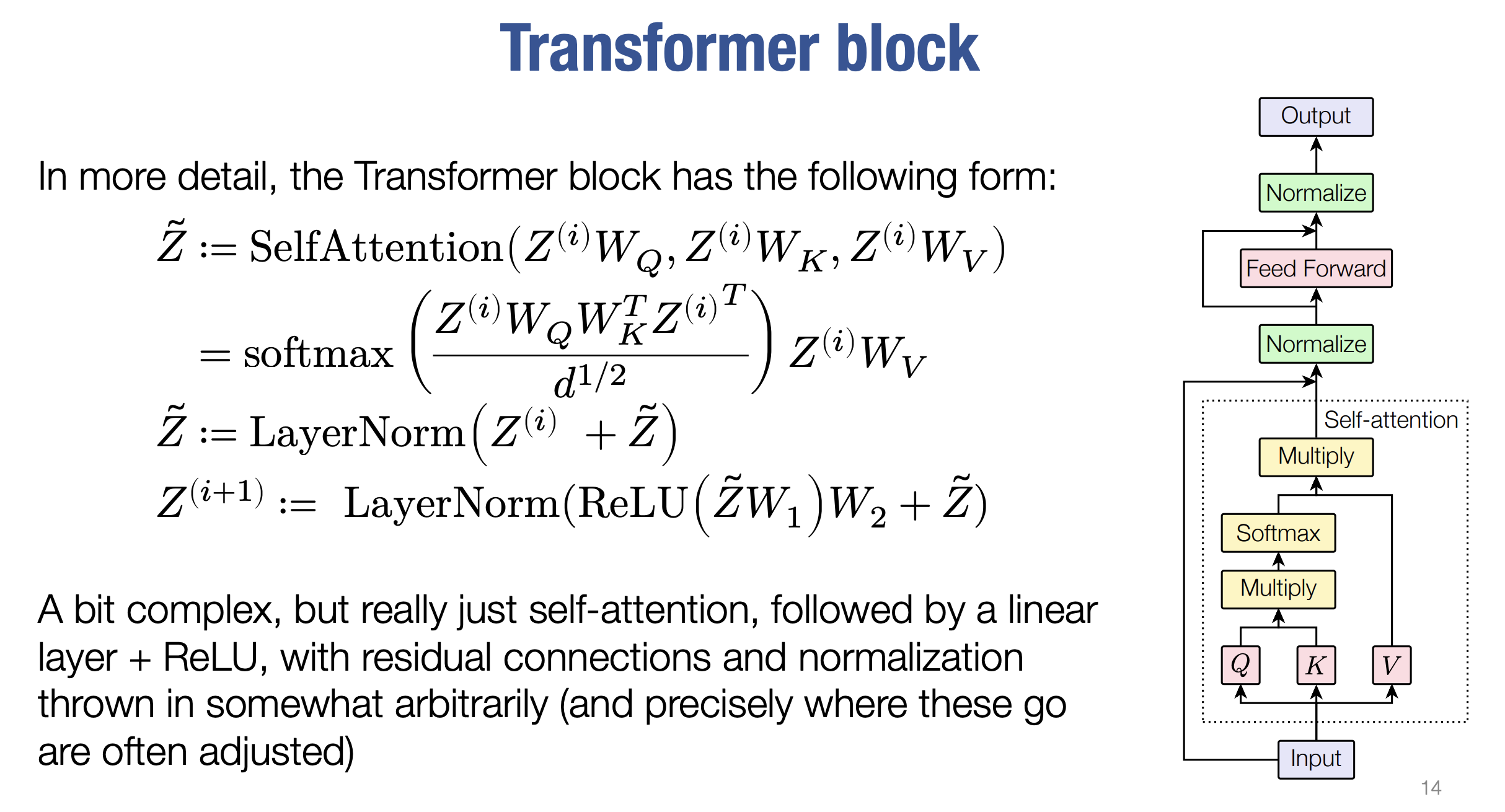
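
The operation and its properties can be checked with a short NumPy sketch. Single-head attention and the projection names `W_q`, `W_k`, `W_v` are assumptions made for the example; they are one common way to obtain Q, K, and V from the inputs.

```python
import numpy as np

def softmax_rows(Z):
    Z = Z - Z.max(axis=-1, keepdims=True)     # stabilize each row
    E = np.exp(Z)
    return E / E.sum(axis=-1, keepdims=True)

def self_attention(X, W_q, W_k, W_v):
    """X: (T, n) inputs. Returns the (T, d) outputs and the (T, T) attention weights."""
    Q, K, V = X @ W_q, X @ W_k, X @ W_v
    d = Q.shape[-1]
    A = softmax_rows(Q @ K.T / np.sqrt(d))    # T x T matrix: the source of the O(T^2 d) cost
    return A @ V, A

rng = np.random.default_rng(0)
T, n, d = 5, 8, 4
X = rng.normal(size=(T, n))
W_q, W_k, W_v = (rng.normal(size=(n, d)) for _ in range(3))

Y, A = self_attention(X, W_q, W_k, W_v)

# Permutation equivariance: shuffling the input rows shuffles the output rows identically.
perm = rng.permutation(T)
Y_perm, _ = self_attention(X[perm], W_q, W_k, W_v)
is_equivariant = np.allclose(Y_perm, Y[perm])
```

The weight matrices have shapes that depend only on `n` and `d`, so the same parameters handle any sequence length `T`.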

5. 🚀 Transformer Architecture

- Concept:

Uses self-attention and feedforward layers to process sequences.

🔧 “Transforms inputs to hidden states through a series of blocks.”

- Transformer Block:

- 🔁 Self-attention

- ➕ Residual connections

- ⚖️ Layer normalization

- 🔨 Feedforward network

- Parallel Processing:

🏎️ Processes all time steps in parallel (unlike RNNs).

- Advantages:

- 🌐 Full receptive field in a single layer.

- 🛠️ Mixes entire sequence without increasing parameters.

- Disadvantages:

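- ⏱️ Autoregressive tasks: Without modification, each output depends on all inputs, including future ones, which is invalid for autoregressive prediction.

- 🔄 Permutation equivariance: The architecture has no built-in notion of the order of the data.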
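
The four components of a block listed above can be combined as in the sketch below. The notes do not fix the details, so several choices here are assumptions: a single attention head, normalization applied after each residual connection, a two-layer ReLU feedforward network, and layer normalization without learned scale or shift.

```python
import numpy as np

def softmax_rows(Z):
    Z = Z - Z.max(axis=-1, keepdims=True)
    E = np.exp(Z)
    return E / E.sum(axis=-1, keepdims=True)

def layer_norm(Z, eps=1e-5):
    """Normalize each row (time step) to zero mean and unit variance."""
    mu = Z.mean(axis=-1, keepdims=True)
    var = Z.var(axis=-1, keepdims=True)
    return (Z - mu) / np.sqrt(var + eps)

def transformer_block(Z, W_q, W_k, W_v, W_1, W_2):
    """Z: (T, d) hidden states in, (T, d) hidden states out."""
    d = Z.shape[-1]
    A = softmax_rows((Z @ W_q) @ (Z @ W_k).T / np.sqrt(d))
    Z = layer_norm(Z + A @ (Z @ W_v))         # self-attention + residual + layer norm
    F = np.maximum(Z @ W_1, 0.0) @ W_2        # feedforward network applied to every time step
    return layer_norm(Z + F)                  # residual + layer norm

rng = np.random.default_rng(0)
T, d, d_ff = 6, 8, 16
Z = rng.normal(size=(T, d))
W_q, W_k, W_v = (rng.normal(size=(d, d)) / np.sqrt(d) for _ in range(3))
W_1 = rng.normal(size=(d, d_ff)) / np.sqrt(d)
W_2 = rng.normal(size=(d_ff, d)) / np.sqrt(d_ff)

Z_out = transformer_block(Z, W_q, W_k, W_v, W_1, W_2)
Z_out2 = transformer_block(Z_out, W_q, W_k, W_v, W_1, W_2)   # blocks stack: output shape matches input
```

Nothing in the function loops over time: every row of `Z` is processed by matrix products, which is what allows all time steps to be handled in parallel.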

6. 🛡️ Addressing Limitations

- Masked Self-Attention:

🔒 “Zero weight assigned to future steps to enforce causality.”

- Positional Encodings:

📊 “Sinusoidal encodings added to capture sequence order.”
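
Both fixes can be sketched briefly. The mask below adds negative infinity to the scores of future positions so that their softmax weight becomes exactly zero. The sinusoidal encoding uses the common interleaved sine and cosine layout with base 10000, which is an assumption since the notes do not give a formula. For brevity the example uses the inputs directly as Q, K, and V.

```python
import numpy as np

def causal_mask(T):
    """(T, T) matrix: 0 where key index <= query index, -inf where the key lies in the future."""
    future = np.arange(T)[None, :] > np.arange(T)[:, None]
    return np.where(future, -np.inf, 0.0)

def masked_self_attention(Q, K, V):
    T, d = Q.shape
    S = Q @ K.T / np.sqrt(d) + causal_mask(T)
    S = S - S.max(axis=-1, keepdims=True)     # the diagonal is never masked, so the max is finite
    E = np.exp(S)                             # exp(-inf) = 0: future steps get zero weight
    A = E / E.sum(axis=-1, keepdims=True)
    return A @ V, A

def sinusoidal_encoding(T, d, base=10000.0):
    """(T, d) position matrix; d is assumed to be even."""
    pos = np.arange(T)[:, None]
    i = np.arange(d // 2)[None, :]
    angles = pos / base ** (2 * i / d)
    P = np.zeros((T, d))
    P[:, 0::2] = np.sin(angles)               # even columns
    P[:, 1::2] = np.cos(angles)               # odd columns
    return P

rng = np.random.default_rng(0)
T, d = 6, 4
X = rng.normal(size=(T, d)) + sinusoidal_encoding(T, d)   # encodings are added to the inputs
Y, A = masked_self_attention(X, X, X)
```

Since the attention weights above the diagonal are zero, changing a later input cannot alter any earlier output, and since each row of the encoding is different, identical inputs at different positions no longer look the same to the model.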

7. 📈 Transformers Beyond Time Series

- Vision Transformers (ViTs):

🖼️ Images represented as patch embeddings.

- Graph Transformers:

🕸️ Captures graph structures using modified attention.

- Challenges:

- 🧮 Efficient computation of attention matrices

- 📏 Effective positional embeddings

- 🧱 Mask matrix design
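
As an illustration of the patch-embedding idea mentioned for Vision Transformers, the sketch below cuts an image into non-overlapping square patches and projects each one to a token vector. The helper name `image_to_patches`, the patch size, and the random projection `W_embed` (standing in for a learned linear layer) are all assumptions made for the example.

```python
import numpy as np

def image_to_patches(img, p):
    """Split an (H, W, C) image into non-overlapping p x p patches, each flattened to p*p*C values."""
    H, W, C = img.shape
    assert H % p == 0 and W % p == 0, "image size must be divisible by the patch size"
    x = img.reshape(H // p, p, W // p, p, C)
    x = x.transpose(0, 2, 1, 3, 4)            # (H/p, W/p, p, p, C): group pixels by patch
    return x.reshape(-1, p * p * C)           # one row per patch, in row-major patch order

rng = np.random.default_rng(0)
img = rng.normal(size=(8, 8, 3))
patches = image_to_patches(img, p=2)          # 16 patches of 12 values each

d = 5
W_embed = rng.normal(size=(patches.shape[1], d))   # stand-in for a learned projection
tokens = patches @ W_embed                    # (16, d): a "sequence" the Transformer can process
```

Once the image is a sequence of tokens, the remaining questions are the ones listed under the challenges: the attention matrix grows with the square of the number of patches, and the positional embeddings must describe a 2D layout rather than a position in time.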