Vision Transformer

Modern AI models are becoming increasingly large, demanding substantial computational resources and memory. This creates a gap between the computational demands of these models and the available hardware capabilities. Pruning addresses this gap by reducing model size, memory footprint, and ultimately, energy consumption.

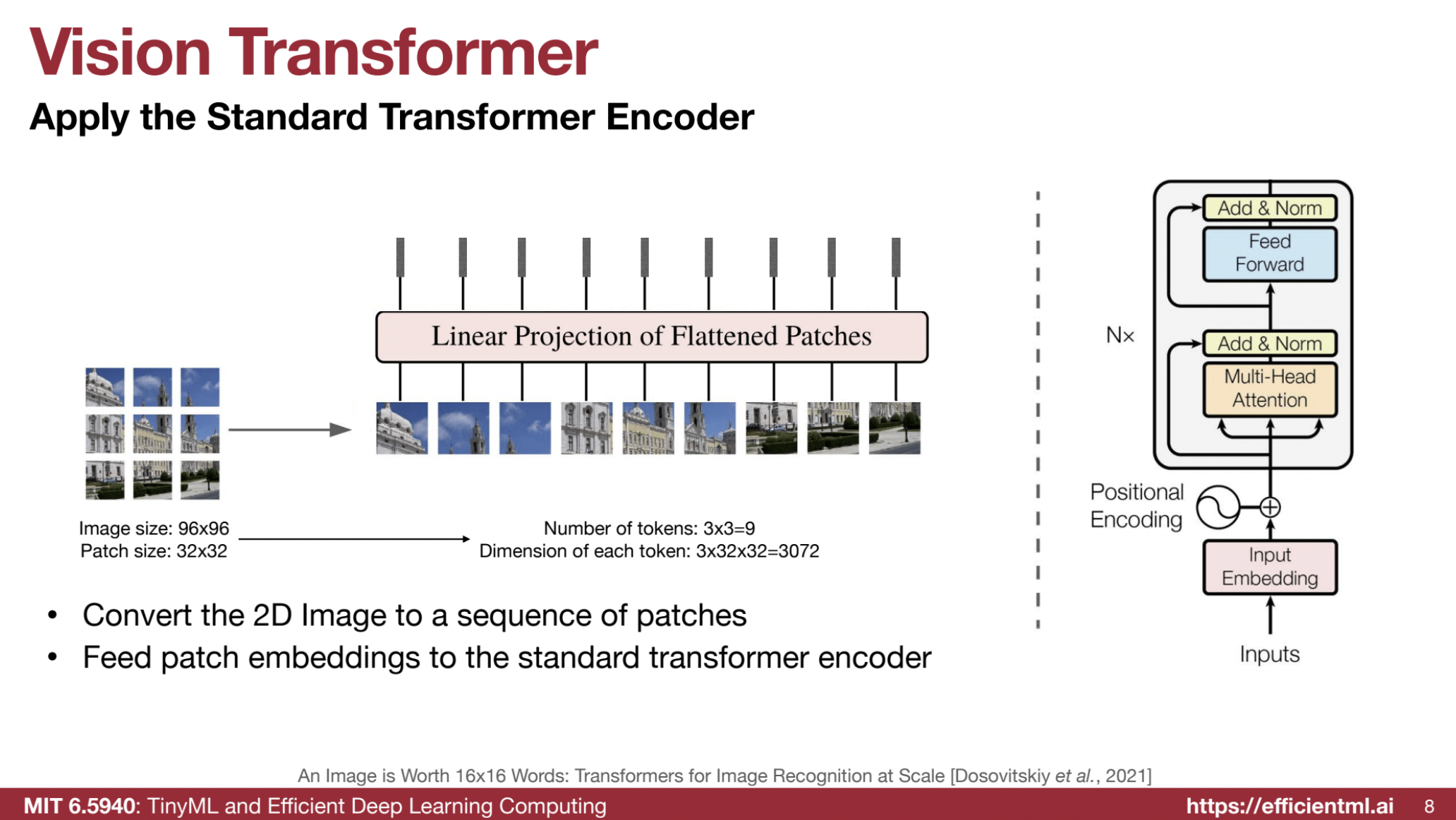

This lecture provides a detailed overview of Vision Transformers (ViTs), including their fundamental principles, acceleration techniques, self-supervised learning strategies, and their application in autoregressive image generation. The content is derived from a lecture given at MIT (6.5940, Fall 2024) and a related PDF slide deck. The core idea is that Transformers, initially designed for language, can be adapted for image processing by treating image patches as tokens.

1️⃣ Basics of Vision Transformers (ViTs)

🖼️ Image Tokenization:

- ViTs process images by dividing them into patches. These patches become the “tokens” that the Transformer architecture operates on.

- A 96x96 image might be divided into a 3x3 grid of patches, each of size 32x32, yielding 9 tokens (3x3 = 9).

- Each patch is flattened into a 1D vector of dimension $3 \times 32 \times 32 = 3072$ (3 color channels times 32x32 pixels).
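The patch arithmetic above can be checked with a short NumPy sketch. The function name `patchify` and the channels-first `(C, H, W)` layout are illustrative assumptions, not something specified in the lecture.

```python
import numpy as np

def patchify(image, patch_size):
    """Split a (C, H, W) image into flattened, non-overlapping patches.

    Returns an array of shape (num_patches, C * patch_size * patch_size).
    """
    c, h, w = image.shape
    assert h % patch_size == 0 and w % patch_size == 0, "image must tile evenly"
    gh, gw = h // patch_size, w // patch_size
    # (C, gh, P, gw, P) -> (gh, gw, C, P, P) -> (gh * gw, C * P * P)
    patches = image.reshape(c, gh, patch_size, gw, patch_size)
    patches = patches.transpose(1, 3, 0, 2, 4)
    return patches.reshape(gh * gw, c * patch_size * patch_size)

image = np.random.rand(3, 96, 96)      # stand-in RGB image
tokens = patchify(image, patch_size=32)
print(tokens.shape)                    # (9, 3072)
```

Each row of `tokens` is one patch in row-major grid order, ready to be projected to the hidden dimension.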

🔗 Linear Projection:

- Flattened patches are projected to a hidden dimension (e.g., 768) using a convolution instead of a fully connected layer.

- Convolution parameters: kernel size 32x32, stride 32, and 3 input channels (RGB).
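To see why a convolution can stand in for a fully connected layer here, the following NumPy sketch evaluates a 32x32, stride-32 convolution window by window and compares it with a single linear layer applied to flattened patches. The weights are random placeholders and all variable names are assumptions made for illustration.

```python
import numpy as np

rng = np.random.default_rng(0)
patch, hidden = 32, 768
image = rng.standard_normal((3, 96, 96))
# Hypothetical conv weights: (out_channels, in_channels, kH, kW), plus a bias.
weight = rng.standard_normal((hidden, 3, patch, patch)) * 0.01
bias = rng.standard_normal(hidden)

# View 1: strided convolution, evaluated one non-overlapping window at a time.
grid = 96 // patch
conv_out = np.empty((grid, grid, hidden))
for i in range(grid):
    for j in range(grid):
        window = image[:, i * patch:(i + 1) * patch, j * patch:(j + 1) * patch]
        conv_out[i, j] = np.tensordot(weight, window, axes=3) + bias

# View 2: flatten each patch and apply one shared linear layer.
flat = image.reshape(3, grid, patch, grid, patch).transpose(1, 3, 0, 2, 4)
flat = flat.reshape(grid * grid, -1)                      # (9, 3072)
linear_out = flat @ weight.reshape(hidden, -1).T + bias   # (9, 768)

print(np.allclose(conv_out.reshape(-1, hidden), linear_out))
```

Because the stride equals the kernel size, the windows never overlap, so the two views compute the same 9 embeddings of width 768.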

🔄 Transformer Encoder:

- The token embeddings are fed into a Transformer encoder with positional embeddings to maintain location information.

⚙️ Model Variants:

- ViTs come in various sizes, such as ViT-Large and ViT-Huge, differing in layers, hidden dimensions, and patch sizes (e.g., ViT-L/16 uses 16x16 patches).

🆚 Comparison with CNNs:

- ViTs: Better scalability with large datasets.

- CNNs: More efficient with limited data.

2️⃣ Efficient ViT and Acceleration Techniques

📈 Motivation for Efficiency:

- High-resolution images are critical for tasks like medical imaging and super-resolution, but the cost of self-attention grows quadratically with the number of tokens:

- Attention mechanism complexity: $O(n^2)$, where $n$ is the number of tokens.

- Token count grows with the square of the image side length, so attention computation grows with the side length to the power of 4.
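A quick calculation makes the scaling concrete. This is a minimal sketch that assumes square images and a 16x16 patch size (chosen for illustration), and it counts only pairwise attention scores rather than full FLOPs.

```python
def attention_cost(resolution, patch_size=16):
    """Return (num_tokens, num_pairwise_attention_scores) for a square image."""
    tokens = (resolution // patch_size) ** 2
    return tokens, tokens ** 2

for res in (224, 448, 896):
    tokens, pairs = attention_cost(res)
    print(f"{res}px: {tokens} tokens, {pairs} attention scores")
```

Doubling the side length multiplies the token count by 4 and the number of attention scores by 16.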

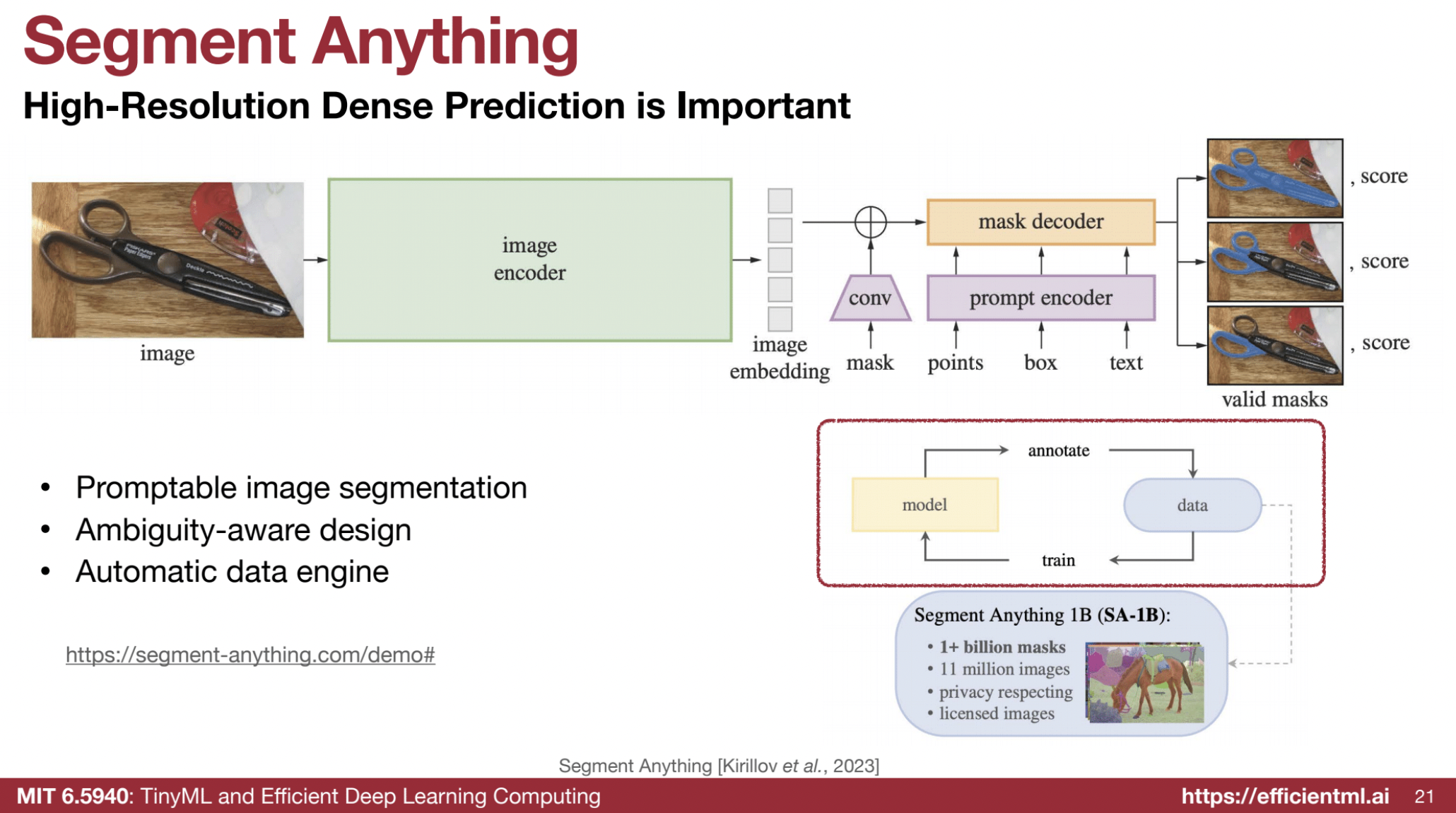

🖼️ Segment Anything Task: Key Details

The “segment anything” task involves promptable image segmentation, which can use points, boxes, or masks as prompts. The model is designed to be ambiguity-aware, enabling it to segment different parts of an object depending on the prompt provided.

- Image Encoder:

- The process starts by passing an image through an encoder, which is a Vision Transformer (ViT), to generate an embedding.

- This step is the most time-consuming part of the process.

- Prompt Encoding:

- A prompt, such as a point, box, or mask, is encoded.

- Combination and Prediction:

- The image embedding is combined with the encoded prompt.

- The model predicts the final mask.

- Text Prompts:

- The model can accept text as a prompt, though this feature was not included in the specific paper discussed.

- Automatic Data Engine:

- The model includes an automatic data engine, allowing it to annotate additional data and use that data to improve training.

- Segmenting Objects:

- The model can segment an entire object or just a portion of it based on the prompt.

- For instance, it can segment:

- An entire pair of scissors.

- Just one handle of the scissors.

- Both handles individually.

- Supported Objects:

- The model can segment a wide range of objects, including but not limited to:

- Fruit, poles, pumpkins, chairs, people, and cars.

- Throughput Improvements:

- Using efficient Vision Transformer techniques significantly increases the throughput of the model while maintaining segmentation quality.

- Example: Throughput increased from 11 to 182 images per second using efficient ViT techniques.

📦 Window Attention:

- Limits attention computation to fixed-size local windows (e.g., 7x7). With a fixed window size, complexity drops from $O(n^2)$ to $O(n)$ in the number of tokens.
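The sketch below illustrates window attention in NumPy under simplifying assumptions: a single head, no learned projections (queries, keys, and values are all the raw features), and a hypothetical 56x56 token grid. The helper names are illustrative.

```python
import numpy as np

def softmax(x, axis=-1):
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def window_partition(x, window):
    """(H, W, D) feature map -> (num_windows, window * window, D)."""
    h, w, d = x.shape
    x = x.reshape(h // window, window, w // window, window, d)
    return x.transpose(0, 2, 1, 3, 4).reshape(-1, window * window, d)

def window_attention(x, window):
    """Self-attention restricted to each window (Q = K = V = x for brevity)."""
    wins = window_partition(x, window)                       # (nW, w*w, D)
    scores = wins @ wins.transpose(0, 2, 1) / np.sqrt(x.shape[-1])
    return softmax(scores) @ wins                            # (nW, w*w, D)

feat = np.random.rand(56, 56, 32)        # hypothetical 56x56 grid of tokens
out = window_attention(feat, window=7)
n, w2 = 56 * 56, 7 * 7
print(out.shape)                         # (64, 49, 32)
print("global scores:", n * n, "windowed scores:", n * w2)
```

Each token attends to only 49 neighbors regardless of image size, which is why the total cost scales linearly with the number of tokens.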

🔄 Shifted Window Operation:

- Adds a shift operation for information exchange between adjacent windows, ensuring all tokens can eventually attend to one another.
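The effect of the shift can be illustrated by labeling each grid position with the window it belongs to, before and after a cyclic shift by half a window. This is a simplified sketch: the function name is hypothetical, and the attention masking that real implementations apply to wrapped-around regions is omitted.

```python
import numpy as np

def window_ids(h, w, window, shift=0):
    """Label each grid position with the index of the window it falls in,
    after cyclically shifting the grid by `shift` positions."""
    rows = (np.arange(h) + shift) % h // window
    cols = (np.arange(w) + shift) % w // window
    return rows[:, None] * (w // window) + cols[None, :]

regular = window_ids(8, 8, window=4)            # one layer
shifted = window_ids(8, 8, window=4, shift=2)   # the following layer

# Positions (3, 3) and (4, 4) sit in different windows in the regular layout
# but share a window once the grid is shifted by half a window.
print(regular[3, 3], regular[4, 4])
print(shifted[3, 3], shifted[4, 4])
```

Alternating the two layouts across layers lets information cross window boundaries without paying for global attention.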

🧹 Sparse Window Attention:

- Focuses only on sparse and relevant regions, skipping unnecessary computations.

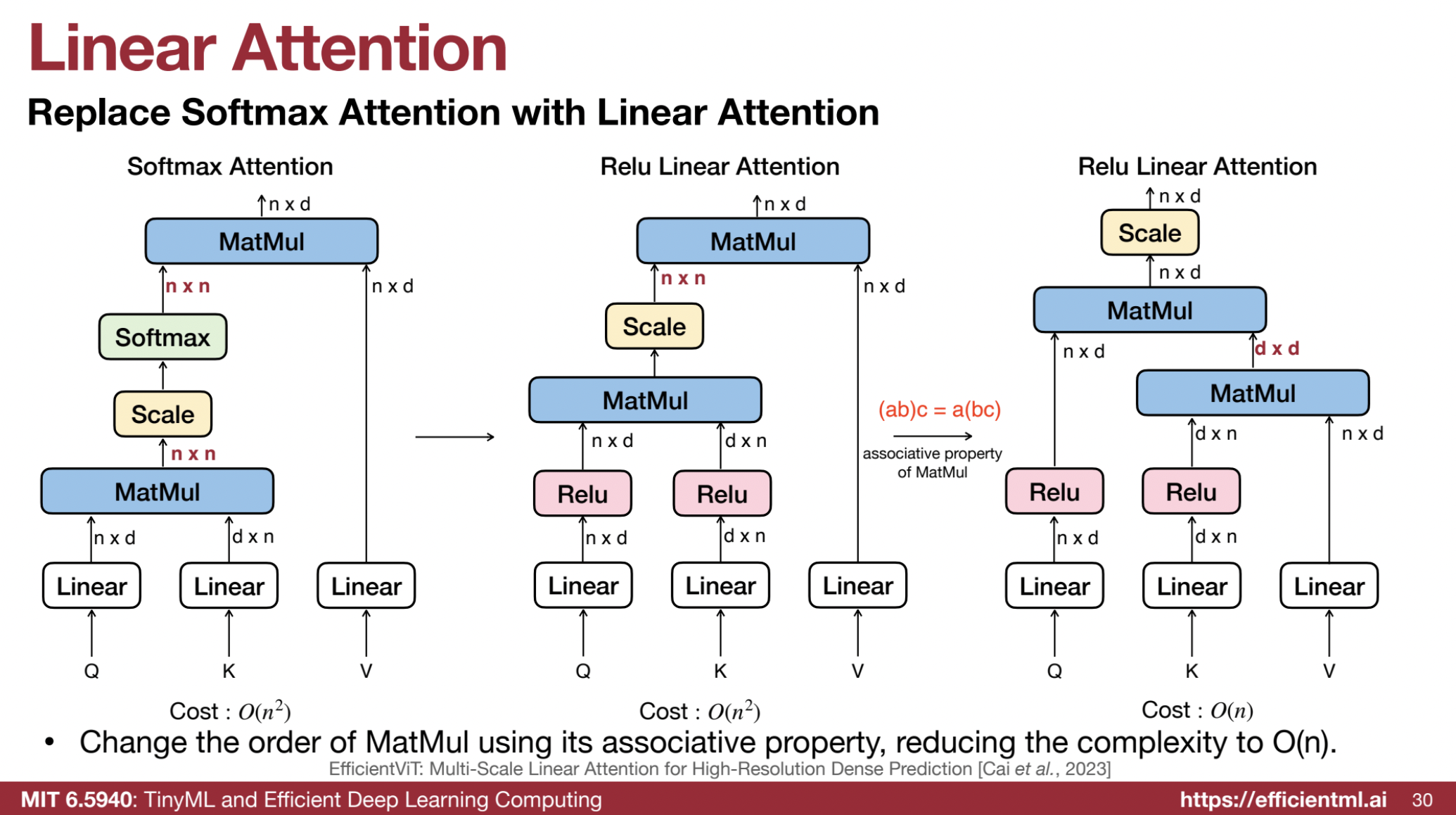

🔀 Linear Attention:

- Changes the order of attention calculations to reduce complexity:

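- From $(QK^T)V$ to $Q(K^TV)$, reducing the cost from $O(n^2)$ to $O(n)$ in the number of tokens. The reordering relies on associativity of matrix multiplication, so it only applies once softmax is replaced by a similarity function that factorizes across queries and keys (e.g., ReLU).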
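The reordering can be verified numerically. The following NumPy sketch uses ReLU as the feature map and a simple sum normalizer; the sizes, the `eps` constant, and the variable names are assumptions chosen for illustration.

```python
import numpy as np

rng = np.random.default_rng(0)
n, d = 1024, 32                      # hypothetical token count and head dim
Q, K, V = (rng.standard_normal((n, d)) for _ in range(3))

q, k = np.maximum(Q, 0.0), np.maximum(K, 0.0)   # ReLU feature maps
eps = 1e-6                                      # guards against division by zero

# Quadratic order: build the full (n, n) similarity matrix first.
sim = q @ k.T                                           # O(n^2 d)
quadratic = (sim @ V) / (sim.sum(axis=1, keepdims=True) + eps)

# Linear order: contract K with V first; no (n, n) matrix is ever formed.
kv = k.T @ V                                            # (d, d), O(n d^2)
k_sum = k.sum(axis=0)                                   # (d,)
linear = (q @ kv) / ((q @ k_sum)[:, None] + eps)

print(np.allclose(quadratic, linear))
```

Both orderings give the same output, but the second stores only a `d x d` intermediate, so memory and compute grow linearly with `n`.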

🛠️ EfficientViT:

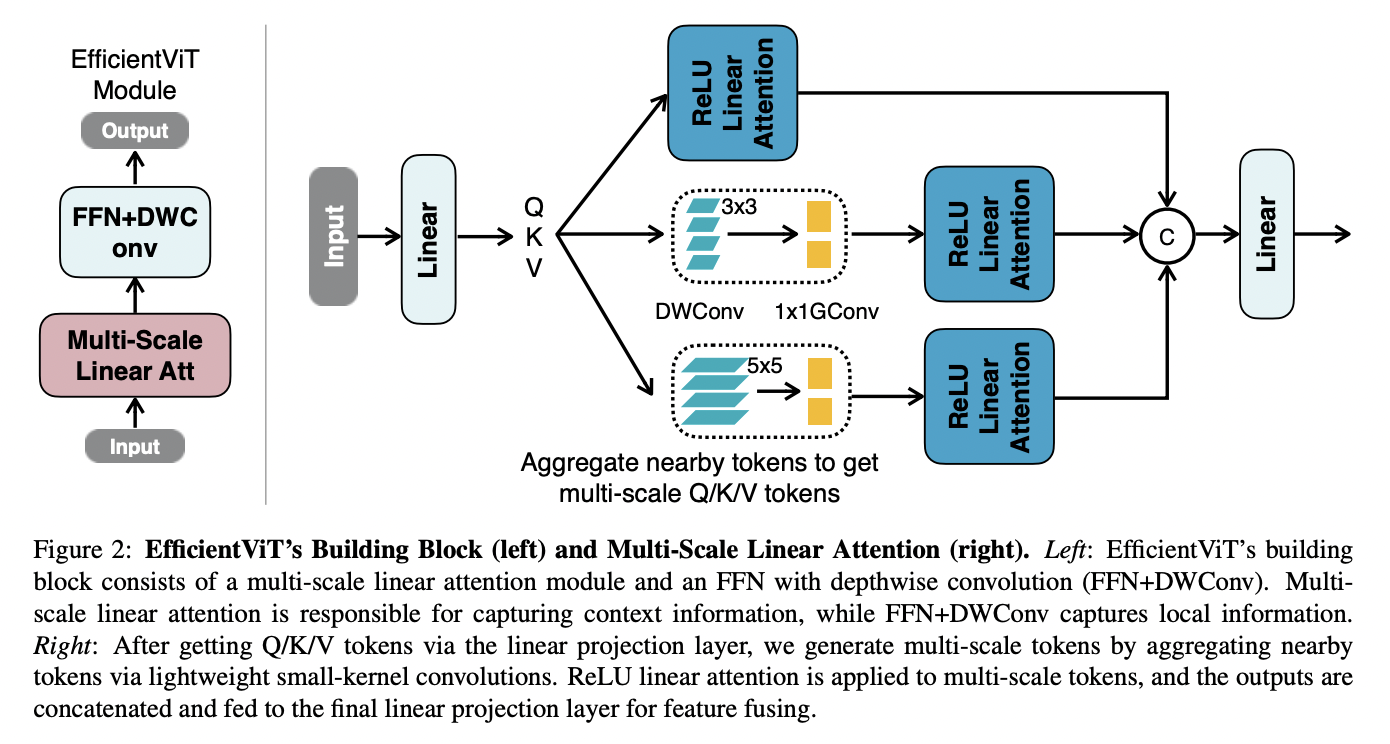

The EfficientViT model is designed for high-resolution dense prediction tasks, such as semantic segmentation and super-resolution, balancing performance and efficiency. It introduces a novel multi-scale linear attention mechanism that captures global context and multi-scale features while ensuring hardware efficiency.

Multi-Scale Linear Attention

- Core Mechanism: Overcomes limitations of traditional softmax attention, which has quadratic computational complexity, making it inefficient for high-resolution images.

- ReLU Linear Attention:

- Replaces softmax attention with ReLU linear attention, reducing complexity from quadratic to linear by leveraging the associative property of matrix multiplication.

- More hardware-efficient but weaker in local information extraction compared to softmax.

- Multi-Scale Aggregation:

- Aggregates nearby tokens using small-kernel depthwise separable convolutions to compensate for ReLU linear attention’s limitations.

- Convolutions applied independently to query (Q), key (K), and value (V) tokens in each attention head.

- Group Convolution:

- Fuses the separate depthwise convolutions (DWConv) into a single DWConv and the separate 1x1 convolutions into a single 1x1 group convolution, improving GPU efficiency and reducing computation.

EfficientViT Module

- Structure:

- Combines the multi-scale linear attention module with a feed-forward network (FFN) that includes DWConv.

- Multi-scale attention captures context; FFN+DWConv captures local information.

🏗️ Architecture

Backbone

- Standard design with:

- Input Stem: Initial processing of input images.

- Four Stages: Gradually decreases feature map size and increases channel count.

- EfficientViT Modules: Integrated in Stages 3 and 4.

- MBConv Layers: Used for downsampling with a stride of 2.

Head

- Combines outputs from Stages 2, 3, and 4 into a pyramid of feature maps.

- Features fused using 1x1 convolutions, upsampling, and addition.

- Contains MBConv blocks and output layers.

Model Variants

- Scales for different efficiency needs: EfficientViT-B0, B1, B2, B3, and L series (optimized for cloud platforms).

📊 Training and Evaluation

- Datasets: Evaluated on tasks like:

- Semantic Segmentation: Cityscapes, ADE20K.

- Super-Resolution: Benchmarked for quality and speed.

- ImageNet: Backbone supports classification tasks.

- Optimization:

- Trained with AdamW optimizer and cosine learning rate decay.

- Hardware:

- Benchmarked on platforms like mobile CPUs (Qualcomm Snapdragon), edge GPUs (Nvidia Jetson), and cloud GPUs (A100).

- Metrics:

- mIoU: Semantic segmentation.

- PSNR & SSIM: Super-resolution.

- mAP: Zero-shot instance segmentation.
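For reference, two of these metrics are simple enough to sketch directly. The versions below are minimal per-image implementations in NumPy; benchmark code typically accumulates statistics over a whole dataset, and the function names here are illustrative.

```python
import numpy as np

def mean_iou(pred, target, num_classes):
    """Mean intersection-over-union over classes present in pred or target."""
    ious = []
    for c in range(num_classes):
        p, t = pred == c, target == c
        union = np.logical_or(p, t).sum()
        if union > 0:
            ious.append(np.logical_and(p, t).sum() / union)
    return float(np.mean(ious))

def psnr(pred, target, max_value=1.0):
    """Peak signal-to-noise ratio in dB for images scaled to [0, max_value]."""
    mse = np.mean((pred - target) ** 2)
    return float(10.0 * np.log10(max_value ** 2 / mse))

target = np.array([[0, 0, 1, 1],
                   [0, 0, 1, 1]])
pred = np.array([[0, 0, 0, 1],
                 [0, 0, 1, 1]])
print(mean_iou(pred, target, num_classes=2))   # (4/5 + 3/4) / 2 = 0.775
print(psnr(np.full((4, 4), 0.6), np.full((4, 4), 0.5)))
```

Higher is better for both: mIoU rewards overlap between predicted and true regions, while PSNR rewards low pixel-wise error.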

🌟 Key Advantages

- Efficiency:

- Significant speedup with linear attention and avoidance of hardware-inefficient operations like softmax and large-kernel convolutions.

- Performance:

- Maintains or surpasses state-of-the-art results in high-resolution dense prediction tasks.

- Multi-Scale Design:

- Captures global and local context for high-resolution details.

🌐 Applications

- Semantic Segmentation:

- Faster and more accurate on datasets like Cityscapes and ADE20K.

- Super-Resolution:

- Achieves impressive speed and quality improvements.

- Segment Anything:

- Accelerates Segment Anything Model (SAM) with higher throughput and similar/better zero-shot instance segmentation results.

📈 Comparison with Other Models

- SegFormer & SegNeXt:

- Provides a large reduction in computational cost and latency.

- SwinIR & Restormer:

- Outperforms in latency and super-resolution performance.

- EfficientNetV2:

- Achieves better accuracy and significant speedup on ImageNet.

🎯 SparseViT:

- Prunes irrelevant regions, focusing on foreground features while reducing computations.

3️⃣ Self-Supervised Learning for ViTs

🤔 Motivation:

- Labeled datasets are costly; self-supervised learning leverages unlabeled data.

🔄 Contrastive Learning:

- Brings embeddings of the same image (positive samples) closer and pushes apart different images (negative samples).

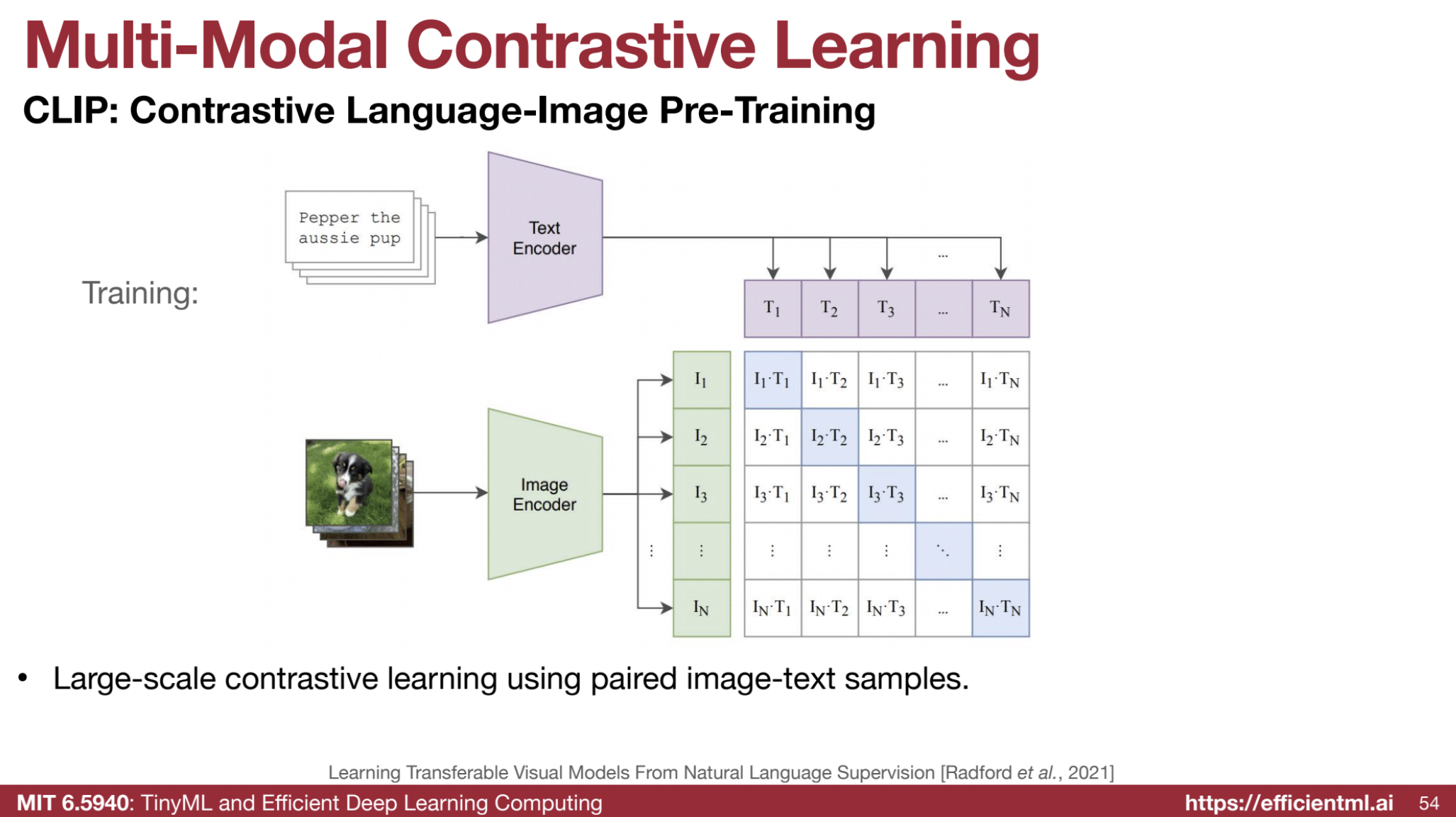

📚 Multi-Modal Contrastive Learning (CLIP):

- Aligns image and text embeddings in the same space, enabling zero-shot classification.

Contrastive learning focuses on learning embeddings such that similar samples are pulled together in the latent space while dissimilar ones are pushed apart. CLIP (Contrastive Language-Image Pretraining) uses this approach to align image and text representations in a shared space. Here’s a breakdown of the loss function and a sample PyTorch implementation.

Contrastive Loss Function

The contrastive loss ensures that:

- The similarity between positive pairs (e.g., related image-text pairs) is maximized.

- The similarity between negative pairs (unrelated pairs) is minimized.

CLIP Loss Function

CLIP uses a bidirectional contrastive loss:

- Image-to-Text Loss: Maximizes similarity between image embeddings and corresponding text embeddings.

- Text-to-Image Loss: Similarly maximizes text-to-image similarity.

- The combined loss is symmetric and encourages cross-modal alignment.

Mathematical Representation

The loss function uses the InfoNCE objective, applied in both directions:

$$
\mathcal{L}_{\text{contrastive}} = -\frac{1}{2N} \sum_{i=1}^N \left( \log \frac{\exp(\text{sim}(z_i^I, z_i^T) / \tau)}{\sum_{j=1}^N \exp(\text{sim}(z_i^I, z_j^T) / \tau)} + \log \frac{\exp(\text{sim}(z_i^T, z_i^I) / \tau)}{\sum_{j=1}^N \exp(\text{sim}(z_i^T, z_j^I) / \tau)} \right)
$$

The factor $\frac{1}{2}$ averages the image-to-text and text-to-image terms. Where:

- $z_i^I$ and $z_i^T$: Image and text embeddings of the $i$-th pair in a batch of $N$ pairs.

- $\text{sim}(u, v)$: Similarity function, typically cosine similarity.

- $\tau$: Temperature parameter to control sharpness.

🛠️ PyTorch Implementation

Here’s a minimal implementation of the contrastive loss for CLIP in PyTorch:

```python
import torch
import torch.nn.functional as F


def contrastive_loss(image_embeddings, text_embeddings, temperature=0.07):
    """
    Computes the contrastive loss for image and text embeddings.

    Args:
        image_embeddings (torch.Tensor): Image embeddings of shape (N, D).
        text_embeddings (torch.Tensor): Text embeddings of shape (N, D).
        temperature (float): Temperature scaling parameter.

    Returns:
        torch.Tensor: Scalar loss value.
    """
    # Normalize embeddings
    image_embeddings = F.normalize(image_embeddings, dim=-1)
    text_embeddings = F.normalize(text_embeddings, dim=-1)

    # Compute similarity matrix
    logits = torch.matmul(image_embeddings, text_embeddings.T) / temperature
    labels = torch.arange(logits.size(0), device=logits.device)

    # Compute cross-entropy loss for both directions
    loss_image_to_text = F.cross_entropy(logits, labels)
    loss_text_to_image = F.cross_entropy(logits.T, labels)

    # Combine the losses
    loss = (loss_image_to_text + loss_text_to_image) / 2
    return loss


# Example usage
if __name__ == "__main__":
    batch_size = 8
    embedding_dim = 512
    temperature = 0.07

    # Random image and text embeddings
    image_embeddings = torch.randn(batch_size, embedding_dim)
    text_embeddings = torch.randn(batch_size, embedding_dim)

    loss = contrastive_loss(image_embeddings, text_embeddings, temperature)
    print(f"Contrastive Loss: {loss.item():.4f}")
```

🌟 Key Notes

- Normalization: Embeddings are L2-normalized to project them onto a hypersphere, making cosine similarity equivalent to the dot product.

- Temperature: Controls the sharpness of the logits, impacting how the model differentiates between similar and dissimilar pairs.

- Cross-Entropy: The loss is computed using softmax over the similarity matrix, ensuring the model aligns positive pairs and separates negatives.
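Once image and text embeddings share a space, zero-shot classification reduces to a nearest-prompt lookup. The sketch below uses NumPy and random stand-in embeddings; the function name and the prompt template mentioned in the docstring are assumptions, and a real system would obtain the embeddings from trained image and text encoders.

```python
import numpy as np

def zero_shot_classify(image_emb, class_text_embs):
    """Return the index of the class prompt most similar to each image.

    image_emb: (N, D) image embeddings.
    class_text_embs: (C, D) embeddings of one text prompt per class,
    e.g. "a photo of a {label}".
    """
    img = image_emb / np.linalg.norm(image_emb, axis=-1, keepdims=True)
    txt = class_text_embs / np.linalg.norm(class_text_embs, axis=-1, keepdims=True)
    similarity = img @ txt.T            # cosine similarity, shape (N, C)
    return similarity.argmax(axis=-1)

# Stand-in embeddings: two "images" that lie close to class 2 and class 0.
rng = np.random.default_rng(0)
class_text_embs = rng.standard_normal((3, 512))
image_emb = class_text_embs[[2, 0]] + 0.1 * rng.standard_normal((2, 512))
print(zero_shot_classify(image_emb, class_text_embs))  # [2 0]
```

No classifier head is trained: adding a new class only requires embedding one more text prompt.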

🎭 Masked Image Modeling (MAE):

- Masks patches of an image and trains the model to predict the missing parts, similar to Masked Language Models (MLM) in NLP.

- Uses a high mask ratio (e.g., 75%) due to images’ lower information density.
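The masking step can be sketched in a few lines of NumPy. The function name, the 14x14 token grid, and the embedding width are illustrative assumptions; only the 75% ratio comes from the text above.

```python
import numpy as np

def random_masking(tokens, mask_ratio=0.75, rng=None):
    """Keep a random subset of patch tokens for the encoder.

    tokens: (N, D). Returns (visible_tokens, mask), where mask[i] is True
    for patches that are hidden and must be reconstructed.
    """
    if rng is None:
        rng = np.random.default_rng()
    n = tokens.shape[0]
    num_keep = int(n * (1 - mask_ratio))
    keep_idx = np.sort(rng.permutation(n)[:num_keep])
    mask = np.ones(n, dtype=bool)
    mask[keep_idx] = False
    return tokens[keep_idx], mask

tokens = np.random.rand(196, 768)      # e.g. a 14x14 grid of patch embeddings
visible, mask = random_masking(tokens, rng=np.random.default_rng(0))
print(visible.shape, int(mask.sum()))  # (49, 768) 147
```

Only the 49 visible tokens pass through the encoder, which is also what makes pretraining with a high mask ratio cheap.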

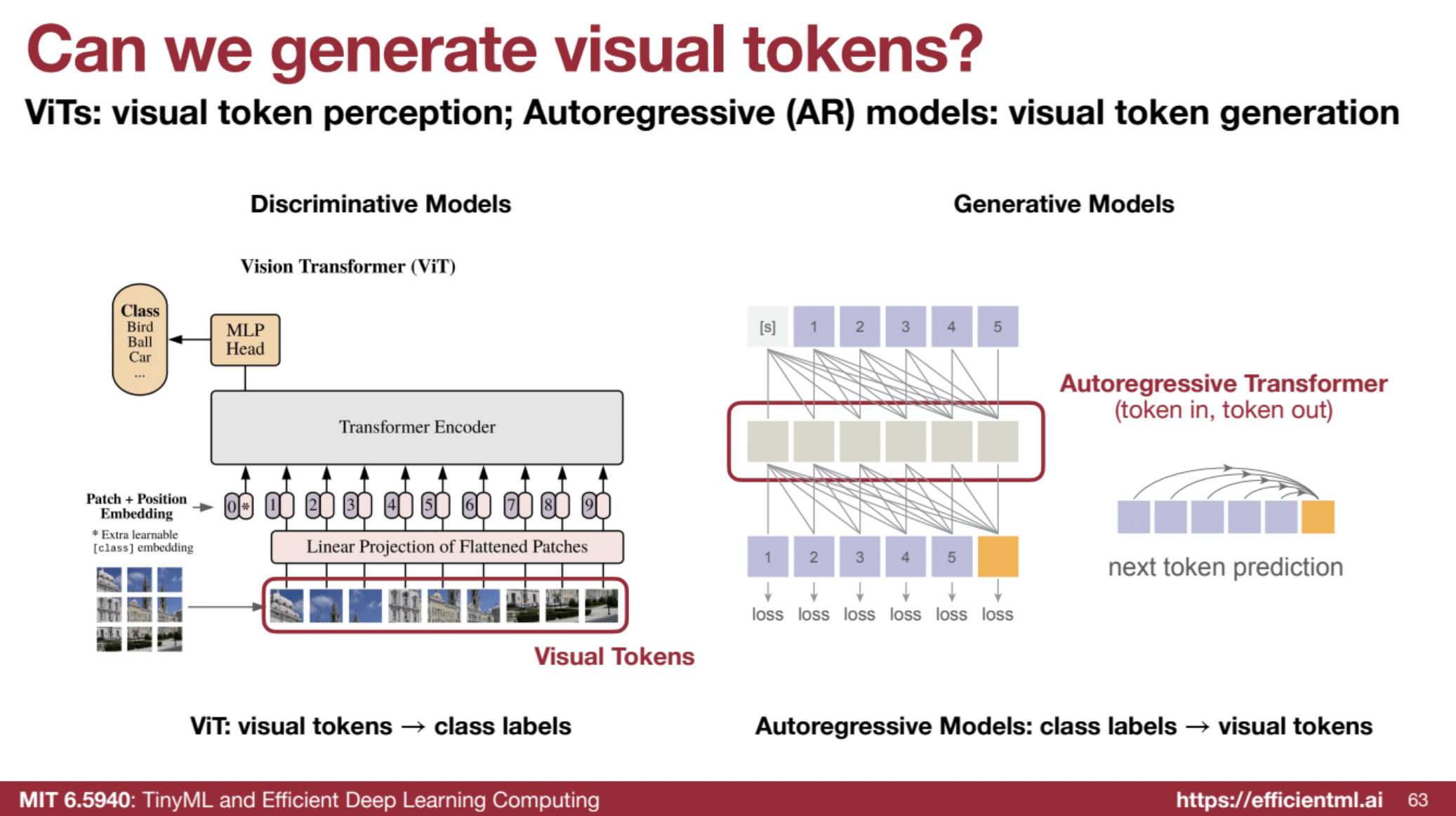

4️⃣ ViT and Autoregressive Image Generation

🎨 Autoregressive (AR) Models for Images:

- Generate image tokens sequentially in a token-in, token-out manner.
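The token-in, token-out loop can be sketched as follows. Here `next_token_logits` is a hypothetical stand-in for a trained Transformer, the toy model and the 16-entry codebook are invented for illustration, and greedy decoding is used for simplicity where real systems usually sample.

```python
import numpy as np

def generate_tokens(next_token_logits, num_tokens, start_token=0):
    """Greedy autoregressive decoding loop.

    `next_token_logits(prefix)` maps the tokens generated so far to logits
    over the visual codebook (a placeholder for a trained model).
    """
    tokens = [start_token]
    for _ in range(num_tokens):
        logits = next_token_logits(tokens)
        tokens.append(int(np.argmax(logits)))   # pick the most likely token
    return tokens[1:]                           # drop the start token

# Toy "model" over a 16-entry codebook: always predicts (last token + 1) mod 16.
def toy_model(prefix, vocab=16):
    logits = np.zeros(vocab)
    logits[(prefix[-1] + 1) % vocab] = 1.0
    return logits

# 16 tokens arranged as a 4x4 grid; a visual decoder would map them to pixels.
grid = np.array(generate_tokens(toy_model, num_tokens=16)).reshape(4, 4)
print(grid)
```

Each step depends on all previous tokens, so generation cost grows with the number of tokens in the image.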

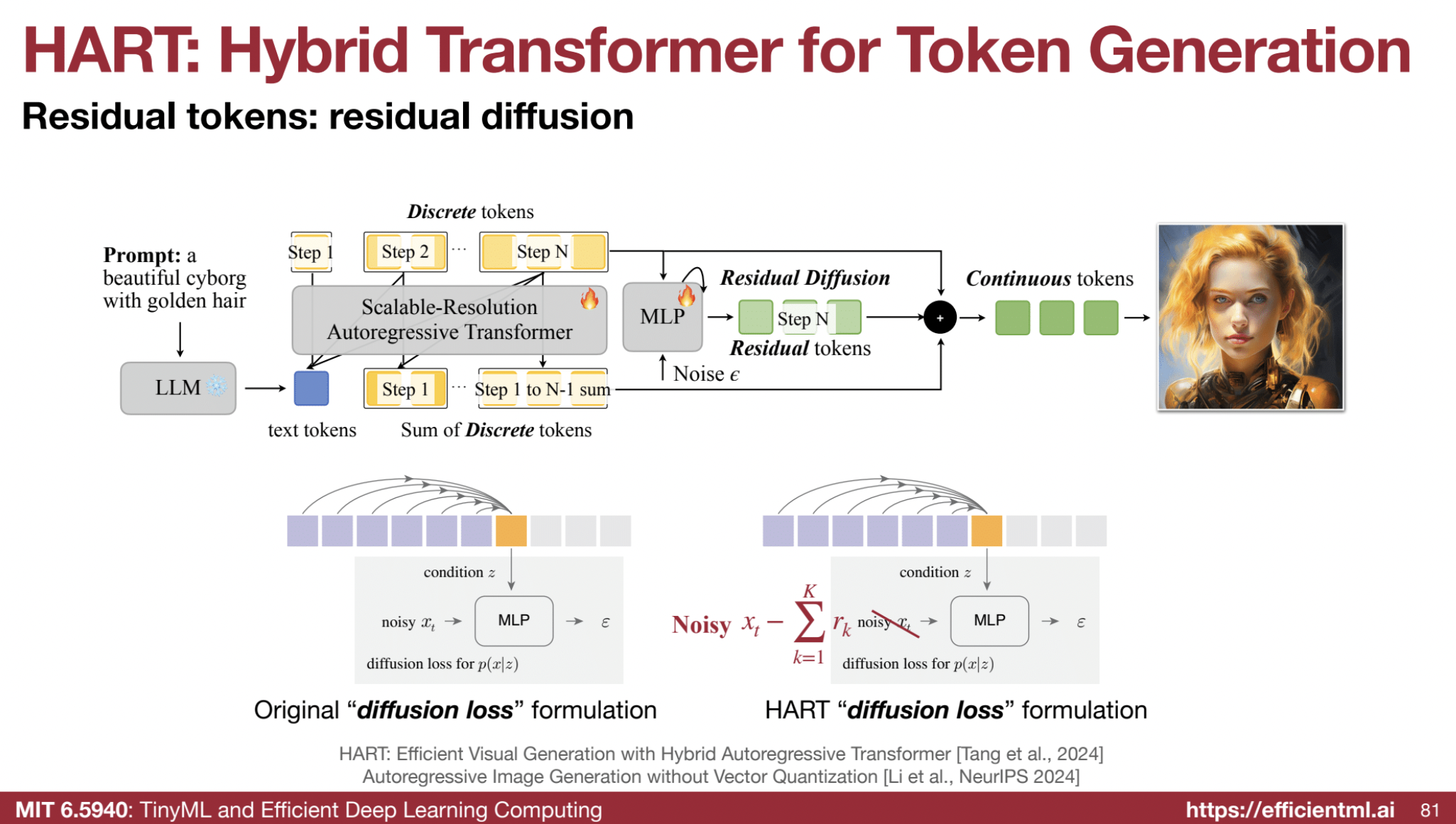

⚡ Hybrid Autoregressive Transformer (HART):

- Combines discrete and continuous tokens for faster image generation than diffusion models.

- Uses vector quantization (VQ) for discrete tokens and a small residual diffusion model for fine-grained details.

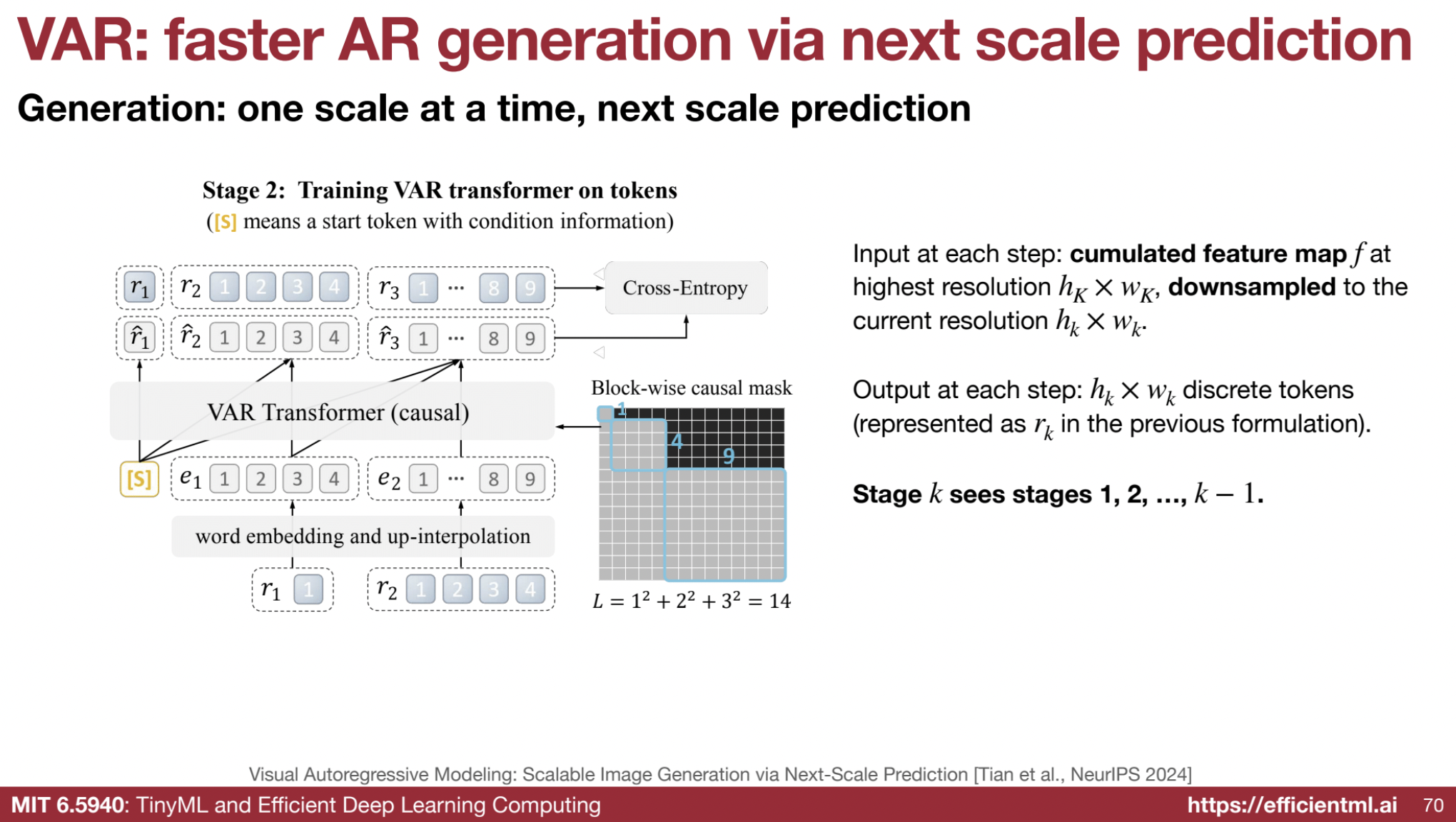

- Employs a multi-scale approach to progressively increase resolution during token generation.

The Hybrid Autoregressive Transformer (HART) model is a novel approach to image generation that combines the strengths of autoregressive (AR) models and diffusion models while overcoming their individual limitations. It was developed to match the visual generation quality of diffusion models while being significantly faster.

🚫 Limitations of Existing Models:

- Autoregressive Models: These models generate images sequentially, often suffering from poor image reconstruction quality due to the use of discrete tokenizers.

- Diffusion Models: While achieving high-quality image synthesis, diffusion models are computationally expensive and slow due to their iterative denoising process.

🎯 HART’s Goal:

- Objective: To develop an autoregressive model that can match the image generation quality of diffusion models while being significantly faster.

- HART aims to bridge the reconstruction performance gap between discrete tokenizers in AR models and continuous tokenizers in diffusion models.

- HART seeks to efficiently generate high-resolution (1024x1024) images, which is a challenge for existing AR models.

🏗️ Model Architecture

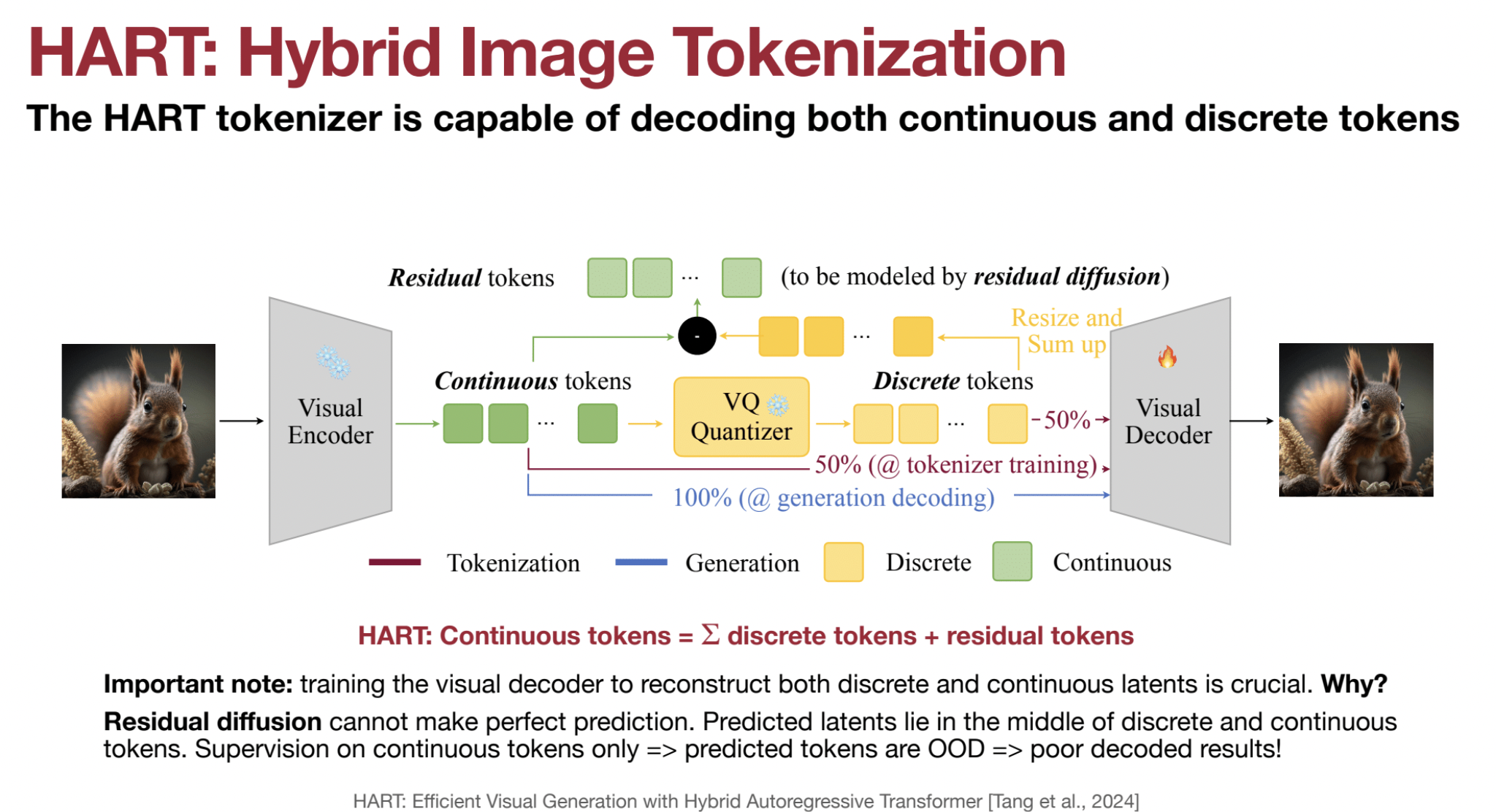

HART introduces a hybrid approach to image tokenization and modeling, using both discrete and continuous tokens. The model consists of two main components:

1. Hybrid Visual Tokenization:

- Visual Encoder: A CNN-based visual encoder transforms the input image into continuous visual tokens in the latent space.

- Multi-Scale Vector Quantization: These continuous tokens are quantized into discrete tokens across multiple scales, similar to the VAR model.

- Residual Tokens: The difference between the accumulated discrete tokens and the original continuous visual features is termed residual tokens, which represent information that cannot be captured by discrete tokens.

- Alternating Training: The visual decoder is trained by randomly selecting either continuous or discrete visual tokens (50% probability each) for reconstructing the input image.

- When the continuous path is selected, the model becomes a conventional continuous autoencoder.

- When the discrete path is selected, the model trains as a standard VQ tokenizer.

- By training with both continuous and discrete tokens, the HART tokenizer can decode continuous features, overcoming the poor generation upper bound imposed by finite VQ codebooks.

2. Hybrid Autoregressive Modeling with Residual Diffusion:

- Scalable-Resolution Autoregressive Transformer:

- The discrete tokens are modeled by a scalable-resolution autoregressive transformer, extending VAR to text-to-image generation.

- It concatenates text tokens with visual tokens during training and uses relative position embeddings to support resolution interpolation.

- Residual Diffusion:

- The continuous residual tokens are modeled using a lightweight residual diffusion process that uses only a 37M parameter MLP, conditioned on the last layer hidden states from the AR transformer.

- This MLP is also conditioned on the discrete tokens predicted in the last VAR sampling step.

- The final image is generated as the sum of the discrete tokens and the residual tokens.

- The residual tokens are easier to learn than the full tokens, allowing HART to achieve optimal quality with fewer sampling steps.
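The relationship between continuous, discrete, and residual tokens can be illustrated with a single-scale simplification in NumPy. HART itself quantizes across multiple scales and predicts the residual with a diffusion MLP; in this sketch the codebook and tokens are random placeholders and the residual is computed exactly rather than predicted.

```python
import numpy as np

rng = np.random.default_rng(0)
codebook = rng.standard_normal((256, 16))      # hypothetical VQ codebook
continuous = rng.standard_normal((64, 16))     # encoder output: 64 tokens

# Discrete path: snap every continuous token to its nearest codebook entry.
dists = ((continuous[:, None, :] - codebook[None, :, :]) ** 2).sum(-1)
indices = dists.argmin(axis=1)                 # discrete token ids, shape (64,)
discrete = codebook[indices]

# Residual tokens: the information lost by quantization. In HART these are
# what the residual diffusion module models; here they are computed directly.
residual = continuous - discrete

# At generation time the decoder input is the sum of both parts.
reconstructed = discrete + residual
print(np.allclose(reconstructed, continuous))

# Alternating training: feed the decoder either path with probability 0.5.
decoder_input = continuous if rng.random() < 0.5 else discrete
print(decoder_input.shape)
```

Because the autoregressive transformer only has to predict `indices`, the diffusion module is left with the smaller job of filling in `residual`.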

🛠️ Key Architectural Features

- Hybrid Tokenizer: HART’s hybrid tokenizer can decode both continuous and discrete tokens. During generation, only continuous tokens are decoded, which are the sum of discrete and residual tokens.

- Scalable-Resolution AR Transformer: HART uses a scalable-resolution AR transformer that can directly generate 1024px images and is more parameter-efficient than methods using cross-attention.

- Relative Position Embeddings: HART converts all absolute position embeddings to relative position embeddings, which include step and token index embeddings compatible with interpolation. This allows the model to better handle higher resolution inputs.

- Lightweight Residual Diffusion: By modeling only the residual tokens, HART’s diffusion module is small (37M parameters) and efficient, requiring only 8 steps for optimal quality, compared to the 30-50 steps needed by other models.

- KV Caching: HART supports KV caching for faster inference, significantly reducing computational costs.
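KV caching is a general technique for autoregressive Transformers, and its effect can be shown with a toy single-head attention layer. The sketch below is not HART's implementation: the projection matrices are random, the names are illustrative, and only one layer is modeled.

```python
import numpy as np

def softmax(x):
    e = np.exp(x - x.max())
    return e / e.sum()

def attend(q, K, V):
    """Single-query attention over all available keys and values."""
    return softmax(K @ q / np.sqrt(q.shape[0])) @ V

rng = np.random.default_rng(0)
d, steps = 16, 5
Wq, Wk, Wv = (rng.standard_normal((d, d)) for _ in range(3))  # toy projections
xs = rng.standard_normal((steps, d))                          # token inputs

# With a cache: project each new token once and append to the cache.
K_cache, V_cache, cached_out = [], [], []
for x in xs:
    K_cache.append(Wk @ x)
    V_cache.append(Wv @ x)
    cached_out.append(attend(Wq @ x, np.stack(K_cache), np.stack(V_cache)))

# Without a cache: recompute keys and values for the whole prefix every step.
naive_out = []
for t in range(steps):
    prefix = xs[: t + 1]
    naive_out.append(attend(Wq @ xs[t], prefix @ Wk.T, prefix @ Wv.T))

print(np.allclose(cached_out, naive_out))
```

The outputs match, but the cached version performs one key and one value projection per step instead of re-projecting the entire prefix.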

📊 Results and Performance

- Improved Reconstruction Quality: HART’s hybrid tokenizer significantly improves reconstruction quality compared to discrete tokenizers.

- Reduces the 1024px reconstruction FID (Fréchet Inception Distance) from 2.11 to 0.30 on the MJHQ-30K dataset.

- Matches the reconstruction quality of the SDXL tokenizer.

- Superior Generation Quality: HART achieves state-of-the-art image generation quality, matching or surpassing diffusion models in multiple text-to-image generation metrics.

- Achieves superior FID compared to all diffusion models on the MJHQ-30K dataset.

- Demonstrates better image-text alignment compared to larger diffusion models such as SDXL.

- Produces images with high detail due to the hybrid tokenization and residual diffusion.

- Efficiency Gains: HART is significantly faster and more efficient than both diffusion and autoregressive models.

- Achieves 4.5-7.7x higher throughput compared to diffusion models.

- Achieves 3.1-5.9x lower latency than state-of-the-art diffusion models.

- Requires 6.9-13.4x less computation compared to diffusion models.

- Class-Conditioned Generation: HART shows better class-conditioned image generation results than MAR with 10.7× lower MACs and 12.9× faster runtime. It offers a 7.8% FID reduction with 4% runtime overhead compared to VAR.

- Ablation Studies:

- Residual diffusion provides significant improvements in FID and inception score.

- A single decoder with alternating training enables faster and better generation convergence.

- Relative position embeddings accelerate convergence when finetuning at higher resolutions.

📚 Training Data:

- HART is trained on text-image pairs drawn from datasets such as ImageNet, JourneyDB, and synthetic data. The images in these datasets are recaptioned with VILA1.5-13B, a vision-language model.

- The paired text-image data is used to train the model to generate images corresponding to the text prompts.

- The model learns to associate features in the image with the concepts described in the text.

🆚 HART vs VILA-U: A Comparison of Visual Generation Models

While both HART and VILA-U are involved in visual generation, they have different primary focuses and technical approaches.

🔑 Primary Function:

- HART is primarily focused on efficient image generation with high quality and speed.

- VILA-U is designed as a “unified foundation model” that integrates visual understanding and generation, indicating it handles both understanding and generating visual content.

⚡ Efficiency Focus:

- HART is designed to be significantly faster than diffusion models while maintaining comparable image generation quality, achieving up to 7.7x speedup. This is achieved through:

- Hybrid tokenization

- Scalable-resolution autoregressive transformer

- A lightweight diffusion model for fine details.

- VILA-U, while aiming for efficiency, focuses more on unifying visual understanding and generation rather than primarily optimizing for generation speed.

🖼️ Text-to-Image Generation:

- HART uses a scalable-resolution autoregressive transformer that:

- Concatenates text tokens with visual tokens during training.

- Uses relative position embeddings to support resolution interpolation.

- Uses a small diffusion model for learning fine details.

- VILA-U incorporates text alignment during visual token training to enhance perception capabilities. It uses a unified next-token prediction objective for both visual and textual tokens and does not use diffusion models like HART.

🧩 Tokenization Approach:

- HART employs a hybrid tokenization method using both discrete and continuous tokens. This is essential for capturing both:

- Discrete tokens: Represent the general image structure.

- Residual tokens: Capture fine details, like eyes and hair.

- VILA-U uses a residual vector quantization method to discretize visual features. While the sources do not provide full details, it appears to rely on a discrete tokenization approach to align visual tokens with textual inputs.

🌊 Use of Diffusion Models:

- HART uses a lightweight diffusion model (37 million parameters) to model residual tokens. It predicts the difference between continuous and discrete tokens and is conditioned on the output of the autoregressive transformer.

- VILA-U does not explicitly use diffusion models in the same way as HART. Instead, it uses a unified next-token prediction objective for both visual and textual tokens.

🎯 Focus of Models:

- HART has a strong emphasis on efficient high-resolution image generation, with specific techniques such as:

- Hybrid tokenization

- A lightweight diffusion model.

- VILA-U is designed as a more general-purpose model, focusing on unifying visual understanding and generation.