📚 Common Abstractions for Neural Network Computations

This document reviews the main themes and key takeaways from **Deep Learning Systems: Algorithms and Implementation**, a course at Carnegie Mellon University taught by J. Zico Kolter and Tianqi Chen.

📚 I. Introduction to Neural Network Library Abstractions

- Briefly reviews deep learning models (e.g., multilayer perceptrons) and automatic differentiation algorithms.

- Introduces the concept of composing these elements into an end-to-end deep learning library.

🕰 II. Programming Abstractions: A Historical Perspective

- Explores different programming abstractions for building machine learning models.

- Reviews the evolution of deep learning frameworks, highlighting key design choices.

🔍 III. Case Studies: Caffe 1.0, TensorFlow 1.0, and PyTorch

A. Caffe 1.0: The Layer Abstraction

- Introduces the Layer as the core abstraction, defining forward and backward functions for computation and gradient propagation.

- Discusses the advantages and limitations of this in-place backpropagation approach.
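The layer abstraction can be sketched in plain Python. This is a minimal illustration, not Caffe's actual C++ API: the names `ScaleLayer`, `ReLULayer`, and `forward_backward` are hypothetical, blobs are modeled as Python lists, and `forward` returns its output instead of writing into a `top` argument.

```python
class ScaleLayer:
    """Hypothetical layer: multiplies every input by one learnable weight."""

    def __init__(self, weight):
        self.weight = weight
        self.weight_grad = 0.0

    def forward(self, bottom):
        self.bottom = bottom  # cache the input for the backward pass
        return [self.weight * x for x in bottom]

    def backward(self, top_grad):
        # Gradient w.r.t. the weight, then gradient w.r.t. the input.
        self.weight_grad = sum(x * g for x, g in zip(self.bottom, top_grad))
        return [self.weight * g for g in top_grad]


class ReLULayer:
    """Hypothetical layer: elementwise max(x, 0)."""

    def forward(self, bottom):
        self.bottom = bottom
        return [max(x, 0.0) for x in bottom]

    def backward(self, top_grad):
        # The gradient passes through only where the input was positive.
        return [g if x > 0 else 0.0 for x, g in zip(self.bottom, top_grad)]


def forward_backward(layers, inputs):
    """Run all forward calls, then all backward calls in reverse order."""
    activations = inputs
    for layer in layers:
        activations = layer.forward(activations)
    grad = [1.0] * len(activations)  # gradient of sum(outputs) w.r.t. outputs
    for layer in reversed(layers):
        grad = layer.backward(grad)
    return activations, grad


layers = [ScaleLayer(2.0), ReLULayer()]
outputs, input_grad = forward_backward(layers, [1.0, -3.0])
print(outputs, input_grad, layers[0].weight_grad)
```

Each layer owns both its computation and its gradient rule, which is why backpropagation feels natural here and also why gradient logic and model composition end up coupled.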

B. TensorFlow 1.0: Computational Graph and Declarative Programming

- Introduces the concept of computational graphs for describing forward computations and extending them for gradient calculations.

- Highlights the advantages of declarative programming:

  - 📈 Optimization opportunities.

  - 🧩 Separation of declaration and execution.
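To make the separation of declaration and execution concrete, here is a toy declarative graph in plain Python. It is only a sketch under assumptions: `Node`, `placeholder`, `exp`, and `run` are hypothetical names, not TensorFlow APIs. Building the expression records operations without computing anything; values appear only when `run` is called with a feed dictionary.

```python
import math


class Node:
    """A symbolic graph node: records an operation, computes nothing yet."""

    def __init__(self, op, inputs=(), value=None):
        self.op = op
        self.inputs = tuple(inputs)
        self.value = value  # used only by constant nodes

    def __add__(self, other):
        return Node("add", (self, as_node(other)))

    def __mul__(self, other):
        return Node("mul", (self, as_node(other)))


def as_node(value):
    """Wrap plain Python numbers as constant nodes."""
    return value if isinstance(value, Node) else Node("const", value=value)


def placeholder():
    return Node("placeholder")


def exp(node):
    return Node("exp", (node,))


def run(node, feed_dict):
    """Evaluate the graph; this is the only place where numbers are computed."""
    cache = {}

    def evaluate(n):
        if n in cache:
            return cache[n]
        if n.op == "placeholder":
            result = feed_dict[n]
        elif n.op == "const":
            result = n.value
        else:
            args = [evaluate(i) for i in n.inputs]
            if n.op == "exp":
                result = math.exp(args[0])
            elif n.op == "add":
                result = args[0] + args[1]
            elif n.op == "mul":
                result = args[0] * args[1]
            else:
                raise ValueError(f"unknown op: {n.op}")
        cache[n] = result
        return result

    return evaluate(node)


# Declaration: no arithmetic happens on these four lines.
v1 = placeholder()
v2 = exp(v1)
v3 = v2 + 1
v4 = v2 * v3

# Execution: the whole graph is known before any value is computed.
value4 = run(v4, {v1: 0.0})
print(value4)
```

Because `run` sees the full graph before executing it, a real system could rewrite, prune, or distribute the graph at that point.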

C. PyTorch: Imperative Automatic Differentiation and Dynamic Computation Graphs

- Presents the imperative programming style, where computations are executed as the computational graph is constructed.

- Discusses the benefits of this approach:

  - 🔄 Dynamic graph construction.

  - ⚡ Flexibility in mixing Python control flow.

  - 🐞 Ease of debugging.

- Compares and contrasts PyTorch’s approach with TensorFlow’s declarative style, considering optimization and flexibility trade-offs.
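The imperative style can be illustrated with a small scalar autodiff class. This is a sketch under assumptions, not PyTorch's implementation: `Value` and its fields are hypothetical names. Every operation computes its result immediately and records its parents, so an ordinary Python `if` can decide the shape of the graph from a runtime value.

```python
import math


class Value:
    """Scalar computed eagerly that remembers how it was produced."""

    def __init__(self, data, parents=(), local_grads=()):
        self.data = data
        self.grad = 0.0
        self.parents = parents          # inputs of the op that made this value
        self.local_grads = local_grads  # d(self) / d(parent) for each parent

    def __add__(self, other):
        other = other if isinstance(other, Value) else Value(other)
        return Value(self.data + other.data, (self, other), (1.0, 1.0))

    def __mul__(self, other):
        other = other if isinstance(other, Value) else Value(other)
        return Value(self.data * other.data, (self, other), (other.data, self.data))

    def exp(self):
        out = math.exp(self.data)
        return Value(out, (self,), (out,))

    def backward(self):
        # Order nodes so each one is processed after everything that uses it.
        order, seen = [], set()

        def visit(v):
            if id(v) not in seen:
                seen.add(id(v))
                for parent in v.parents:
                    visit(parent)
                order.append(v)

        visit(self)
        self.grad = 1.0
        for v in reversed(order):
            for parent, local in zip(v.parents, v.local_grads):
                parent.grad += local * v.grad


v1 = Value(0.0)
v2 = v1.exp()
v3 = v2 + 1
v4 = v2 * v3
if v4.data > 0.5:   # plain Python control flow on a value that already exists
    v5 = v4 * 2
else:
    v5 = v4
v5.backward()
print(v5.data, v1.grad)
```

Intermediate values such as `v4.data` can be printed or inspected in a debugger at any point, which is the debugging advantage noted above.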

📊 Comparison of Caffe 1.0, TensorFlow 1.0, and PyTorch

| Framework | Execution Example | Pros | Cons |
|---|---|---|---|
| Caffe 1.0 | <code>class Layer:<br>&nbsp;&nbsp;&nbsp;&nbsp;def forward(bottom, top): ...<br>&nbsp;&nbsp;&nbsp;&nbsp;def backward(top, propagate_down, bottom): ...</code><br><br>This snippet shows the layer interface in Caffe 1.0. The forward function propagates data from input (bottom) to output (top), while backward handles gradient calculations. | - Natural backpropagation: The layer abstraction aligns well with forward and backward passes, making implementation intuitive.<br>- Fast execution due to an efficient C++ backend. | - Coupled gradient computation and module composition: Defining complex modules like ResNet can be challenging.<br>- Limited flexibility for dynamic or non-layer-based models. |
| TensorFlow 1.0 | <code>v1 = tf.Variable()<br>v2 = tf.exp(v1)<br>v3 = v2 + 1<br>v4 = v2 * v3<br>sess = tf.Session()<br>value4 = sess.run(v4, feed_dict={v1: numpy.array()})</code><br><br>This example illustrates TensorFlow's declarative programming style. The computational graph is defined first and then executed within a session. | - Optimization opportunities: Analyzing the complete graph enables optimizations such as dead-code elimination and distributed execution.<br>- Scalable computations: Separating declaration from execution allows running on remote hardware for large-scale tasks. | - Less flexible: Integrating control flow requires special graph nodes (tf.cond, tf.while_loop) instead of plain Python statements.<br>- Difficult debugging: Inspecting intermediate values requires running the graph in a session, making interactive debugging cumbersome. |
| PyTorch | <code>v1 = ndl.Tensor()<br>v2 = ndl.exp(v1)<br>v3 = v2 + 1<br>v4 = v2 * v3<br>if v4.numpy() &gt; 0.5:<br>&nbsp;&nbsp;&nbsp;&nbsp;v5 = v4 * 2<br>else:<br>&nbsp;&nbsp;&nbsp;&nbsp;v5 = v4<br>v5.backward()</code><br><br>This code exemplifies PyTorch's imperative programming style (written here with ndl, the course's needle library, which follows the same style). Computations are executed immediately, enabling dynamic graph construction based on runtime values. | - Flexibility and ease of use: Dynamic graph construction and direct tensor interaction make it user-friendly.<br>- Dynamic computational graphs: Beneficial for tasks with variable input sizes like NLP or reinforcement learning.<br>- Easier debugging with native Python support for control flow and interactive tools. | - Fewer optimization opportunities: Eager execution may hinder optimizations that benefit from a static graph.<br>- Higher memory usage in certain cases compared to static graph frameworks. |

🔑 Key Insights:

- Caffe 1.0: Offers a natural way to implement backpropagation through its layer-based approach, but managing complex architectures like ResNet can be cumbersome.

- TensorFlow 1.0: Facilitates optimization and scalability with a declarative style, making it suitable for large-scale production systems. However, it sacrifices flexibility and ease of use.

- PyTorch: Provides flexibility and a user-friendly experience with dynamic graph construction, making it ideal for research and development. However, it may miss certain optimization opportunities available in static graph frameworks.

💡 Framework Selection:

- For Research and Development: PyTorch is often preferred due to its flexibility, dynamic graph support, and ease of debugging.

- For Production Environments: TensorFlow 1.0 is more suitable when scalability, optimization, and deployment on distributed systems are crucial.

- Legacy Usage: While Caffe 1.0 is still used in some niche cases, it has largely been superseded by newer frameworks like PyTorch and TensorFlow.

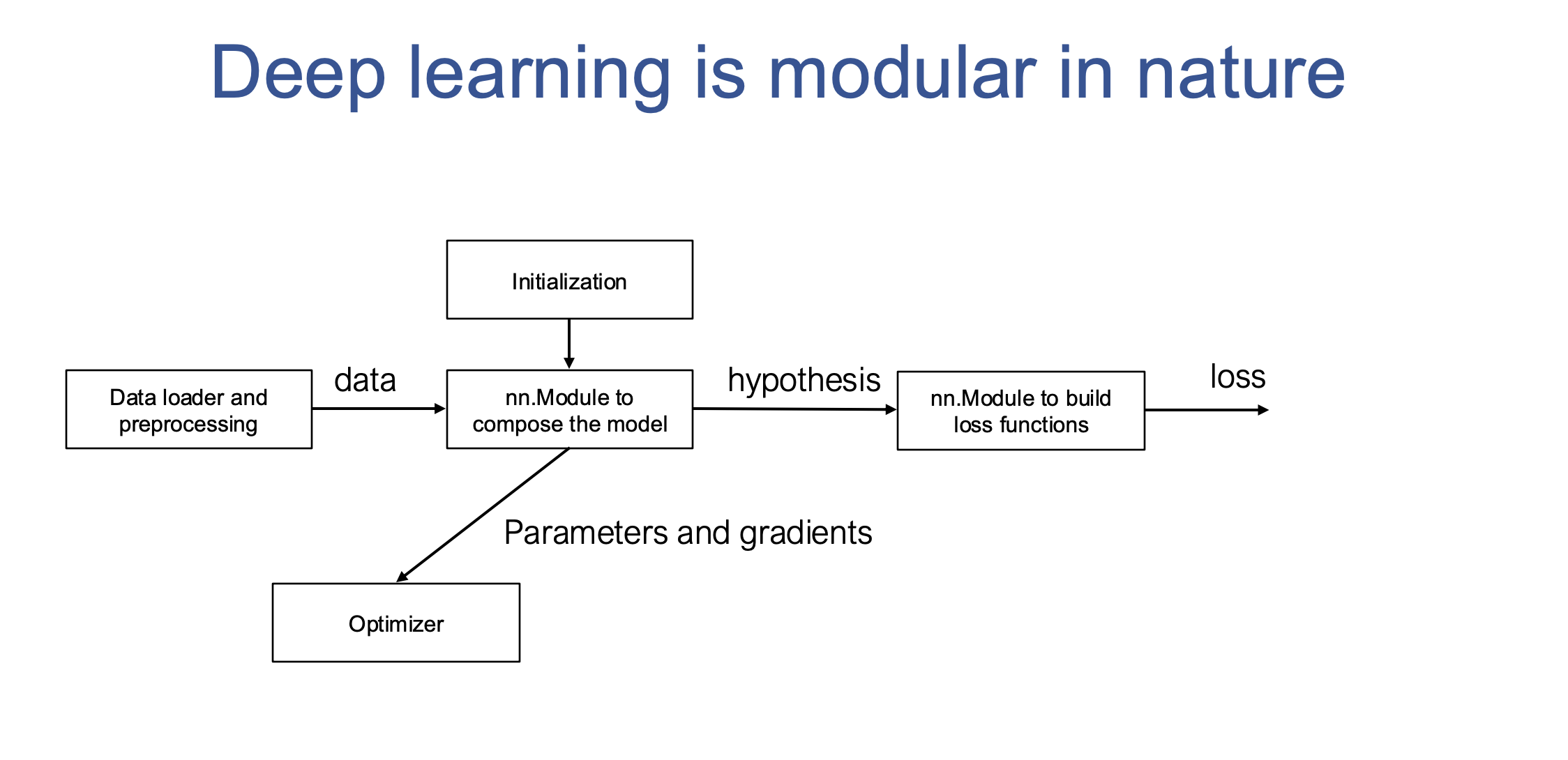

🛠 IV. Modularity in Deep Learning: From Concepts to Code

- Emphasizes the inherent modularity of machine learning, encompassing:

  - 📊 Hypothesis class.

  - 🧮 Loss function.

  - 🚀 Optimization method.

- Explains how this modularity translates into modular components in code, enhancing flexibility and customization.
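The three ingredients above map onto three separate pieces of code. The following is a minimal sketch with hypothetical names (`linear_hypothesis`, `squared_loss`, `gradient_descent`) on a one-parameter toy problem; the gradient is written by hand here only to keep the example free of dependencies.

```python
def linear_hypothesis(theta, x):
    """Hypothesis class: h(x) = theta * x with one scalar parameter."""
    return theta * x


def squared_loss(prediction, target):
    """Loss function: squared error for a single example."""
    return (prediction - target) ** 2


def squared_loss_grad(theta, x, y):
    """d/dtheta of squared_loss(linear_hypothesis(theta, x), y)."""
    return 2 * (linear_hypothesis(theta, x) - y) * x


def gradient_descent(grad_fn, theta, data, lr, epochs):
    """Optimization method: full-batch gradient descent on the mean gradient."""
    for _ in range(epochs):
        grad = sum(grad_fn(theta, x, y) for x, y in data) / len(data)
        theta -= lr * grad
    return theta


data = [(1.0, 3.0), (2.0, 6.0), (3.0, 9.0)]  # generated by y = 3x
theta = gradient_descent(squared_loss_grad, 0.0, data, lr=0.05, epochs=200)
mean_loss = sum(squared_loss(linear_hypothesis(theta, x), y) for x, y in data) / len(data)
print(theta, mean_loss)
```

Swapping the optimizer or the loss means replacing one function while the others stay untouched, which is the flexibility the modular view is meant to provide.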

🏗 V. Composing Deep Learning Models: The Power of nn.Module

A. Recursive Decomposition: Breaking Down Complex Models

- Demonstrates the process of decomposing a complex model (e.g., ResNet) into smaller, reusable modules:

  - 🔹 Linear layers.

  - 🔹 ReLU activations.

  - 🔹 Residual blocks.

- Highlights the importance of modularity for building and understanding deep learning models.
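The decomposition can be sketched with small callable classes. This is an illustration under assumptions: the classes below are hypothetical, forward-only stand-ins operating on Python lists, not the course's or PyTorch's modules. A residual block is built by wrapping a sequence of smaller modules, and the full model is again a sequence.

```python
class Linear:
    """y = W x + b, with W stored as a list of rows."""

    def __init__(self, weight, bias):
        self.weight = weight
        self.bias = bias

    def __call__(self, x):
        return [sum(w * xi for w, xi in zip(row, x)) + b
                for row, b in zip(self.weight, self.bias)]


class ReLU:
    def __call__(self, x):
        return [max(v, 0.0) for v in x]


class Sequential:
    """Apply modules one after another."""

    def __init__(self, *modules):
        self.modules = modules

    def __call__(self, x):
        for module in self.modules:
            x = module(x)
        return x


class Residual:
    """Compute x + fn(x); fn can be any module with matching shapes."""

    def __init__(self, fn):
        self.fn = fn

    def __call__(self, x):
        return [a + b for a, b in zip(x, self.fn(x))]


# A residual block made of smaller modules, then a model made of blocks.
block = Residual(Sequential(Linear([[1.0, 0.0], [0.0, -1.0]], [0.0, 0.0]), ReLU()))
model = Sequential(block, Linear([[1.0, 1.0]], [0.5]))
output = model([1.0, 2.0])
print(output)
```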

B. nn.Module: The Building Block for Modularity

- Introduces nn.Module as a fundamental data structure for representing modules.

- Emphasizes the “tensor in, tensor out” principle for composing modules seamlessly.

- Key functionalities of nn.Module:

  - 🔑 Managing trainable parameters.

  - 🛠 Initialization.

  - 🔄 Handling training/inference modes.
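The responsibilities listed above can be sketched with a small base class. This is an assumption-laden illustration rather than PyTorch's real `nn.Module`: `Parameter`, `Module`, `Scale`, and `Sequential` are simplified, hypothetical versions that only show recursive parameter collection and train/eval mode switching.

```python
class Parameter:
    """Marks a value as trainable (a stand-in for a tensor)."""

    def __init__(self, data):
        self.data = data


class Module:
    def __init__(self):
        self.training = True

    def _children(self):
        """Yield sub-modules stored as attributes or inside lists/tuples."""
        for value in self.__dict__.values():
            items = value if isinstance(value, (list, tuple)) else [value]
            for item in items:
                if isinstance(item, Module):
                    yield item

    def parameters(self):
        """Collect parameters from this module and, recursively, its children."""
        params = [v for v in self.__dict__.values() if isinstance(v, Parameter)]
        for child in self._children():
            params.extend(child.parameters())
        return params

    def train(self, mode=True):
        self.training = mode
        for child in self._children():
            child.train(mode)
        return self

    def eval(self):
        return self.train(False)

    def __call__(self, *args):
        return self.forward(*args)


class Scale(Module):
    def __init__(self, init):
        super().__init__()
        self.weight = Parameter(init)  # initialization happens at construction

    def forward(self, x):
        return self.weight.data * x


class Sequential(Module):
    def __init__(self, *modules):
        super().__init__()
        self.modules = list(modules)

    def forward(self, x):
        for module in self.modules:
            x = module(x)
        return x


model = Sequential(Scale(2.0), Sequential(Scale(3.0)))
num_params = len(model.parameters())
model.eval()
print(num_params, model(1.5), model.modules[0].training)
```

An optimizer only needs the flat list returned by `parameters()`, so it never has to know how the model is nested.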

🔑 VI. Other Key Modular Components

A. Loss Functions

- Special modules for evaluating model performance, following a “tensor in, scalar out” convention.
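As one example of the “tensor in, scalar out” convention, here is a sketch of mean softmax cross-entropy in plain Python. The choice of this particular loss and the function name `softmax_cross_entropy` are assumptions for illustration; logits are modeled as a list of rows and labels as class indices.

```python
import math


def softmax_cross_entropy(logits, labels):
    """Mean softmax cross-entropy over a batch; returns a single float."""
    total = 0.0
    for row, label in zip(logits, labels):
        shift = max(row)  # subtract the max before exponentiating for stability
        log_sum_exp = shift + math.log(sum(math.exp(z - shift) for z in row))
        total += log_sum_exp - row[label]
    return total / len(labels)


# A batch of two examples with two classes each, both labeled class 0.
loss = softmax_cross_entropy([[0.0, 0.0], [2.0, 0.0]], [0, 0])
print(loss)
```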

B. Optimizers

- Modules responsible for updating model weights based on gradients.

- Manage auxiliary states and regularization.
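A sketch of an optimizer that carries auxiliary state and applies regularization: SGD with momentum and L2 weight decay. The `Param` and `SGD` classes are hypothetical, and the update `v = momentum * v + grad` is one common momentum convention; other formulations scale the gradient term by `1 - momentum`.

```python
class Param:
    """Stand-in for a trainable tensor holding a value and its gradient."""

    def __init__(self, data):
        self.data = data
        self.grad = 0.0


class SGD:
    def __init__(self, params, lr, momentum=0.0, weight_decay=0.0):
        self.params = list(params)
        self.lr = lr
        self.momentum = momentum
        self.weight_decay = weight_decay
        self.velocity = [0.0 for _ in self.params]  # auxiliary state per parameter

    def step(self):
        for i, p in enumerate(self.params):
            grad = p.grad + self.weight_decay * p.data  # L2 regularization term
            self.velocity[i] = self.momentum * self.velocity[i] + grad
            p.data -= self.lr * self.velocity[i]

    def zero_grad(self):
        for p in self.params:
            p.grad = 0.0


w = Param(1.0)
opt = SGD([w], lr=0.1, momentum=0.9)
for _ in range(2):
    w.grad = 2.0  # pretend backpropagation produced this gradient
    opt.step()
    opt.zero_grad()
print(w.data)
```

The velocity list lives inside the optimizer rather than the model, which keeps the update rule swappable without touching module code.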

C. Initialization

- Techniques for setting initial parameter values to ensure stable and effective training.
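Two widely used schemes, Xavier (Glorot) uniform and Kaiming (He) uniform, are sketched below as illustrative choices; the notes above do not name specific techniques, and the function names and list-of-lists layout are assumptions. Kaiming uniform for ReLU networks samples from U(-b, b) with b = sqrt(6 / fan_in), which gives a weight variance of 2 / fan_in.

```python
import math
import random


def xavier_uniform(fan_in, fan_out, rng):
    """Sample a fan_in x fan_out matrix from U(-b, b), b = sqrt(6 / (fan_in + fan_out))."""
    bound = math.sqrt(6.0 / (fan_in + fan_out))
    return [[rng.uniform(-bound, bound) for _ in range(fan_out)] for _ in range(fan_in)]


def kaiming_uniform(fan_in, fan_out, rng):
    """Sample a fan_in x fan_out matrix from U(-b, b), b = sqrt(6 / fan_in)."""
    bound = math.sqrt(6.0 / fan_in)
    return [[rng.uniform(-bound, bound) for _ in range(fan_out)] for _ in range(fan_in)]


def variance(matrix):
    values = [v for row in matrix for v in row]
    mean = sum(values) / len(values)
    return sum((v - mean) ** 2 for v in values) / len(values)


rng = random.Random(0)  # fixed seed so the sketch is reproducible
weights = kaiming_uniform(256, 128, rng)
empirical_var = variance(weights)
target_var = 2.0 / 256
print(empirical_var, target_var)
```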

D. Data Loaders and Preprocessing

- Pipelines for loading, augmenting, and preparing data for training.
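A minimal sketch of such a pipeline: an iterable loader that shuffles indices, applies a per-sample augmentation, and yields mini-batches. `DataLoader` here is a simplified, hypothetical class (not `torch.utils.data.DataLoader`), and `random_flip` is a made-up augmentation for list-shaped samples.

```python
import random


def random_flip(sample, rng):
    """Hypothetical augmentation: reverse the sample half of the time."""
    return sample[::-1] if rng.random() < 0.5 else sample


class DataLoader:
    """Yield mini-batches, optionally shuffled and augmented."""

    def __init__(self, dataset, batch_size, shuffle=False, transform=None, seed=0):
        self.dataset = dataset
        self.batch_size = batch_size
        self.shuffle = shuffle
        self.transform = transform
        self.rng = random.Random(seed)

    def __iter__(self):
        indices = list(range(len(self.dataset)))
        if self.shuffle:
            self.rng.shuffle(indices)  # a new order on every pass over the data
        for start in range(0, len(indices), self.batch_size):
            chunk = indices[start:start + self.batch_size]
            batch = [self.dataset[i] for i in chunk]
            if self.transform is not None:
                batch = [self.transform(sample, self.rng) for sample in batch]
            yield batch


dataset = [[i, i + 10] for i in range(5)]
loader = DataLoader(dataset, batch_size=2, shuffle=True, transform=random_flip)
batches = list(loader)
print([len(batch) for batch in batches])
```

The last batch is smaller when the dataset size is not a multiple of the batch size; real loaders usually offer an option to drop it.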

🚀 VII. Conclusion and Future Directions

- Recap of the modular nature of deep learning and the benefits of composing models from reusable components.

- Encourages reflection on:

  - 🔄 The evolution of programming abstractions.

  - 🧩 The advantages of separating gradient computation from module composition.

- Suggests exploring additional modular components beyond those discussed.

📚 Glossary of Key Terms

- Layer: A fundamental building block in neural networks, performing a specific operation on input data, like a linear transformation or an activation function.

- Computational Graph: A directed graph representing mathematical operations and data dependencies in a deep learning model.

- Declarative Programming: A programming paradigm where you specify what you want to compute, and the system determines how to execute it.

- Imperative Programming: A programming paradigm where you provide step-by-step instructions for the computer to execute.

- nn.Module: A base class in PyTorch and similar frameworks for defining modular, reusable components of neural networks.

- Tensor: A multi-dimensional array used to represent data in deep learning frameworks.

- Loss Function: A function that measures the difference between model predictions and the ground truth labels, used to guide model training.

- Optimizer: An algorithm that updates model weights based on the calculated gradients to minimize the loss function.

- Weight Initialization: The process of assigning initial values to the weights of a neural network, which can significantly impact training performance.

- Data Augmentation: Techniques for artificially expanding the training dataset by applying random transformations to the data, improving model robustness and generalization.

- Gradient Computation: The process of calculating the gradients of the loss function with respect to the model parameters, used to guide weight updates.

- Module Composition: The process of combining individual modules to build more complex neural network architectures.

- Learning Rate Scheduler: A component that dynamically adjusts the learning rate during training to improve convergence speed and stability.

- Metric Tracker: A component that monitors and logs various performance metrics during training and evaluation, providing insights into model behavior.