Pruning and Sparsity

Modern AI models are becoming increasingly large, demanding substantial computational resources and memory. This creates a gap between the computational demands of these models and the available hardware capabilities. Pruning addresses this gap by reducing model size, memory footprint, and ultimately, energy consumption.

Part I: Intro

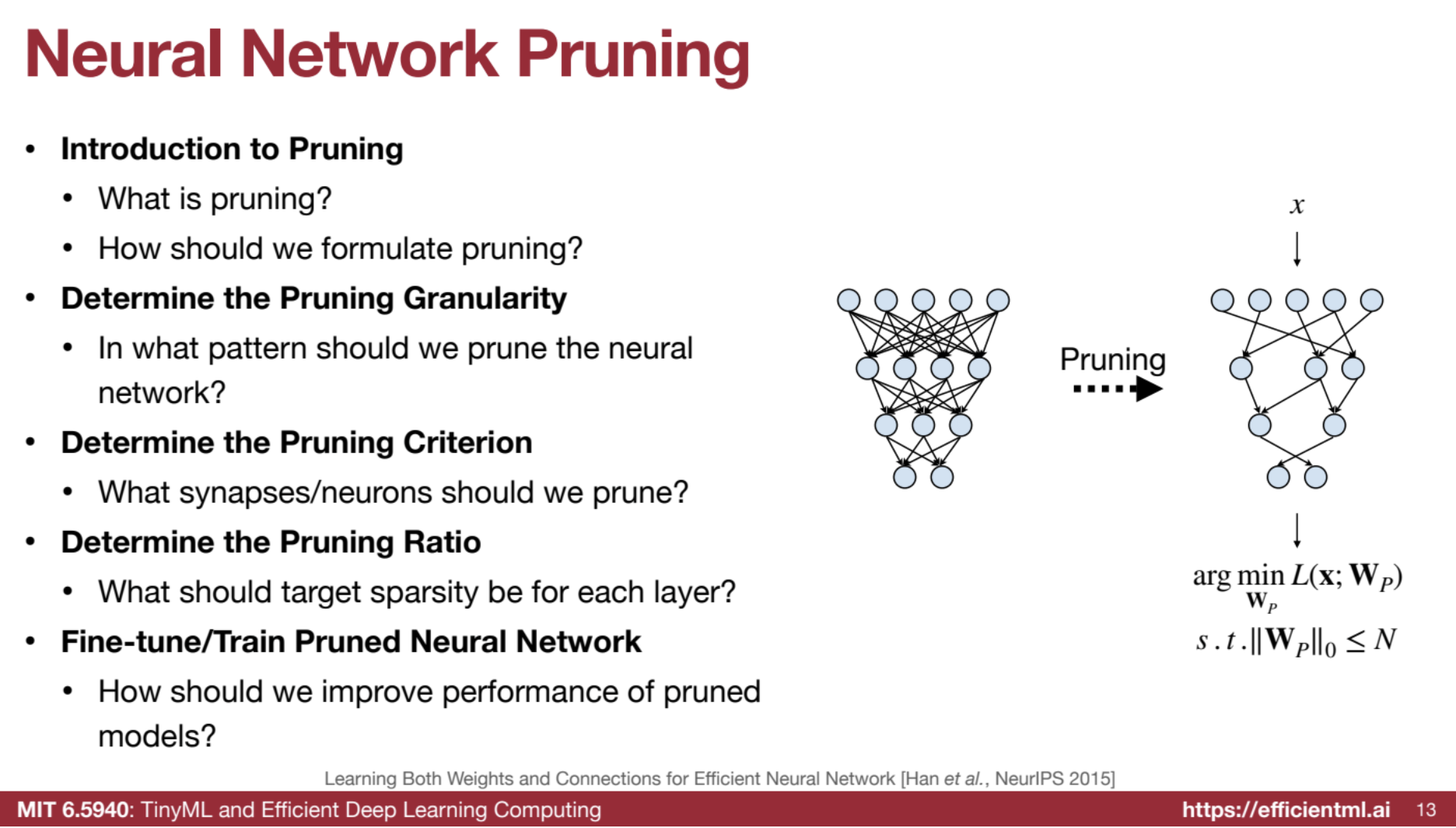

Introduction to Pruning

Pruning involves removing redundant synapses and neurons from a neural network to achieve a smaller, more efficient model. This process is inspired by the natural pruning observed in the human brain during adolescence.

Pruning in the Industry 🚀

Pruning is a popular technique to reduce neural network size and complexity, boosting efficiency. It’s widely adopted in industry, especially for large language models (LLMs).

-

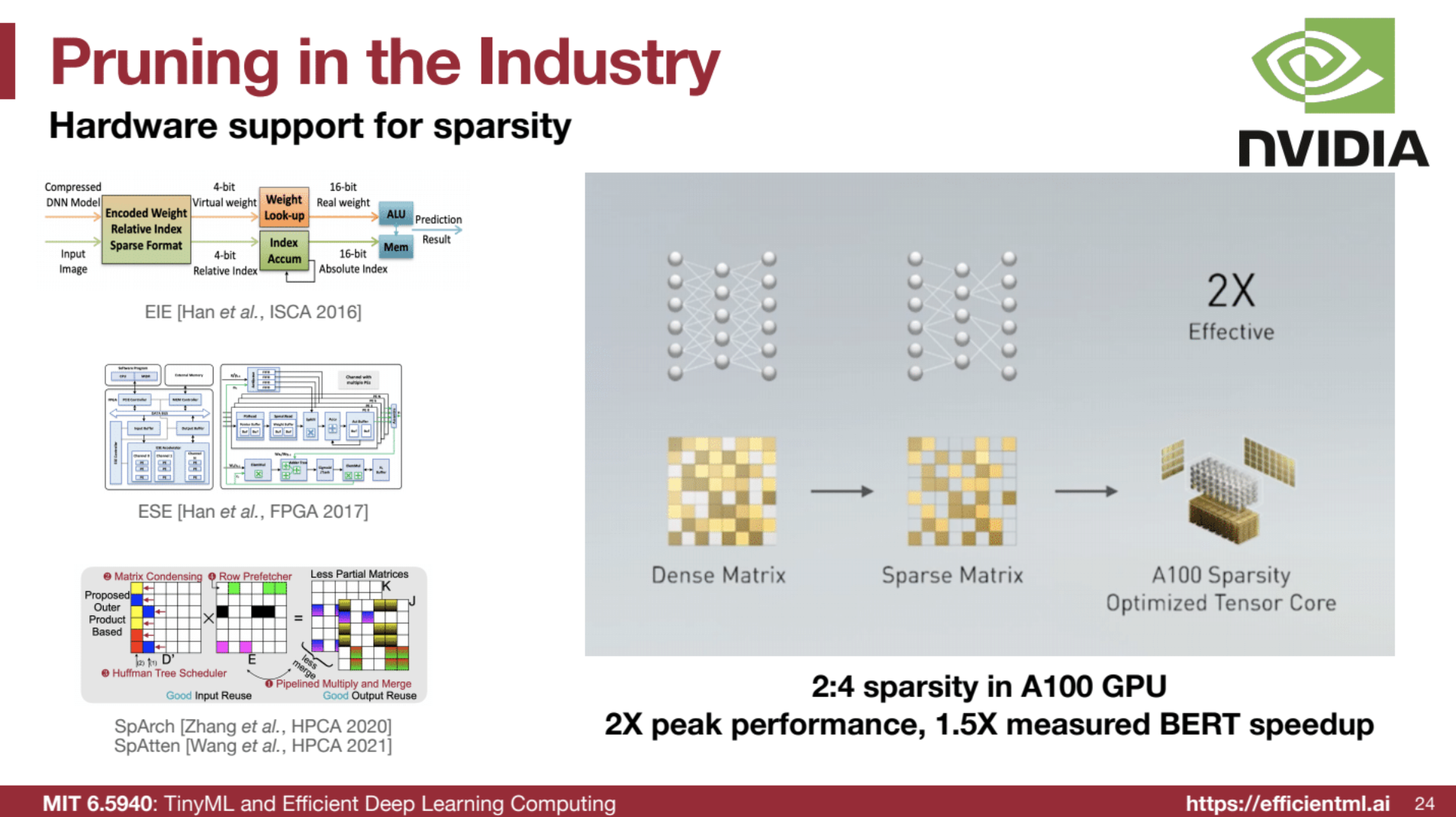

NVIDIA’s 2:4 Sparsity in A100 GPUs: Pruning two out of every four elements achieves a 2x theoretical speedup and 1.5x measured speedup on BERT models.

-

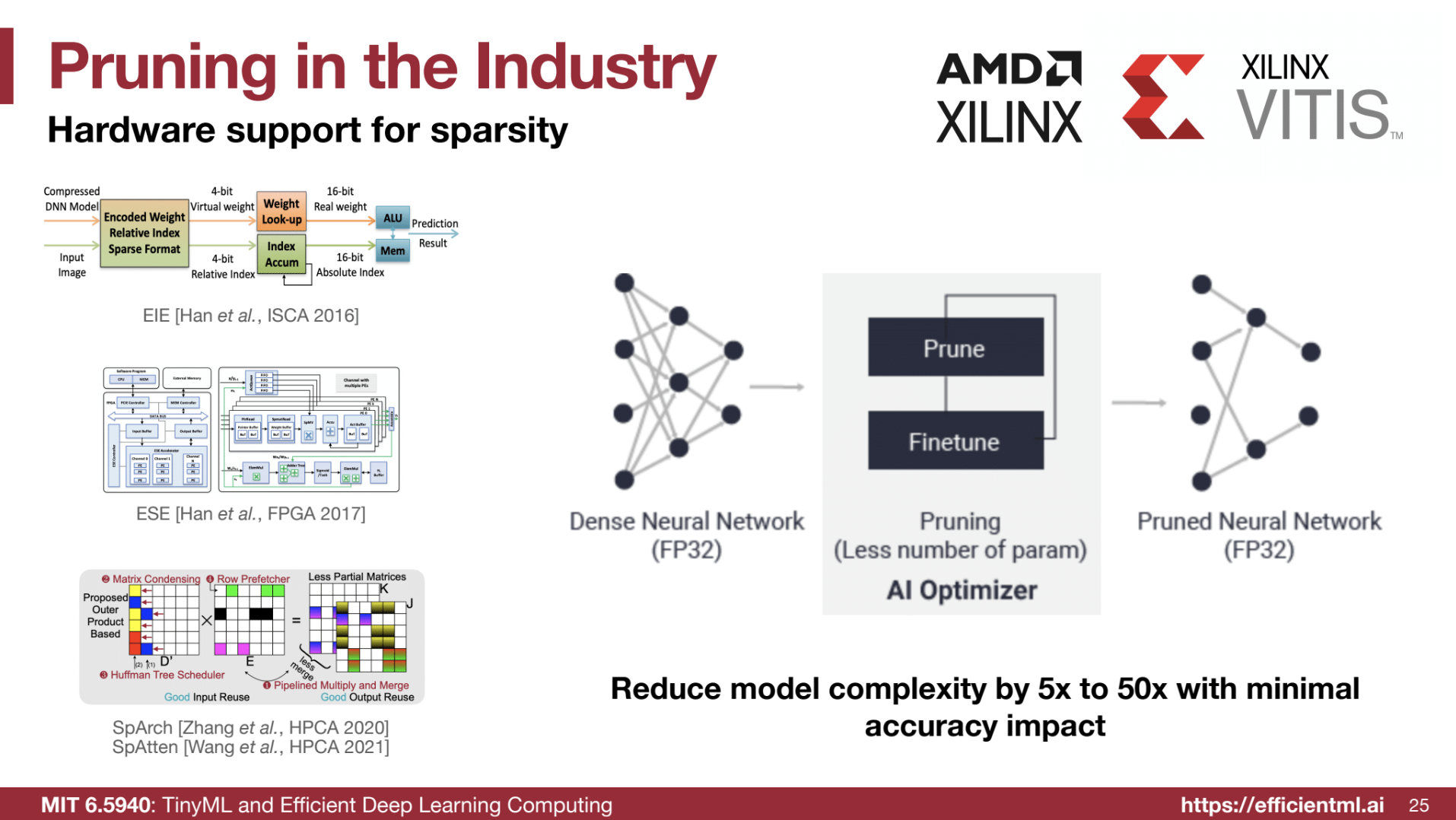

AMD’s AI Optimizer: Originally developed by a startup, this tool prunes and fine-tunes models for faster inference, optimizing for sparsity.

-

MLPerf Benchmark - Llama 2 70B: Through depth and width pruning, Llama 2 70B achieved a 2.5x speedup while maintaining 99% accuracy by reducing layers and intermediate dimensions.

-

Energy Savings from Reduced Data Movement: Since data movement is over 200x more energy-intensive than arithmetic, pruning reduces data transfer, offering substantial energy savings. ⚡

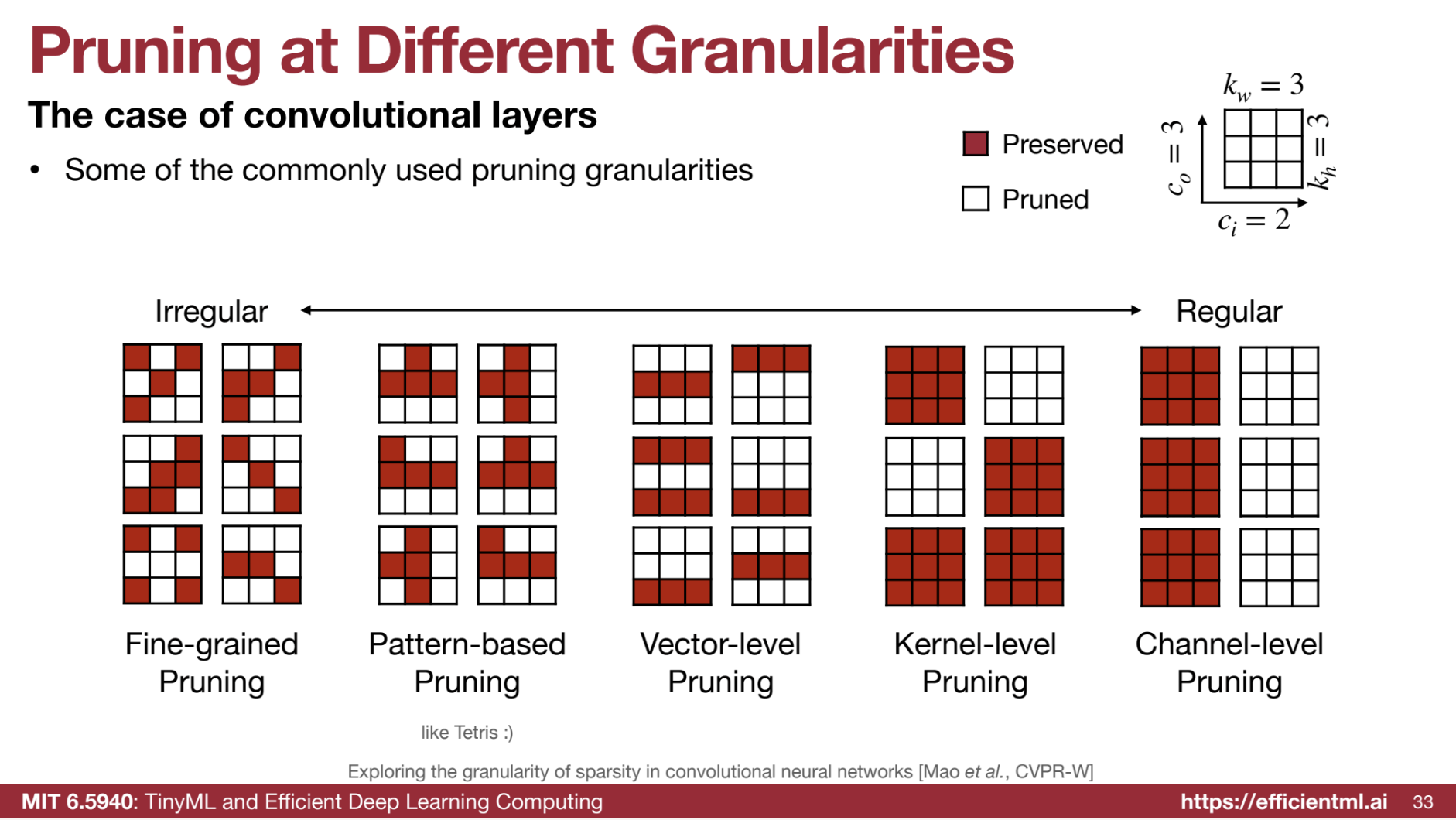

Pruning Granularity

Pruning can be applied at different levels: fine-grained (individual weights), coarse-grained (groups of weights), or channel level (entire channels). Each granularity presents trade-offs in terms of flexibility, compression ratio, and hardware acceleration.

| Granularity | Description | Flexibility | Acceleration | Compression Ratio | Suitability |
|---|---|---|---|---|---|
| Fine-grained/Unstructured | Prunes individual weights. | Most flexible, allowing pruning of any weight. | Difficult to accelerate on hardware like GPUs due to irregular sparsity patterns; requires specialized hardware. | Highest compression ratio by targeting any redundant weight. | Ideal for maximizing compression with specialized hardware. |
| Coarse-grained/Structured | Prunes larger units, such as rows, columns, or channels. | Less flexible than fine-grained, as entire units are pruned. | Easier to accelerate due to smaller, regular matrices allowing efficient dense operations. | Lower compression ratio than fine-grained pruning. | Suitable when ease of acceleration and hardware compatibility are prioritized. |
| Pattern-based | Prunes weights based on patterns like N:M sparsity, where N out of every M elements are pruned. | Moderate flexibility, as the pruning pattern limits weight selection. | Accelerated on hardware if pattern is supported; e.g., 2:4 sparsity on NVIDIA Ampere GPUs offers potential 2x speedup. | Moderate compression ratio; e.g., 2:4 sparsity achieves 50% sparsity. | Balanced option for compression and acceleration on supported hardware like NVIDIA GPUs. |
| Channel-level (Convolutional) | Prunes entire channels, reducing network channels. | Least flexible, as pruning is restricted to the channel level. | Directly speeds up inference by reducing channels, resulting in smaller networks. | Lower compression ratio than finer-grained methods; e.g., MobileNet achieves only 30% pruning with channel pruning. | Best when direct speedups are desired, even with lower compression ratios. Can be used for LLM model parallelism. |

Choosing the appropriate pruning granularity depends on the specific application, desired compression ratio, accuracy requirements, and available hardware.

Pruning Criteria

Various criteria determine which elements to prune. Common methods include:

- Magnitude-based Pruning: Pruning weights with small absolute values, assuming they have less impact.

- Scaling-based Pruning: Associating a learnable scaling factor with each filter, removing filters with small scaling factors.

- Second-order-based Pruning: Approximating the impact of pruning on the loss function using a Taylor series expansion.

- Activation-based Pruning: Analyzing the activation patterns to identify and prune less important neurons.

- Regression-based Pruning: Minimizing the reconstruction error of the pruned layer output instead of the overall loss.

In every case the aim is to remove the least important weights or neurons so that the impact on model performance is minimal. The sections below cover each criterion in more detail.

🎚️ Magnitude-based Pruning

A straightforward method that assumes weights with larger absolute values are more important. Small-magnitude weights are pruned, preserving those with larger values:

- Element-wise Pruning: Individual weights are compared by absolute value, and the smallest are pruned.

- Row-wise or Structure-wise Pruning: The importance of a row or structure (like a convolutional filter) is calculated using norms (e.g., L1 or L2). Structures with the smallest norm values are pruned.

The choice of norm (L1, L2, or Lp) depends on the application and desired properties.
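As a small illustration of the two variants above, here is a sketch in plain Python (nested lists, no dependencies). The function names `prune_elementwise` and `prune_rows` and the 2x2 example matrix are made up for this note, and a real framework would use masks on tensors instead.

```python
def prune_elementwise(weights, sparsity):
    """Zero the `sparsity` fraction of entries with the smallest |w|."""
    magnitudes = sorted(abs(w) for row in weights for w in row)
    k = int(len(magnitudes) * sparsity)          # how many weights to remove
    if k == 0:
        return [row[:] for row in weights]
    threshold = magnitudes[k - 1]                # largest magnitude still pruned
    # Ties at the threshold are all pruned, so sparsity can overshoot slightly.
    return [[0.0 if abs(w) <= threshold else w for w in row] for row in weights]


def prune_rows(weights, num_rows, p=2):
    """Zero the `num_rows` rows with the smallest Lp norm."""
    norms = [sum(abs(w) ** p for w in row) ** (1.0 / p) for row in weights]
    weakest = set(sorted(range(len(weights)), key=lambda i: norms[i])[:num_rows])
    return [[0.0] * len(row) if i in weakest else row[:]
            for i, row in enumerate(weights)]


W = [[3.0, -2.0],
     [1.0, -5.0]]
elementwise = prune_elementwise(W, sparsity=0.5)   # keeps 3.0 and -5.0
rowwise = prune_rows(W, num_rows=1, p=1)           # row 0 has the smaller L1 norm (5 vs 6)
```

Element-wise pruning keeps the two largest weights wherever they sit, while row-wise pruning removes a whole row even though it contains the second-largest weight.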

🔍 Scaling-based Pruning

Popular for filter pruning in convolutional layers, this method assigns a learnable scaling factor to each filter, multiplying it with the filter outputs. During training, the network learns to assign small factors to less important filters, which are then pruned.

- Reusing Batch Normalization Parameters: The learnable scale of an existing batch normalization layer can serve as the scaling factor, so no extra parameters need to be added.

🧠 Second-Order-based Pruning

This method estimates the loss increase caused by pruning with a Taylor series expansion and removes the weights whose removal increases the loss the least. The “Optimal Brain Damage” paper makes three simplifying assumptions:

- The loss function is nearly quadratic, allowing the neglect of higher-order terms.

- The network has converged, making first-order terms negligible.

- Errors from pruning individual weights are assumed independent, simplifying the expression.

Challenge: Computing the Hessian matrix (second-order derivatives) is expensive and requires approximations.
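To make the three assumptions concrete, here is the expansion written out. The notation is my own choice for this note: \(g_i\) is the gradient of the loss with respect to weight \(w_i\), \(h_{ij}\) is an entry of the Hessian, and \(\delta w_i\) is the change applied to \(w_i\).

\[
\delta L = \sum_i g_i\,\delta w_i + \frac{1}{2}\sum_i h_{ii}\,\delta w_i^2 + \frac{1}{2}\sum_{i \neq j} h_{ij}\,\delta w_i\,\delta w_j + O(\lVert \delta W \rVert^3)
\]

The quadratic assumption drops the last term, convergence drops the first-order term, and independence drops the cross terms. Pruning weight \(w_i\) means \(\delta w_i = -w_i\), which leaves a per-weight importance score:

\[
\delta L_i \approx \frac{1}{2}\,h_{ii}\,w_i^2
\]

Weights with the smallest score are pruned first. Only the diagonal of the Hessian is needed, which is what makes the approximation tractable.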

🧩 Pruning Neurons

A coarse-grained pruning method that removes an entire neuron, thereby eliminating all weights associated with it.

- In linear layers: A row is removed from the weight matrix.

- In convolutional layers: An entire kernel for a specific output channel is removed.
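A minimal sketch of neuron removal for two stacked linear layers, assuming weights are stored as nested lists with shape (outputs x inputs). The helper name `prune_neuron` and the example values are hypothetical. Besides the row in the first layer, the matching input column of the following layer also becomes unnecessary:

```python
def prune_neuron(W1, b1, W2, idx):
    """Remove hidden neuron `idx` from a two-layer MLP.

    W1: hidden x in, b1: hidden, W2: out x hidden (plain nested lists).
    """
    W1_p = [row for i, row in enumerate(W1) if i != idx]   # drop the neuron's row
    b1_p = [b for i, b in enumerate(b1) if i != idx]       # and its bias
    # The next layer no longer receives this neuron's output, so drop that column.
    W2_p = [[w for j, w in enumerate(row) if j != idx] for row in W2]
    return W1_p, b1_p, W2_p


W1 = [[0.2, -0.1], [0.0, 0.01], [0.7, 0.3]]   # 3 hidden neurons, 2 inputs
b1 = [0.1, 0.0, -0.2]
W2 = [[1.0, 0.5, -1.0]]                        # 1 output, 3 hidden inputs
W1_p, b1_p, W2_p = prune_neuron(W1, b1, W2, idx=1)
```

The result is a genuinely smaller dense network, which is why this granularity speeds up inference without any sparse kernels.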

🔢 Percentage-of-Zeros-based Pruning

This method utilizes sparsity from activation functions like ReLU, which outputs zero for negative inputs. It calculates the Average Percentage of Zeros (APoZ) in the activation output for each neuron across a batch. Neurons with a higher APoZ are pruned.
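The APoZ computation can be sketched in a few lines. This assumes the activations of one layer were already collected after ReLU as a batch-by-neuron nested list; the function name `apoz` and the sample values are illustrative only.

```python
def apoz(activations):
    """Average Percentage of Zeros per neuron.

    activations[b][n] is the post-ReLU output of neuron n for sample b.
    """
    batch = len(activations)
    num_neurons = len(activations[0])
    return [sum(1 for sample in activations if sample[n] == 0) / batch
            for n in range(num_neurons)]


acts = [[0.0, 1.2, 0.0],
        [0.0, 0.0, 0.7],
        [0.5, 0.3, 0.0],
        [0.0, 2.0, 0.4]]
scores = apoz(acts)
# The neuron that is zero most often is the first pruning candidate.
prune_first = max(range(len(scores)), key=lambda n: scores[n])
```

In practice the score would be averaged over many batches (and over spatial positions for convolutional layers) before ranking neurons.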

📉 Regression-based Pruning

This technique focuses on minimizing the reconstruction error of a layer’s output instead of minimizing the overall loss function error. It finds a pruned weight matrix that, when multiplied with input activations, produces an output close to the original.

This is useful for pruning large language models, where backpropagating errors through the entire network is computationally expensive.
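A toy sketch of the reconstruction-error criterion, assuming a single linear layer computed as X times W (inputs x outputs here) and a small set of calibration activations X. The helper names and numbers are invented for illustration. It compares two candidate prunings by how much they change the layer output:

```python
def matmul(A, B):
    """Plain-Python matrix product of A (n x k) and B (k x m)."""
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*B)] for row in A]


def reconstruction_error(X, W, W_pruned):
    """Squared error between the original and pruned layer outputs."""
    Y, Y_p = matmul(X, W), matmul(X, W_pruned)
    return sum((y - yp) ** 2 for r, rp in zip(Y, Y_p) for y, yp in zip(r, rp))


X = [[10.0, 0.1],    # calibration activations: 2 samples x 2 input features
     [8.0, 0.2]]
W = [[0.5],          # 2 inputs x 1 output
     [2.0]]
err_drop_small_weight = reconstruction_error(X, W, [[0.0], [2.0]])
err_drop_large_weight = reconstruction_error(X, W, [[0.5], [0.0]])
```

In this example removing the larger weight changes the output far less, because its input activations are small. That is the kind of information a purely magnitude-based criterion cannot see, and it requires only a forward pass through one layer.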

🔑 Choosing the Right Criterion

The optimal pruning criterion depends on factors like network architecture, task requirements, computational resources, and the desired balance between accuracy and compression. Simple methods like magnitude-based pruning are often effective and efficient. Advanced techniques like second-order or regression-based pruning may achieve higher accuracy but require more computation.

Fine-tuning

After pruning, retraining the remaining weights helps recover accuracy loss and improve the model’s performance.

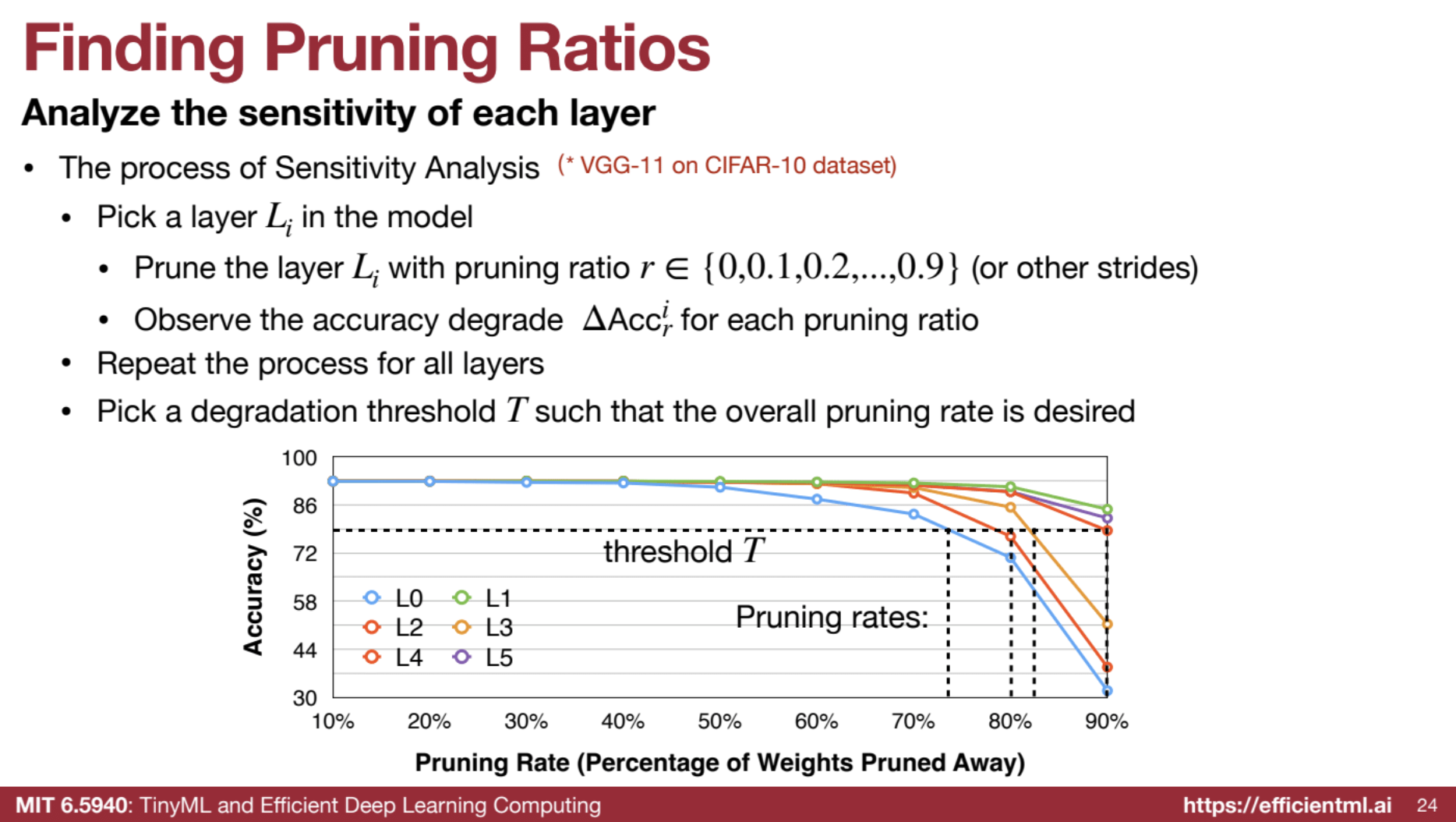

🧮 Determining Pruning Ratios

🔍 Sensitivity Analysis

Analyzing each layer’s sensitivity to pruning by observing accuracy degradation at various pruning ratios.

- Method: Choose a layer, apply a pruning ratio, and observe accuracy degradation. Repeat for each layer.

- Strategy: Less sensitive layers can be pruned more aggressively.

- Limitation: Ignores interactions between layers, which may lead to sub-optimal results.

Example: “Pick a layer in the model, prune it with a certain ratio, observe accuracy degradation, and repeat for all layers.”

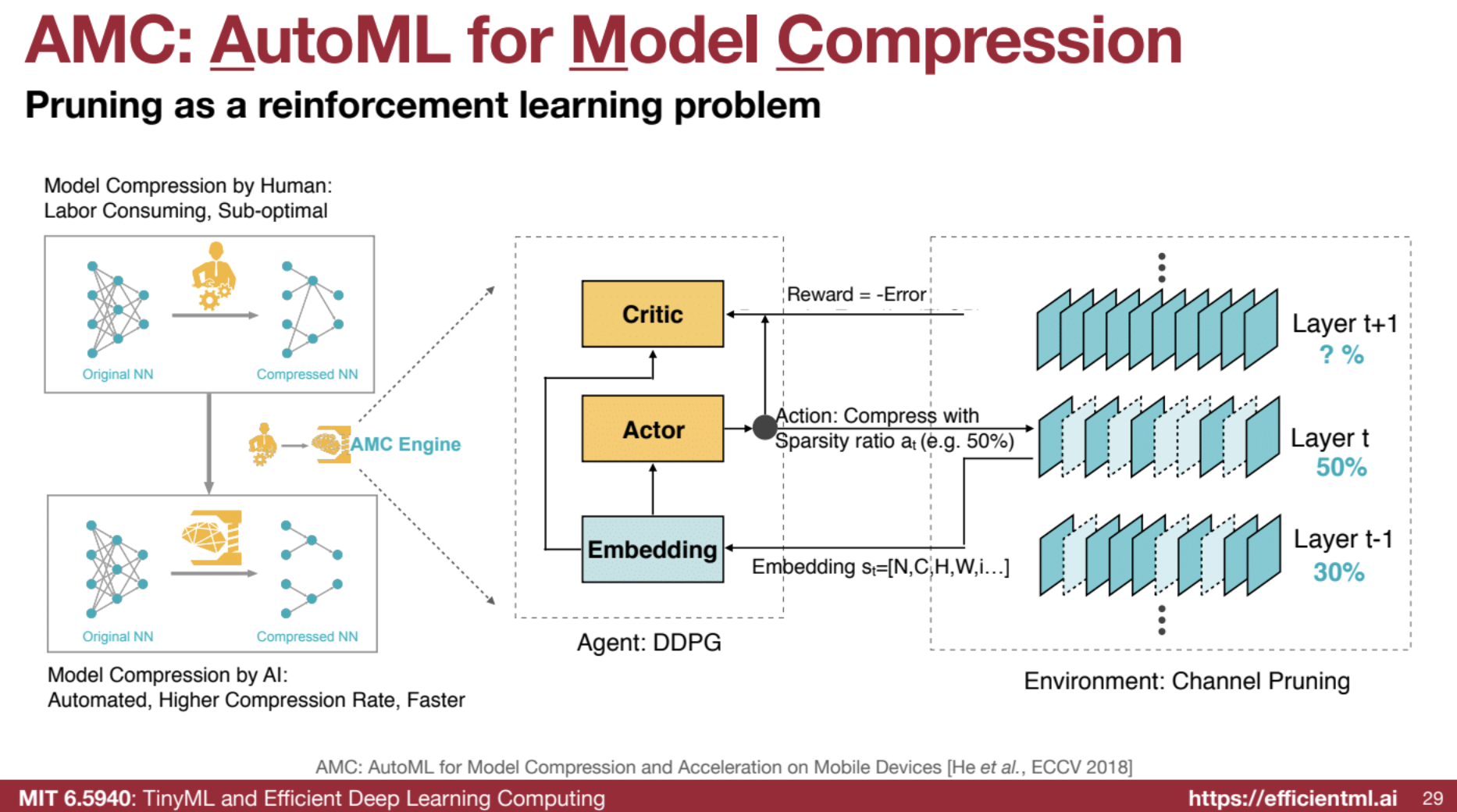
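The scan described above can be sketched as follows. Everything here is a toy: the model is a dict of flat weight lists, and `toy_evaluate` is a hypothetical stand-in for measuring validation accuracy. The final step, picking the largest ratio per layer that stays within an accuracy-drop threshold, is one simple selection rule that I am assuming for illustration.

```python
def magnitude_prune(weights, ratio):
    """Return a copy of a flat weight list with the smallest-|w| fraction zeroed."""
    k = int(len(weights) * ratio)
    drop = set(sorted(range(len(weights)), key=lambda i: abs(weights[i]))[:k])
    return [0.0 if i in drop else w for i, w in enumerate(weights)]


def sensitivity_scan(model, ratios, evaluate):
    """Prune one layer at a time (others untouched) and record accuracy per ratio."""
    curves = {}
    for name in model:
        curves[name] = []
        for r in ratios:
            trial = dict(model)                      # only layer `name` is replaced
            trial[name] = magnitude_prune(model[name], r)
            curves[name].append(evaluate(trial))
    return curves


def pick_ratios(curves, ratios, baseline, max_drop):
    """Per layer, choose the largest ratio whose accuracy drop stays within max_drop."""
    chosen = {}
    for name, accs in curves.items():
        ok = [r for r, a in zip(ratios, accs) if baseline - a <= max_drop]
        chosen[name] = max(ok) if ok else 0.0
    return chosen


model = {"conv1": [4.0, -3.0, 5.0, 6.0], "fc": [0.1, -0.2, 3.0, 4.0]}
TOTAL = sum(w * w for ws in model.values() for w in ws)


def toy_evaluate(m):
    # Hypothetical accuracy proxy: fraction of squared weight mass that survives.
    return sum(w * w for ws in m.values() for w in ws) / TOTAL


ratios = [0.25, 0.5, 0.75]
curves = sensitivity_scan(model, ratios, toy_evaluate)
chosen = pick_ratios(curves, ratios, baseline=toy_evaluate(model), max_drop=0.05)
```

Here the `fc` layer tolerates 50% pruning while `conv1` tolerates none, which is the non-uniform outcome the analysis is meant to reveal. Each layer is still scanned in isolation, so the limitation about ignored layer interactions applies to this sketch as well.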

🤖 Automated Pruning (AMC)

Uses reinforcement learning (specifically the DDPG agent) to automatically determine per-layer pruning ratios based on a target compression ratio.

- Approach: Treats pruning ratio selection as a sequential decision-making problem, maximizing reward (accuracy while minimizing FLOPs).

- Benefit: Outperforms human experts by achieving higher compression ratios while maintaining accuracy.

Example: “The state includes features like layer index, channel count, kernel size, and current FLOPs. The reward is the negative error (-Error) if the FLOP constraint is met, and minus infinity otherwise.”
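A tiny sketch of that reward, assuming it is the negative validation error when the FLOP budget is satisfied. The function name `amc_reward` and the numbers are hypothetical.

```python
import math


def amc_reward(error, flops, flops_budget):
    """Negative error when the FLOP budget is met, minus infinity otherwise."""
    return -error if flops <= flops_budget else -math.inf


within_budget = amc_reward(error=0.08, flops=4.0e8, flops_budget=5.0e8)
over_budget = amc_reward(error=0.05, flops=6.0e8, flops_budget=5.0e8)
```

The second configuration has the lower error but is rejected outright, so the agent only ever trades accuracy among configurations that satisfy the constraint.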

🔄 NetAdapt

An iterative, rule-based approach that progressively prunes layers to meet global resource constraints (e.g., latency).

- Method: In each iteration, prune every layer in turn until the target latency reduction is reached, briefly fine-tune each candidate, and keep the candidate with the highest accuracy for the next iteration.

- Benefit: Produces models with varying cost-accuracy trade-offs.

Example: “In each iteration, we aim to reduce latency by a specific amount, pruning layers based on a pre-built lookup table.”

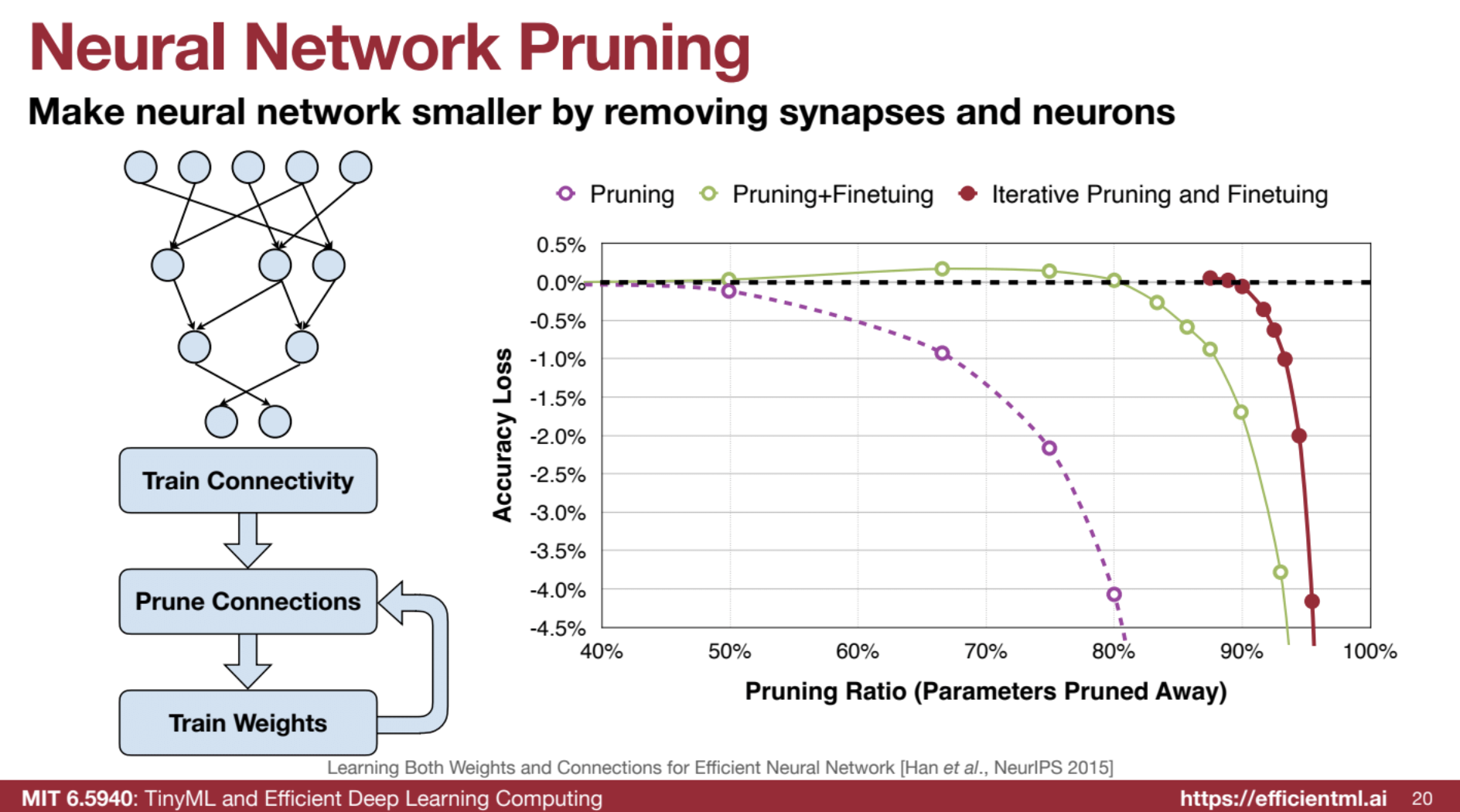

🔧 Fine-tuning and Retraining

- Iterative Pruning: Gradually increases target sparsity over multiple pruning iterations with fine-tuning, achieving better results than one-time pruning.

- Regularization: Apply L1 or L2 regularization during fine-tuning to penalize non-zero weights and push them toward zero, which encourages sparsity.

- Learning Rate Adjustment: Reducing the learning rate during fine-tuning is often essential for stability and performance.
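The iterative prune-then-fine-tune loop can be sketched like this. The linear sparsity ramp is an assumption of mine (other schedules are possible), and `toy_fine_tune` is a hypothetical placeholder for a few epochs of training at a reduced learning rate.

```python
def iterative_prune(weights, final_sparsity, steps, fine_tune):
    """Raise sparsity linearly over `steps` rounds, fine-tuning after each round."""
    n = len(weights)
    for step in range(1, steps + 1):
        target = final_sparsity * step / steps
        k = int(round(n * target))                   # weights that must be zero now
        order = sorted(range(n), key=lambda i: abs(weights[i]))
        keep = [True] * n
        for i in order[:k]:
            keep[i] = False
        weights = [w if m else 0.0 for w, m in zip(weights, keep)]
        weights = fine_tune(weights, keep)           # must leave pruned weights at zero
    return weights


def toy_fine_tune(weights, keep):
    # Hypothetical stand-in for training: nudges surviving weights, respects the mask.
    return [w * 1.1 if m else 0.0 for w, m in zip(weights, keep)]


w0 = [0.1, -0.4, 0.9, -0.2, 0.6, -0.05, 0.3, 0.8]
pruned = iterative_prune(w0, final_sparsity=0.75, steps=3, fine_tune=toy_fine_tune)
```

Sparsity goes 25%, 50%, 75% here. Already-pruned weights have magnitude zero, so they are always re-selected first and the mask only grows.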

Part II ⚙️ System and Hardware Support

🚀 Efficient Inference Engine (EIE) for Sparse, Compressed Neural Networks

The Efficient Inference Engine (EIE) is a specialized hardware accelerator designed to optimize sparse, compressed neural networks, particularly for fully-connected (FC) layers. EIE leverages weight sparsity, activation sparsity, and quantization to significantly improve computation speed, energy efficiency, and memory footprint. 📉🔋

💡 EIE Exploits Sparsity and Quantization

- Weight Sparsity: EIE skips zero weights in computations, since zero multiplied by any activation is still zero. With 90% weight sparsity, this achieves a 10x reduction in computation and a 5x reduction in memory footprint.

- Activation Sparsity: EIE benefits from activation sparsity created by ReLU, where many activations are zero. This provides a 3x computation reduction, though it does not affect memory for weights.

- Quantization: EIE stores weights in a low-precision format (e.g., 4 bits) and decodes them to 16 bits for arithmetic, giving a 4x reduction in weight memory over FP16 and 8x over FP32.

The weight memory reduction is 5x rather than 10x because the indices of the non-zero weights must also be stored. Activations are also sparse after ReLU.

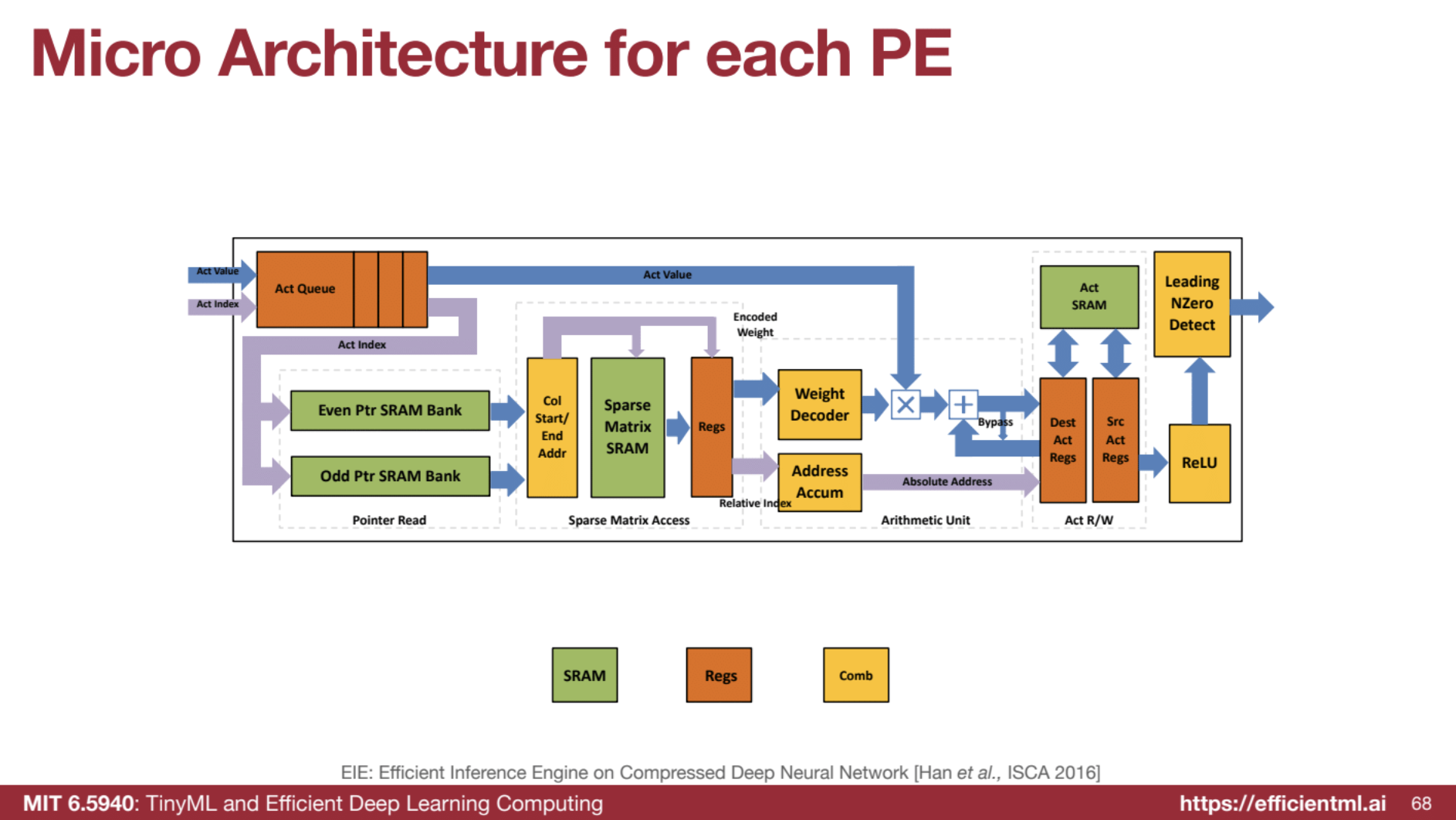

🏗️ EIE Architecture and Operation

EIE’s parallel processing architecture, with multiple processing elements (PEs), efficiently handles sparse computations:

- Data Storage: Sparse weight matrices are stored in a compressed format like CSC (Compressed Sparse Column), containing only non-zero weights and their indices.

- Computation: EIE skips zero activations and broadcasts non-zero activations to PEs for parallel multiplication with corresponding non-zero weights.

- Microarchitecture: Each PE includes an activation queue, sparse matrix access unit, weight decoder, arithmetic unit, and non-zero detection to maximize efficiency in sparse data processing.

⚙️ Step-by-Step PE Workflow

- Activation Queue (Act Queue): The Act Queue holds only non-zero activations from the previous layer, eliminating zero activations to leverage activation sparsity.

- Pointer Read: Using indices from the Act Queue, the PE fetches corresponding pointers from the sparse weight matrix’s pointer memory (Even Ptr SRAM Bank and Odd Ptr SRAM Bank), marking the start and end addresses for relevant columns in the compressed sparse matrix.

- Sparse Matrix Access: The PE accesses the sparse matrix SRAM using the obtained column addresses, retrieving only the non-zero weights associated with the current non-zero activation.

- Weight Decoder: The PE decodes 4-bit quantized weights to their 16-bit representation. This process leverages weight sharing, allowing efficient computation with low-precision storage.

- Arithmetic Unit: The decoded weight is multiplied by the non-zero activation from the Act Queue. This result is added to the previous accumulation stored in the accumulator.

- Leading Non-Zero Detection: The resulting activation passes through a non-zero detection unit, identifying non-zero outputs for storage in the Act Queue, maintaining activation sparsity across layers.

- ReLU Activation: The final activation result goes through the ReLU function, introducing non-linearity and generating activation sparsity for the next layer.

Steps:

- Act Queue: Initially empty.

- Pointer Read: The first non-zero activation is 1 at index 0, so the PE fetches pointers for column 0 of W.

- Sparse Matrix Access: The PE retrieves non-zero weights in column 0: 1 and 4.

- Weight Decoder: These weights are decoded to 16-bit values.

- Arithmetic Unit: The PE multiplies the decoded weights with activation 1 and accumulates the results.

- Leading Non-Zero Detection: The partial output is non-zero, so it’s stored in the Act Queue.

- ReLU Activation: The partial output passes through ReLU.

This process repeats for other non-zero activations in a, skipping computations for zero weights and maximizing efficiency.
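A software sketch of the same dataflow, under several simplifications: a single PE, no 4-bit weight decoding, and absolute rather than relative indices. The matrix `W` and vector `a` are made-up values chosen so that column 0 holds the non-zero weights 1 and 4, as in the walk-through; they are not the ones from the original figure.

```python
def to_csc(dense):
    """Convert a dense row-major matrix to CSC arrays (values, row_idx, col_ptr)."""
    rows, cols = len(dense), len(dense[0])
    values, row_idx, col_ptr = [], [], [0]
    for j in range(cols):
        for i in range(rows):
            if dense[i][j] != 0:
                values.append(dense[i][j])
                row_idx.append(i)
        col_ptr.append(len(values))      # end of column j, start of column j + 1
    return values, row_idx, col_ptr


def sparse_fc(values, row_idx, col_ptr, a, num_rows):
    """Compute ReLU(W @ a), touching only non-zero activations and weights."""
    acc = [0.0] * num_rows
    for j, a_j in enumerate(a):
        if a_j == 0:                     # activation sparsity: skip the whole column
            continue
        for p in range(col_ptr[j], col_ptr[j + 1]):   # pointer read: column j's span
            acc[row_idx[p]] += values[p] * a_j        # multiply-accumulate
    return [max(0.0, y) for y in acc]    # ReLU creates sparsity for the next layer


W = [[1.0, 0.0, 0.0],
     [0.0, 0.0, 2.0],
     [4.0, 0.0, 0.0],
     [0.0, 3.0, -5.0]]
a = [1.0, 0.0, 2.0]
values, row_idx, col_ptr = to_csc(W)
out = sparse_fc(values, row_idx, col_ptr, a, num_rows=len(W))
```

Only four multiply-accumulates are executed instead of twelve: column 1 is skipped because its activation is zero, and the zero weights are never stored in the first place.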

🔑 Key Points

- Sparse Efficiency: EIE’s microarchitecture is optimized for handling sparse matrices and activations.

- Compute Savings: By skipping operations with zeros, EIE significantly reduces computation time and energy.

- Maintaining Sparsity: The Act Queue and non-zero detection ensure activation sparsity is preserved across layers.

- Low Precision with Accuracy: The Weight Decoder allows low-precision weight storage without sacrificing computational accuracy.

This breakdown covers only the basics of the workflow within each PE. The actual EIE implementation involves further optimizations such as address accumulation and relative indexing.

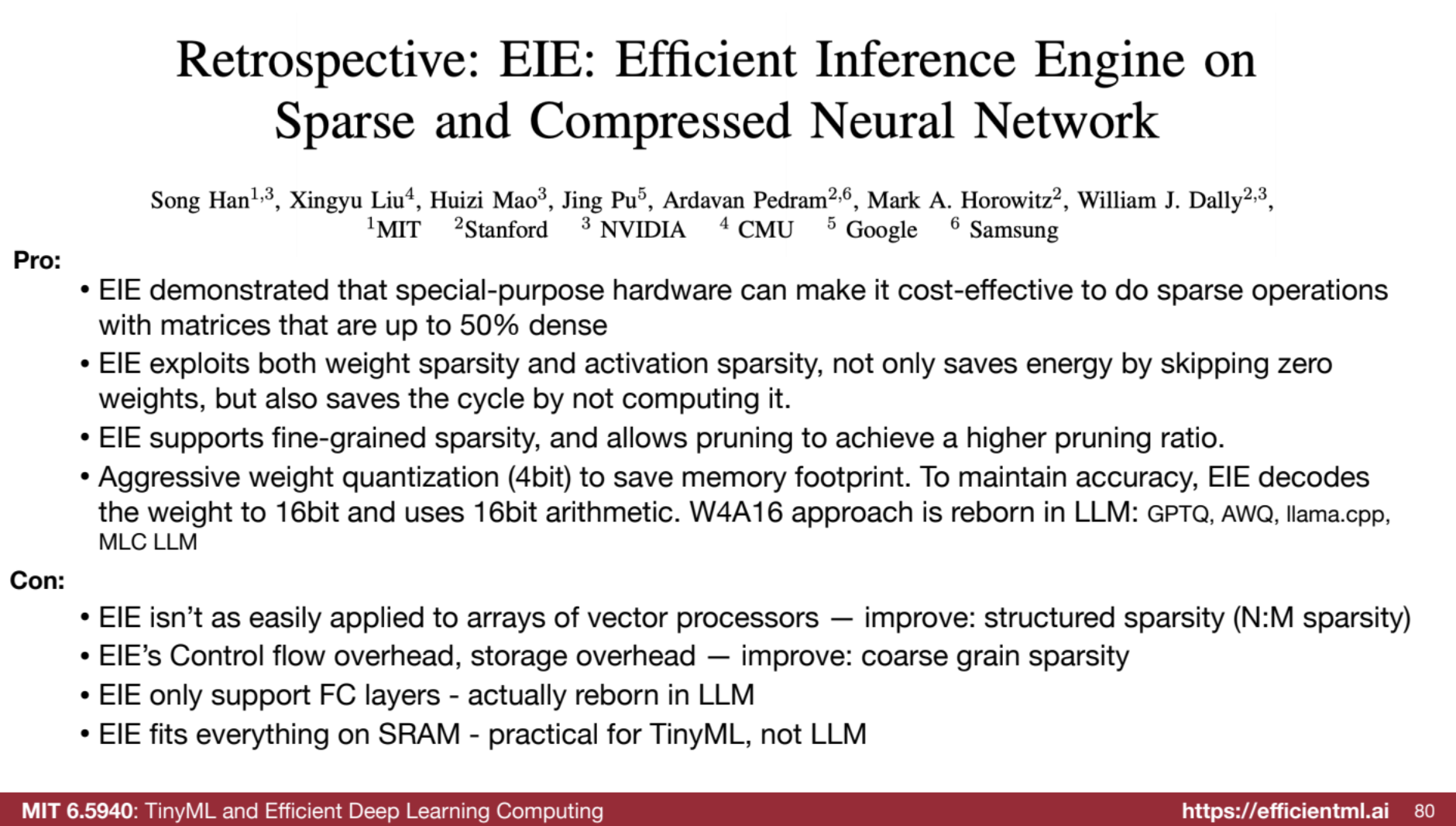

⚙️ EIE Performance and Benefits

EIE offers high throughput and energy efficiency for sparse neural networks, supports fine-grained sparsity, and enables high pruning ratios with minimal memory usage via aggressive quantization.

🚫 EIE’s Limitations

Despite its advantages, EIE has some limitations:

- Limited Applicability to Vector Processors: EIE’s design does not easily translate to vector processor arrays.

- Overhead: Control flow and storage overhead due to pointers and indices for sparse data representation.

- Support for FC Layers Only: Initially designed specifically for FC layers.

🌐 EIE’s Impact and Relevance Today

EIE pioneered specialized hardware for sparse neural networks, influencing today’s popular W4A16 (4-bit weight, 16-bit arithmetic) approach used by LLM quantization methods and runtimes such as GPTQ, AWQ, llama.cpp, and MLC LLM. Originally limited to FC layers, EIE’s relevance has grown with FC-heavy architectures in LLMs and Transformers. The work was published in 2016, yet 4-bit weight quantization remains useful for recent LLMs and retains good accuracy, because for LLMs the weights are the bottleneck.

The “lazy computing” concept from EIE has inspired ongoing research in sparsity-aware algorithms for generative AI, Transformers, video processing, and point clouds. 🔄📈

🔄 EIE in the Context of Other Sparsity Techniques

EIE focuses on weight and activation sparsity in FC layers. By contrast, NVIDIA’s Tensor Core targets structured sparsity (e.g., 2:4), while tools like TorchSparse and PointAcc optimize activation sparsity in convolutional layers. Each technique offers unique advantages tailored to specific neural network architectures and applications. 🧩

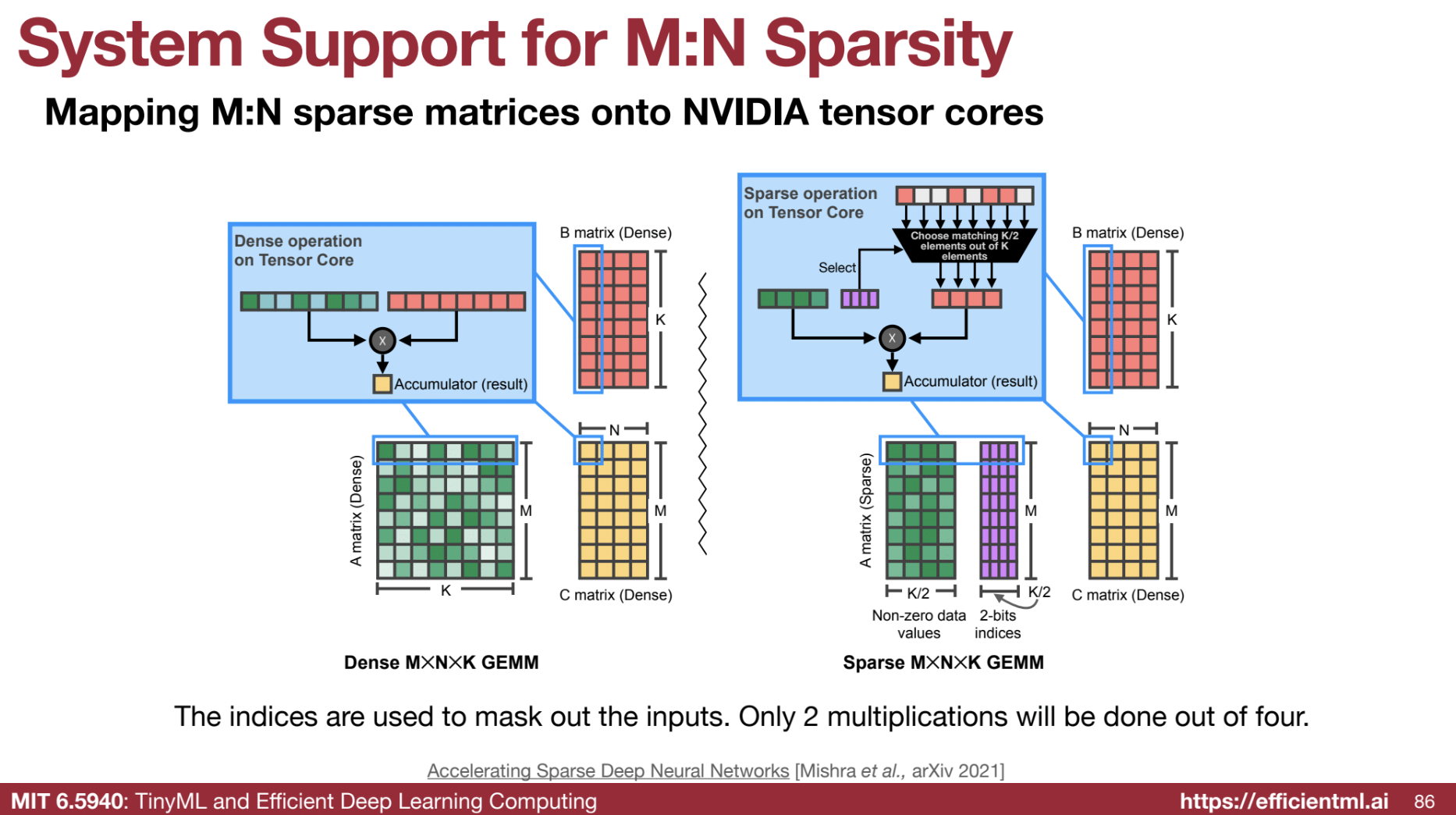

🟢 NVIDIA Tensor Cores (M:N Sparsity)

Supports structured sparsity, specifically 2:4 sparsity, for accelerated processing.

- Mechanism: Uses an index matrix to select and multiply only non-zero elements, achieving close to theoretical 2x speedup.

M:N sparsity, a form of structured sparsity, is a technique designed to accelerate neural network inference. It involves pruning the weight matrix in a structured manner, ensuring that a fixed number of weights within every block are zero. This approach enables efficient hardware implementations that enhance speed and energy efficiency.

How M:N Sparsity Works: The 2:4 Sparsity Example

A prominent example of M:N sparsity is 2:4 sparsity. In a 2:4 sparse matrix, at least 2 of every 4 consecutive elements in a row must be zero. This pattern is maintained across the entire weight matrix.

Example: An 8x4 matrix with 2:4 sparsity could look like this:

\[\begin{bmatrix} w_1 & 0 & w_2 & 0 \\ w_3 & 0 & w_4 & 0 \\ 0 & w_5 & 0 & w_6 \\ 0 & w_7 & 0 & w_8 \\ w_9 & 0 & w_{10} & 0 \\ w_{11} & 0 & w_{12} & 0 \\ 0 & w_{13} & 0 & w_{14} \\ 0 & w_{15} & 0 & w_{16} \\ \end{bmatrix}\]

Benefits of Structured Sparsity

- Storage Efficiency: Only non-zero values and their indices are stored, leading to substantial memory savings. For a 2:4 sparse matrix, the memory requirement is roughly halved compared to its dense counterpart, with a small overhead for the 2-bit indices.

- Computation Reduction: Calculations involving zero weights are entirely skipped, which theoretically allows for a 2x speedup since half the weights are zero.

- Hardware Acceleration: The regular zero patterns in M:N sparsity enable efficient hardware implementations, facilitating faster and more energy-efficient processing. For instance, NVIDIA’s Tensor Cores are designed to leverage 2:4 sparsity, resulting in significant performance improvements.

Implementing 2:4 Sparsity: Compressed Matrix and Index Matrix

To implement 2:4 sparsity, a structured-sparse matrix format is utilized:

- Compressed Matrix: All non-zero weight values are stored contiguously in memory.

- Index Matrix: A separate matrix, the index matrix, contains 2-bit indices that indicate the original location of each non-zero element within its group of four.

Example: For the matrix above, each row of four elements compresses to its two non-zero values plus two 2-bit indices:

Compressed Matrix (8x2):

\[\begin{bmatrix} w_1 & w_2 \\ w_3 & w_4 \\ w_5 & w_6 \\ w_7 & w_8 \\ w_9 & w_{10} \\ w_{11} & w_{12} \\ w_{13} & w_{14} \\ w_{15} & w_{16} \\ \end{bmatrix}\]

Index Matrix (8x2, each element is 2 bits):

\[\begin{bmatrix} 00 & 10 \\ 00 & 10 \\ 01 & 11 \\ 01 & 11 \\ 00 & 10 \\ 00 & 10 \\ 01 & 11 \\ 01 & 11 \\ \end{bmatrix}\]

Sparse Matrix Multiplication with 2:4 Sparsity

During matrix multiplication, the index matrix acts as a selector to fetch the correct elements from the dense input matrix:

- For each non-zero weight in the compressed matrix, its corresponding 2-bit index selects the matching element from the input matrix.

- Multiplication is executed only between the non-zero weight and the selected input element.

- The results are accumulated to form the output matrix.
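The selection step can be mimicked in software. This sketch handles one weight row and one input vector; the names `compress_2_4` and `sparse_dot` are hypothetical, and on real hardware the selection happens inside the Tensor Core rather than in a loop.

```python
def compress_2_4(row):
    """Keep the 2 largest-magnitude values in each group of 4.

    Returns the kept values and their positions (0..3) inside each group,
    i.e. one row of the compressed matrix and one row of the index matrix.
    """
    values, indices = [], []
    for g in range(0, len(row), 4):
        group = row[g:g + 4]
        top2 = sorted(range(4), key=lambda i: abs(group[i]), reverse=True)[:2]
        for i in sorted(top2):               # keep original left-to-right order
            values.append(group[i])
            indices.append(i)
    return values, indices


def sparse_dot(values, indices, x):
    """Dot product using only the stored values; indices select the inputs."""
    total = 0.0
    for k, (w, idx) in enumerate(zip(values, indices)):
        group_start = (k // 2) * 4           # two stored values per group of four inputs
        total += w * x[group_start + idx]
    return total


row = [3.0, 0.0, -1.0, 0.0, 0.0, 2.0, 0.0, -4.0]   # already 2:4 sparse
x = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 7.0, 8.0]
values, indices = compress_2_4(row)
result = sparse_dot(values, indices, x)
dense_result = sum(w * xi for w, xi in zip(row, x))
```

Four multiplications replace eight, and the result matches the dense dot product. If the row were not already 2:4 sparse, `compress_2_4` would also act as the pruning step by discarding the two smallest values in each group.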

NVIDIA Tensor Cores and 2:4 Sparsity

NVIDIA’s Ampere architecture GPUs feature Tensor Cores explicitly designed to accelerate 2:4 sparse matrix multiplication. These cores efficiently perform operations on the compressed matrix format, achieving nearly a 2x speedup for large matrices.

Advantages and Limitations of M:N Sparsity

Advantages:

- Significant speed and energy savings due to reduced computations and memory accesses.

- Improved hardware utilization and efficiency, particularly with specialized hardware support.

- Comparable accuracy to dense models for many tasks, especially when combined with quantization.

Limitations:

- The speedup may be less significant for smaller matrices.

- Specific hardware support is necessary to fully exploit the benefits.

- It may not be suitable for all types of neural networks or tasks.

Conclusion

M:N sparsity, particularly 2:4 sparsity, represents a promising approach to accelerate neural network inference. By utilizing structured pruning and dedicated hardware support, it offers substantial benefits in speed, energy consumption, and memory efficiency while maintaining accuracy. As the demand for efficient AI inference increases, techniques like M:N sparsity are poised to gain further traction in future hardware and software designs.

🖥️ TorchSparse & PointAcc

Software and hardware optimizations for efficient sparse convolution operations. This section only summarizes the lectures; a separate post may be needed for a deep dive.

- TorchSparse: Uses adaptive grouping to optimize sparse matrix multiplication on GPUs.

- PointAcc: Speeds up sparse convolution by finding input-output-weight mappings using merge sort and intersection operations.

Example: “Merge sort is used to find mappings in sparse convolution.”

Most Important Ideas/Facts:

- MLPerf Benchmark Results: Pruning has demonstrated significant speedups in large language models. NVIDIA achieved a 2.5x speedup on Llama 2 70B by applying depth and width pruning.

- Memory vs. Computation Cost: Memory access is significantly more energy-intensive than arithmetic operations, making data movement a bottleneck. Pruning helps by reducing the amount of memory accessed.

- Iterative Pruning and Fine-tuning: This approach allows for more aggressive pruning without significant accuracy degradation.

- Hardware Support for Sparsity: NVIDIA’s Ampere GPU architecture supports 2:4 sparsity, enabling 2x theoretical peak performance and demonstrating measurable speedups on models like BERT.

- Non-uniform Pruning Ratio: Different layers in a neural network may have varying levels of redundancy, suggesting the need for a non-uniform pruning ratio for optimal performance.

Quotes:

- “Memory is very expensive; computation is much cheaper. Memory movement is more than two orders of magnitude more costly than arithmetic operations… so data movement is much more expensive. To make deep learning more efficient, we want to reduce the amount of memory, reduce the model size, and reduce the activation size.”

- “The open division submission on Llama 2 70B: 2.5x speedup while maintaining 99% accuracy.”

- “Pruning can be performed at different granularities, from structured to non-structured.”

- “Magnitude-based pruning considers weights with larger absolute values as more important than other weights.”

- “2:4 sparsity in A100 GPU: 2X peak performance, 1.5X measured BERT speedup.”

- On the importance of laziness in efficient computing: “The first principle of efficient computing is to be lazy… try to avoid the work… quickly reject the work if it’s zero… or use a fewer precision, a fewer number of bits to represent that.”

- On the explainability of automated pruning: “Crests: our RL agent automatically learns 3x3 convolutions have more redundancy and can be pruned more.”

- On the resurgence of weight-only quantization in large language models: “EIE can support… aggressive weight quantization… and actually weight-only quantization like four-bit quantization to save memory footprint is still widely used these days to maintain accuracy.”

Key Takeaways:

- Pruning is a powerful technique for improving the efficiency of neural networks, reducing their size, memory footprint, and computational requirements.

- Different pruning strategies offer varying levels of flexibility and effectiveness depending on the specific model and task.

- Fine-tuning is crucial for recovering accuracy after pruning and achieving the best possible performance.

- Advancements in hardware are increasingly incorporating support for sparse models, further enhancing the potential of pruning for efficient deep learning.

📝 Quiz

Instructions: Answer each question in 2-3 sentences.

- What is the fundamental goal of neural network pruning, and what are the potential benefits?

- Explain the difference between fine-grained pruning and channel pruning. What are the trade-offs between these approaches?

- Describe how magnitude-based pruning is used to determine which weights to remove from a neural network.

- Why is non-uniform pruning generally preferred over uniform pruning? What concept is used to determine optimal per-layer pruning ratios?

- Briefly explain how sensitivity analysis can be used to guide the selection of per-layer pruning ratios.

- What are the limitations of using sensitivity analysis to determine pruning ratios? How can automated methods like AMC (AutoML for Model Compression) address these limitations?

- Describe the key features of NetAdapt as a rule-based method for determining per-layer pruning ratios.

- What is the role of fine-tuning in the pruning process, and what are some best practices for effective fine-tuning?

- What is the core idea behind the Efficient Inference Engine (EIE) for accelerating sparse neural networks? What types of sparsity does it leverage?

- How does the concept of “being lazy” apply to the design of efficient hardware and algorithms for sparse neural networks?

📝 Quiz Answer Key

- Neural network pruning aims to reduce the size of a network by removing unnecessary weights or neurons. This leads to benefits like reduced computational cost, lower memory footprint, and faster inference speeds, making models suitable for resource-constrained devices.

- Fine-grained pruning allows for the removal of individual weights, offering maximum flexibility but resulting in an irregular sparsity pattern that’s difficult to accelerate with hardware. Channel pruning removes entire channels, leading to more structured sparsity that’s easier to accelerate but offers less flexibility.

- Magnitude-based pruning assumes that weights with larger magnitudes contribute more significantly to the network’s performance. Weights with smaller magnitudes are deemed less important and are pruned away, creating sparsity.

- Non-uniform pruning, where each layer has a tailored pruning ratio, often yields a better accuracy-latency trade-off than uniformly pruning all layers at the same rate. Sensitivity analysis is employed to find optimal per-layer ratios.

- Sensitivity analysis involves systematically pruning each layer with varying ratios and observing the resulting accuracy degradation. Layers exhibiting greater sensitivity are pruned less, while more robust layers can tolerate higher pruning ratios.

- Sensitivity analysis considers layers independently, neglecting their interactions, which may lead to sub-optimal results. AMC utilizes reinforcement learning to explore the space of pruning ratios across all layers simultaneously, learning the optimal configuration to maximize accuracy under constraints.

- NetAdapt iteratively prunes layers to meet a global latency constraint. It involves pruning each layer to achieve a target latency reduction, short-term fine-tuning to assess accuracy, and selecting the layer with the least impact on accuracy for actual pruning.

- Fine-tuning is essential after pruning to help the network recover accuracy lost due to the removal of weights. Techniques like reduced learning rates, iterative pruning, and regularization help refine the pruned network and achieve better performance.

- EIE leverages both weight sparsity (static zeros in the weight matrix) and activation sparsity (dynamic zeros arising from ReLU activation). It exploits these sparsities to avoid unnecessary computations and memory accesses, achieving significant speedups and energy efficiency.

- “Being lazy” in efficient sparse neural network design refers to avoiding redundant computations and memory accesses. Strategies include skipping operations involving zeros, using lower precision data types, and delaying computation where possible to minimize overhead.

📄 Essay Questions

- Discuss the various pruning granularities (e.g., fine-grained, vector-level, kernel-level, channel-level) and their respective advantages and disadvantages in terms of flexibility, regularity, and hardware acceleration.

- Compare and contrast learning-based methods (AMC) with rule-based methods (NetAdapt, sensitivity analysis) for determining per-layer pruning ratios in neural network compression. Discuss the trade-offs between optimality, automation, and computational cost.

- Explain the challenges of efficiently implementing sparse neural networks on hardware. Discuss different hardware architectures and approaches (EIE, NVIDIA Tensor Cores, specialized accelerators) used to address these challenges and accelerate sparse computations.

- Describe how sparsity and quantization can be combined to achieve even greater compression and acceleration of neural networks. Discuss the potential benefits and challenges of this combined approach.

- Explore the future directions and potential applications of sparse neural networks. Discuss how emerging trends in hardware design and algorithms are shaping the evolution of sparse neural network architectures and their use in various domains.

📚 Glossary of Key Terms

| Term | Definition |
|---|---|
| Pruning | The process of removing unnecessary weights or neurons from a neural network to reduce its size and complexity. |
| Sparsity | The presence of a significant number of zero values in a matrix or tensor, leading to reduced computation and memory requirements. |
| Fine-grained Pruning | Removing individual weights from a neural network, providing high flexibility but leading to irregular sparsity. |
| Channel Pruning | Removing entire channels of weights, producing more structured sparsity that is easier to accelerate with hardware. |
| Magnitude-Based Pruning | A criterion for selecting weights to prune based on the assumption that weights with smaller magnitudes are less important. |
| Non-uniform Pruning | Pruning each layer of a network with a different ratio, leading to a better accuracy-latency trade-off compared to uniform pruning. |
| Sensitivity Analysis | A technique for determining the impact of pruning on each layer’s accuracy by systematically pruning with varying ratios. |
| AMC (AutoML for Model Compression) | A reinforcement learning-based method for automatically finding optimal pruning ratios for each layer. |
| NetAdapt | A rule-based iterative method for progressively pruning layers to meet a target latency constraint. |
| Fine-tuning | The process of retraining a pruned neural network to recover accuracy lost due to weight removal. |
| Efficient Inference Engine (EIE) | A hardware accelerator designed to efficiently execute sparse neural networks, leveraging both weight and activation sparsity. |
| Weight Sparsity | The presence of static zero values in the weight matrix of a neural network. |
| Activation Sparsity | The presence of dynamic zero values in the activations of a neural network, often arising from ReLU activation. |
| Quantization | Reducing the precision of numeric data types used in neural networks to decrease memory footprint and accelerate computations. |
| NVIDIA Tensor Cores | Specialized hardware units in NVIDIA GPUs designed to accelerate matrix multiplications, supporting structured sparsity patterns like 2:4 sparsity. |
| TorchSparse | A software library for efficient sparse convolutions on GPUs, supporting various sparse formats and algorithms. |
| PointAcc | A specialized hardware accelerator for accelerating sparse convolutions commonly used in point cloud processing. |
| Being Lazy | A design principle for efficient sparse neural network systems that emphasizes avoiding unnecessary computations and memory accesses. |