Basics of Neural Networks

🌟 Main Themes

- The growing computational demand of deep learning models is outpacing hardware advancements, creating a strong need for efficient deep learning techniques.

- Understanding the basic building blocks of neural networks and their associated efficiency metrics is essential for designing optimized models.

- Optimizing memory usage and data movement is paramount, as memory access is significantly more energy-intensive than computation.

1. Deep Learning’s Computational Demand

- The size of deep learning models is increasing exponentially, surpassing hardware capability growth (Moore’s Law).

- This gap necessitates efficient techniques like model compression and acceleration to bridge the computation supply-demand divide.

2. Neural Network Terminology

- Neurons/Features/Activations: Interchangeable terms representing a neuron’s output.

- Synapses/Weights/Parameters: Learnable parameters controlling the connection strength between neurons.

🌐 The Efficiency of Wide and Shallow Networks

Wide and shallow neural networks can be more hardware-efficient compared to narrow and deep networks with the same number of parameters. This efficiency stems from the way computations are structured and mapped onto hardware like GPUs.

🔍 Breakdown of the Reasons:

- Kernel Calls: Each layer typically maps to one or more GPU kernel launches, and every launch carries overhead for setting up and starting the computation. For the same number of parameters, a wide and shallow network has fewer layers and does more parallel work per launch, so it needs fewer “kernel calls” and spends less time on launch overhead.

- Thread Utilization: GPUs excel at parallel processing, utilizing numerous threads to handle computations. Wider layers provide more work for these threads to execute concurrently, leading to better utilization of the GPU’s processing power. Narrower layers, in contrast, might leave many threads idle, underutilizing the hardware’s capabilities.

⚖️ Efficiency vs. Accuracy Trade-off

There is also a trade-off between hardware efficiency and model accuracy. While wide and shallow networks might be more computationally friendly, deep networks generally achieve higher accuracy. This is likely due to their ability to learn more complex, hierarchical representations of data through multiple layers of non-linear transformations.

Thus, designing neural network architectures involves a careful balancing act between efficiency and accuracy. The optimal choice depends on the specific application and the available hardware resources.

3. Popular Neural Network Layers & Efficiency

- Fully Connected Layer: Connects every output neuron to all input neurons.

- Convolution Layer: Connects output neurons only to inputs within a receptive field, enabling weight sharing and reducing parameter count.

- Grouped Convolution Layer: Divides channels into groups, reducing computational cost.

- Depthwise Convolution Layer: Each output channel connects to a single input channel for high efficiency. This greatly reduces the number of weights, and it has been widely used in MobileNet-style architectures since around 2015-2016.

- Pooling Layer: Downsamples feature maps, reducing computational and memory requirements.

- Normalization Layer: Stabilizes training by normalizing features, often absorbing quantization operations for efficiency.

- Activation Functions: Trade-offs between accuracy, sparsity, quantization efficiency, and hardware implementation ease.

- Transformers: The attention operation itself has no learnable parameters (the learnable weights sit in the projections that produce Q, K, and V), and it can be optimized with techniques like sparse attention and FlashAttention.

4. 📏 Dimensions of Popular Neural Network Layers

The lecture provides a detailed breakdown of dimensions for various neural network layers. Understanding these dimensions is crucial for calculating efficiency metrics such as the number of parameters, model size, and computational complexity. Here’s a layer-by-layer explanation:

(1). Fully-Connected Layer (Linear Layer)

- Input Features (X): `(n, ci)`

  - `n` is the batch size, representing the number of input samples processed simultaneously.

  - `ci` is the number of input channels, representing the dimensionality of each input sample.

- Output Features (Y): `(n, co)`

  - `co` is the number of output channels, representing the dimensionality of each output sample.

- Weights (W): `(co, ci)`

  - This matrix connects each input channel to each output channel.

- Bias (b): `(co,)`

  - A bias term added to each output channel.

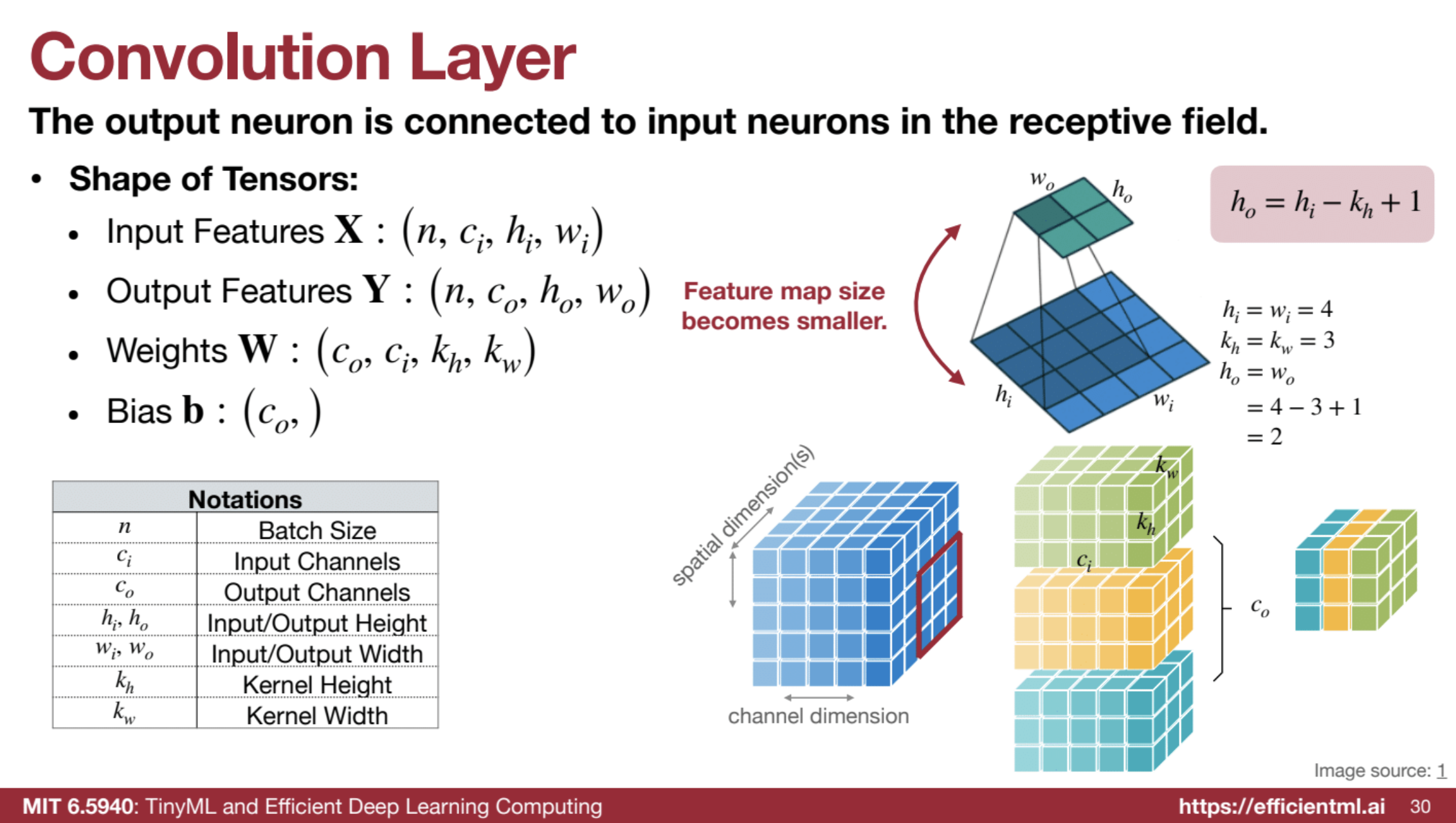
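As a rough illustration of how the dimensions above translate into efficiency numbers, the sketch below collects the shapes, parameter count, and MAC count of one fully-connected layer. The function name `linear_layer_summary` and the example sizes are hypothetical and only chosen for illustration.

```python
def linear_layer_summary(n: int, ci: int, co: int) -> dict:
    """Shapes, parameter count, and MACs for a layer computing Y = X W^T + b."""
    return {
        "X": (n, ci),            # input features
        "W": (co, ci),           # weights
        "b": (co,),              # bias
        "Y": (n, co),            # output features
        "params": co * ci + co,  # all weights plus one bias per output channel
        "macs": n * co * ci,     # one MAC per (sample, output channel, input channel)
    }


# Hypothetical sizes: a batch of 32 samples, 512 inputs, 10 outputs.
summary = linear_layer_summary(n=32, ci=512, co=10)
print(summary)
```

Note that the batch size changes the activation shapes and the MAC count, but not the number of parameters.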

(2). Convolution Layer (Conv)

- Input Features (X): `(n, ci, hi, wi)`

  - `n` is the batch size.

  - `ci` is the number of input channels.

  - `hi` and `wi` are the height and width of the input feature map, respectively.

- Output Features (Y): `(n, co, ho, wo)`

  - `co` is the number of output channels.

  - `ho` and `wo` are the height and width of the output feature map, respectively.

- Weights (W): `(co, ci, kh, kw)`

  - `co` is the number of output channels (kernels).

  - `ci` is the number of input channels.

  - `kh` and `kw` are the kernel height and width, respectively.

- Bias (b): `(co,)`

  - A bias term added to each output channel.

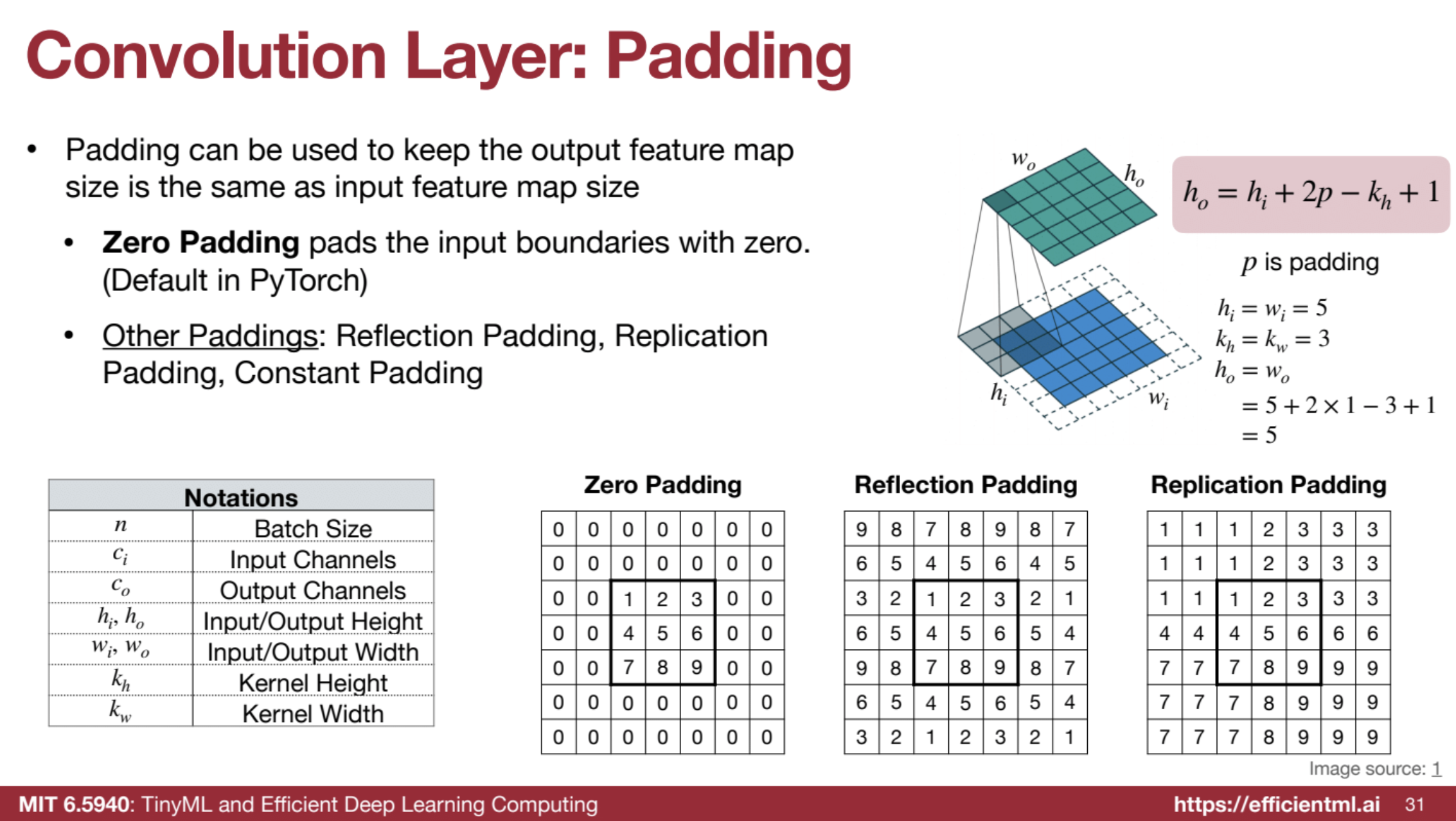

Zero padding (the default padding mode in PyTorch) can be used so that the output feature map has the same spatial dimensions as the input feature map. Other padding techniques include reflection padding and replication padding.

Important Relationships:

- Output Feature Map Size:

  - `ho = (hi + 2p - kh) / s + 1`

  - `wo = (wi + 2p - kw) / s + 1`

  - Where `p` is the padding, `s` is the stride, `kh` is the kernel height, and `kw` is the kernel width.
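The output-size relationship can be checked with a few lines of Python. This is a minimal sketch that assumes the division is rounded down when it is not exact (the convention common frameworks use); the helper name `conv_output_size` is made up for this example.

```python
def conv_output_size(size_in: int, kernel: int, padding: int = 0, stride: int = 1) -> int:
    """Output height or width of a convolution: (in + 2p - k) // s + 1."""
    return (size_in + 2 * padding - kernel) // stride + 1


# A 3x3 kernel with padding 1 and stride 1 keeps the spatial size.
same = conv_output_size(32, kernel=3, padding=1, stride=1)

# The same kernel with stride 2 halves the spatial size.
halved = conv_output_size(32, kernel=3, padding=1, stride=2)

print(same, halved)
```

The same helper applies to height and width independently, so non-square inputs or kernels only need two calls.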

🧠 Receptive Fields, Deep CNNs, and Transformer Advantages

CNNs need large receptive fields to capture context in images, like recognizing a “car” by understanding relationships between parts (wheels, windows) and context (road). Larger receptive fields help CNNs capture this broader context.

To achieve this, CNNs stack multiple layers to progressively expand the receptive field, with each layer adding complexity and abstraction.

Formula for CNN Receptive Field:

Receptive Field Size = L * (k - 1) + 1

- L: number of layers

- k: kernel size

Challenges with Deep CNNs:

- Computational Complexity: More layers = slower inference and higher energy use.

- Memory Demand: Deep networks store many activations, needing substantial memory.

- Vanishing Gradients: Training becomes difficult as gradients shrink through deep layers.

Techniques to Address These Issues:

- Strided Convolutions: Downsample feature maps, increasing receptive field without extra layers.

- Dilated Convolutions: Expand receptive field with gaps in the kernel, minimizing parameter increase.

- Skip Connections: Allow signals to bypass layers, helping with training deep networks.
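To see how stride and dilation enlarge the receptive field, the sketch below uses the standard layer-by-layer recurrence: each layer adds `dilation * (k - 1)` steps, scaled by the product of all earlier strides. With stride 1 and no dilation it reduces to `L * (k - 1) + 1`. The function name and the example layer stacks are illustrative assumptions.

```python
def receptive_field(layers):
    """Receptive field of a stack of conv layers.

    layers: list of (kernel_size, stride, dilation) tuples, input to output.
    """
    rf = 1    # a single input pixel before any layer
    jump = 1  # spacing, in input pixels, between neighbouring outputs so far
    for kernel, stride, dilation in layers:
        rf += dilation * (kernel - 1) * jump
        jump *= stride
    return rf


plain = receptive_field([(3, 1, 1)] * 3)                        # three 3x3 convs
strided = receptive_field([(3, 2, 1), (3, 2, 1), (3, 1, 1)])    # two stride-2 layers
dilated = receptive_field([(3, 1, 1), (3, 1, 2), (3, 1, 4)])    # dilation 1, 2, 4
print(plain, strided, dilated)
```

All three stacks have three layers and the same number of weights per layer, yet the strided and dilated versions see roughly twice as much of the input.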

Transformers as an Alternative:

- Global Receptive Field: Each element can attend to any other, capturing long-range dependencies directly.

- Efficiency: Transformers often outperform deep CNNs with fewer layers, offering improved computational and memory efficiency.

In summary, deep CNNs provide large receptive fields but at a computational cost. Transformers offer a global receptive field inherently, often making them a more efficient choice for tasks requiring long-range dependency modeling.

(3). Grouped Convolution Layer

- Input Features (X), Output Features (Y), and Bias (b): The dimensions remain the same as the standard convolution layer.

- Groups (g): This parameter determines the number of groups the input and output channels are divided into.

- Weights (W): `(g · co/g, ci/g, kh, kw)`. The key difference is that the weight tensor is divided into `g` groups, each responsible for convolving a subset of `ci/g` input channels to a subset of `co/g` output channels, so the number of weights shrinks by a factor of `g`.

(4). Depthwise Convolution Layer

- This is a special case of grouped convolution where the number of groups (`g`) is equal to the number of input channels (`ci`) and output channels (`co`).

- Weights (W): `(c, kh, kw)`

  - Where `c` is the number of channels, which is the same for both input and output.
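The effect of grouping on the weight count can be sketched as follows: each output channel only sees `ci / g` input channels, so standard, grouped, and depthwise convolution differ only in the value of `g`. The function name and the 64-channel example are assumptions made for illustration; bias terms are left out.

```python
def conv_weight_count(ci: int, co: int, kh: int, kw: int, groups: int = 1) -> int:
    """Number of weights in a (grouped) convolution, excluding bias."""
    if ci % groups or co % groups:
        raise ValueError("ci and co must both be divisible by groups")
    return co * (ci // groups) * kh * kw


standard = conv_weight_count(64, 64, 3, 3)              # g = 1
grouped = conv_weight_count(64, 64, 3, 3, groups=4)     # g = 4
depthwise = conv_weight_count(64, 64, 3, 3, groups=64)  # g = ci = co
print(standard, grouped, depthwise)
```

Going from standard to depthwise convolution here cuts the weights by a factor of 64, which is why depthwise layers are usually paired with 1x1 convolutions that mix information across channels.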

(5). Pooling Layer

- Input Feature Map: Dimensions are the same as the input to a convolution layer.

- Output Feature Map: Dimensions are determined by the pooling operation (max pooling or average pooling), kernel size, and stride.

- Learnable Parameters: Pooling layers generally do not have any learnable parameters.

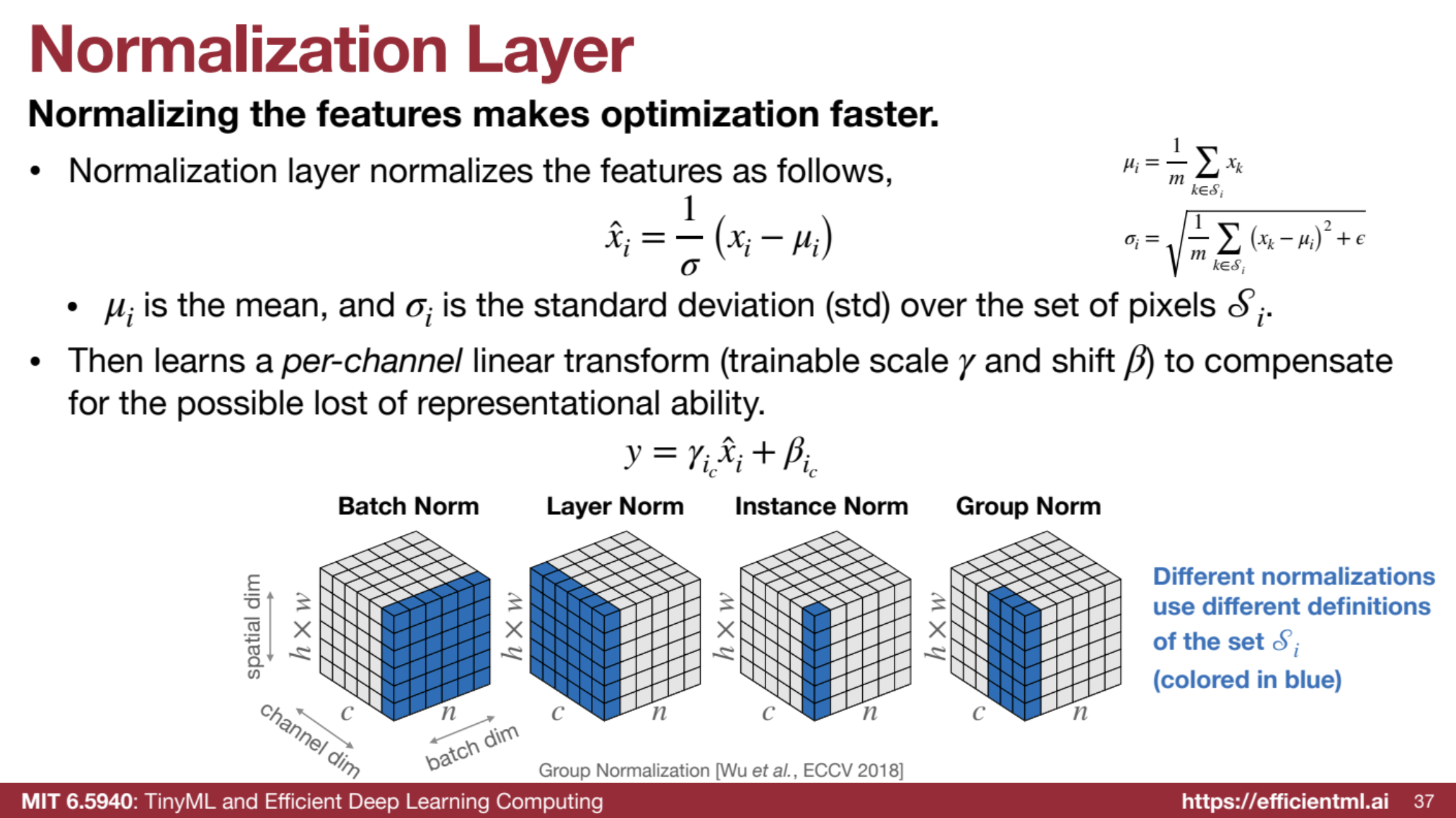
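A minimal pure-Python sketch of max pooling on a single channel is shown below. It assumes no padding and represents the feature map as a list of rows; the function name `max_pool2d` is chosen for this example only.

```python
def max_pool2d(fmap, kernel: int = 2, stride: int = 2):
    """Max pooling over one channel stored as a list of rows (no padding)."""
    h, w = len(fmap), len(fmap[0])
    ho = (h - kernel) // stride + 1
    wo = (w - kernel) // stride + 1
    return [
        [
            max(
                fmap[i * stride + di][j * stride + dj]
                for di in range(kernel)
                for dj in range(kernel)
            )
            for j in range(wo)
        ]
        for i in range(ho)
    ]


fmap = [
    [1, 2, 3, 4],
    [5, 6, 7, 8],
    [9, 10, 11, 12],
    [13, 14, 15, 16],
]
pooled = max_pool2d(fmap)
print(pooled)
```

The 4x4 map shrinks to 2x2 with no weights involved, which is where the reduction in downstream computation and memory comes from.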

(6). Normalization Layer

- Input Features: Dimensions are typically the same as the input or output of a convolution layer.

- Normalization: The normalization process involves calculating the mean and standard deviation over a set of pixels or tensors. The choice of normalization (batch norm, instance norm, group norm) determines how this set is defined.

- Learnable Parameters: Normalization layers typically have learnable scaling (γ) and shift (β) parameters for each channel to compensate for potential loss of representational ability.

🔍 Normalization Layers Details

Normalization layers are essential for stable and efficient neural network training. They standardize feature values, improving training speed, stability, and generalization.

🔹 What is Normalization?

Normalization standardizes feature values to have a mean of 0 and a standard deviation of 1, smoothing optimization. Steps include:

- Calculate Mean & Std Dev: Compute across selected elements (pixels, tensors).

- Normalize: Subtract mean and divide by std deviation:

  x̂i = (xi - μi) / σi

- Scale & Shift: Apply scaling (γ) and shift (β) to retain flexibility:

  yi = γi * x̂i + βi
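The three steps can be sketched on a plain list of numbers. This is an illustrative version that treats the whole list as one normalization group and adds a small `eps` inside the square root for numerical stability, which is a common convention rather than something stated above.

```python
import math


def normalize(values, gamma: float = 1.0, beta: float = 0.0, eps: float = 1e-5):
    """Normalize to zero mean / unit std, then apply scale (gamma) and shift (beta)."""
    mean = sum(values) / len(values)
    var = sum((v - mean) ** 2 for v in values) / len(values)
    std = math.sqrt(var + eps)
    return [gamma * (v - mean) / std + beta for v in values]


plain = normalize([2.0, 4.0, 6.0, 8.0])
scaled = normalize([2.0, 4.0, 6.0, 8.0], gamma=2.0, beta=1.0)
print(plain)
print(scaled)
```

With `gamma=1` and `beta=0` the output has approximately zero mean and unit variance; other values move the output to mean `beta` and standard deviation close to `gamma`.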

🔄 Per-Channel Linear Transformation in Normalization Layers

After normalization, a per-channel linear transformation enhances feature expressiveness. This step introduces a unique scaling (γ) and shift (β) for each channel. Each channel has its own γ and β, adding flexibility.

🌟 Why It’s Needed

While normalization helps optimization, it may reduce feature expressiveness by constraining values. Per-channel transformation restores this, allowing channels to optimally represent features.

🌟 Efficiency Boosts

- Fine-tuning: Adjusting γ and β in transfer learning can improve efficiency without much accuracy loss.

- Quantization: Helps map normalized features to suitable ranges for low-precision representation, aiding faster and more memory-efficient inference.

🌟 Visualizing the Transformation

Imagine each channel of your feature map as a separate image. The per-channel linear transformation allows the network to adjust the brightness and contrast of each of these “channel images” independently. This fine-grained control enables the network to highlight the most important features within each channel, enhancing the overall representational capacity of the normalized features.

🔹 Types of Normalization

Each type differs in normalization scope:

- Batch Norm: Normalizes each channel (C) separately, computing statistics across the batch (N) and spatial dims (H, W). Used in CNNs.

- Layer Norm: Normalizes across all channels (C) and spatial dims (H, W) for each sample. Common in attention layers.

- Instance Norm: Normalizes across spatial dims (H, W) for each channel of each sample independently.

- Group Norm: Normalizes within groups of channels (C), balancing Instance and Layer Norm.
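One way to compare the normalization types is to count how many mean/std pairs each one computes for an `(N, C, H, W)` tensor and how many elements feed each pair. The sketch below follows the usual definitions of these layers (per-channel statistics for batch norm, per-sample for layer norm, and so on); the function name and example sizes are hypothetical.

```python
def norm_statistics_layout(kind: str, n: int, c: int, h: int, w: int, groups: int = 1):
    """Return (number of mean/std pairs, elements averaged per pair)."""
    if kind == "batch":      # one pair per channel, over N, H, W
        return c, n * h * w
    if kind == "layer":      # one pair per sample, over C, H, W
        return n, c * h * w
    if kind == "instance":   # one pair per (sample, channel), over H, W
        return n * c, h * w
    if kind == "group":      # one pair per (sample, channel group)
        if c % groups:
            raise ValueError("c must be divisible by groups")
        return n * groups, (c // groups) * h * w
    raise ValueError(f"unknown normalization kind: {kind}")


shape = dict(n=8, c=32, h=16, w=16)
layouts = {kind: norm_statistics_layout(kind, **shape) for kind in ("batch", "layer", "instance")}
layouts["group"] = norm_statistics_layout("group", groups=4, **shape)
print(layouts)
```

Only batch norm averages over the batch dimension, which is why its behaviour depends on batch size while the other three do not.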

🔹 Benefits

- Faster Training: Stabilizes gradients for quicker convergence.

- Improved Generalization: Reduces internal covariate shift.

- Reduced Sensitivity to Initialization: Lessens reliance on precise initial weights.

🔹 Efficiency

Normalization layers are efficient, with few learnable parameters and the potential for fusion with other operations during inference, minimizing computational load.
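As an example of such fusion, batch normalization at inference time uses fixed statistics, so it is a per-channel affine transform that can be folded into the preceding linear (or convolution) layer. The sketch below shows the folding for a small linear layer in pure Python; all names and numbers are illustrative assumptions, and `mean` and `var` stand for the stored running statistics.

```python
import math


def fold_batchnorm(weight, bias, gamma, beta, mean, var, eps=1e-5):
    """Fold per-channel BatchNorm statistics into a linear layer's weights and bias."""
    folded_weight, folded_bias = [], []
    for row, b, g, bt, mu, v in zip(weight, bias, gamma, beta, mean, var):
        scale = g / math.sqrt(v + eps)
        folded_weight.append([w * scale for w in row])
        folded_bias.append((b - mu) * scale + bt)
    return folded_weight, folded_bias


def linear(x, weight, bias):
    """y = W x + b for a single input vector."""
    return [sum(w * xi for w, xi in zip(row, x)) + b for row, b in zip(weight, bias)]


def batchnorm_inference(y, gamma, beta, mean, var, eps=1e-5):
    """Per-channel normalization with fixed (running) statistics."""
    return [g * (v - mu) / math.sqrt(vr + eps) + bt
            for v, g, bt, mu, vr in zip(y, gamma, beta, mean, var)]


x = [1.0, -2.0, 0.5]
weight = [[0.2, -0.1, 0.4], [0.7, 0.3, -0.5]]
bias = [0.1, -0.2]
gamma, beta = [1.5, 0.8], [0.0, 0.3]
mean, var = [0.05, -0.1], [0.9, 1.2]

reference = batchnorm_inference(linear(x, weight, bias), gamma, beta, mean, var)
fused = linear(x, *fold_batchnorm(weight, bias, gamma, beta, mean, var))
print(reference, fused)
```

After folding, the normalization layer costs nothing at inference: the network runs one linear layer instead of a linear layer followed by a separate normalization pass.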

🔹 Efficient Deep Learning Applications

- Fine-tuning: Selective fine-tuning of normalization layers enhances efficiency without sacrificing accuracy.

- Quantization: Normalization aids quantization by constraining dynamic range, supporting lower-precision operations.

🔹 Normalization in Transformers

Transformers use Layer Normalization to stabilize attention mechanisms, ensuring consistent and stable weight calculation.

In summary, normalization layers enhance stability, speed up convergence, support efficient fine-tuning, and enable quantization, making them fundamental for efficient deep learning.

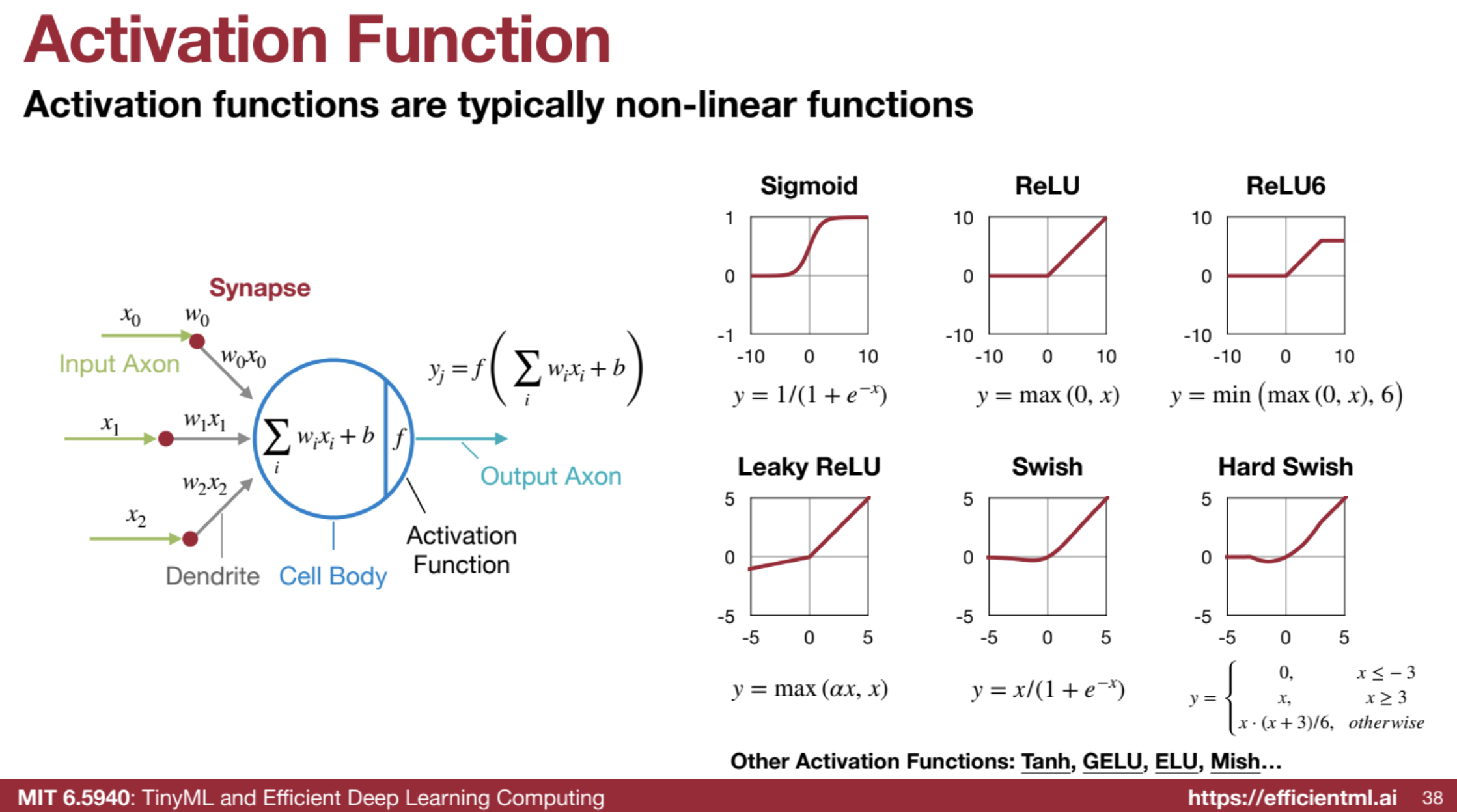

(7). Activation Layer

Activation functions add essential non-linearity to neural networks, enabling them to learn complex data patterns. Without them, a neural network would behave linearly, limiting its modeling capacity.

🌟 Why Non-Linearity Matters

Activation functions enable neural networks to approximate complex functions, crucial for tasks like distinguishing between similar classes. For instance, recognizing cats vs. dogs requires non-linear features (e.g., ear shapes) that simple linear transformations can’t capture.

🔑 Key Activation Functions

Each activation function has trade-offs affecting accuracy, efficiency, and ease of implementation. Here’s a breakdown of the most common ones:

| Activation | Description | Pros | Cons |
|---|---|---|---|
| Sigmoid | Maps input to (0, 1) | Easy to quantize | Vanishing gradients, limited dynamic range |
| ReLU | Outputs input if positive, else 0 | Simple, mitigates vanishing gradients, promotes sparsity | “Dead neurons” if inputs stay negative, large dynamic range |
| ReLU6 | ReLU variant capped at 6 | Easier to quantize, limits dynamic range | Similar issues as ReLU, but reduced |
| Leaky ReLU | Small negative slope | Reduces “dying neurons” | Slightly more computation than ReLU |
| Swish | Smooth, learns non-linear patterns | Higher accuracy in some cases | Complex, challenging hardware implementation |
| Hard Swish | Efficient Swish approximation | Balances accuracy and hardware efficiency | Some performance trade-offs compared to Swish |
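For reference, the functions in the table can be written as scalar Python functions using their commonly used definitions. The negative slope of 0.01 for Leaky ReLU is a typical default chosen here as an assumption, and Swish is shown in its `x * sigmoid(x)` form.

```python
import math


def sigmoid(x: float) -> float:
    return 1.0 / (1.0 + math.exp(-x))


def relu(x: float) -> float:
    return max(0.0, x)


def relu6(x: float) -> float:
    return min(max(0.0, x), 6.0)


def leaky_relu(x: float, negative_slope: float = 0.01) -> float:
    return x if x > 0 else negative_slope * x


def swish(x: float) -> float:
    return x * sigmoid(x)


def hard_swish(x: float) -> float:
    # Piecewise-linear stand-in for swish: no exponential is needed.
    return x * relu6(x + 3.0) / 6.0


for x in (-4.0, -1.0, 0.0, 1.0, 4.0):
    print(x, relu(x), relu6(x), leaky_relu(x), round(swish(x), 4), round(hard_swish(x), 4))
```

The hardware argument is visible in the code: ReLU, ReLU6, Leaky ReLU, and Hard Swish use only comparisons, multiplications, and additions, while Sigmoid and Swish need an exponential.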

⚙️ Efficiency Considerations

- Sparsity: ReLU promotes sparsity, reducing computations.

- Quantization: Smaller dynamic ranges (e.g., Sigmoid, ReLU6) simplify quantization, enhancing speed and memory use.

- Hardware Fit: Functions like ReLU are easier to implement on hardware, enabling faster and more energy-efficient processing.

🌐 Activation in Transformers

In Transformers, activation functions in feed-forward layers contribute to model efficiency and performance, balancing the same trade-offs.

Summary

Activation functions are critical for neural networks to learn complex data relationships, with each function balancing accuracy, efficiency, and hardware compatibility. The choice of function impacts sparsity, quantization, and implementation, making it a key factor in neural network design.

(8). Transformer (Attention Mechanism)

- Query (Q), Key (K), Value (V): These are matrices representing the input data, each with dimensions `(N, d)`, where `N` is the number of tokens and `d` is the embedding dimension.

- Attention Map: The dot product of the query and key, followed by normalization and softmax, produces an attention map of size `(N, N)`.

- Output: Multiplying the attention map with the value matrix results in an output matrix of size `(N, d)`.

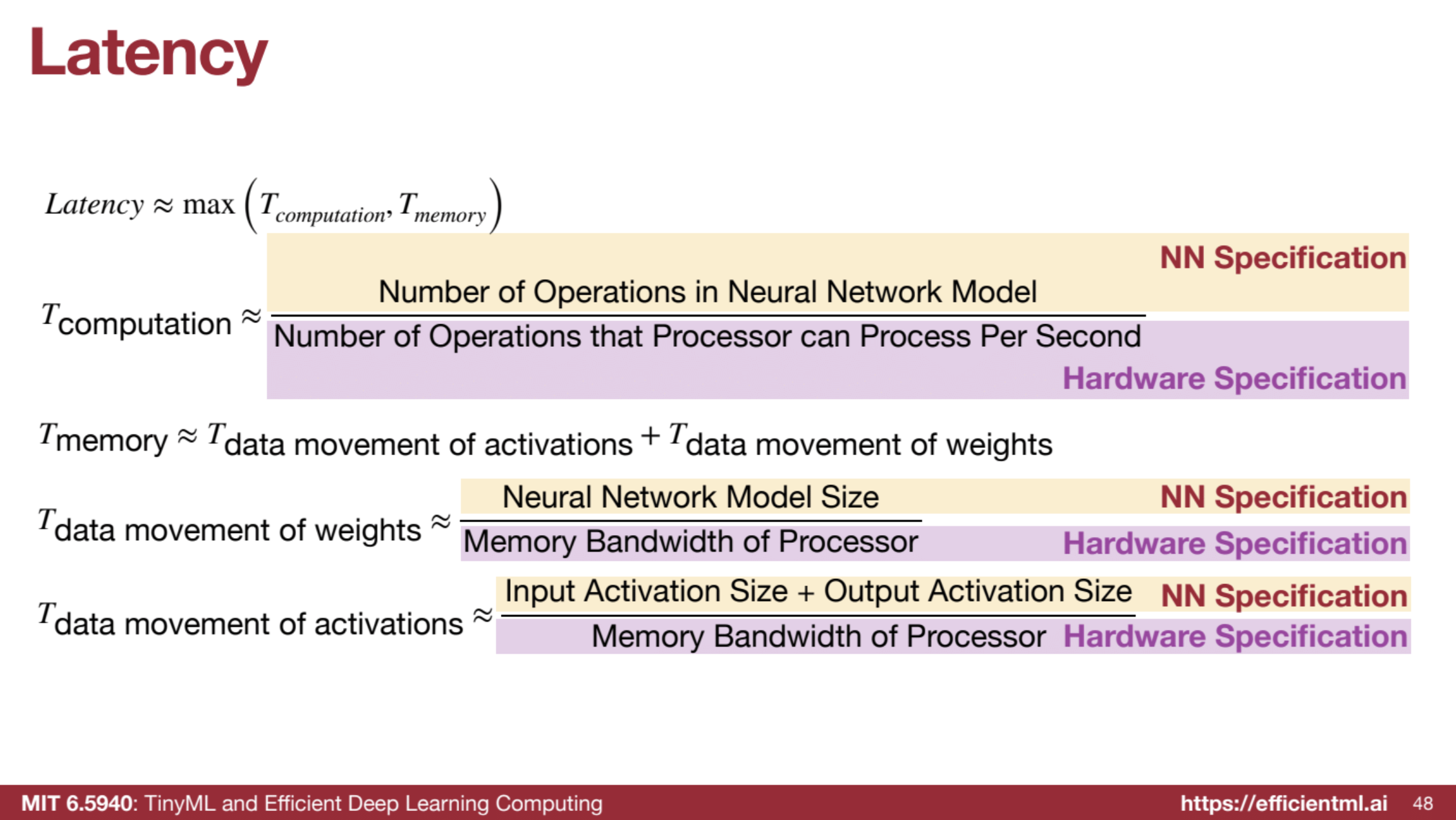
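The shapes above can be traced with a small pure-Python sketch of single-head attention. It assumes the normalization step is the usual scaling by `1 / sqrt(d)` from scaled dot-product attention, and it uses tiny hand-picked matrices; none of the names come from a specific library.

```python
import math


def softmax(row):
    """Numerically stable softmax over one row of scores."""
    m = max(row)
    exps = [math.exp(v - m) for v in row]
    total = sum(exps)
    return [e / total for e in exps]


def attention(Q, K, V):
    """Return the (N, N) attention map and the (N, d) output."""
    d = len(Q[0])
    scores = [
        [sum(q * k for q, k in zip(q_row, k_row)) / math.sqrt(d) for k_row in K]
        for q_row in Q
    ]
    attn = [softmax(row) for row in scores]
    out = [
        [sum(a * v_row[j] for a, v_row in zip(a_row, V)) for j in range(len(V[0]))]
        for a_row in attn
    ]
    return attn, out


# N = 3 tokens, d = 2 embedding dimensions.
Q = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
K = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
V = [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0]]
attn, out = attention(Q, K, V)
print(attn)
print(out)
```

The `(N, N)` attention map is the part that grows quadratically with the number of tokens, which is what techniques such as sparse attention target.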

5. Efficiency Metrics

- Latency: Time delay to complete a task, crucial for real-time applications.

- Throughput: Data processing rate, important for batch tasks.

- Parameters: Number of weights in a model; reducing parameters shrinks the model size.

- Model Size: Storage space for weights, typically in MB or KB.

- Activations: Intermediate neuron outputs, a major memory bottleneck during training and inference.

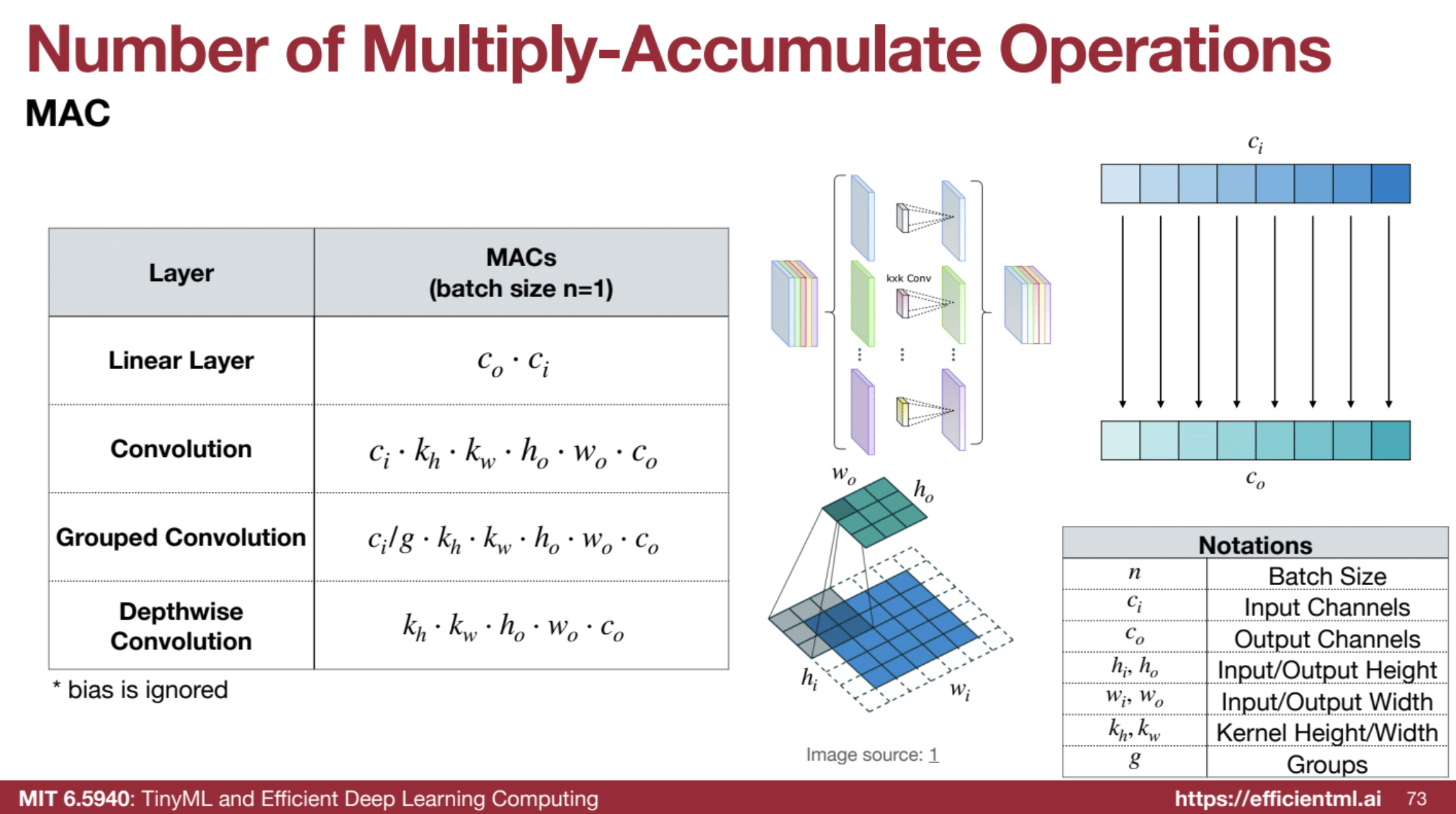

- MAC (Multiply-Accumulate Operations): Hardware instruction for one multiplication and addition.

- FLOP (Floating Point Operation): One multiplication or addition involving floating-point numbers. One MAC equals two FLOPs.

- OP (Operation): General term for any operation (floating-point, integer, or bitwise).

Latency vs. Throughput

Higher throughput doesn’t translate to lower latency, and vice versa. On mobile devices, we mostly care about latency, while in the data center we care more about throughput, since requests can be processed in parallel.
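A small numeric sketch makes the distinction concrete. The timings below are hypothetical, not measurements: one image alone takes 50 ms, while a batch of 8 takes 100 ms.

```python
def throughput(batch_size: int, batch_latency_s: float) -> float:
    """Samples processed per second when a whole batch finishes in batch_latency_s."""
    return batch_size / batch_latency_s


# Hypothetical timings for the same model on the same device.
single_latency_s = 0.050   # batch of 1
batched_latency_s = 0.100  # batch of 8

single = throughput(1, single_latency_s)
batched = throughput(8, batched_latency_s)
print(f"batch 1: {single:.0f} img/s at {single_latency_s * 1000:.0f} ms latency")
print(f"batch 8: {batched:.0f} img/s at {batched_latency_s * 1000:.0f} ms latency")
```

In this made-up case batching quadruples throughput while doubling the time each request waits, which suits a data center but not an interactive mobile app.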

🌟 Computation is cheap. Data movement is expensive!

Parameters and Model Size

📏 Model Size and Number of Parameters

Model size represents the storage required for a neural network’s weights and is proportional to the number of parameters and bit width.

💾 Defining Model Size

- Measurement: Amount of memory needed to store weights.

- Units: Typically in MB, KB, or bits.

- Data Type: Depends on the weight representation (e.g., FP32, FP16, INT8).

🧮 Model Size Formula

For uniform data types:

- Model Size = Number of Parameters × Bit Width

🔍 Example: AlexNet

- Parameters: 61 million

- 32-Bit: 244 MB (61M × 32 bits ÷ 8 bits/byte)

- 8-Bit (Quantized): 61 MB (61M × 8 bits ÷ 8 bits/byte)

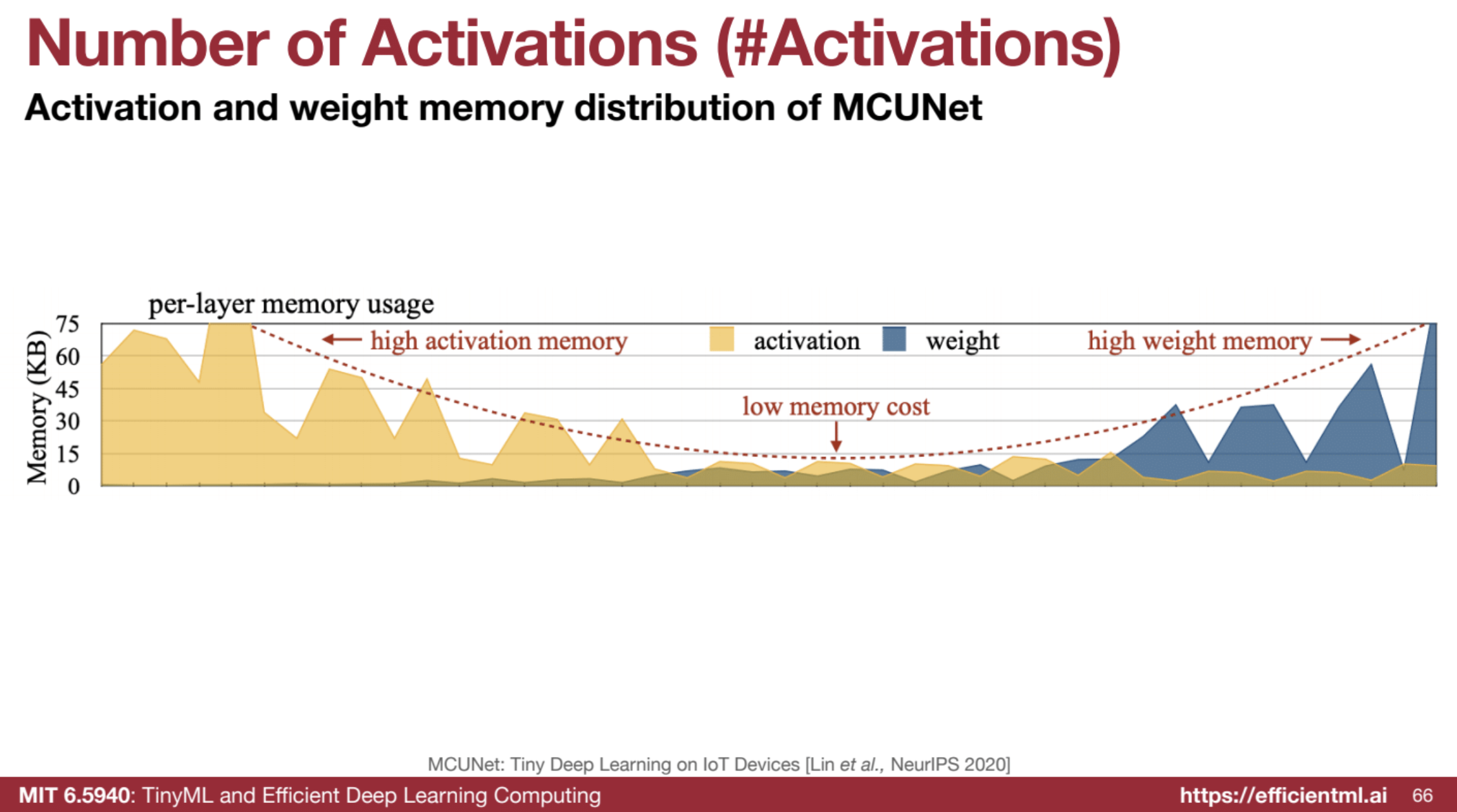
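The AlexNet numbers above follow directly from the formula. The sketch below reproduces them, assuming 1 MB = 10^6 bytes as in the example; the function name is illustrative.

```python
def model_size_bytes(num_params: int, bit_width: int) -> float:
    """Model size = number of parameters x bit width, converted from bits to bytes."""
    return num_params * bit_width / 8


alexnet_params = 61_000_000
fp32_mb = model_size_bytes(alexnet_params, 32) / 1e6
int8_mb = model_size_bytes(alexnet_params, 8) / 1e6
print(f"FP32: {fp32_mb:.0f} MB, INT8: {int8_mb:.0f} MB")
```

The parameter count is unchanged by quantization; only the bit width, and therefore the storage, shrinks by 4x.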

Number of Activations

Activations, or neuron outputs, are intermediate values in a neural network that impact memory use and inference speed.

🧩 Why Are Activations Important?

- Memory Bottleneck: Activation memory is often larger than model weights, especially during CNN inference.

- Inference Speed: More activations can slow down inference due to increased memory access.

Activations are the memory bottleneck in CNN inference, and deeper networks generally produce more activations.

On microcontrollers (MCUs), the peak activations must fit in SRAM.

Activations are also the memory bottleneck in CNN training, because they scale with the batch size. In LLMs, the number of parameters is a bottleneck as well, which motivates model parallelism.

Activation distribution in CNN

📐 Calculating Activations

The total activations in a CNN layer depend on the size of its feature maps:

- Activation Size = C × H × W

- C: Channels

- H: Height

- W: Width

- Peak Activations ≈ Input Activations + Output Activations

- Indicates the memory needed at any point in inference.

💡 Example: AlexNet

- Total Activations: 932,264

- Peak Activation: 440,928 (first layer)
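The peak figure can be reproduced from the first layer's feature-map sizes. The shapes used below (a 3 × 224 × 224 input and a 96 × 55 × 55 output for the first convolution) are the commonly quoted AlexNet dimensions and are an assumption here, since they are not listed above.

```python
def activation_count(c: int, h: int, w: int) -> int:
    """Number of activations in one feature map: C x H x W."""
    return c * h * w


# Assumed AlexNet first-layer shapes (batch size 1).
conv1_input = activation_count(3, 224, 224)
conv1_output = activation_count(96, 55, 55)
peak = conv1_input + conv1_output  # input and output must both be in memory
print(conv1_input, conv1_output, peak)
```

Under these assumptions the input and output of the first layer add up to the 440,928 peak quoted above.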

⚙️ Efficiency Implications

- Memory Optimization: Reducing activations (e.g., with smaller input resolutions or efficient architectures like MobileNet) minimizes memory and speeds up inference.

- Hardware Constraints: Ensuring peak activation fits available memory is crucial for deploying models on limited-resource devices.

📊 Breakdown of Computation Metrics: MAC, FLOP, FLOPS, OP, OPS

Understanding computation metrics is vital for evaluating the efficiency and performance of neural network models.

🛠️ MAC (Multiply-Accumulate Operation)

A MAC operation is a core component in matrix multiplications and convolutions, represented as:

- a ← a + b ⋅ c

Matrix Operations:

- Matrix-Vector Multiplication (MV): m × n MACs for an m × n matrix (rows × columns).

- General Matrix-Matrix Multiplication (GEMM): m × n × k MACs when an m × k matrix multiplies a k × n matrix, i.e. k MACs for each of the m × n outputs.

- Convolutional Layers: ci × kh × kw × ho × wo × co MACs, i.e. input channels × kernel dimensions × output dimensions × output channels.
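These counts are simple products, so they are easy to sketch in code. The helper names are hypothetical, the counts are per sample (batch size 1), and the example layer (3 input channels, 96 output channels, 11 × 11 kernel, 55 × 55 output) is an assumed AlexNet-style first convolution.

```python
def mv_macs(m: int, n: int) -> int:
    """MACs for an (m x n) matrix times a length-n vector."""
    return m * n


def gemm_macs(m: int, n: int, k: int) -> int:
    """MACs for an (m x k) matrix times a (k x n) matrix."""
    return m * n * k


def conv_macs(ci: int, co: int, kh: int, kw: int, ho: int, wo: int, groups: int = 1) -> int:
    """MACs for a convolution; each output value needs (ci / groups) * kh * kw MACs."""
    return (ci // groups) * kh * kw * ho * wo * co


first_conv = conv_macs(ci=3, co=96, kh=11, kw=11, ho=55, wo=55)
print(mv_macs(1000, 4096), gemm_macs(64, 1000, 4096), first_conv)
```

Setting `groups` to the channel count gives the depthwise case, where the MAC count drops by the same factor as the weight count.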

⚙️ FLOP (Floating Point Operation)

A FLOP represents a floating-point multiplication or addition, with the relationship:

- 1 MAC = 2 FLOPs

🚀 FLOPS (Floating Point Operations Per Second)

FLOPS measures the computational speed:

- FLOPS = FLOPs / second
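The two definitions combine into a quick back-of-the-envelope estimate: convert MACs to FLOPs, then divide by the hardware's peak FLOPS to get a lower bound on compute time. The layer size and the 1 TFLOPS device below are hypothetical numbers used only for illustration, and the estimate ignores memory access entirely.

```python
def macs_to_flops(macs: int) -> int:
    """One MAC is one multiplication plus one addition, i.e. two FLOPs."""
    return 2 * macs


def compute_bound_seconds(flops: int, peak_flops_per_second: float) -> float:
    """Lower bound on runtime if the hardware ran at peak FLOPS the whole time."""
    return flops / peak_flops_per_second


layer_macs = 105_415_200           # hypothetical layer
layer_flops = macs_to_flops(layer_macs)
seconds = compute_bound_seconds(layer_flops, peak_flops_per_second=1e12)
print(f"{layer_flops} FLOPs, at least {seconds * 1e6:.1f} microseconds at 1 TFLOPS")
```

Real latency is usually higher than this bound, because data movement and imperfect hardware utilization are not captured by FLOP counts.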

🔄 OP (Operation) and OPS (Operations Per Second)

OP and OPS extend FLOP and FLOPS to include any operation type, relevant for mixed-precision models.

- OP: Any operation (floating-point, integer, etc.)

- OPS: Rate of executing operations.

  - OPS = OPs / second

📈 Importance of Efficiency Metrics

These metrics provide insights into:

- Model Complexity: Higher values indicate more computationally intensive models.

- Performance Comparisons: Facilitate model comparisons based on computational demands.

- Hardware Requirements: Estimate resources needed for efficient model execution.

- Optimization Targets: Minimizing operations leads to faster inference and lower energy use.

💡 Key Insights

- Memory Access Dominates Energy Consumption: Accessing memory is far more energy-intensive than performing arithmetic operations.

  “Accessing memory is a lot more expensive than doing the arithmetic… two orders of magnitude more energy is consumed by accessing memory, so keep in mind that computation is cheap, but data movement is very expensive.”

- Activations are the Bottleneck: Optimizing activations is crucial for minimizing memory usage and achieving efficiency.

  “#Activation is the memory bottleneck in CNN inference, not #Parameters.”

- Trade-offs are Everywhere: Designing efficient deep learning models involves balancing accuracy, model size, computation cost, and memory usage.

  “Everything is a trade-off… there’s no free lunch in deep neural network design; everything is about constraint optimization, which makes the optimization highly interesting.”

📝 Quiz

- Explain the difference between a neuron and a synapse in the context of neural networks.

  A neuron is the basic processing unit of a neural network, receiving weighted inputs and applying an activation function to produce an output (activation). A synapse represents the connection between two neurons, characterized by a weight that determines the strength of the connection.

- What is a fully connected layer in a neural network, and how does it differ from a convolution layer?

  A fully connected layer connects each neuron to every neuron in the previous layer, computing a weighted sum of inputs. In contrast, a convolution layer employs a sliding filter (kernel) to extract features from local regions of the input data, exploiting spatial relationships.

- Describe the concept of a receptive field in convolutional neural networks. Why is a large receptive field desirable?

  The receptive field of a neuron in a CNN is the area in the input data that influences its output. A larger receptive field allows the network to capture more global context and relationships between features, aiding in tasks like object recognition.

- What is the purpose of padding in convolutional layers, and what are the different types of padding used?

  Padding in convolutional layers helps preserve the spatial dimensions of the feature maps and avoids information loss at the borders. Common padding types include zero padding, reflection padding, and replication padding.

- Explain how strided convolution helps in reducing the number of layers required in a CNN.

  Strided convolution allows the filter to skip over input values with a stride greater than one, effectively downsampling the output feature maps. This downsampling reduces the spatial resolution, allowing for deeper networks with larger receptive fields without exponentially increasing computational cost.

- Differentiate between Grouped Convolution and Depthwise Convolution. What is the advantage of using these techniques?

  Grouped Convolution divides the convolution operation into smaller groups, each processing a subset of input channels, reducing parameters and computation. Depthwise Convolution is an extreme case where each input channel has its own filter, further minimizing computational cost while retaining important spatial information.

- What is the role of a normalization layer in a neural network, and name at least two types of normalization techniques?

  Normalization layers are crucial for stabilizing and accelerating training by normalizing the activations. Common techniques include Batch Normalization, which normalizes across the batch dimension, and Instance Normalization, which normalizes within each individual input sample.

- Why is ReLU a popular choice for an activation function, and what are its limitations? How do Leaky ReLU and Swish address these limitations?

  ReLU’s popularity stems from its simplicity and computational efficiency, avoiding vanishing gradient issues for positive inputs. However, it suffers from “dead neurons” for negative inputs. Leaky ReLU addresses this by allowing a small gradient for negative values, while Swish provides a smooth, non-monotonic function, improving gradient flow and performance.

- What are the key differences between latency and throughput in evaluating the efficiency of a neural network?

  Latency measures the time delay between input and output, critical for real-time applications, while throughput quantifies the amount of data processed per time unit, important for batch processing. Higher throughput doesn’t always imply lower latency, as parallel processing can increase throughput with a trade-off in latency.

- Explain the relationship between the number of parameters, model size, and the choice of bit-width for representing weights.

  The number of parameters directly impacts model size and computational complexity. Model size is calculated by multiplying the number of parameters by the bit-width chosen to represent each weight. Using a lower bit-width, such as 8-bit instead of 32-bit, reduces the model size and memory footprint but may impact accuracy.

🖊️ Essay Questions

- Analyze the trade-off between accuracy and hardware efficiency when choosing between a deep and narrow neural network versus a shallow and wide neural network. Discuss the factors influencing this choice.

- Explain the memory bottleneck problem encountered during CNN inference and training. Discuss the role of activations in this context and propose strategies to mitigate this issue.

- Describe the evolution of activation functions from Sigmoid to ReLU and its variants like Leaky ReLU and Swish. Discuss the considerations of sparsity, quantization, and gradient flow in the development of these activation functions.

- Explain the concept of attention in Transformers and its analogy to a retrieval system. Elaborate on the different components of the attention mechanism, including query, key, value, and the attention map.

- Discuss the importance of efficient deep learning techniques in bridging the gap between the computational demands of deep learning models and the capabilities of hardware. Analyze different metrics used to measure the efficiency of neural networks, including model size, computational complexity, latency, and throughput.

📚 Glossary

- Neuron: The fundamental unit of a neural network, analogous to a biological neuron, that processes and transmits information.

- Synapse: The connection between neurons in a neural network, characterized by a weight that determines the strength of the connection.

- Activation: The output of a neuron after applying an activation function.

- Feature: An individual measurable property or characteristic of the input data.

- Weight: A parameter within a neural network that determines the strength of the connection between neurons.

- Parameter: A configurable value in a neural network that is learned during training.

- Fully Connected Layer: A layer where each neuron is connected to every neuron in the previous layer.

- Convolution Layer: A layer that utilizes a convolution operation to extract features from the input data.

- Receptive Field: The region of the input data that a particular neuron in a convolutional neural network can “see” or process.

- Padding: Adding extra values (typically zeros) around the borders of the input data to control the spatial dimensions of the output.

- Strided Convolution: A convolution operation where the filter moves across the input data with a step size greater than one, effectively downsampling the output.

- Grouped Convolution: A technique where the convolution operation is divided into smaller groups, with each group operating on a subset of the input channels.

- Depthwise Convolution: A special case of grouped convolution where each input channel has its own dedicated filter.

- Normalization Layer: A layer that normalizes the activations to stabilize and speed up the training process.

- Activation Function: A non-linear function applied to the output of a neuron to introduce non-linearity into the network.

- ReLU (Rectified Linear Unit): An activation function that outputs the input directly if it is positive; otherwise, it outputs zero.

- Leaky ReLU: A variation of ReLU that allows a small, non-zero gradient for negative input values.

- Swish: A smooth, non-monotonic activation function (x · sigmoid(x)) that can outperform ReLU in accuracy but is more expensive to compute and harder to implement in hardware.

- Transformer: A neural network architecture that relies heavily on the attention mechanism for processing sequential data.

- Latency: The time delay between providing an input to a system and receiving the corresponding output.

- Throughput: The amount of data processed by a system within a specific timeframe.

- MAC (Multiply-Accumulate): A fundamental operation in neural networks involving a multiplication followed by an accumulation.

- FLOP (Floating Point Operation): A single arithmetic operation performed on floating-point numbers.

- OP (Operation): A generalization of FLOP to encompass various operations, including integer and bitwise operations.