📚 Manual Neural Networks

This document reviews the main themes and key takeaways from Deep Learning Systems: Algorithms and Implementation** at Carnegie Mellon University, taught by J. Zico Kolter and Tianqi Chen.

1. Limitations of Linear Classifiers 🧮

- Linear classifiers divide the input space into linear regions, limiting their ability to model complex datasets.

- As stated in the lecture:

“[Linear classifiers] basically chop up the space into $( K )$ different linear regions.”

2. Nonlinear Feature Mappings 🌟

- Definition: Nonlinear feature mappings ($( \phi(x) )$) transform input features into a higher-dimensional space, making linear separation possible.

Example from the lecture:

“Suppose this is the origin here. Then maybe a good feature vector for this case would be something like $( x^2 + y^2 )$. That would let us separate these classes with a linear classifier.” - Learnable Feature Mappings: Neural networks learn $( \phi(x) )$ directly from data, enhancing flexibility:

“This leads to a natural question: if we have a hypothesis like this… as well as all the parameters of the feature extractor itself.”

3. Neural Networks 🧠

Definition 📖

A neural network is a hypothesis class composed of parameterized, differentiable functions (“layers”) connected sequentially.

“A neural network refers to a hypothesis class consisting of multiple, parameterized differentiable functions (a.k.a. ‘layers’) composed together to form the output.”

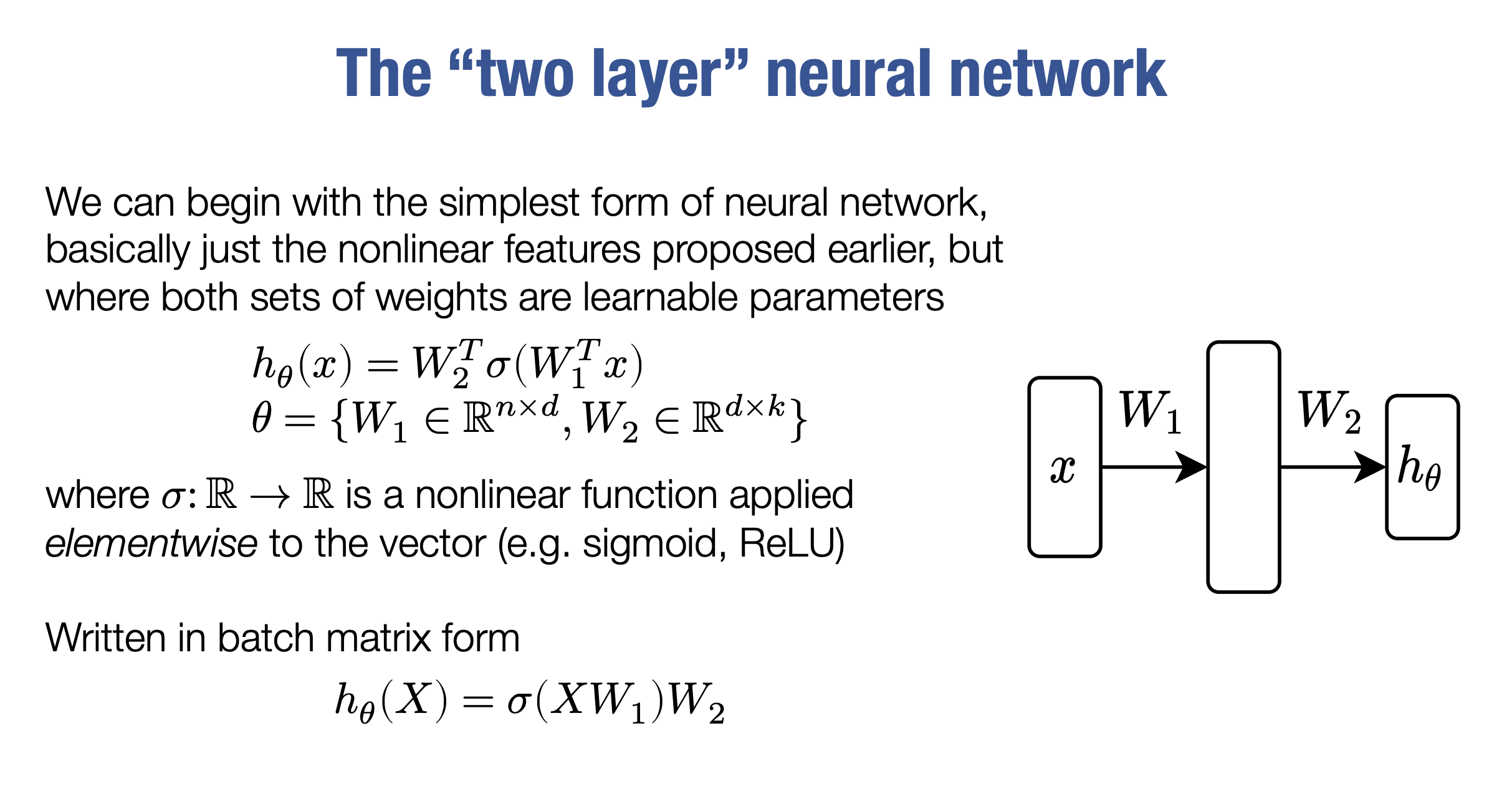

Two-Layer Network 🔗

The simplest neural network:

\([

h_\theta(x) = W_2^\top \sigma(W_1^\top x)

]\)

- $( W_1, W_2 )$: Weight matrices.

- $( \sigma )$: Nonlinear activation function applied elementwise.

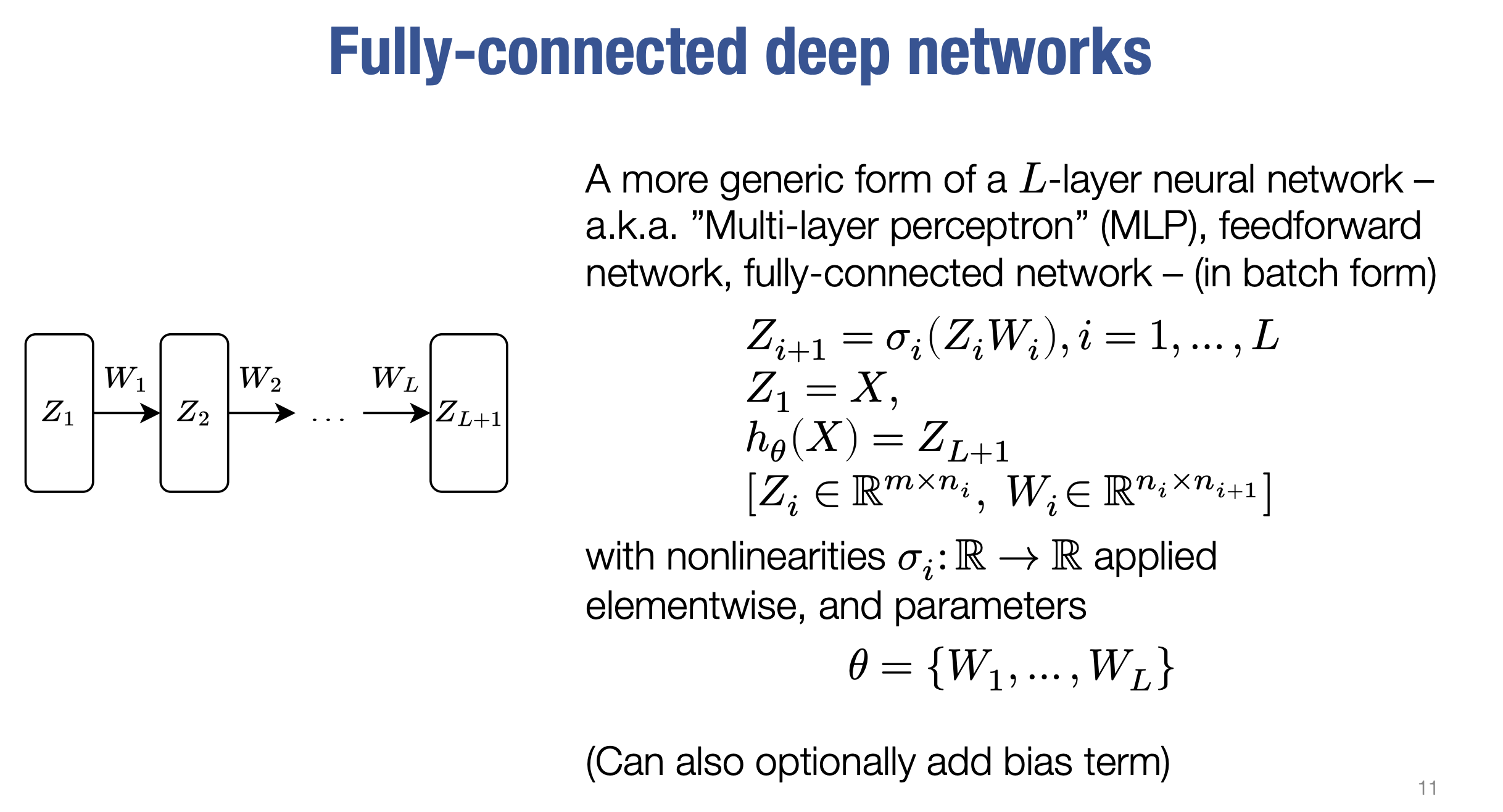

Deep Networks 🌐

Generalizing the structure to $( L )$-layer networks:

“A generic $( L )$-layer neural network – also known as a Multi-layer Perceptron (MLP), feedforward network, or fully-connected network.”

Universal Approximation 🌌

- Theory: Two-layer networks with enough hidden units can approximate any smooth function.

- Caution: This requires a large number of hidden units, which might not be practical:

“It’s a nice property but not that practical.”

4. Example: Two-Layer Network 🧠

Consider a simple two-layer neural network, with weights $( W_1 )$ and $( W_2 )$. The hypothesis function is:

\[[ h_\theta(X) = \sigma(XW_1)W_2 ]\]where:

- $( X )$: Input matrix.

- $( W_1 )$: Weight matrix for the first layer.

- $( \sigma )$: Nonlinear activation function (e.g., sigmoid, ReLU).

- $( W_2 )$: Weight matrix for the second layer.

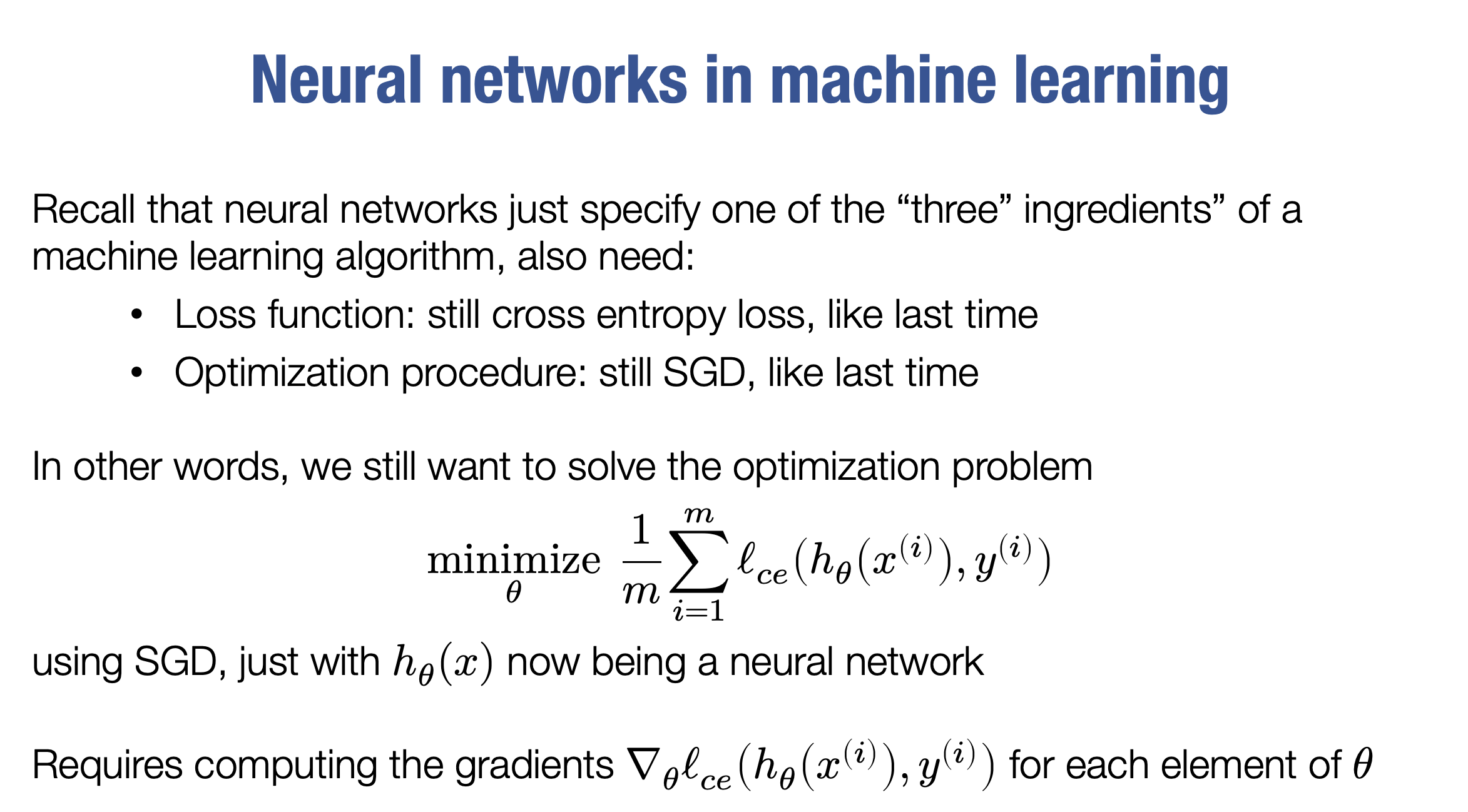

Computing the Gradients 📐

To train the network using gradient descent, we compute the gradients of the loss function with respect to $( W_1 )$ and $( W_2 )$.

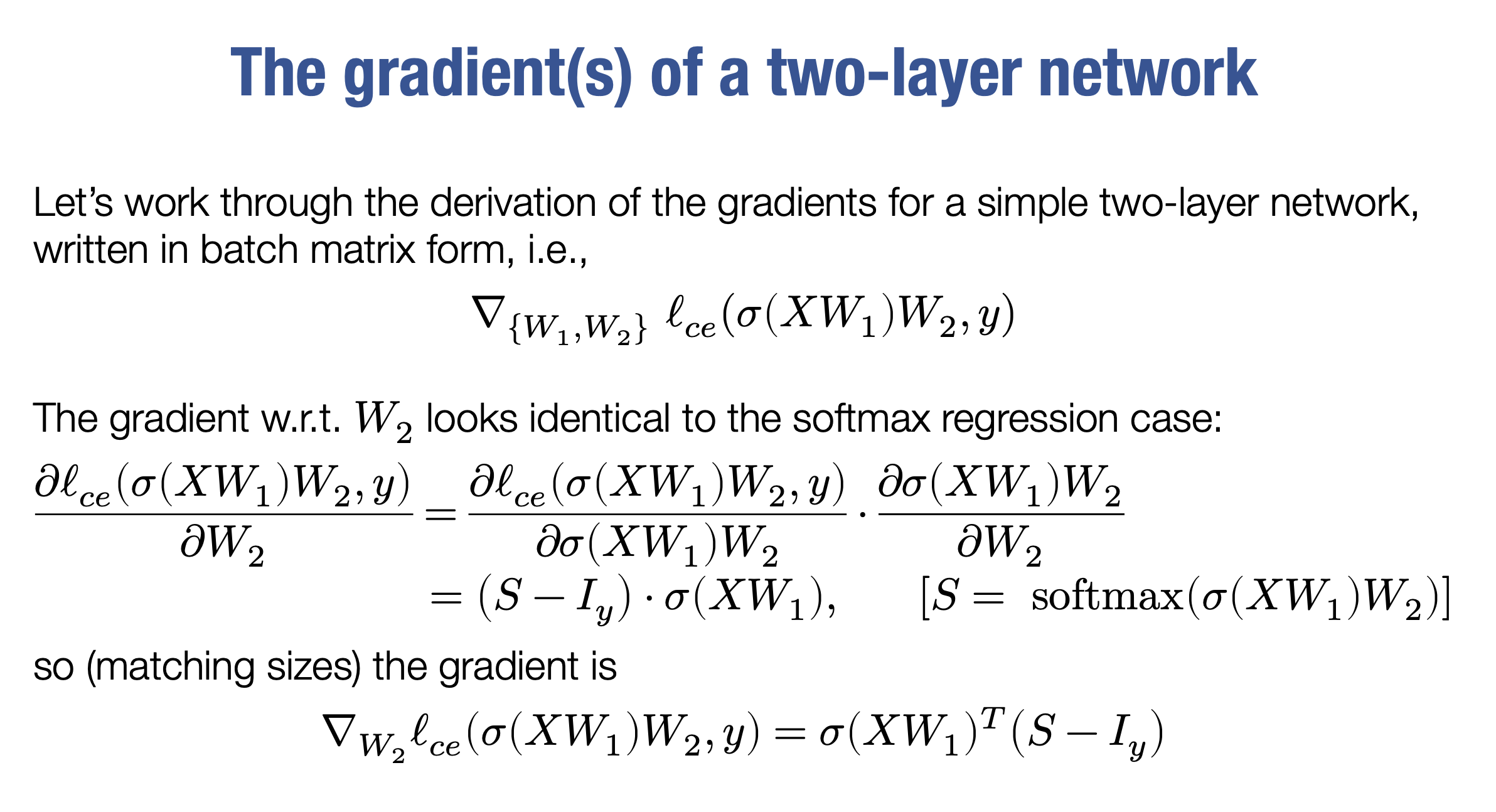

Gradient with Respect to $( W_2 )$ 🔄

The gradient for $( W_2 )$ is similar to the softmax regression case and is given by:

\[[ \nabla_{W_2} \ell_c(XW_1W_2, Y) = (\sigma(XW_1))^\top (S - I_y) ]\]where:

- $( S )$: Softmax of the network’s final prediction.

- $( I_y )$: One-hot encoding of the labels.

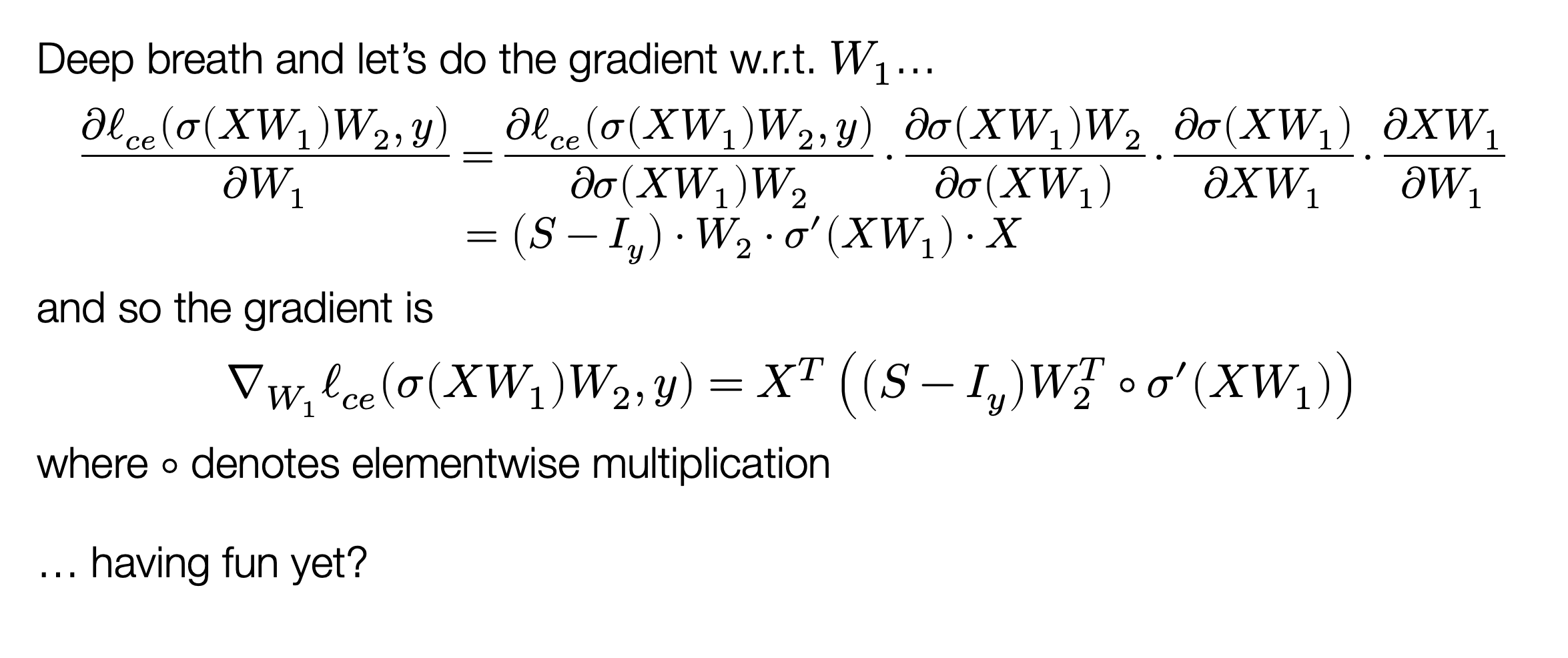

Gradient with Respect to $( W_1 )$ 🔙

The gradient for $( W_1 )$ is more complex and requires applying the chain rule multiple times. The final expression is:

\[[ \nabla_{W_1} \ell_c(XW_1W_2, Y) = \big((S - I_y)W_2^\top \circ \sigma'(XW_1)\big) X^\top ]\]where:

- $( \circ )$: Elementwise multiplication.

- $( \sigma’(XW_1) )$: Elementwise derivative of the activation function applied to $( XW_1 )$.

Key Points ✨

- The gradient computation for $( W_2 )$ uses the outputs of the first layer ($( \sigma(XW_1) )$) and the difference between the softmax output ($( S )$) and the labels ($( I_y )$).

- For $( W_1 )$, the chain rule propagates the gradient through the second layer ($( W_2 )$) and the activation function’s derivative.

def nn_epoch(X, y, W1, W2, lr = 0.1, batch=100):

""" Run a single epoch of SGD for a two-layer neural network defined by the

weights W1 and W2 (with no bias terms):

logits = ReLU(X * W1) * W2

The function should use the step size lr, and the specified batch size (and

again, without randomizing the order of X). It should modify the

W1 and W2 matrices in place.

Args:

X (np.ndarray[np.float32]): 2D input array of size

(num_examples x input_dim).

y (np.ndarray[np.uint8]): 1D class label array of size (num_examples,)

W1 (np.ndarray[np.float32]): 2D array of first layer weights, of shape

(input_dim, hidden_dim)

W2 (np.ndarray[np.float32]): 2D array of second layer weights, of shape

(hidden_dim, num_classes)

lr (float): step size (learning rate) for SGD

batch (int): size of SGD minibatch

Returns:

None

"""

num_examples = X.shape[0] # Number of examples

for start in range(0, num_examples, batch):

# Batch indices

end = min(start + batch, num_examples)

X_batch = X[start:end]

y_batch = y[start:end]

# Forward pass

Z1 = np.dot(X_batch, W1) # Shape: (batch_size, hidden_dim)

H1 = np.maximum(0, Z1) # ReLU activation, Shape: (batch_size, hidden_dim)

logits = np.dot(H1, W2) # Shape: (batch_size, num_classes)

# Compute probabilities (softmax)

logits_stable = logits - np.max(logits, axis=1, keepdims=True) # Stability fix

exp_logits = np.exp(logits_stable)

probs = exp_logits / np.sum(exp_logits, axis=1, keepdims=True) # Shape: (batch_size, num_classes)

# One-hot encode labels

Iy = np.zeros_like(probs)

Iy[np.arange(y_batch.size), y_batch] = 1

# Backward pass

G2 = (probs - Iy) / X_batch.shape[0] # Gradient of W2

G1 = np.dot(G2, W2.T) # Backpropagate to hidden layer

G1[Z1 <= 0] = 0 # Apply ReLU derivative

# Compute gradients

grad_W2 = np.dot(H1.T, G2) # Shape: (hidden_dim, num_classes)

grad_W1 = np.dot(X_batch.T, G1) # Shape: (input_dim, hidden_dim)

# Gradient descent step

W1 -= lr * grad_W1

W2 -= lr * grad_W2

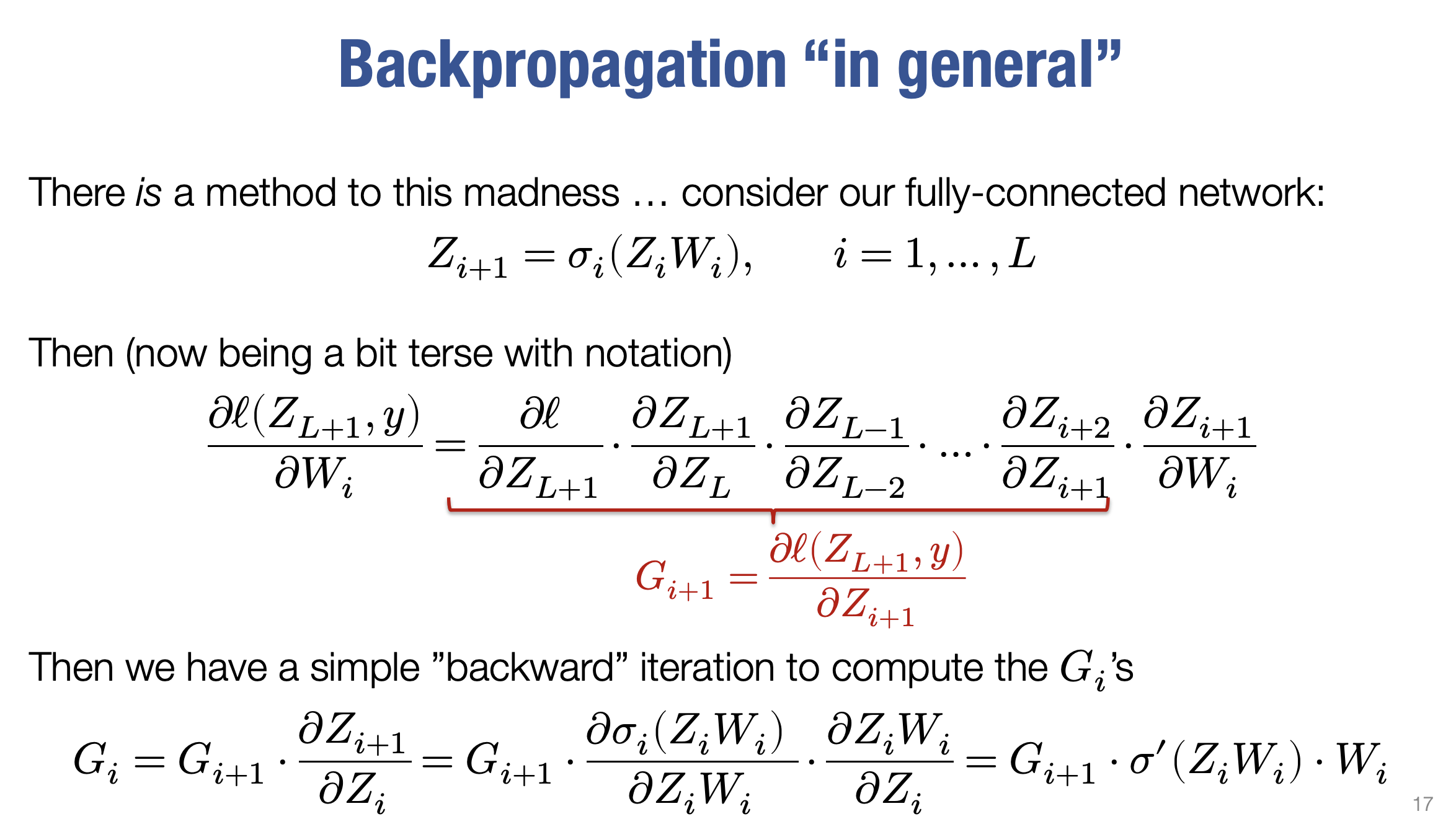

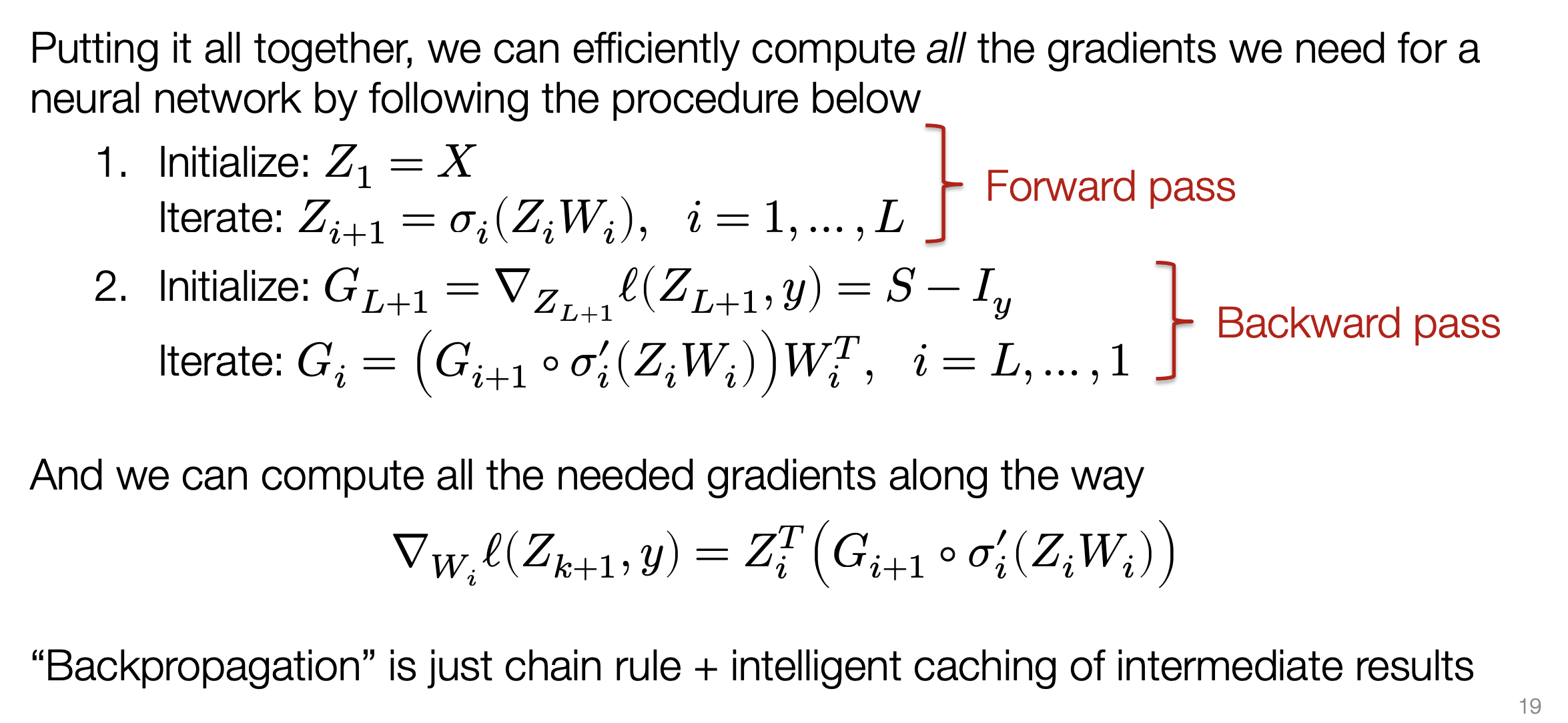

5. General L-Layer Backpropagation 🔄

Purpose 🎯

An efficient algorithm for computing gradients of the loss function with respect to network parameters.

“Backpropagation is just the chain rule + intelligent caching of intermediate results.”

Process 🛠️

- Forward Pass: Compute layer activations sequentially.

- Backward Pass: Compute gradients using the chain rule: \([ G_{i} = G_{i+1} \cdot \frac{\partial Z_{i+1}}{\partial Z_{i}} ]\)

Backpropagation in $( L )$-layer neural networks generalizes the process used for simpler networks. The main idea is to break down gradient computations into smaller, manageable steps using the chain rule and efficient caching.

Forward Pass 🔄

- Initialization: The first layer output, $( Z_1 )$, is set to the input data, $( X )$.

-

Subsequent Layers: For $( i = 1, \dots, L )$, compute: \([ Z_{i+1} = \sigma(Z_i W_i) ]\)

where:

- $( Z_i )$: Activations of the $( i )$-th layer.

- $( W_i )$: Weight matrix of the $( i )$-th layer.

- $( \sigma )$: Nonlinear activation function applied elementwise.

- Final Output: The network’s output is $( Z_{L+1} )$, which depends on the architecture and choice of activation functions.

Backward Pass 🔙

- Start with Output Gradient: Compute the gradient of the loss with respect to the output layer:

\([

G_{L+1} = S - I_y

]\)

where:

- $( S )$: Softmax of the network’s predictions.

- $( I_y )$: One-hot encoding of the true labels.

- Iterative Gradients: For $( i = L, \dots, 1 )$, compute:

\([

G_i = \big(G_{i+1} \circ \sigma'(Z_i W_i)\big) W_i^\top

]\)

where:

- $( G_i )$: Backward gradient for the $( i )$-th layer.

- $( \circ )$: Elementwise multiplication.

- $( \sigma’(Z_i W_i) )$: Derivative of the activation function applied elementwise.

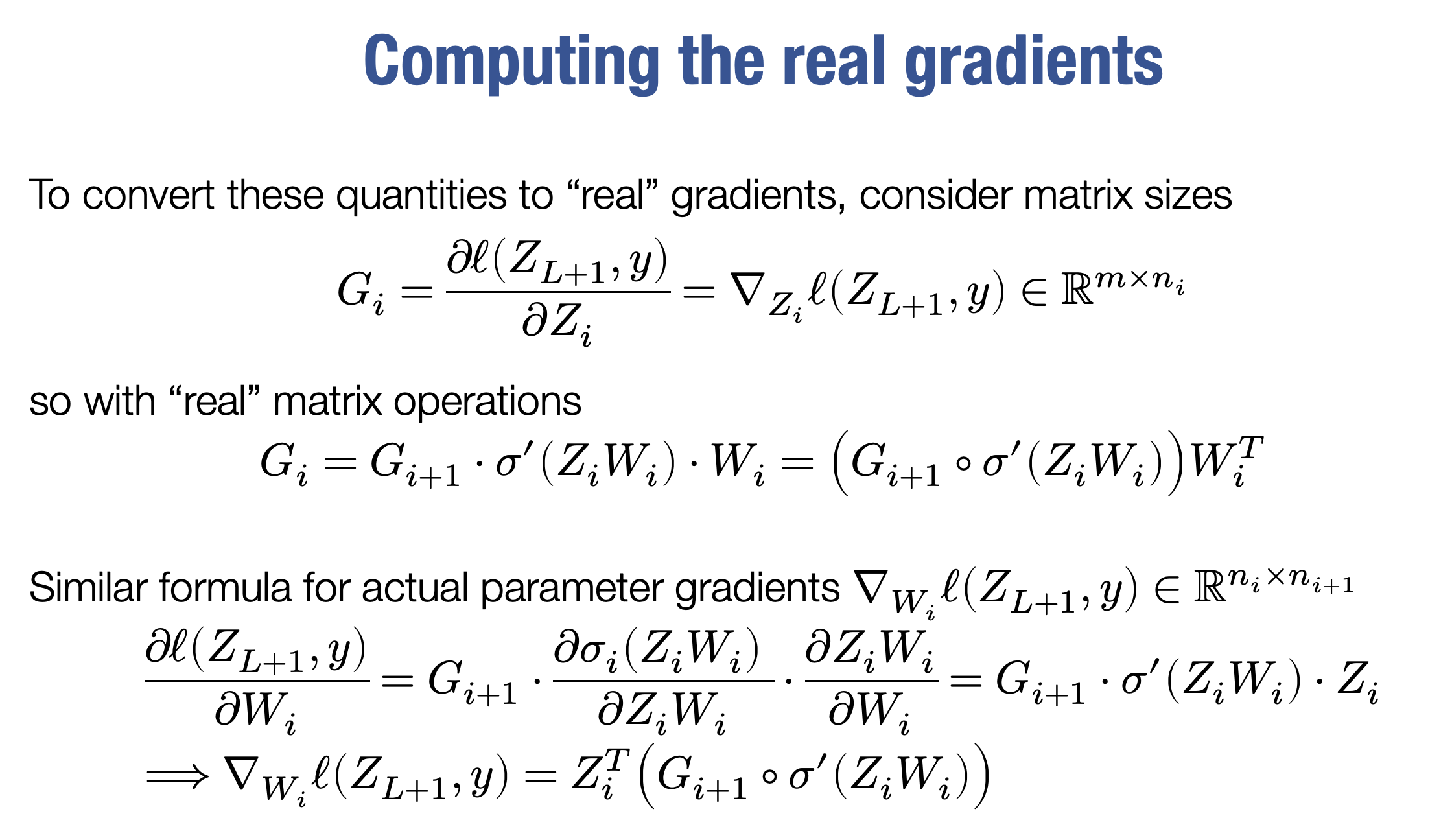

Computing Parameter Gradients 📐

During the backward pass, calculate the gradients for each weight matrix: \([ \nabla_{W_i} \ell(Z_{L+1}, Y) = Z_i^\top \big(G_{i+1} \circ \sigma'(Z_i W_i)\big) ]\)

Key Components:

- Incoming Gradient: $( G_{i+1} )$, the gradient from the subsequent layer.

- Local Derivative: $( \sigma’(Z_i W_i) )$, the activation function’s derivative.

- Previous Layer Output: $( Z_i )$, the activations from the previous layer.

Vector-Jacobian Product 📊

- The gradient computation involves a vector Jacobian product, where:

- The “vector” is $( G_{i+1} )$.

- The “Jacobian” is the matrix of partial derivatives of the layer’s output with respect to its weights.

Role of Caching 🗂️

- Efficiency: Intermediate results from the forward pass, $( Z_i )$, are cached.

- Usage: These results are reused during the backward pass to compute gradients, avoiding redundant calculations.

Implications ⚡

- Memory Tradeoff: Storing intermediate activations $( Z_i )$ increases memory usage, especially in deep networks and large datasets.

- Efficiency: Backpropagation computes all gradients in a single forward and backward pass.

Conclusion ✅

- Backpropagation for $( L )$-layer networks is a foundational concept for training deep models.

- By modularizing gradient computation into forward and backward passes, it leverages:

- The chain rule.

- Caching for efficiency.

- These principles extend to advanced techniques like automatic differentiation.

“We reuse intermediate results to compute all the gradients efficiently, exploiting the recursive structure of the network.”

6. Glossary of Key Terms 📚

- Logit: The raw, unnormalized output of a neuron before any activation function is applied.

- Feature Mapping ($( \phi )$): Transforms input features into a new feature space for better learning.

- Activation Function ($( \sigma )$): Introduces non-linearity. Examples: ReLU, sigmoid, tanh.

- Hidden Layer: Layers between input and output, extracting complex features.

- Universal Approximation Theorem: States that a single hidden layer can approximate any continuous function with enough neurons.

- Backpropagation: Algorithm for gradient computation during neural network training.

- Forward Pass: Computes predictions by passing input through the network.

- Backward Pass: Computes gradients by propagating errors backward.

- Vector-Jacobian Product: Combines backward gradients with local layer derivatives.

- Automatic Differentiation: Generalizes backpropagation for arbitrary models.