📚 Softmax Regression

This document reviews the main themes and key takeaways from Deep Learning Systems: Algorithms and Implementation** at Carnegie Mellon University, taught by J. Zico Kolter and Tianqi Chen.

1. 🛠️ Ingredients of a Machine Learning Algorithm:

- Hypothesis Function: Maps input features to output predictions.

- Loss Function: Quantifies the error between predicted and actual values.

- Optimization Method: Finds the parameters that minimize the loss function.

🎯 Softmax Regression Specifics:

- Multi-class classification setting: For problems where the output belongs to one of $( k )$ classes.

- Input data ($( x )$): Represented as $( n )$-dimensional vectors.

- Output data ($( y )$): Scalar values ranging from 1 to $( k )$, representing class labels.

- Training set size ($( m )$): Number of examples.

Example: MNIST digit classification

- $( n = 784 )$ (28 x 28 pixel images)

- $( k = 10 )$ (digits 0 through 9)

- $( m = 60,000 )$

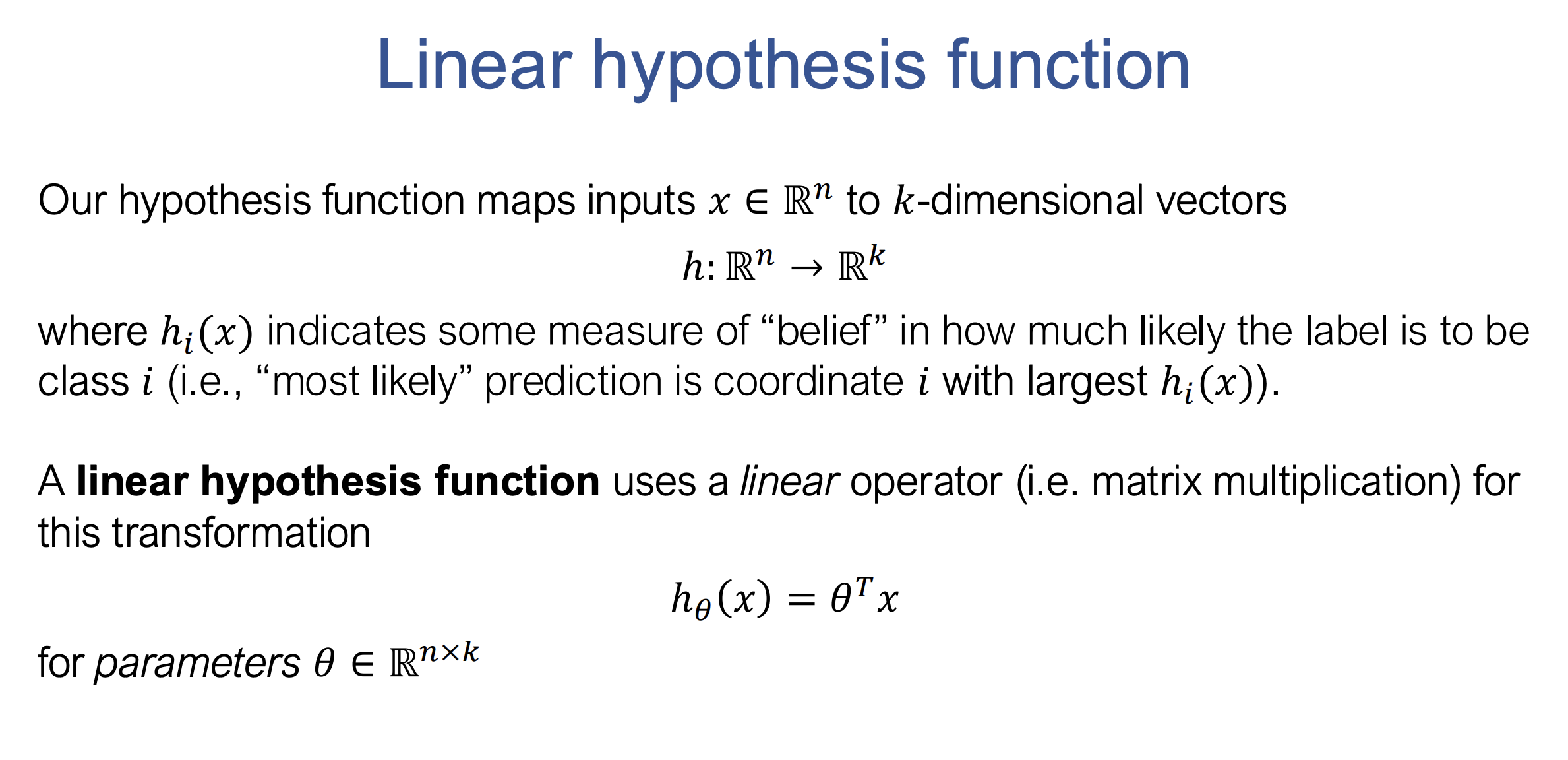

🧮 Hypothesis Function for Softmax Regression:

Maps $( n )$-dimensional input vectors to $( k )$-dimensional output vectors.

- Linear Hypothesis:

Softmax From Scratch

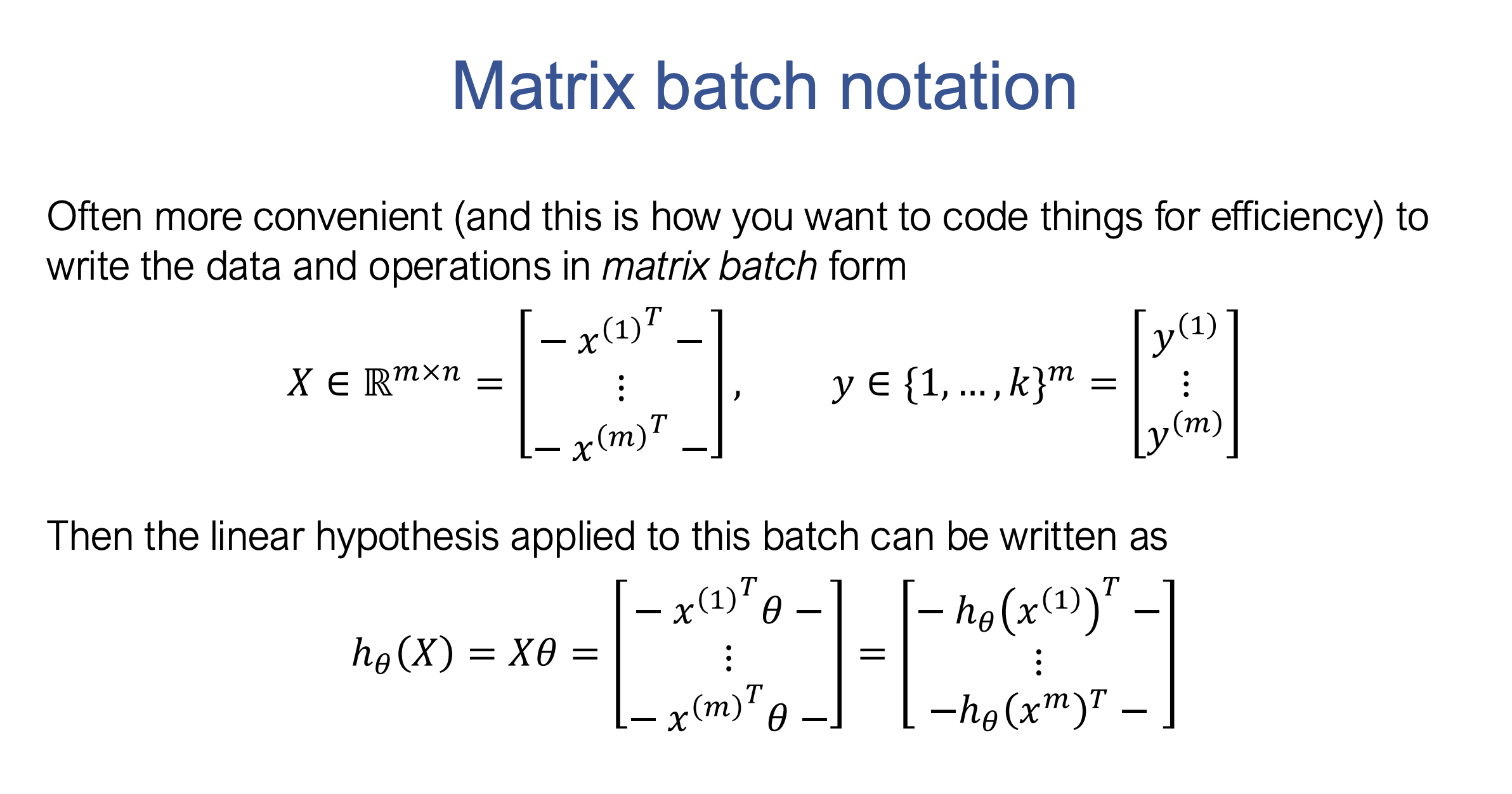

Matrix Batch Notation

Efficient representation using matrices:

- Design Matrix ($( X )$): A matrix of size $( m \times n )$, where each row represents a training example.

- Target Vector ($( y )$): A vector of size $( m )$, containing the class labels for each example.

Explanation:

- $( m )$: Number of examples (samples) in the training set.

- $( n )$: Number of features (dimensions) in each input example.

- $( k )$: Number of classes in the classification problem.

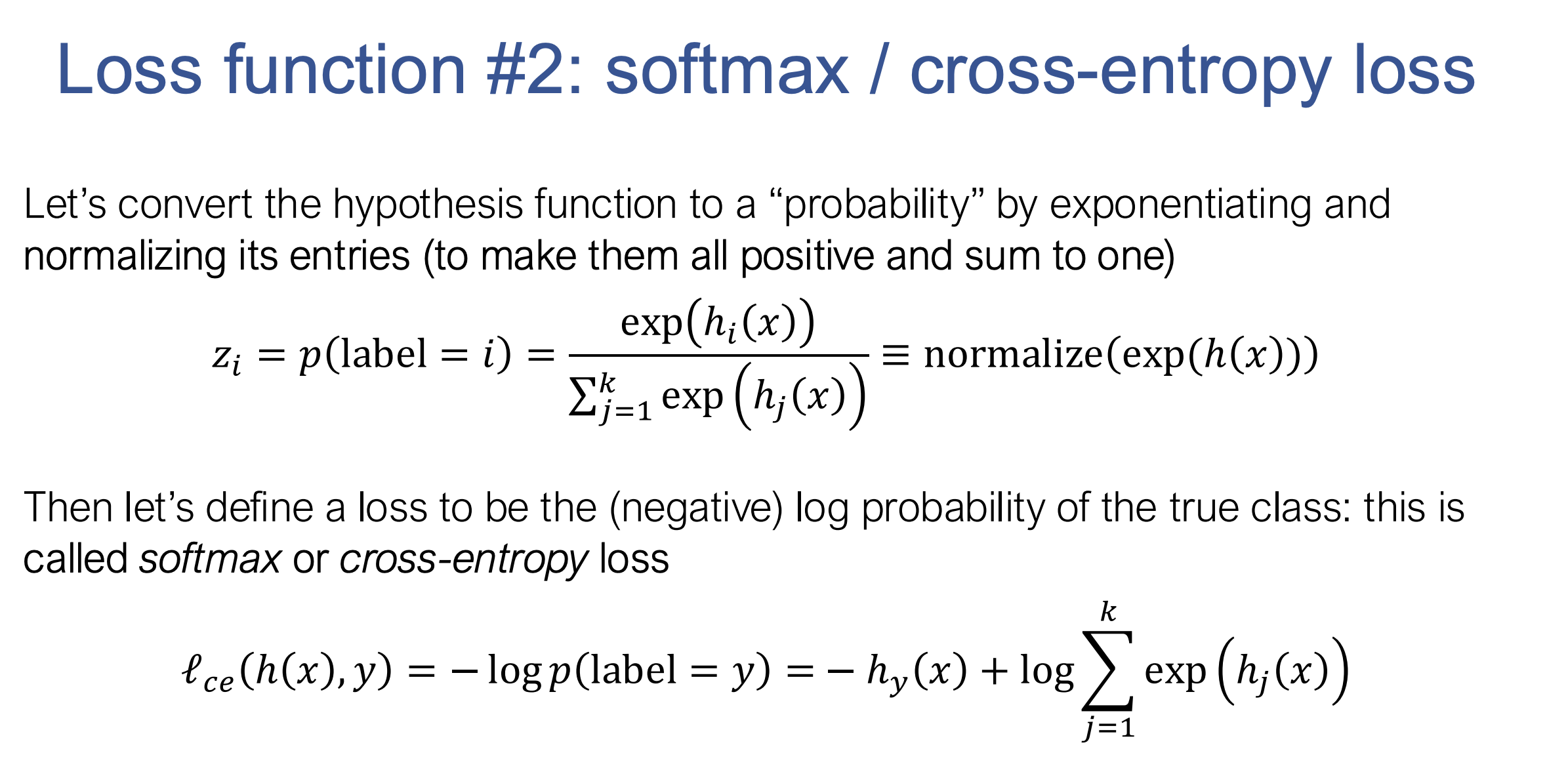

📉 Loss Functions:

- Classification Error (Accuracy): Indicates mistakes but is non-differentiable (not suitable for optimization).

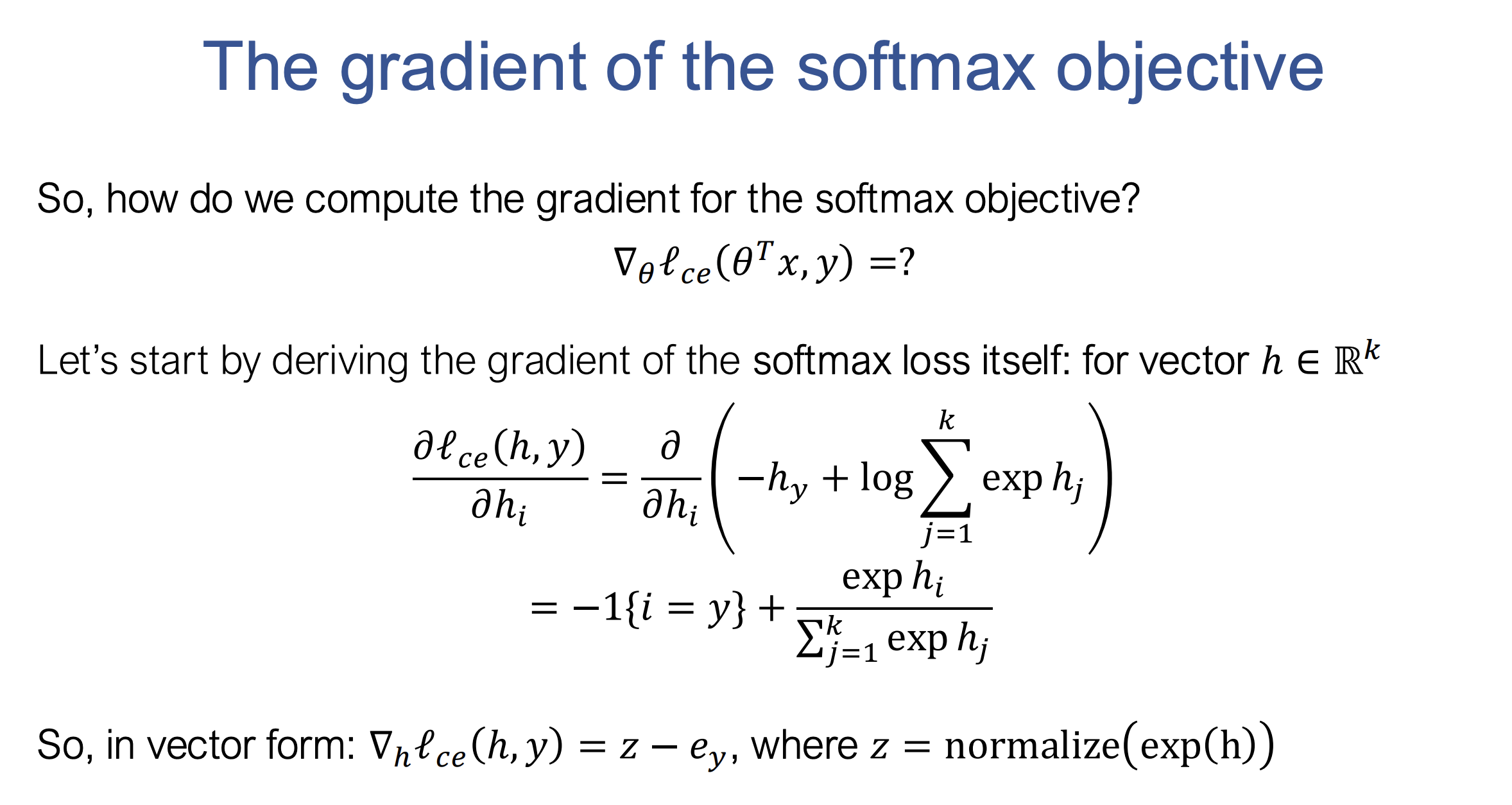

- Softmax/Cross-Entropy Loss: Converts logits to probabilities and measures the negative log probability of the true class: \(\ell_c(h(x), y) = -h_y(x) + \log \left( \sum_{j=1}^k \exp(h_j(x)) \right)\)

def softmax_loss(Z, y):

""" Return softmax loss.

Args:

Z (np.ndarray[np.float32]): 2D numpy array of shape

(batch_size, num_classes), containing the logit predictions for

each class.

y (np.ndarray[np.uint8]): 1D numpy array of shape (batch_size, )

containing the true label of each example.

Returns:

Average softmax loss over the sample.

"""

# Compute the log-sum-exp term

log_sum_exp = np.log(np.sum(np.exp(Z), axis=1))

# Compute the log probability for the correct class

correct_class_log_prob = Z[np.arange(Z.shape[0]), y]

# Compute the softmax loss

loss = np.mean(log_sum_exp - correct_class_log_prob)

return loss

The above given function computes the softmax loss for a batch of data. However, the computation of the log_sum_exp term can lead to numerical instability, especially when the values in Z (the logits) are very large or very small. To address this, the log-sum-exp trick is commonly applied, which involves subtracting the maximum logit value for each example before applying the exponential function. This ensures numerical stability without changing the result of the computation.

def softmax_loss_improved(Z, y):

""" Improved softmax loss.

Args:

Z (np.ndarray[np.float32]): 2D numpy array of shape

(batch_size, num_classes), containing the logit predictions for

each class.

y (np.ndarray[np.uint8]): 1D numpy array of shape (batch_size, )

containing the true label of each example.

Returns:

Average softmax loss over the sample.

"""

# Stabilize logits by subtracting the max logit from each row

Z_max = np.max(Z, axis=1, keepdims=True)

Z_stable = Z - Z_max

# Compute the log-sum-exp term using the stabilized logits

log_sum_exp = np.log(np.sum(np.exp(Z_stable), axis=1)) + np.squeeze(Z_max)

# Compute the log probability for the correct class

correct_class_log_prob = Z[np.arange(Z.shape[0]), y]

# Compute the softmax loss

loss = np.mean(log_sum_exp - correct_class_log_prob)

return loss

The Log-Sum-Exp Trick:

The goal of the log-sum-exp trick is to make the computation of \(\log\left(\sum_{j=1}^k e^{Z_j}\right)\)

numerically stable while preserving its mathematical equivalence.

-

Original Expression: \(\log \left( \sum_{j=1}^k e^{Z_j} \right)\)

-

Stabilization: Subtract the maximum value from Z: \(( Z_{\text{max}} = \max_j Z_j )\)

This does not change the result because: \(\log \left( \sum_{j=1}^k e^{Z_j} \right) = \log \left( \sum_{j=1}^k e^{Z_j - Z_{\text{max}}} \cdot e^{Z_{\text{max}}} \right)\)

-

Logarithm Property: Using the property \(( \log(a \cdot b) = \log(a) + \log(b) )\), we rewrite: \(= Z_{\text{max}} + \log \left( \sum_{j=1}^k e^{Z_j - Z_{\text{max}}} \right)\)

In the Code:

Z_stable = Z - Z_maxstabilizes the computation.np.log(np.sum(np.exp(Z_stable), axis=1))computes the logarithm of the scaled sum.- Adding

+ np.squeeze(Z_max)restores the original value, making the stabilized version equivalent to the mathematically correct log-sum-exp.

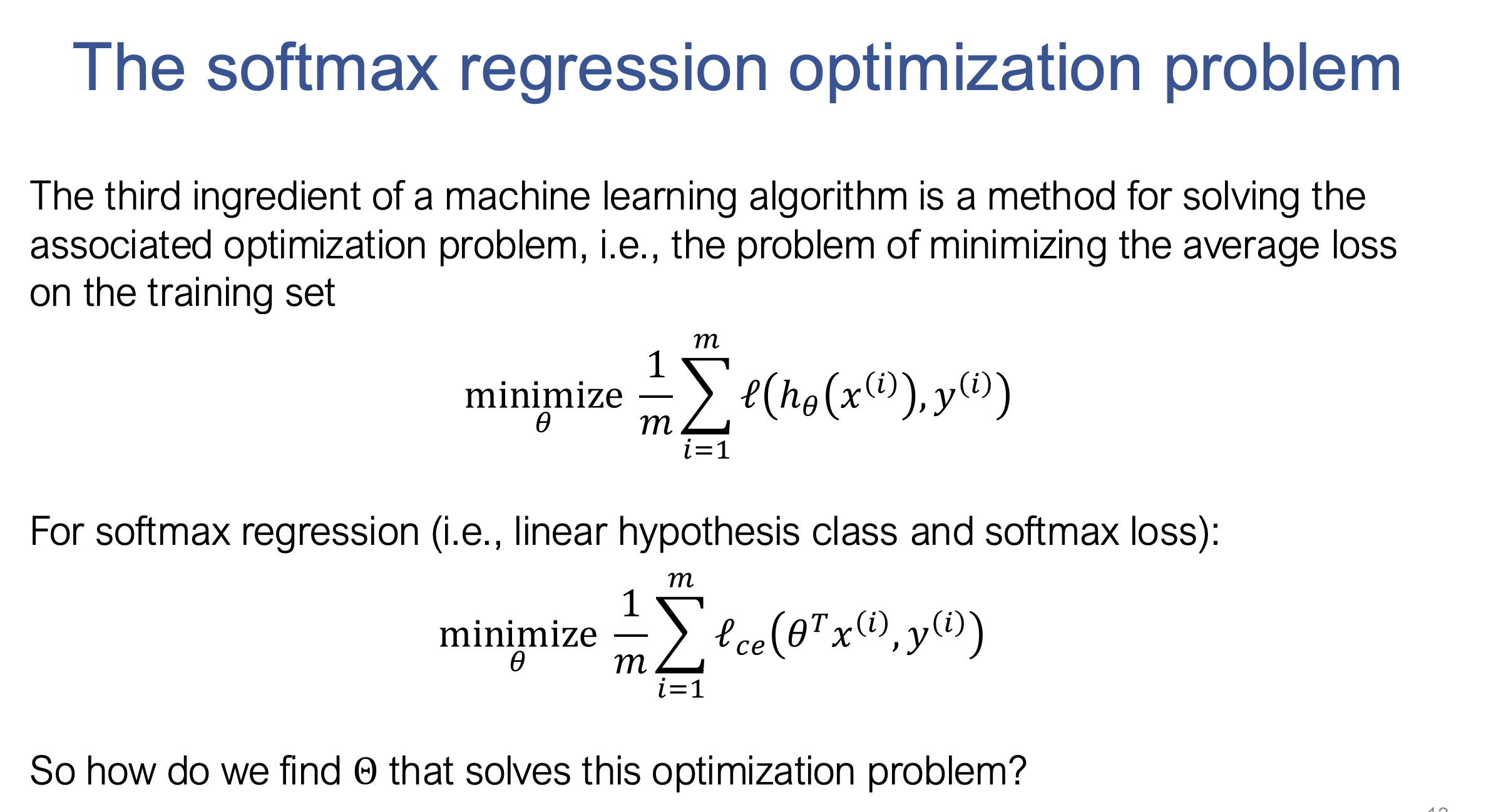

3. 🔧 Optimization:

- Gradient Descent: Gradient descent updates parameters by stepping in the direction of the negative gradient:

Where:

- ( \alpha ): Learning rate (step size), controls the size of the step taken in the direction of the negative gradient.

- ( \nabla_\theta ): Gradient of the loss function with respect to the parameters ( \theta ).

- Stochastic Gradient Descent (SGD):

- Uses mini-batches instead of the whole dataset for updates.

- Faster and more memory-efficient.

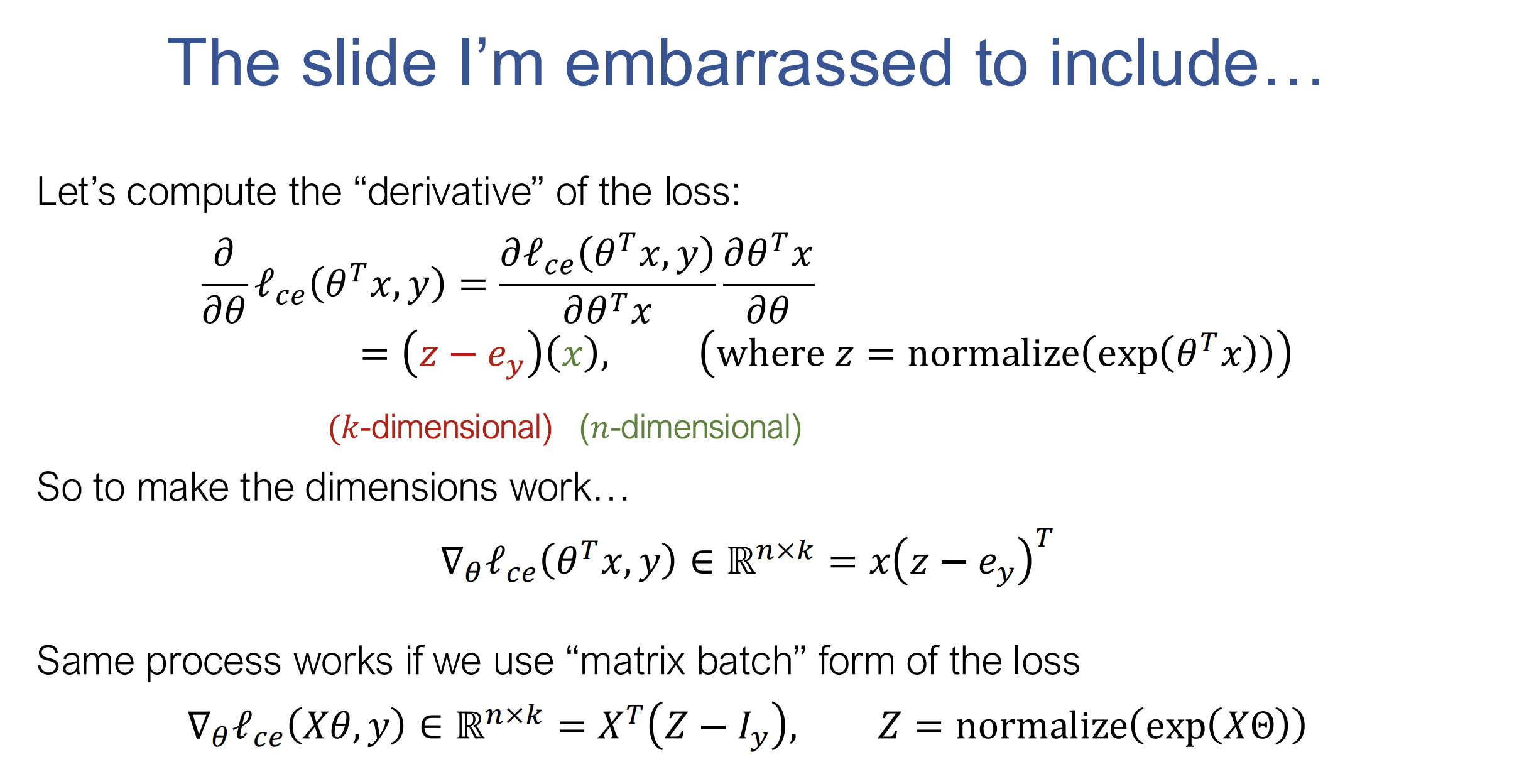

4. 🔢 Computing the Gradient:

- Pretend everything is scalar and use the chain rule.

- Adjust dimensions for correctness.

- Verify numerically.

def softmax_regression_epoch(X, y, theta, lr = 0.1, batch=100):

""" Run a single epoch of SGD for softmax regression on the data, using

the step size lr and specified batch size. This function should modify the

theta matrix in place, and you should iterate through batches in X _without_

randomizing the order.

Args:

X (np.ndarray[np.float32]): 2D input array of size

(num_examples x input_dim).

y (np.ndarray[np.uint8]): 1D class label array of size (num_examples,)

theta (np.ndarrray[np.float32]): 2D array of softmax regression

parameters, of shape (input_dim, num_classes)

lr (float): step size (learning rate) for SGD

batch (int): size of SGD minibatch

Returns:

None

"""

num_examples = X.shape[0] # Number of examples

num_classes = theta.shape[1] # Number of classes

for start in range(0, num_examples, batch):

# Batch indices

end = min(start + batch, num_examples)

X_batch = X[start:end]

y_batch = y[start:end]

# Compute logits

logits = np.dot(X_batch, theta) # Shape: (batch_size, num_classes)

# Compute softmax probabilities

exp_logits = np.exp(logits - np.max(logits, axis=1, keepdims=True)) # Stability fix

probs = exp_logits / np.sum(exp_logits, axis=1, keepdims=True) # Shape: (batch_size, num_classes)

# Create one-hot encoded labels

Iy = np.zeros_like(probs)

Iy[np.arange(y_batch.size), y_batch] = 1

# Compute gradient

g = np.dot(X_batch.T, (probs - Iy)) / X_batch.shape[0] # Shape: (input_dim, num_classes)

# Update theta in place

theta -= lr * g

5. 📝 Short Answer Quiz:

- What is the role of the hypothesis function in machine learning?

- Why is classification error not suitable for optimization?

- How does the softmax function transform outputs?

- Describe the basic idea of gradient descent.

- What is the purpose of the learning rate (( \alpha ))?

- Why use SGD instead of standard gradient descent?

- How does SGD handle large datasets?

- Describe the matrix batch notation in softmax regression.

- Outline the steps of the softmax regression algorithm.

- Why check matrix dimensions when computing gradients?

6. 🗝️ Answer Key:

- Maps input features to predictions, defining the model’s mechanism.

- It’s not differentiable; gradient-based methods need gradients.

- Converts logits to probabilities, enabling probabilistic interpretation.

- Iteratively updates parameters to minimize loss using negative gradients.

- Controls step size; smaller values ensure stability, larger ones speed up convergence.

- It’s computationally cheaper and allows frequent updates using mini-batches.

- Processes data in small subsets, reducing memory and computational costs.

- Represents data as a design matrix and target labels as a vector for efficiency.

- Iterates over batches, computes gradients, and updates parameters.

- Ensures mathematical correctness and avoids computation errors.

📖 Glossary:

- Hypothesis Function: Maps inputs to outputs.

- Loss Function: Measures prediction error.

- Optimization Method: Minimizes the loss function.

- Multi-class Classification: Outputs belong to one of $( k )$ classes.

- Linear Hypothesis Class: Linear functions in parameters.

- Matrix Batch Notation: Efficient matrix representation of data.

- Design Matrix: Rows are training examples.

- Target Vector: Contains example labels.

- Classification Error: Indicates mistakes.

- Softmax Loss: Measures true class probability.

- Gradient Descent: Optimizes parameters iteratively.

- Learning Rate ($( \alpha )$): Controls update size.

- SGD: Mini-batch gradient updates.

- Gradient: Points in the steepest ascent direction.

- Partial Derivative: Rate of change wrt one variable.

- Chain Rule: Derivative of composite functions.

- Automatic Differentiation: Automates gradient computation.

- MNIST Dataset: Handwritten digit dataset.

- Logits: Raw model outputs before softmax.

- One-hot Encoding: Vectorized label representation.

- Unit Basis Vector: Vector with a single 1.

- Softmax Operator: Converts logits to probabilities.