TinyML LLM Post-Training

Modern AI models are becoming increasingly large, demanding substantial computational resources and memory. This creates a gap between the computational demands of these models and the available hardware capabilities. Pruning addresses this gap by reducing model size, memory footprint, and ultimately, energy consumption.

This document summarizes key concepts and techniques for post-training Large Language Models (LLMs). The focus is on aligning model outputs with human preferences, optimizing performance, and enabling multi-modal capabilities.

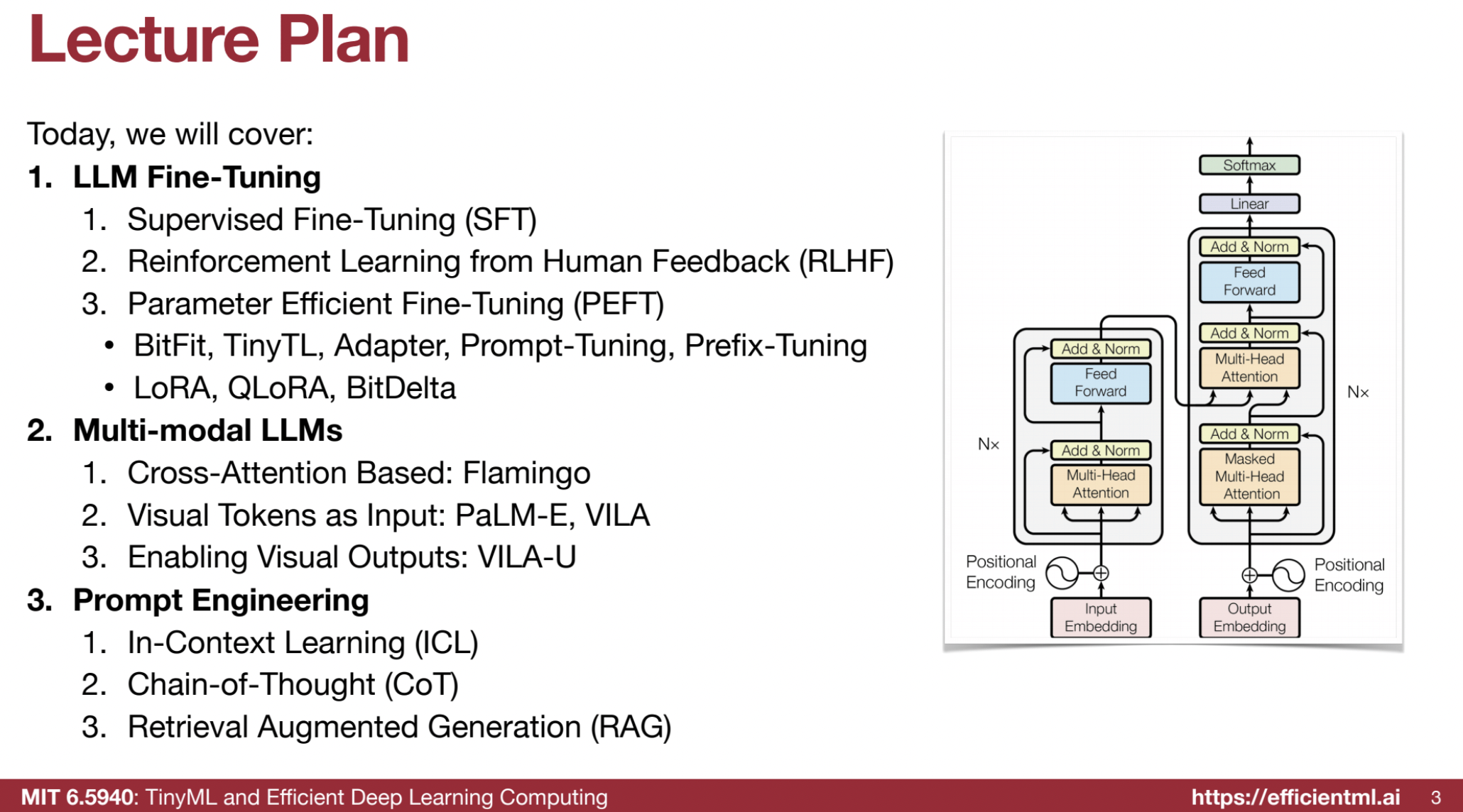

📚 1. LLM Fine-Tuning

The post-training phase adapts pre-trained LLMs to specific tasks and preferences. Here are the key fine-tuning techniques:

🎯 1.1 Supervised Fine-Tuning (SFT)

- Purpose: Align the LLM’s outputs with human preferences by training on a dataset of prompts paired with desired responses.

- Mechanism: Human-labeled responses are used as “ground truth,” and the model learns to match these.

- Example:

- Base LLM: “Try resetting your password using the forgot password option.”

- SFT Model: “I’m sorry to hear you’re having trouble logging in. You can reset your password using the forgot password option on the login page.”

- Key Idea: SFT performs language modeling on the desired responses by maximizing their log-likelihood (the training loss is the negative of this quantity): $[ L(U) = \sum_{i} \log P(u_i \mid u_0, \dots, u_{i-1}; \Theta) ]$
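As a minimal sketch of the SFT objective, the snippet below sums per-token log-probabilities and negates their mean to obtain a loss. The function names and the example probabilities are hypothetical; a real trainer would obtain the probabilities from the model's softmax output.

```python
import math

def sequence_log_likelihood(token_probs):
    """Sum of log P(u_i | u_0..u_{i-1}) over the target tokens."""
    return sum(math.log(p) for p in token_probs)

def sft_loss(token_probs):
    """Mean negative log-likelihood, the quantity gradient descent minimizes."""
    return -sequence_log_likelihood(token_probs) / len(token_probs)

# Hypothetical probabilities the model assigns to each desired-response token.
probs = [0.5, 0.25, 0.8]
print(sequence_log_likelihood(probs))
print(sft_loss(probs))
```

Raising the probability of any desired token increases the log-likelihood and lowers the loss.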

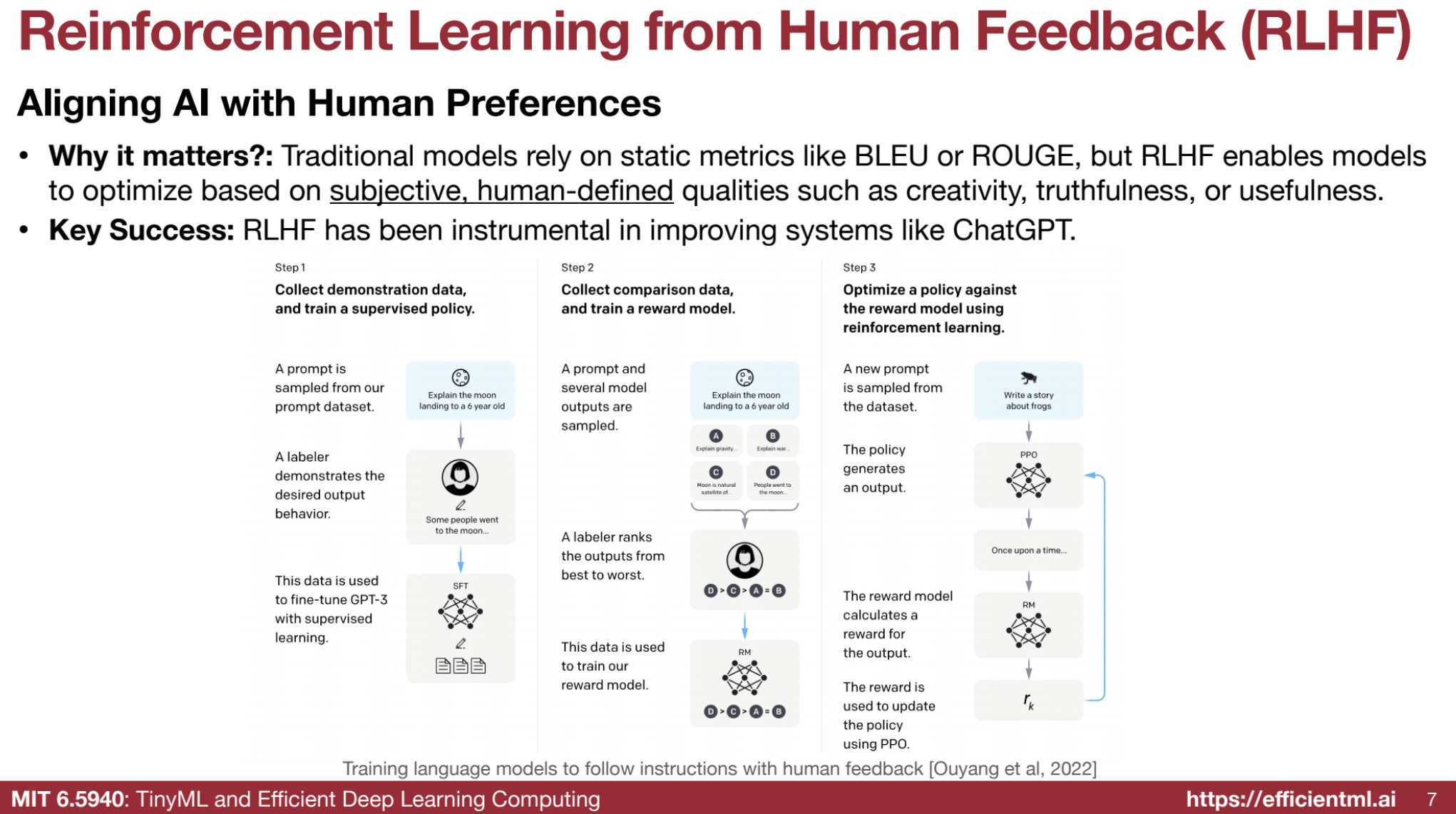

🤝 1.2 Reinforcement Learning from Human Feedback (RLHF)

- Purpose: Align AI with human-defined subjective qualities like creativity, truthfulness, and usefulness.

- Process:

- Supervised Fine-Tuning (SFT): Train on labeled outputs.

- Reward Model: Train a model to rank outputs based on human preferences.

- Policy Optimization: Use reinforcement learning to maximize rewards while maintaining similarity to the original model.

- Key Idea: RLHF optimizes for subjective qualities, addressing limitations of static metrics like BLEU or ROUGE.

⭐ Creating a Reward Model

- Purpose: Train a model to predict how well responses align with human preferences.

- Process:

- Collect comparison data by ranking multiple responses to a single prompt (best to worst).

- Example: For “Explain the moon landing to a six-year-old,” simpler answers score higher than complex or irrelevant ones.

- Training Formula:

- $( \max_{r_\theta} \mathbb{E}_{(x, y_{\text{win}}, y_{\text{lose}}) \sim \mathcal{D}} \left[ \log \sigma\left( r_\theta(x, y_{\text{win}}) - r_\theta(x, y_{\text{lose}}) \right) \right] )$

- $( r_\theta )$: reward model.

- $( x )$: prompt.

- $( y_{\text{win}}, y_{\text{lose}} )$: preferred and less preferred responses.

- $( \mathcal{D} )$: dataset of ranked responses.

- $( \sigma )$: sigmoid function.

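The pairwise objective can be illustrated with a small sketch that takes already-computed reward scores. The helper names are hypothetical, and the scores stand in for the outputs of a reward model on the preferred and less preferred responses.

```python
import math

def log_sigmoid(z):
    """Numerically stable log(sigmoid(z))."""
    if z >= 0:
        return -math.log1p(math.exp(-z))
    return z - math.log1p(math.exp(z))

def pairwise_reward_loss(score_pairs):
    """Negative mean of log sigma(r_win - r_lose) over (r_win, r_lose) pairs."""
    total = sum(log_sigmoid(r_win - r_lose) for r_win, r_lose in score_pairs)
    return -total / len(score_pairs)

# Hypothetical reward scores for two comparisons.
print(pairwise_reward_loss([(2.0, 0.5), (1.0, 1.5)]))
```

Minimizing this loss is equivalent to maximizing the expectation in the formula: the loss falls as the preferred response's score rises above the other's.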

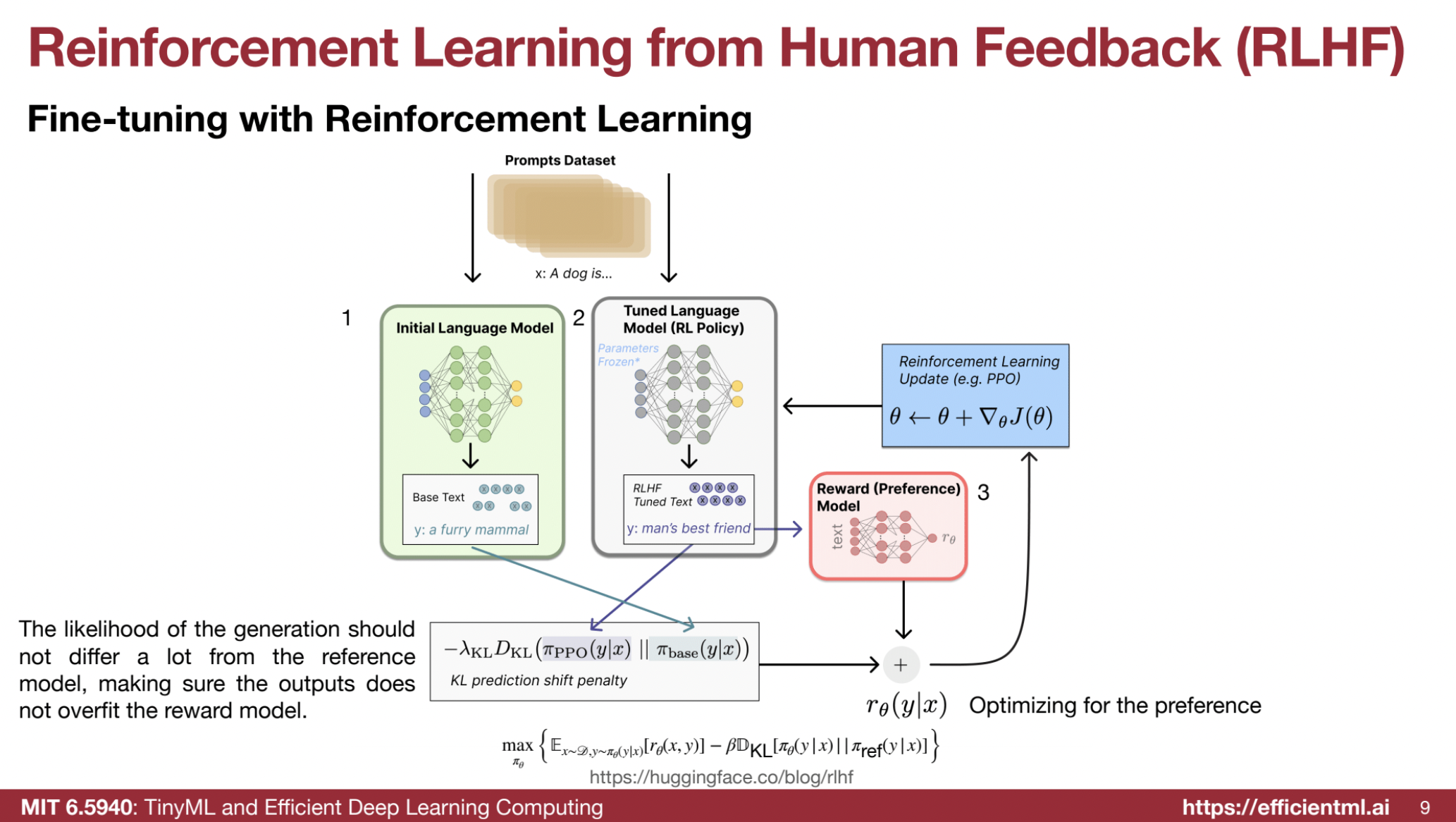

🤖 Fine-Tuning with Reinforcement Learning (PPO)

- Objective: Further optimize the model using rewards from the reward model.

- Policy Optimization: Use Proximal Policy Optimization (PPO) to balance alignment and originality.

- Formula:

- $( \max_{\pi_\theta} \mathbb{E}_{x \sim \mathcal{D},\, y \sim \pi_\theta(y \mid x)} \left[ r_\theta(x, y) \right] - \beta \, \mathcal{D}_{KL}\left[ \pi_\theta(y \mid x) \,\|\, \pi_{\text{ref}}(y \mid x) \right] )$

- $( \pi_\theta )$: policy model.

- $( r_\theta(x, y) )$: reward for response $( y )$.

- $( \beta )$: KL divergence penalty weight.

- $( \mathcal{D}_{KL} )$: Kullback-Leibler divergence (distance from the reference model).

🛠️ PPO Steps:

- Generate Experience: Use the policy model to generate responses.

- Calculate Rewards:

- $( \text{reward} = \text{reward_score} - \text{kl_ctl} \times (\text{log_probs} - \text{ref_log_probs}) )$

- $( \text{kl_ctl} )$: KL divergence weight.

- Compute Advantages and Returns:

- Use Generalized Advantage Estimation (GAE).

- Calculate Actor Loss:

- $( \text{pg_loss} = \max(-\text{advantages} \times \text{ratio}, -\text{advantages} \times \text{clip}(\text{ratio}, 1-\text{cliprange}, 1+\text{cliprange})) )$

- Calculate Critic Loss:

- $( \text{vf_loss} = 0.5 \times \max((\text{values} - \text{returns})^2, (\text{values_clipped} - \text{returns})^2) )$

- Update Models: Adjust the policy (actor) and value (critic) models using gradient descent.
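The reward shaping and advantage steps can be sketched as follows. This is an illustration under stated assumptions rather than a reference implementation: the KL penalty is applied per token, the scalar reward score is added at the final token, and the defaults for `kl_ctl`, `gamma`, and `lam` are hypothetical.

```python
def shaped_rewards(reward_score, log_probs, ref_log_probs, kl_ctl=0.1):
    """Per-token KL penalty, with the reward model's score added at the last token."""
    rewards = [-kl_ctl * (lp - ref_lp) for lp, ref_lp in zip(log_probs, ref_log_probs)]
    rewards[-1] += reward_score
    return rewards

def gae(rewards, values, gamma=1.0, lam=0.95):
    """Generalized Advantage Estimation over one response; returns (advantages, returns)."""
    advantages = [0.0] * len(rewards)
    last_adv = 0.0
    for t in reversed(range(len(rewards))):
        # The value after the final token is taken to be zero.
        next_value = values[t + 1] if t + 1 < len(values) else 0.0
        delta = rewards[t] + gamma * next_value - values[t]
        last_adv = delta + gamma * lam * last_adv
        advantages[t] = last_adv
    returns = [a + v for a, v in zip(advantages, values)]
    return advantages, returns

rewards = shaped_rewards(1.0, [-1.0, -0.5], [-1.2, -0.5])
print(gae(rewards, values=[0.3, 0.6]))
```

The advantages feed the actor loss and the returns feed the critic loss.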

🎭 1. Actor Model (Policy Model)

- Role: The language model being optimized. Takes prompts as input and generates responses.

- Training Details:

- Input Data: Prompts from the training dataset.

- Objective: Maximize reward while limiting policy deviation using policy loss:

- $( \text{pg_loss} = \max(-\text{advantages} \times \text{ratio}, -\text{advantages} \times \text{clip}(\text{ratio}, 1-\text{cliprange}, 1+\text{cliprange})) )$

- Ratio: Probability of the response under the new policy divided by its probability under the old policy.

- Cliprange: Hyperparameter limiting changes.

- Weights: Updated during training to maximize rewards.

- Training Process:

- Iteratively trained via PPO.

- Generates responses, calculates actor loss, backpropagates, and updates weights.

- Key Concept:

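- Old Log Probs: Log probabilities recorded from the actor when the experience was generated; they form the denominator of the ratio. Policy drift relative to the reference model is limited separately by the KL penalty.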

- Training is sequential within batches.
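A minimal sketch of the clipped actor loss, written in the standard PPO form where the maximum is taken over the two negated, advantage-weighted terms (this matters when advantages are negative). The function names and the `cliprange` default are hypothetical.

```python
import math

def clip(x, low, high):
    return max(low, min(x, high))

def actor_loss(log_probs, old_log_probs, advantages, cliprange=0.2):
    """Mean clipped policy-gradient loss over tokens."""
    losses = []
    for lp, old_lp, adv in zip(log_probs, old_log_probs, advantages):
        ratio = math.exp(lp - old_lp)  # new probability / old probability
        unclipped = -adv * ratio
        clipped = -adv * clip(ratio, 1 - cliprange, 1 + cliprange)
        losses.append(max(unclipped, clipped))
    return sum(losses) / len(losses)

print(actor_loss([-0.9, -1.1], [-1.0, -1.0], [0.5, -0.2]))
```

With a positive advantage, the clip caps how much a ratio above `1 + cliprange` can reduce the loss; with a negative advantage, the unclipped term dominates and keeps penalizing the policy.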

📊 2. Critic Model (Value Model)

- Role: Evaluates responses by predicting a value (expected cumulative reward) for each state.

- Training Details:

- Input Data: Prompts, responses, and rewards from the reward model.

- Objective: Minimize the difference between predicted and actual returns using:

- $( \text{vf_loss} = 0.5 \times \max((\text{values} - \text{returns})^2, (\text{values_clipped} - \text{returns})^2) )$

- Weights: Updated to improve value predictions.

- Training Process:

- Iteratively trained.

- Predicts values, calculates critic loss, backpropagates, and updates weights.
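The critic loss can be sketched in the same style. The text does not define `values_clipped`; the sketch assumes it is the new value prediction clipped to within `cliprange_value` of the old prediction, and both that parameter name and its default are hypothetical.

```python
def clip(x, low, high):
    return max(low, min(x, high))

def critic_loss(values, old_values, returns, cliprange_value=0.2):
    """Mean clipped value loss over tokens."""
    losses = []
    for v, old_v, ret in zip(values, old_values, returns):
        # Assumption: clip the new prediction around the old one.
        v_clipped = clip(v, old_v - cliprange_value, old_v + cliprange_value)
        losses.append(0.5 * max((v - ret) ** 2, (v_clipped - ret) ** 2))
    return sum(losses) / len(losses)

print(critic_loss([0.5, 1.4], [0.4, 1.0], [0.6, 1.5]))
```

Taking the maximum of the two squared errors makes the loss pessimistic, which discourages large jumps in the value estimate within one update.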

🌟 3. Reward Model

- Role: Predicts a score indicating alignment of responses with human preferences.

- Training Details:

- Input Data: Comparison data where human labelers rank responses (e.g., preferred vs. dispreferred).

- Objective: Train using:

- $( \text{max}{r\theta} {\mathbb{E}{(x, y{\text{win}}, y_{\text{lose}}) \sim \mathcal{D}} [\log \sigma(r_\theta(x, y_{\text{win}}) - r_\theta(x, y_{\text{lose}}))] } )$

- Weights: Updated during training to improve preference prediction.

- Process:

- Trained prior to PPO fine-tuning.

- Outputs higher scores for preferred responses.

📏 4. Reference Model

- Role: Baseline model to compute KL divergence, preventing drastic policy changes.

- Training Details:

- Input Data: Same prompts as the actor model.

- Objective: No updates during PPO; used to stabilize actor training.

- Weights: Fixed during RLHF.

🔄 Connections and Training Flow

- Initial SFT: Supervised fine-tuning trains the model on prompts and desired responses, creating the initial actor and reference models.

- Reward Model Training: Comparison data trains the reward model to predict scores reflecting human preferences.

- PPO Loop:

- Actor generates responses.

- Reward model scores responses.

- Reference model calculates KL divergence as a penalty.

- $( \text{reward} = \text{reward_score} - \text{kl_ctl} \times (\text{log_probs} - \text{ref_log_probs}) )$

- Critic evaluates responses, computes GAE.

- Actor and critic models are updated iteratively.

- Iteration: Actor and critic models refine through multiple epochs, while the reference model remains static.

- Sequential Processing: Training for actor and critic models in PPO occurs sequentially.

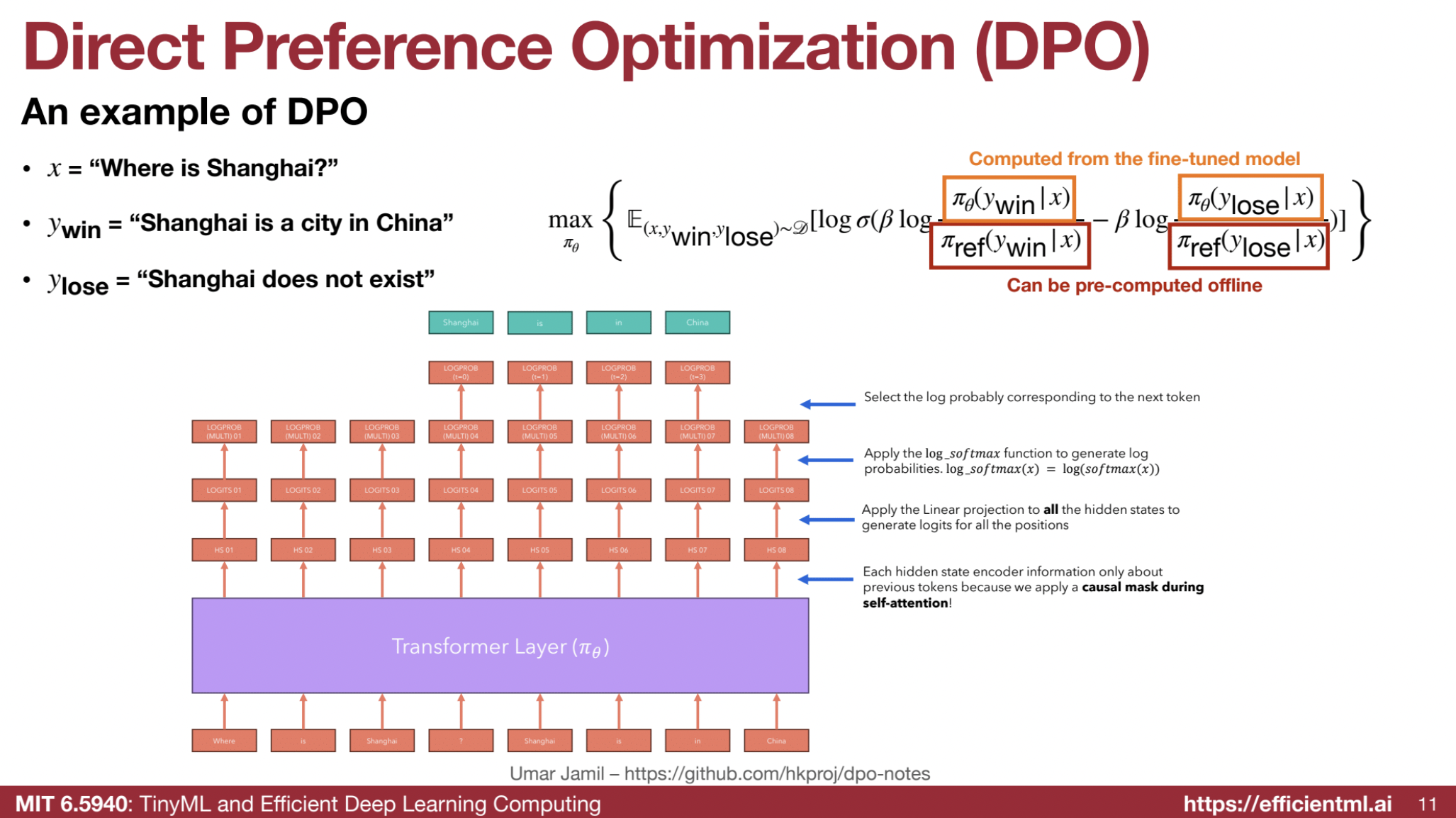

🛠️ 1.3 Direct Preference Optimization (DPO)

- Purpose: Simplifies RLHF by reducing the stages and models involved.

- Mechanism: Uses a single-phase SFT task with a loss function based on winning vs. losing responses.

- Key Idea: Directly optimizes the model for human preference with a simpler loss function.

💡 Core Idea

- Objective: Directly optimize a language model based on human preferences without explicitly training a separate reward model or using RL algorithms.

- Dataset: Consists of preferences with prompts and two responses: a preferred (“winning”) and a dispreferred (“losing”) response.

📈 DPO Objective Function

The DPO objective, whose negative serves as the loss, is:

$[ \max_{\pi_\theta} \mathbb{E}_{(x, y_{\text{win}}, y_{\text{lose}}) \sim \mathcal{D}} \left[ \log \sigma \left( \beta \log \frac{\pi_\theta(y_{\text{win}} | x)}{\pi_\text{ref}(y_{\text{win}} | x)} - \beta \log \frac{\pi_\theta(y_{\text{lose}} | x)}{\pi_\text{ref}(y_{\text{lose}} | x)} \right) \right] ]$

Where:

- $( \pi_\theta )$: Language model being optimized.

- $( \pi_\text{ref} )$: Reference model (pre-fine-tuning).

- $( x )$: Prompt.

- $( y_{\text{win}} )$: Preferred response.

- $( y_{\text{lose}} )$: Dispreferred response.

- $( \beta )$: Hyperparameter controlling model deviation from the reference.

Goal: Increase the log probability of preferred responses while decreasing it for dispreferred ones relative to the reference model.

⚙️ How DPO Works

- Dataset of Preferences: Contains prompts and paired responses (preferred vs. dispreferred).

- Log Probabilities:

- Calculate log probabilities of responses ($( y_{\text{win}} )$, $( y_{\text{lose}} )$) using both fine-tuned ($( \pi_\theta )$) and reference ($( \pi_\text{ref} )$) models.

- Combine prompt and response into a single input string. The language model generates logits, and log probabilities are derived using log softmax.

- Loss Function: Compute the DPO loss as the negative log-sigmoid of the scaled preference margin:

$[ \mathcal{L}_{\text{DPO}} = -\log \sigma \left( \beta \log \frac{\pi_\theta(y_{\text{win}} | x)}{\pi_\text{ref}(y_{\text{win}} | x)} - \beta \log \frac{\pi_\theta(y_{\text{lose}} | x)}{\pi_\text{ref}(y_{\text{lose}} | x)} \right) ]$

- Training: Use gradient descent to minimize this loss, aligning the fine-tuned model with human preferences.
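A minimal sketch of the per-example DPO loss, taking summed sequence log-probabilities as inputs. The function names, argument names, and the `beta` default are hypothetical.

```python
import math

def log_sigmoid(z):
    """Numerically stable log(sigmoid(z))."""
    if z >= 0:
        return -math.log1p(math.exp(-z))
    return z - math.log1p(math.exp(z))

def dpo_loss(policy_win, policy_lose, ref_win, ref_lose, beta=0.1):
    """Negative log-sigmoid of the scaled log-ratio margin.

    Each argument is a summed log-probability log pi(y | x) of a full response.
    """
    margin = beta * (policy_win - ref_win) - beta * (policy_lose - ref_lose)
    return -log_sigmoid(margin)

# Hypothetical log-probabilities: the policy already favors the winning response.
print(dpo_loss(policy_win=-4.0, policy_lose=-8.0, ref_win=-5.0, ref_lose=-7.0))
```

When the policy equals the reference, the margin is zero and the loss is log 2; it falls as the policy shifts probability toward the preferred response relative to the reference.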

🌟 Key Advantages of DPO

- 🧾 Simplicity: Replaces multi-stage RLHF with a single-stage SFT process.

- ⚖️ Stability: The reference model ensures minimal deviation from the original behavior, controlled by $( \beta )$.

- 🚀 Efficiency: Avoids complex RL optimization, making it computationally lightweight.

- 🎯 Direct Optimization: Aligns the model with human preferences without intermediate reward models.

🔍 Comparison with RLHF

- RLHF Workflow:

- Train a reward model from preferences.

- Use Proximal Policy Optimization (PPO) to maximize reward.

- Involves four models in the PPO setup described above: actor (the fine-tuned policy), critic, reward, and reference.

- DPO Workflow:

- Combines reward computation and policy optimization into a single step.

- Involves only two models: reference and fine-tuned.

- RLHF Complexity vs. DPO Simplicity: DPO uses the Bradley-Terry model for preference conversion, optimizing the model in one stage.

🛠️ Implementation Details

- Log Probabilities:

- Combine prompt and responses (preferred and dispreferred) as input to the language model.

- Compute log probabilities for all tokens and sum them.

- Loss Computation: Use log probabilities to calculate the DPO loss.

- Hyperparameter $( \beta )$:

- Controls the degree of change allowed for the language model relative to the reference model.

- Typical values can be found in the Hugging Face documentation.
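The log-probability step can be sketched with plain lists in place of tensors. The sketch assumes a causal language model whose logits at position `i - 1` predict token `i`, and it sums only over response tokens; the function names and toy logits are hypothetical.

```python
import math

def log_softmax(logits):
    """Log-probabilities over the vocabulary for one position."""
    m = max(logits)
    log_z = m + math.log(sum(math.exp(l - m) for l in logits))
    return [l - log_z for l in logits]

def response_log_prob(logits, token_ids, prompt_len):
    """Sum of log-probabilities of the response tokens.

    token_ids is prompt + response; logits[i] holds the vocabulary logits
    produced at position i. Requires prompt_len >= 1.
    """
    total = 0.0
    for i in range(prompt_len, len(token_ids)):
        total += log_softmax(logits[i - 1])[token_ids[i]]
    return total

# Toy vocabulary of size 2: one prompt token followed by two response tokens.
toy_logits = [[0.0, 1.0], [2.0, 0.0], [0.0, 0.0]]
print(response_log_prob(toy_logits, token_ids=[0, 1, 0], prompt_len=1))
```

The same function would be called four times per training example: once for each of the two responses under the policy, and once for each under the reference model.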

💡 1.4 Parameter-Efficient Fine-Tuning (PEFT)

- Purpose: Reduce computational and memory costs by tuning only a subset of parameters.

- Techniques:

- BitFit: Fine-tunes bias terms only.

- Adapters: Adds learnable layers to the model.

- LoRA (Low-Rank Adaptation): Injects low-rank matrices into layers.

- QLoRA: Combines LoRA with quantized base models.

- Key Idea: PEFT methods enable fine-tuning on resource-constrained hardware.

PEFT methods aim to reduce the computational cost and memory footprint of fine-tuning large language models (LLMs). Below is a detailed explanation of each method along with a comparison table. 📊

1. BitFit 🧩

- Concept: Sparse fine-tuning by updating only bias terms, keeping other parameters frozen.

- Mechanism:

- Updates bias parameters in model layers like QKV projection, FFN linear layers, and output projection.

- Advantages:

- 🛑 Reduced Memory: Only 0.1M trainable bias terms in BERT-base (compared to 110M total).

- ⚡ Computational Efficiency: Fewer trainable parameters mean faster fine-tuning.

- 🎯 Competitive Performance: Effective for small/medium datasets.

- Disadvantages:

- 📉 Performance on Large Datasets: May lag behind full fine-tuning.

2. TinyTL (Tiny Transfer Learning) 🪶

- Concept: Adds a lightweight residual branch to the main network, updating only this branch.

- Mechanism:

- A residual module (e.g., group convolutions) learns the residual changes.

- Advantages:

- 🛠️ Reduced Parameters: Only updates parameters in the residual branch.

- 💡 Computational Efficiency: Optimized lightweight design.

- Disadvantages: None mentioned.

3. Adapter 🛠️

- Concept: Inserts small, trainable modules (adapters) into the Transformer architecture.

- Mechanism:

- Adapter layers include a bottleneck structure: down-project, activation, up-project.

- Adapters are task-specific; the original model stays frozen.

- Advantages:

- 🛡️ Parameter Efficiency: Fewer trainable parameters per task.

- 📦 Reduced Storage: Handles many tasks efficiently (e.g., 1000 tasks require only 14 GB vs. 14 PB).

- 🌟 Performance: Near state-of-the-art (SOTA).

- Disadvantages:

- ⏳ Inference Latency: Deeper architecture increases latency.
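The bottleneck structure can be sketched with plain lists. The sketch assumes a ReLU activation and a residual connection around the adapter; the weight shapes and values are hypothetical.

```python
def matvec(matrix, vec):
    """Multiply a (rows x cols) matrix by a vector of length cols."""
    return [sum(w * x for w, x in zip(row, vec)) for row in matrix]

def adapter_forward(hidden, w_down, w_up):
    """Down-project, apply ReLU, up-project, then add the residual."""
    bottleneck = [max(0.0, z) for z in matvec(w_down, hidden)]
    delta = matvec(w_up, bottleneck)
    return [h + d for h, d in zip(hidden, delta)]

# Hidden size 4, bottleneck size 2 (hypothetical values).
w_down = [[1.0, 0.0, 0.0, 0.0], [0.0, 1.0, 0.0, 0.0]]
w_up = [[1.0, 0.0], [0.0, 1.0], [0.0, 0.0], [0.0, 0.0]]
print(adapter_forward([1.0, -2.0, 3.0, 4.0], w_down, w_up))
```

Only `w_down` and `w_up` would be trained per task; the extra matrix products on every forward pass are the source of the added inference latency.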

4. Prompt Tuning 📝

- Concept: Learns continuous, task-specific prompts prepended to the input.

- Mechanism:

- Trainable prompts prepended to input; the base model remains unchanged.

- Advantages:

- 💾 Parameter Efficiency: Tuning prompts only, not the model.

- 🤝 Batching: Handles different tasks together with prompts.

- 🎯 Comparable Accuracy: Matches full fine-tuning for large models.

- Disadvantages:

- 📏 Increased Input Length: More tokens = higher latency and reduced usable sequence length.

5. Prefix Tuning 🔗

- Concept: Extends prompt tuning by adding tunable prefixes to every layer.

- Mechanism:

- Tunable prefixes prepended at each Transformer layer.

- Advantages:

- 📈 Improved Performance: Outperforms prompt tuning.

- Disadvantages:

- 📏 Increased Input Length: Similar to prompt tuning.

- ⏳ Inference Latency: Larger KV cache = slower inference.

6. LoRA (Low-Rank Adaptation) 📉

- Concept: Adds trainable low-rank matrices to Transformer layers.

- Mechanism:

- Introduces low-rank matrices $( A )$ and $( B )$, trained during fine-tuning.

- $( B )$ starts as zero, ensuring no initial change in output.

- Advantages:

- 🛠️ Parameter Efficiency: Only small matrices are trainable.

- ⚡ No Inference Latency: Fuses matrices with original weights.

- 🎨 Practical: Suitable for many applications.

- Disadvantages: None mentioned.
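A small sketch of the LoRA idea with plain lists: $( B )$ is initialized to zero, so the merged weight initially equals the original, and after training the product $( BA )$ can be folded into the weight. The function names, the Gaussian initialization scale for $( A )$, and the `scale` parameter are assumptions for illustration.

```python
import random

def matmul(a, b):
    """Multiply an (n x k) matrix by a (k x m) matrix."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b))) for j in range(len(b[0]))]
            for i in range(len(a))]

def lora_init(d_out, d_in, rank, seed=0):
    """A gets small random values; B starts at zero so B @ A is zero."""
    rng = random.Random(seed)
    a = [[rng.gauss(0.0, 0.01) for _ in range(d_in)] for _ in range(rank)]
    b = [[0.0] * rank for _ in range(d_out)]
    return a, b

def merge(w, a, b, scale=1.0):
    """Fold the low-rank update into the frozen weight: W + scale * (B @ A)."""
    delta = matmul(b, a)
    return [[w_ij + scale * d_ij for w_ij, d_ij in zip(w_row, d_row)]
            for w_row, d_row in zip(w, delta)]

w = [[1.0, 2.0], [3.0, 4.0]]
a, b = lora_init(d_out=2, d_in=2, rank=1)
print(merge(w, a, b))  # equals w, because B is still zero
```

Because the merged matrix has the same shape as the original weight, serving it adds no extra layers or latency.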

7. QLoRA (Quantized Low-Rank Adaptation) ⚙️

- Concept: Combines LoRA with quantization for memory efficiency.

- Mechanism:

- Quantizes the model with NF4 data type and adds low-rank adapters.

- Uses paged optimizers with CPU offloading.

- Advantages:

- 💾 Memory Efficiency: Supports large models on mid-level GPUs.

- ⚡ No Inference Latency: Adapters are fused at inference.

- Disadvantages: None mentioned.

8. BitDelta 🧮

- Concept: Quantizes weight deltas to 1-bit and learns a scaling factor.

- Mechanism:

- Quantizes delta to 1 bit ($(+1)$ for positive, $(-1)$ for non-positive).

- Applies a learned scaling factor.

- Advantages:

- 🛠️ Extreme Compression: Saves storage and memory.

- 🏗️ Multi-Tenant Serving: Efficient for serving many fine-tuned models.

- ⚡ Performance: Maintains accuracy despite compression.

- Disadvantages: None mentioned.
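The compression step can be sketched on a flattened weight list. The sketch sets the scaling factor to the mean absolute delta as a starting value, which is an assumption; the text only says the factor is learned. The function names are hypothetical.

```python
def bitdelta_compress(base, finetuned):
    """Return 1-bit signs of the weight delta and an initial scaling factor."""
    delta = [f - b for b, f in zip(base, finetuned)]
    signs = [1 if d > 0 else -1 for d in delta]  # +1 for positive, -1 otherwise
    scale = sum(abs(d) for d in delta) / len(delta)  # assumed initial value
    return signs, scale

def bitdelta_reconstruct(base, signs, scale):
    """Approximate the fine-tuned weights from the base weights, signs, and scale."""
    return [b + scale * s for b, s in zip(base, signs)]

base = [0.0, 0.0, 0.0, 0.0]
finetuned = [0.5, -0.5, 0.5, -0.5]
signs, scale = bitdelta_compress(base, finetuned)
print(signs, scale, bitdelta_reconstruct(base, signs, scale))
```

For multi-tenant serving, the base weights are stored once and each fine-tuned model contributes only its sign bits and scaling factors.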

Comparison Table 📊

| Method | Trainable Parameters | Inference Latency | Memory Usage | Key Mechanism | Performance |
|---|---|---|---|---|---|
| BitFit | Bias terms only | Low | Very Low | Updates bias terms | Competitive for small datasets |
| TinyTL | Lite residual module | Low | Low | Adds and updates a residual branch | Good |
| Adapter | Adapter layers | High | Low | Inserts small trainable modules | Near SOTA |
| Prompt Tuning | Task-specific prompts | High | Low | Prepends trainable prompts | Comparable to full fine-tuning |
| Prefix Tuning | Tunable prefixes per layer | High | Low | Adds prefixes at each layer | Improved over prompt tuning |
| LoRA | Low-rank matrices | Low | Low | Injects trainable low-rank matrices | Good |
| QLoRA | Low-rank matrices + quant. | Low | Very Low | Combines LoRA with quantization | Good |
| BitDelta | 1-bit deltas + scaling | Low | Very Low | Quantizes weight delta | Good |

🖼️ 2. Multi-Modal LLMs

Multi-modal Large Language Models (LLMs) are designed to process and understand information from multiple modalities, such as text, images, videos, and sometimes audio. These models aim to integrate different types of data to achieve a more comprehensive understanding of the world. Below are approaches to multi-modal LLMs:

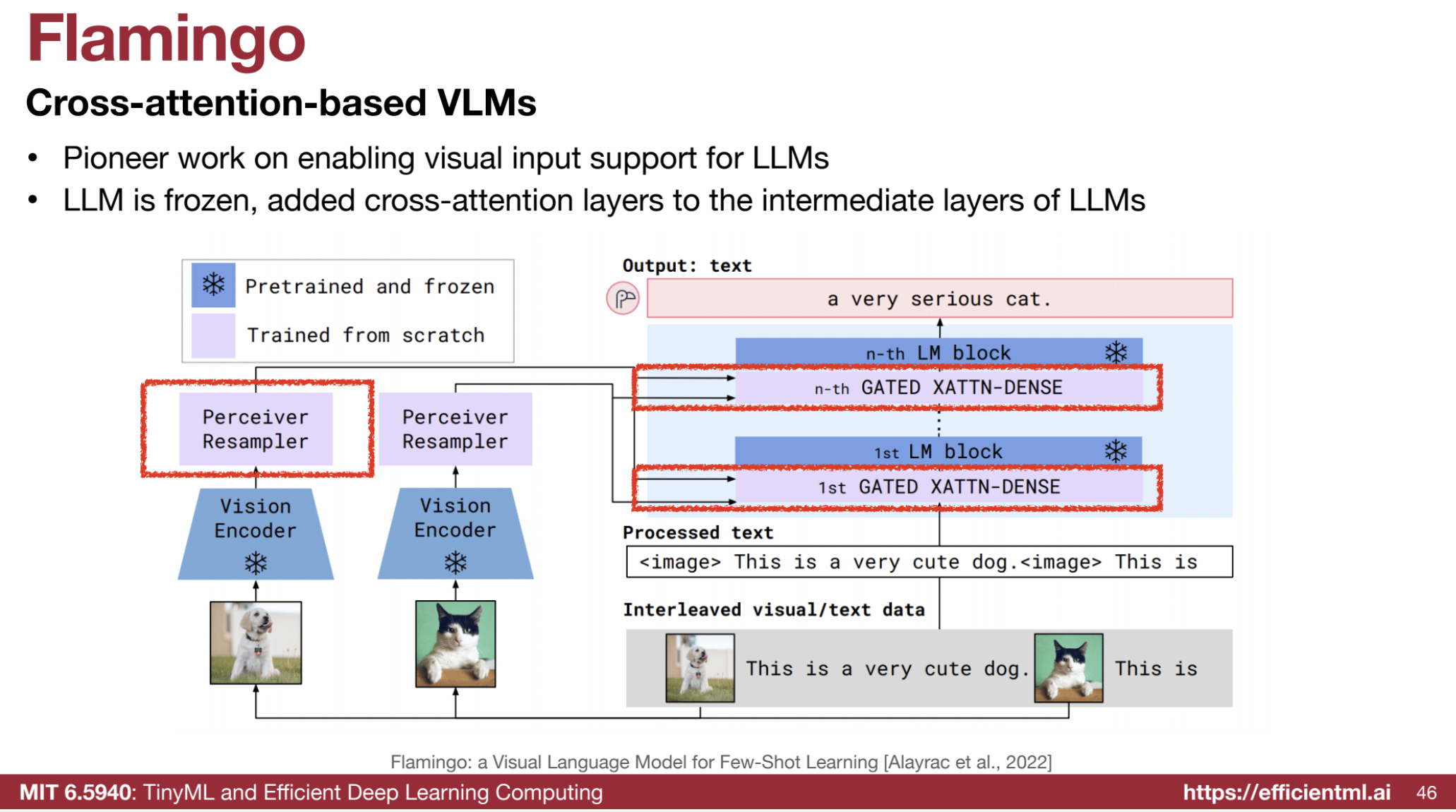

1. Cross-Attention Based Models (Flamingo) 🔥

- Concept: Uses cross-attention mechanisms to inject visual information into a language model. The language model remains frozen, with added cross-attention layers to process visual input.

- Mechanism:

- Vision Encoder: Converts images into visual tokens.

- Perceiver Resampler: Adjusts the number of visual tokens to a fixed size using learned queries.

- Cross-Attention Layer: Processes visual tokens as Key and Value (K, V) with the language input as the Query (Q).

- Gated Cross-Attention: Controls the amount of visual information added, initialized to zero to function initially as a language-only model.

- Advantages:

- 📚 In-context learning: Demonstrates strong capabilities.

- 🗨️ Visual Dialogues: Capable of engaging in visual conversations.

1. Frozen Language Model ❄️

- The pre-trained language model remains frozen during the training of visual components.

- Why? This approach leverages the existing language capabilities of the LLM while focusing on adding visual understanding.

2. Vision Encoder 🖼️

- Visual input, such as an image, is processed by a vision encoder.

- Output: The encoder converts the image into a set of visual tokens, numerical representations of the visual content.

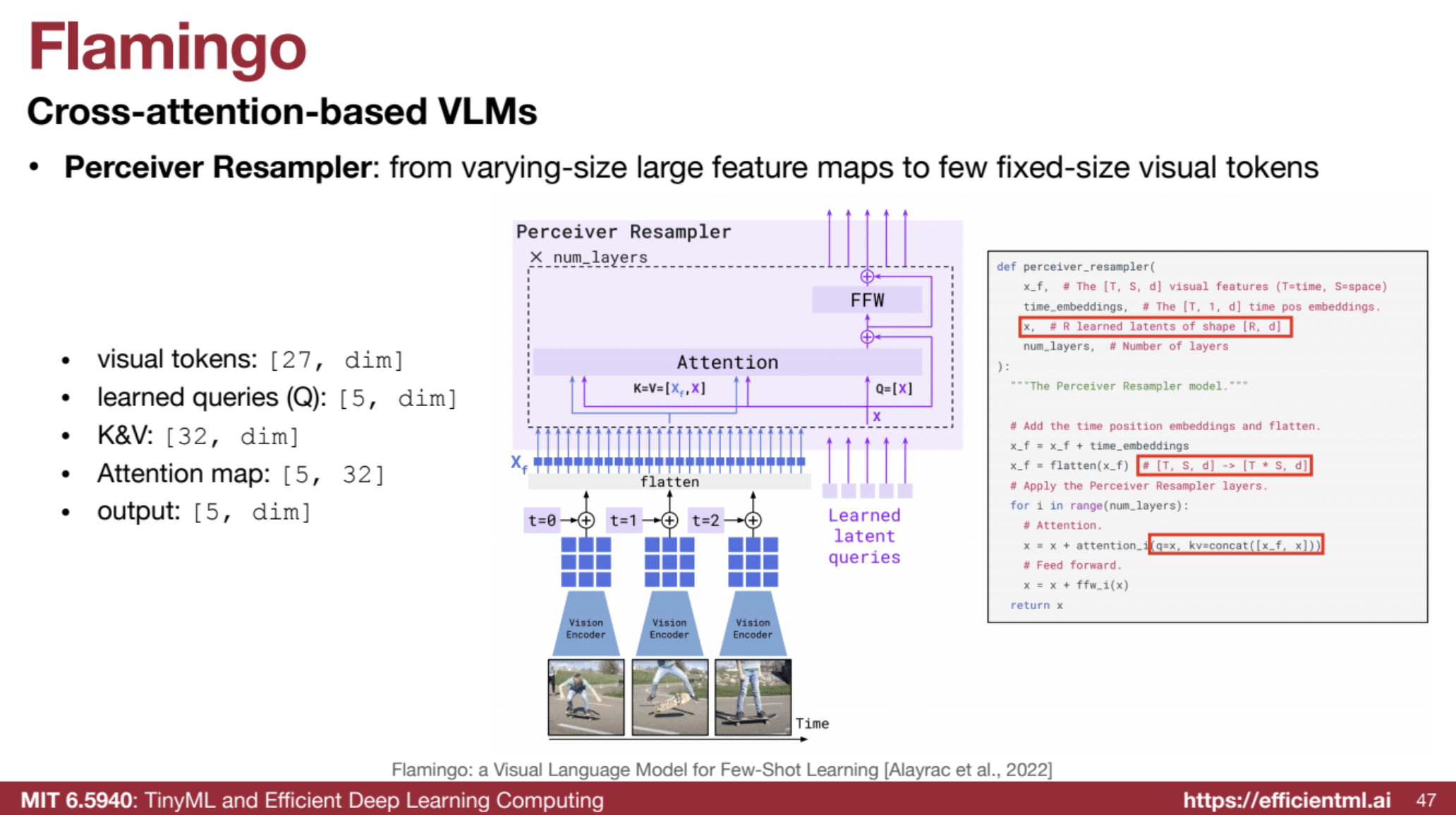

3. Perceiver Resampler 🔄

- The visual tokens are passed through a perceiver resampler, which adjusts their number to a fixed size.

- Mechanism:

- The perceiver resampler uses learned query tokens to reduce variable input tokens into a fixed set of output tokens.

- Example:

- For three images with 3x3 = 9 tokens each (27 tokens in total), the resampler uses 5 learned query tokens.

- These 5 learned tokens are concatenated with the 27 input tokens, giving 32 tokens from which Keys (K) and Values (V) are computed; the Queries (Q) come from the 5 learned tokens alone.

- The final output is 5 tokens, regardless of how many visual tokens came in.

- Benefit: Enables the model to handle images of varying resolutions and sizes by normalizing them into a fixed number of tokens.
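The shape bookkeeping of the resampler can be sketched with a single attention step in plain Python. For brevity the sketch omits the learned Q/K/V projection matrices, multiple heads, and stacked layers that a real resampler would have; the function names and dimensions are hypothetical.

```python
import math
import random

def softmax(scores):
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def resample(visual_tokens, latent_queries):
    """Attend from the learned queries over visual tokens plus the queries themselves."""
    keys_values = visual_tokens + latent_queries  # e.g. 27 + 5 = 32 tokens
    dim = len(latent_queries[0])
    outputs = []
    for q in latent_queries:
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(dim) for k in keys_values]
        weights = softmax(scores)
        outputs.append([sum(w * kv[j] for w, kv in zip(weights, keys_values))
                        for j in range(dim)])
    return outputs

rng = random.Random(0)
visual = [[rng.gauss(0, 1) for _ in range(4)] for _ in range(27)]  # 3 images x 9 tokens
latents = [[rng.gauss(0, 1) for _ in range(4)] for _ in range(5)]  # 5 learned queries
print(len(resample(visual, latents)))
```

The output length depends only on the number of learned queries, which is why the number of visual tokens passed to the language model stays fixed.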

4. Cross-Attention Mechanism 🔗

- The fixed-size visual tokens are integrated into the language model via cross-attention layers.

- How It Works:

- Visual tokens act as Keys (K) and Values (V), while language input serves as the Query (Q).

- This lets the language model attend to relevant visual information during text processing.

- Key Difference: Unlike self-attention (where Q, K, and V are derived from the same input), cross-attention combines visual and textual inputs.

5. Gated Cross-Attention 🚪

- A tanh gate controls how much visual information is injected into the language model.

- Initialization:

- The gate is initialized to zero, meaning the model starts as a language-only system.

- As training progresses, the gate learns to adjust, dynamically controlling the influence of visual input.
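The effect of the tanh gate can be sketched in a few lines. The sketch assumes the gate is a single learnable scalar `alpha` applied to the cross-attention output before it is added to the text hidden state; the function name and values are hypothetical.

```python
import math

def gated_residual(text_hidden, cross_attn_out, alpha):
    """Add the visual signal to the text hidden state, scaled by tanh(alpha)."""
    gate = math.tanh(alpha)
    return [h + gate * c for h, c in zip(text_hidden, cross_attn_out)]

text_hidden = [0.2, -0.4, 1.0]
visual_signal = [5.0, 5.0, 5.0]
print(gated_residual(text_hidden, visual_signal, alpha=0.0))  # unchanged at initialization
print(gated_residual(text_hidden, visual_signal, alpha=1.0))
```

With `alpha` at zero the layer is an identity on the text path, so the frozen language model's behavior is preserved at the start of training.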

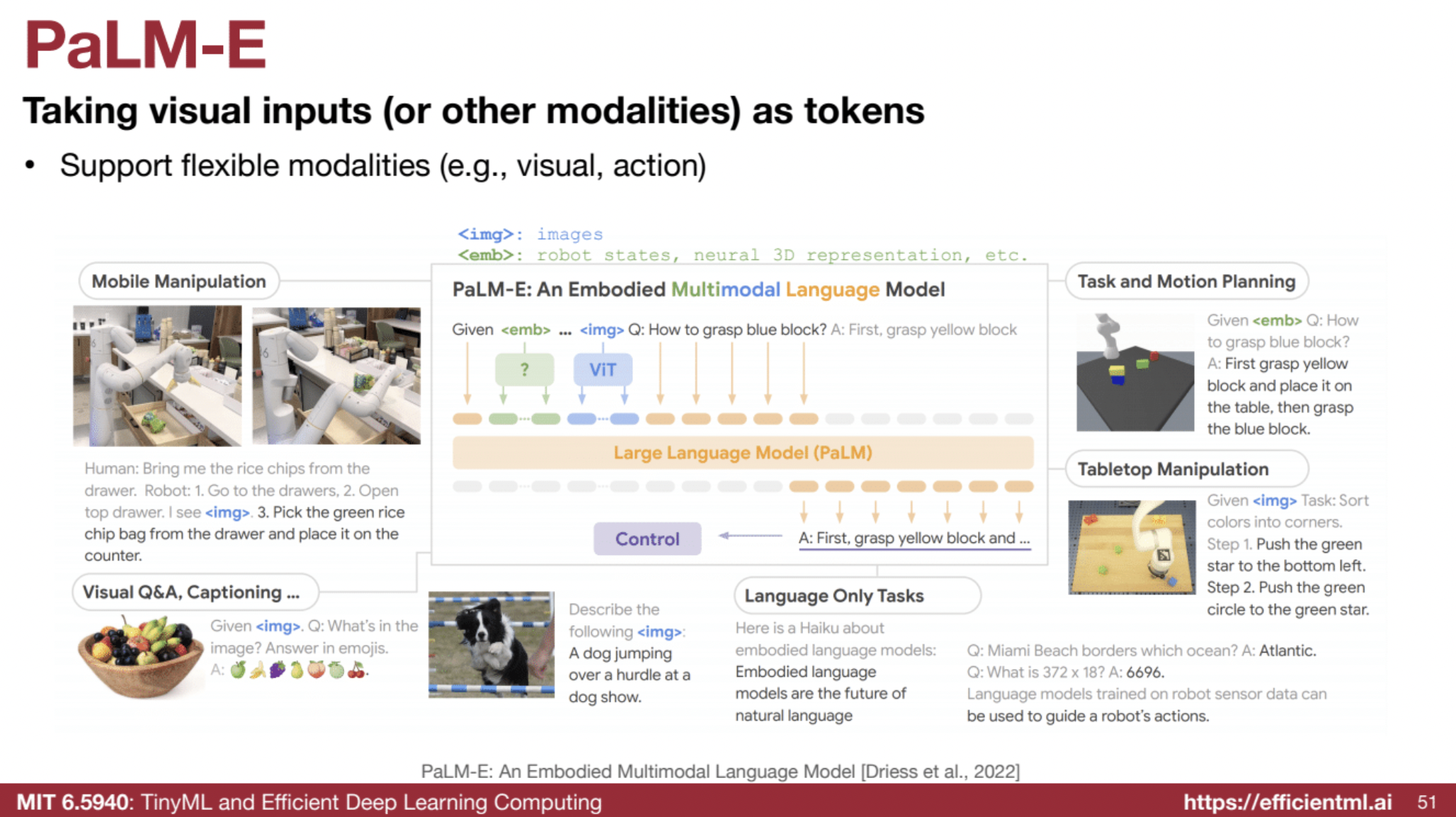

2. Visual Tokens as Input (PaLM-E, VILA) 🖼️

- Concept: Treats all inputs, including visual data, as tokens for a uniform processing model.

- Mechanism:

- Tokenization: Converts visual data using a vision transformer (ViT) into tokens aligned with text tokens.

- Unified Input: Combines visual and text tokens to feed into the LLM.

- Flexible Modalities: Supports diverse modalities, including action-based inputs.

- Control Signals: Outputs direct control signals.

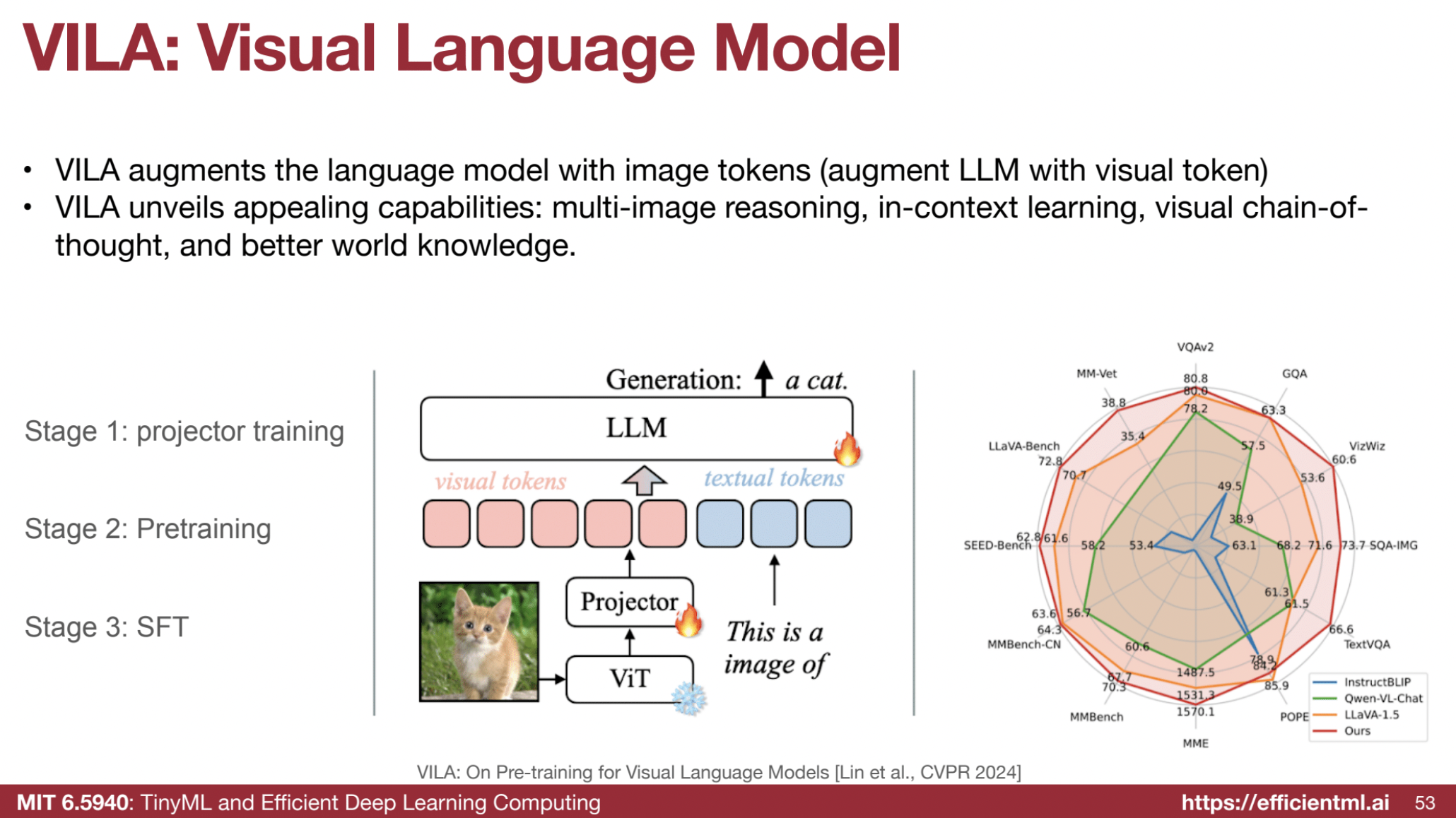

- VILA (Visual Language Model):

- Training Process: Includes projector training, pre-training, and supervised fine-tuning (SFT).

- Key Findings:

- 🔄 LLM Training: Updating the LLM enhances generalization for visual-language tasks.

- 📂 Data Structure: Interleaved data improves learning; aligning visual and textual tokens boosts performance.

- 🧪 Data Blending: Combining text-only and image-text data enhances task accuracy.

- 🖼️ Image Resolution: Original resolution is more important than token count.

- Advantages:

- 🔄 Homogeneous Model: Uniform architecture across modalities.

- 🖌️ Multi-Image Reasoning: Handles reasoning over multiple images.

- 🧠 Visual Chain-of-Thought: Enables complex visual reasoning.

VILA is a family of models augmenting a language model (LLM) with visual tokens to perform diverse visual-language tasks. It excels in multi-image reasoning, in-context learning, visual chain-of-thought, and leveraging world knowledge. As an auto-regressive model, VILA processes and generates outputs token by token.

🏗 Core Components and Architecture

- Visual Encoder: Converts images or videos into visual embeddings, often using a Vision Transformer (ViT). These embeddings are tokenized into visual tokens.

- Projector: Aligns visual and textual feature spaces, bridging the gap between modalities. A simple linear projector is effective for training the LLM.

- Large Language Model (LLM): Processes both visual and textual tokens. Pre-trained models like Llama-2 are commonly used.

- Tokenization: All inputs (images and text) are treated as sequences of tokens, allowing the LLM to process them uniformly.

📚 Training Process

- Projector Training: Aligns the image and language feature spaces for better modality interaction.

- Interleaved Pre-training: Uses large datasets of interleaved image-text data to train the model to jointly understand visual and textual information.

- Joint Supervised Fine-Tuning (SFT): Fine-tunes on a mix of visual-language and text-only instruction datasets, ensuring performance across modalities.

🔍 Key Findings & Design Choices

- Updating the LLM: Essential during pre-training for strong in-context learning capabilities. Freezing the LLM limits adaptability.

- Interleaved Data: Training with interleaved visual-language data outperforms image-text pairs alone.

- Data Blending: Mixing text-only instruction data with image-text data during SFT prevents text-only task degradation while enhancing visual-language task accuracy.

- Image Resolution: Higher image resolution improves accuracy on fine-grained tasks.

- Linear Projector: A simple linear projector aligns visual and textual tokens effectively.

- No Visual Experts: Directly fine-tuning the LLM yields better results than adding separate visual experts.

🎯 Capabilities

- Multi-Image Reasoning: Identifies common elements and distinctions across images, even when trained on single-image-text pairs.

- In-Context Learning: Adapts from demonstrations in prompts for tasks like object counting and style understanding.

- Visual Chain-of-Thought (CoT): Performs step-by-step reasoning with visual inputs.

- World Knowledge: Recognizes landmarks, locations, and other world concepts effectively.

- Multilingual Capabilities: Performs strongly on benchmarks like MMBench-Chinese, showcasing robust multilingual understanding.

- Visual Reference Understanding: Handles references like circled objects in images for reasoning.

- Detailed Captioning: Generates context-rich and detailed captions.

- Handling Corner Cases: Manages edge scenarios, such as challenges in autonomous driving.

📊 Performance

- Outperforms state-of-the-art models (e.g., LLaVA-1.5) across benchmarks.

- Excels in tasks like VizWiz and TextVQA.

- Maintains strong text-only benchmark performance, especially at the 13B parameter scale.

- Demonstrates real-world effectiveness across diverse datasets.

📚 Additional: Overview of Models: LLaVA, BLIP/BLIP-2

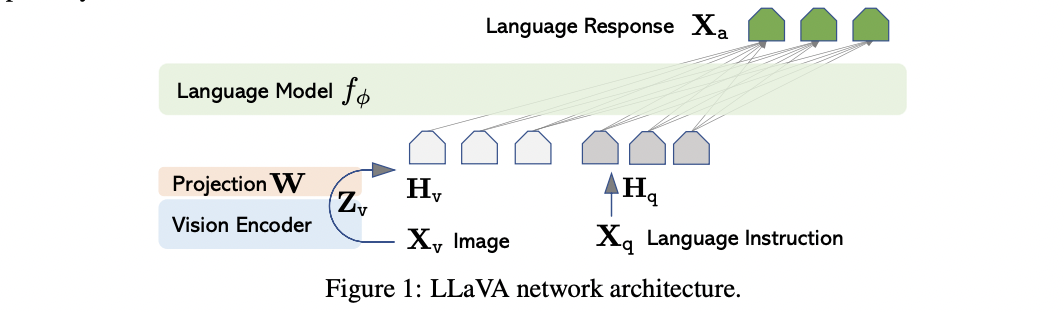

🤖 LLaVA (Large Language and Vision Assistant)

- Overview: Multimodal model combining a vision encoder and an LLM for instruction-following tasks.

- Architecture:

- Vision Transformer (ViT) processes images.

- A projection network aligns visual features with text embeddings.

- Training:

- Pre-training: Freezes ViT and LLM, trains projection network for modality alignment.

- Instruction Fine-Tuning (SFT): Fine-tunes the entire model on instruction-based image-text datasets.

- Key Features:

- Strong performance on tasks like image captioning, visual question answering, and reasoning.

- Focuses on instruction following but lacks interleaved pre-training for richer multi-modal understanding.

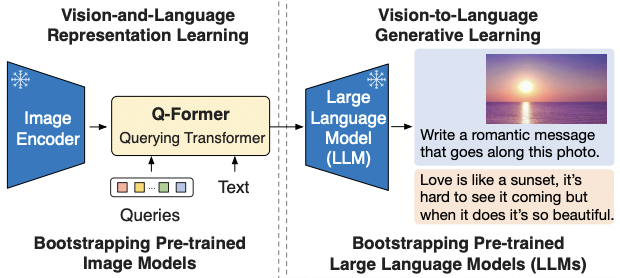

🧠 BLIP & BLIP-2

- Overview: Designed for unified vision-language understanding and generation. BLIP-2 emphasizes training efficiency.

- BLIP Architecture:

- Image encoder + text encoder + fusion module with image-text matching and generation objectives.

- BLIP-2 Architecture:

- Frozen Image Encoder: Pre-trained vision transformer (ViT).

- Frozen LLM: Maintains original LLM capabilities.

- Q-Former: Lightweight transformer extracts visual features as query vectors.

- Training:

- Train Q-Former to extract visual features.

- Fine-tune model on downstream tasks with visual queries and text embeddings.

- Key Features:

- Efficient training with frozen components.

- Excels in image captioning and VQA but less flexible for multi-modal or complex tasks like multi-image reasoning.

🛠 Comparison Table

| Feature | VILA | LLaVA | Flamingo | BLIP/BLIP-2 |
|---|---|---|---|---|
| Vision Encoder | Uses a vision encoder (like CLIP ViT) to extract visual features from images. | Uses a vision encoder (like CLIP ViT) to extract visual features from images. | Uses a vision encoder to process images. | Uses a vision encoder to extract visual features from images. |
| Projection Layer | Uses a trainable simple linear layer to project visual features into the LLM’s embedding space. | Uses a trainable linear layer to project visual features into the LLM’s embedding space. | Uses a perceiver resampler to adjust the number of visual tokens, then inputs into cross-attention layers. | Uses a Q-former to connect the image and language representations. |
| Text Token Processing | Text tokens are directly fed into the LLM; no additional projector is used. | Text tokens are directly fed into the LLM; no additional projector is used. | Text tokens are input directly into the LLM. | Text tokens are directly input into the LLM. |
| LLM Updates | The LLM is updated during the visual-language pre-training phase, enabling deeper alignment of visual and textual embeddings and allowing the LLM to process both visual and text tokens. | The LLM is kept frozen during pre-training and is only updated during the instruction fine-tuning phase. | The LLM is frozen and cross-attention layers are added to intermediate layers of the LLM to inject visual information. | The LLM is frozen, and the visual features are injected through a Q-former. |
| Pre-training Data | Uses interleaved image-text data during pre-training, where image and text tokens are mixed in sequences, improving model performance. | Primarily uses image-text pairs for pre-training, and uses instruction-tuning data for fine-tuning. | Uses interleaved image-text data. | BLIP uses image-text pairs and BLIP-2 uses frozen image encoders with large language models and image-text pairs. |
| Architecture | Has a more homogeneous architecture because the LLM is updated during pre-training and processes both visual and text inputs as tokens. | Does not have the same level of integration of visual inputs with the LLM during pre-training and is less flexible with in-context learning and multi-image reasoning. | Has a cross-attention-based architecture where visual information is injected into the LLM through added cross-attention layers, keeping the LLM frozen. | BLIP-2 uses a Q-former to connect the frozen image encoder with the LLM, while BLIP uses a unified vision-language model. |
| In-Context Learning | Has stronger in-context learning capabilities due to the LLM being trained on both visual and textual inputs during the pre-training phase. | Can perform in-context learning, but has worse in-context learning capabilities compared to VILA because the LLM is not trained on both visual and textual inputs during pre-training. | Has in-context learning capabilities. | BLIP is for unified vision-language understanding and generation; BLIP-2 uses bootstrapping to enable in-context learning. |
| Multi-Image Reasoning | Has better multi-image reasoning capabilities thanks to the LLM pre-training and the use of interleaved data. | Has less flexibility in multi-image reasoning because the LLM is not trained on visual inputs during pre-training. | Not explicitly mentioned in the sources. | Not explicitly mentioned in the sources. |
| World Knowledge | Has enhanced world knowledge thanks to pre-training of the LLM on both text and visual data and the use of interleaved data. | World knowledge is present, but may not be as strong as VILA due to the LLM being kept frozen during the pre-training phase. | The LLM is frozen, so it relies on pre-existing knowledge. | The model uses a frozen LLM and benefits from its pre-existing world knowledge. |
| Text-Only Performance | Demonstrates competitive text-only performance, retaining or improving upon the LLM’s performance with joint supervised fine-tuning on text-only data. | Can have degradation in text-only performance when not supplemented with text-only instruction data during SFT. | Not explicitly mentioned in the sources. | Not explicitly mentioned in the sources. |
| Resolution | The raw resolution of the image matters more than the number of visual tokens. | The raw resolution of the image matters more than the number of visual tokens. | Not explicitly mentioned in the sources. | Not explicitly mentioned in the sources. |
| LoRA Fine Tuning | Significantly outperforms LoRA fine-tuning with a rank of 64. | Not mentioned in the sources. | Not explicitly mentioned in the sources. | Not explicitly mentioned in the sources. |
| Training Data Size | Pre-trained on about 50M images. | LLaVA pre-trains on a filtered CC3M dataset of 595K image-text pairs and fine-tunes on a dataset of 158K language-image instruction-following samples. | Not explicitly mentioned in the sources. | Not explicitly mentioned in the sources. |

- VILA and LLaVA both utilize a projection layer to align visual features with the LLM’s embedding space, and neither uses a projector for text tokens; they are directly fed into the LLM.

- The core difference lies in when the LLM is updated: VILA updates it during pre-training, enabling better integration of visual and textual information, while LLaVA only updates the LLM during instruction fine-tuning. Flamingo and BLIP/BLIP-2 keep the LLM frozen.

- VILA also uses interleaved image-text data for pre-training, further contributing to its enhanced capabilities. Flamingo also uses interleaved image-text data, while LLaVA, BLIP, and BLIP-2 use image-text pairs.

- These differences result in VILA having stronger in-context learning, multi-image reasoning, and world knowledge compared to LLaVA. Flamingo and BLIP/BLIP-2 are also capable of in-context learning.

- VILA achieves significantly better performance than LoRA fine-tuning.

- Flamingo uses cross-attention layers to inject visual information into the frozen LLM, while BLIP/BLIP-2 use a Q-former to connect the image and language representations.

3. InternVL: Vision Encoder in Multimodal Models 📸

The sources discuss InternVL as a vision encoder used in multimodal models, noting its strengths and improvements over other vision encoders. Here are some key points about InternVL:

- 🧠 Strong Vision Encoder: InternVL is a vision foundation model used in multimodal LLMs. It typically serves as the encoder, converting image data into features that the LLM can consume.

- 🔄 Contrastive Pre-training: The most common vision foundation models are contrastively pre-trained Vision Transformers (ViTs), such as the one in InternVL. They are typically trained on image-text pairs from the internet at a fixed, low resolution.

- ⚠️ Limitations of Standard ViTs: ViTs trained at a fixed low resolution (e.g., 224x224) often degrade on higher-resolution images or on images from sources other than the internet.

- 🔧 InternVL 1.2 Update: To address the limitations of standard ViTs, the InternVL 1.2 update involved the continuous pre-training of the InternViT-6B model.

- 🗑️ Discarding Layers: The update found that features from the fourth-to-last layer perform best for multimodal tasks. As a result, the weights of the last three layers were discarded, reducing the model from 48 to 45 layers.

- 🔼 Increased Resolution: The input resolution of InternViT-6B was increased from 224x224 to 448x448 pixels.

- 📈 High-Resolution Support: InternVL supports dynamic high resolution by using a combination of tiling and thumbnails. This involves matching the input image to a pre-defined aspect ratio, dividing it into tiles, and creating a thumbnail for global context.

- 🧩 Tiling: An image is divided into tiles of a fixed size (e.g., 448x448 pixels). The number of tiles can vary depending on the resolution of the original image and the desired level of detail.

- The optimal number of tiles varies by task. For example, text and OCR benchmarks benefit from high resolution, whereas around six tiles suffice for knowledge and reasoning benchmarks.

- 🔀 Cross-Attention: High-resolution image processing can also be supported through cross-attention mechanisms that combine low-resolution image features and text features as queries, with the high-resolution image features as keys and values.
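The tiling procedure above (match a pre-defined aspect ratio, split into fixed-size tiles, add a thumbnail) can be sketched as follows. The 448-pixel tile size and the six-tile budget come from the text; the tie-breaking rule, the function names, and the choice to return crop boxes instead of pixels are assumptions made for illustration, and this is not InternVL's actual implementation.

```python
TILE = 448  # tile edge length in pixels, as in the text

def candidate_grids(max_tiles):
    """All (cols, rows) grids that use at most max_tiles tiles."""
    return [(c, r) for c in range(1, max_tiles + 1)
            for r in range(1, max_tiles + 1) if c * r <= max_tiles]

def choose_grid(width, height, max_tiles=6):
    """Pick the grid whose aspect ratio is closest to the image's.

    Ties are broken in favor of more tiles (an assumed rule).
    """
    target = width / height
    return min(candidate_grids(max_tiles),
               key=lambda g: (abs(g[0] / g[1] - target), -g[0] * g[1]))

def tile_boxes(width, height, max_tiles=6):
    """Return the resized image size and each tile's (left, top, right, bottom) box."""
    cols, rows = choose_grid(width, height, max_tiles)
    resized = (cols * TILE, rows * TILE)
    boxes = [(c * TILE, r * TILE, (c + 1) * TILE, (r + 1) * TILE)
             for r in range(rows) for c in range(cols)]
    return resized, boxes

resized, boxes = tile_boxes(800, 600)
# The thumbnail is the whole image resized to TILE x TILE, giving one extra view.
print(resized, len(boxes) + 1)
```

For an 800x600 input this selects a 3x2 grid, so the encoder sees six detail tiles plus one thumbnail for global context.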

4. Enabling Visual Outputs (VILA-U) 🎬

- Concept: Supports both understanding and generation of images, videos, and text within a unified autoregressive model.

- Mechanism:

- Unified Vision Tower: Aligns visual tokens with text using a vision encoder, residual quantizer, and decoder.

- Unified Pipeline: Encodes multi-modal inputs into discrete tokens for next-token prediction.

- Losses: Combines image-text contrastive loss and reconstruction loss for visual generation.

- Discrete Visual Tokens: Generates images as tokens, similar to text.

- Advantages:

- 🌀 Unified Model: Integrates understanding and generation seamlessly.

- 🎨 Generation Capabilities: Supports native visual generation.

- Performance: Achieves near-SOTA performance on visual understanding tasks.

- Disadvantages:

- ❌ No explicitly mentioned drawbacks.
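To make the "residual quantizer" and "discrete visual tokens" ideas more concrete, here is a generic residual vector quantization sketch. It is not VILA-U's implementation: the codebooks are hand-written rather than learned, the two-dimensional feature is a toy value, and all function names are assumptions.

```python
def nearest_code(vector, codebook):
    """Index of the codebook entry with the smallest squared distance to vector."""
    def sq_dist(code):
        return sum((v - c) ** 2 for v, c in zip(vector, code))
    return min(range(len(codebook)), key=lambda i: sq_dist(codebook[i]))

def residual_quantize(vector, codebooks):
    """Quantize in stages; each stage encodes what the previous stages missed."""
    residual = list(vector)
    tokens = []
    for codebook in codebooks:
        idx = nearest_code(residual, codebook)
        tokens.append(idx)
        residual = [r - c for r, c in zip(residual, codebook[idx])]
    return tokens, residual

def reconstruct(tokens, codebooks):
    """Sum the selected code vectors to approximate the original feature."""
    out = [0.0] * len(codebooks[0][0])
    for idx, codebook in zip(tokens, codebooks):
        out = [o + c for o, c in zip(out, codebook[idx])]
    return out

codebooks = [
    [[0.0, 0.0], [1.0, 1.0], [-1.0, 1.0]],    # coarse stage (hand-written)
    [[0.0, 0.0], [0.25, 0.0], [0.0, -0.25]],  # fine stage (hand-written)
]
feature = [1.2, 0.9]  # toy visual feature
tokens, residual = residual_quantize(feature, codebooks)
print(tokens, reconstruct(tokens, codebooks))
```

Each feature becomes a short list of integer indices, which is what allows an autoregressive model to predict visual content with the same next-token objective it uses for text.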

Additional Techniques for Multi-Modal LLMs 🛠️

- High Resolution Images:

- 🖼️ Tiling: Divides images into tiles with a thumbnail for global context.

- 🎯 Cross Attention: Processes high-resolution images using low-resolution image and text features as queries.

- Dynamic High Resolution:

- 📏 Optimal Aspect Ratio: Matches predefined ratios, dividing images into tiles.

- 🧩 Tile Count: Impacts performance differently across tasks. For instance:

- 🔍 Text and OCR tasks benefit from high resolution.

- 🧠 Knowledge and reasoning tasks perform well with ~6 tiles.
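The cross-attention route listed above can be sketched with plain scaled dot-product attention: low-resolution image features and text features form the queries, and high-resolution image features serve as keys and values. This is a single-head toy version without learned projection matrices; the feature values and function names are assumptions for illustration.

```python
import math

def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def cross_attention(queries, keys, values):
    """Single-head scaled dot-product attention without learned projections."""
    scale = math.sqrt(len(keys[0]))
    outputs = []
    for q in queries:
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / scale for k in keys]
        weights = softmax(scores)
        outputs.append([sum(w * v[d] for w, v in zip(weights, values))
                        for d in range(len(values[0]))])
    return outputs

low_res_feats = [[1.0, 0.0], [0.0, 1.0]]   # toy low-resolution image features
text_feats = [[0.5, 0.5]]                  # toy text feature
high_res_feats = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [-1.0, 0.0]]

queries = low_res_feats + text_feats
fused = cross_attention(queries, high_res_feats, high_res_feats)
print(len(fused), len(fused[0]))
```

The output has one row per query, so the sequence length passed on to the LLM stays fixed by the low-resolution and text features, however many high-resolution features are attended to.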

🧠 3. Prompt Engineering

Effective interaction techniques for guiding LLMs to perform specific tasks.

📖 3.1 In-Context Learning (ICL)

- Mechanism: Provides examples in the prompt to demonstrate desired behavior.

- Key Idea: Enables LLMs to perform new tasks from the prompt alone, without updating model weights.
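A few-shot prompt for in-context learning might be assembled like this. The `Input:`/`Output:` template, the sentiment examples, and the function name are hypothetical; any consistent format that shows the model input-output pairs serves the same purpose.

```python
def build_few_shot_prompt(instruction, examples, query):
    """Place demonstrations before the query so the model can infer the task."""
    lines = [instruction, ""]
    for text, label in examples:
        lines += [f"Input: {text}", f"Output: {label}", ""]
    lines += [f"Input: {query}", "Output:"]
    return "\n".join(lines)

examples = [
    ("The battery lasts all day.", "positive"),
    ("The screen cracked within a week.", "negative"),
]
prompt = build_few_shot_prompt(
    "Classify the sentiment of each review.",
    examples,
    "Setup was quick and painless.",
)
print(prompt)
```

The prompt ends with an open `Output:` slot, so the model's continuation is the label for the new input.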

🔗 3.2 Chain-of-Thought (CoT)

- Mechanism: Encourages intermediate reasoning steps before the final answer.

- Key Idea: Improves reasoning accuracy for complex tasks.
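One common way to apply chain-of-thought is to include a worked example with explicit reasoning and then parse the final answer out of the model's completion. The sketch below assumes a `Reasoning:`/`Answer:` template and uses a hand-written string in place of a real model completion; both are illustrative choices.

```python
COT_EXAMPLE = (
    "Q: A shelf holds 3 boxes with 4 books each. How many books are there?\n"
    "Reasoning: Each box has 4 books. 3 boxes x 4 books = 12 books.\n"
    "Answer: 12\n"
)

def build_cot_prompt(question):
    """Prepend a worked example and leave the reasoning slot open for the model."""
    return f"{COT_EXAMPLE}\nQ: {question}\nReasoning:"

def extract_answer(completion):
    """Return the text after the last 'Answer:' marker, or None if it is absent."""
    marker = "Answer:"
    pos = completion.rfind(marker)
    if pos == -1:
        return None
    return completion[pos + len(marker):].strip()

prompt = build_cot_prompt("A train has 5 cars with 20 seats each. How many seats are there?")
# Hand-written stand-in for a model completion, used only to exercise the parser.
sample_completion = " Each car has 20 seats. 5 cars x 20 seats = 100 seats.\nAnswer: 100"
print(extract_answer(sample_completion))
```

Ending the prompt at `Reasoning:` nudges the model to write its intermediate steps before committing to an answer.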

🔍 3.3 Retrieval-Augmented Generation (RAG)

- Mechanism: Combines LLMs with external information retrieval.

- Key Idea: Keeps the LLM’s knowledge current without retraining by retrieving relevant information from an external database at inference time.
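The retrieve-then-generate flow can be sketched with a toy retriever. Real systems usually rank documents with embedding similarity over a vector store; here a simple word-overlap score stands in for that, and the prompt template and function names are assumptions. The documents are sentences taken from the comparison earlier in these notes.

```python
import re
from collections import Counter

def tokenize(text):
    """Lowercase the text and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())

def score(query, document):
    """Bag-of-words overlap between query and document."""
    q, d = Counter(tokenize(query)), Counter(tokenize(document))
    return sum(min(q[t], d[t]) for t in q)

def retrieve(query, documents, k=1):
    """Return the k documents with the highest overlap score."""
    ranked = sorted(documents, key=lambda doc: score(query, doc), reverse=True)
    return ranked[:k]

def build_rag_prompt(query, documents, k=1):
    """Insert the retrieved passages into the prompt ahead of the question."""
    context = "\n".join(f"- {doc}" for doc in retrieve(query, documents, k))
    return f"Use the context to answer.\n\nContext:\n{context}\n\nQuestion: {query}\nAnswer:"

documents = [
    "VILA updates the LLM during pre-training.",
    "Flamingo injects visual information through cross-attention layers.",
    "BLIP-2 connects image and language representations with a Q-former.",
]
query = "How does Flamingo inject visual information?"
rag_prompt = build_rag_prompt(query, documents)
print(rag_prompt)
```

Because the knowledge lives in `documents` and not in the model weights, updating what the LLM can draw on only requires editing the database.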

🎉 These techniques showcase the advanced methods in post-training to enhance the adaptability, efficiency, and multi-modal capabilities of LLMs! 🚀