📚 Fully Connected Networks, Optimization, Initialization and Activations

This document reviews the main themes and key takeaways from Deep Learning Systems: Algorithms and Implementation** at Carnegie Mellon University, taught by J. Zico Kolter and Tianqi Chen.

🧠 Fully Connected Networks

In a fully connected network, each neuron in a layer is connected to every neuron in the previous layer. The network performs a series of linear transformations followed by non-linear activation functions.

- A common activation function is ReLU (Rectified Linear Unit), while the last layer typically uses a linear activation to produce logits.

🔢 Notation

- $( z_i )$: Output of the linear transformation in layer $( i )$.

- $( n_i )$: Size of layer $( i )$.

- $( W_i )$: Weight matrix of layer $( i )$ with shape $( (n_i \times n_{i+1}) )$.

- $( b_i )$: Bias vector of layer $( i )$ with size $( n_{i+1} )$.

- $( \sigma_i )$: Non-linear activation function in layer $( i )$.

🧮 Matrix Form and Broadcasting

The linear transformation in layer $( i+1 )$ can be expressed as:

\(z_{i+1} = \sigma_i \left( z_i W_i^T + \mathbf{1} b_i^T \right)\)

where $( \mathbf{1} )$ is a vector of ones to broadcast the bias across all neurons.

🔍 Note:

- In practice, explicit matrix formation is avoided for efficiency.

- Libraries like Needle handle broadcasting efficiently during automatic differentiation.

🟠 Gradient Descent

Gradient Descent is a fundamental optimization algorithm used in deep learning to iteratively update model parameters by moving in the direction of the negative gradient of the objective function.

🔹 Gradient Descent Update Rule

The update rule for gradient descent is:

\(\theta_{t+1} = \theta_t - \alpha \nabla f(\theta_t)\)

where:

- $( \theta_t )$ represents the parameters at iteration $( t )$.

- $( \alpha )$ is the step size (learning rate).

- $( \nabla f(\theta_t) )$ is the gradient of the objective function $( f )$ at $( \theta_t )$.

🔹 Key Characteristics

- Finds the direction of steepest descent (based on the $( \ell_2 )$ norm).

- May oscillate when using large step sizes.

- Convergence behavior depends on the step size:

- 🔹 Larger step size: Faster convergence but more oscillations.

- 🔹 Smaller step size: Slower convergence but smoother trajectory.

🔹 Example:

Consider the quadratic function:

\(f(\theta) = \frac{1}{2} \theta^T P \theta + q^T \theta\)

where $( P )$ is a positive definite matrix. The step sizes affect the evolution of parameters.

🟡 Newton’s Method

Newton’s method incorporates second-order information by scaling the gradient with the inverse of the Hessian matrix.

🔹 Newton’s Update Rule

\(\theta_{t+1} = \theta_t - \alpha (\nabla^2 f(\theta_t))^{-1} \nabla f(\theta_t)\)

where:

- $( (\nabla^2 f(\theta_t))^{-1} )$ is the inverse Hessian matrix at $( \theta_t )$.

🔹 Example:

For quadratic functions, Newton’s method with a full step ($( \alpha = 1 )$) finds the optimum in a single iteration.

🔹 Limitations:

- High Computational Cost: Inverting the Hessian matrix is expensive.

- Non-convexity: Performance is uncertain for non-convex functions, common in deep learning.

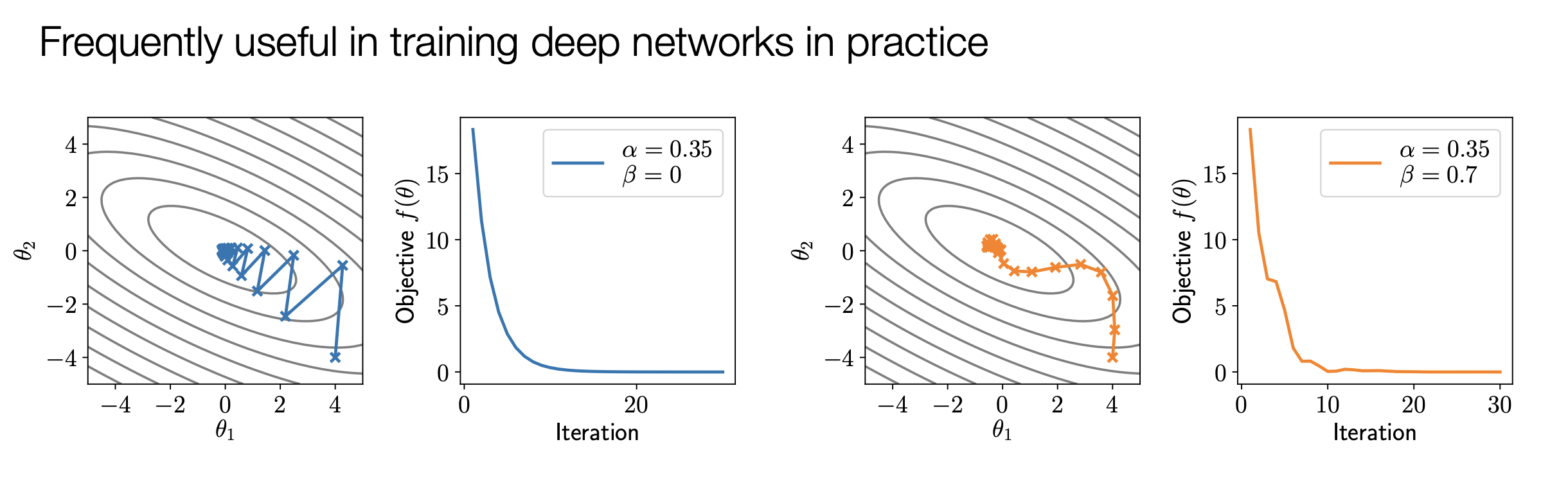

🟢 Momentum-Based Optimization

Momentum methods combine gradient information from multiple iterations to smooth out updates and accelerate convergence.

🔹 Momentum Update Rule

Momentum maintains a velocity term $( \mu )$:

\(\mu_{t+1} = \beta \mu_t + \nabla f(\theta_t)\)

\(\theta_{t+1} = \theta_t - \alpha \mu_{t+1}\)

where:

- $( \beta )$ is the momentum coefficient.

🔹 Example:

Momentum leads to smoother trajectories and faster convergence compared to plain gradient descent.

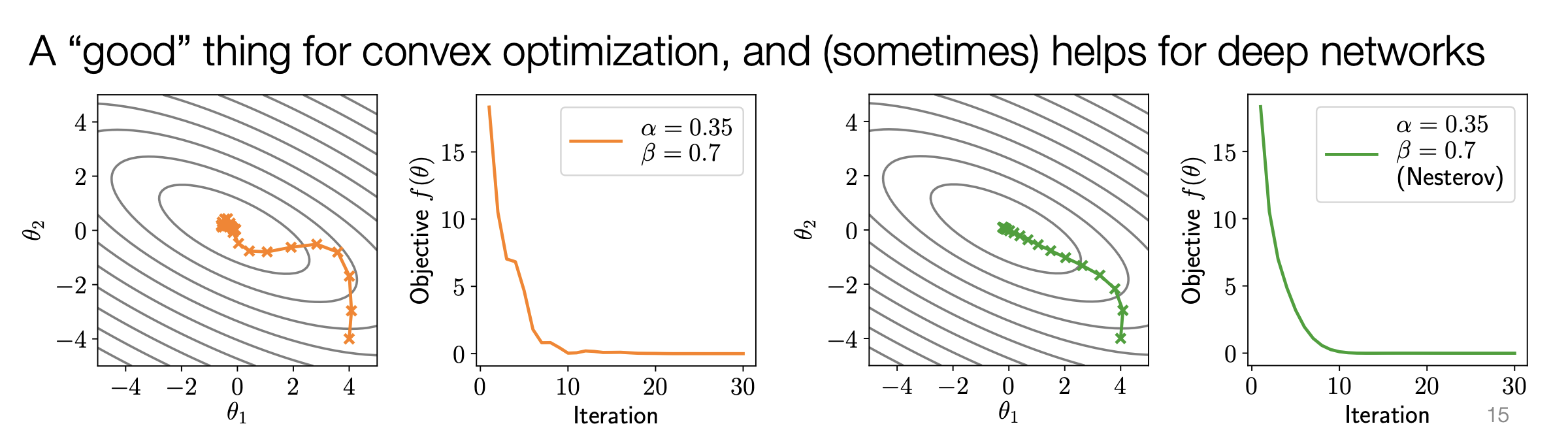

🔵 Nesterov Momentum

Nesterov momentum computes the gradient at a “look-ahead” point, improving convergence.

🔹 Nesterov Update Rule

\(\mu_{t+1} = \beta \mu_t + \nabla f(\theta_t - \alpha \beta \mu_t)\)

\(\theta_{t+1} = \theta_t - \alpha \mu_{t+1}\)

🔹 Example:

Nesterov often converges faster and smoother than regular momentum, especially for convex functions.

🔴 Adaptive Gradient Methods

🔹 Adam (Adaptive Moment Estimation)

Adam combines momentum and adaptive learning rates by maintaining first and second moment estimates of gradients.

Adam Update Rule

\(u_{t+1} = \beta_1 u_t + (1 - \beta_1) \nabla f(\theta_t)\)

\(v_{t+1} = \beta_2 v_t + (1 - \beta_2) \nabla f(\theta_t)^2\)

\(\theta_{t+1} = \theta_t - \alpha \frac{u_{t+1}}{\sqrt{v_{t+1}} + \epsilon}\)

where:

- $( u_t )$ tracks the gradient.

- $( v_t )$ tracks the squared gradient.

- $( \epsilon )$ is a small constant for numerical stability.

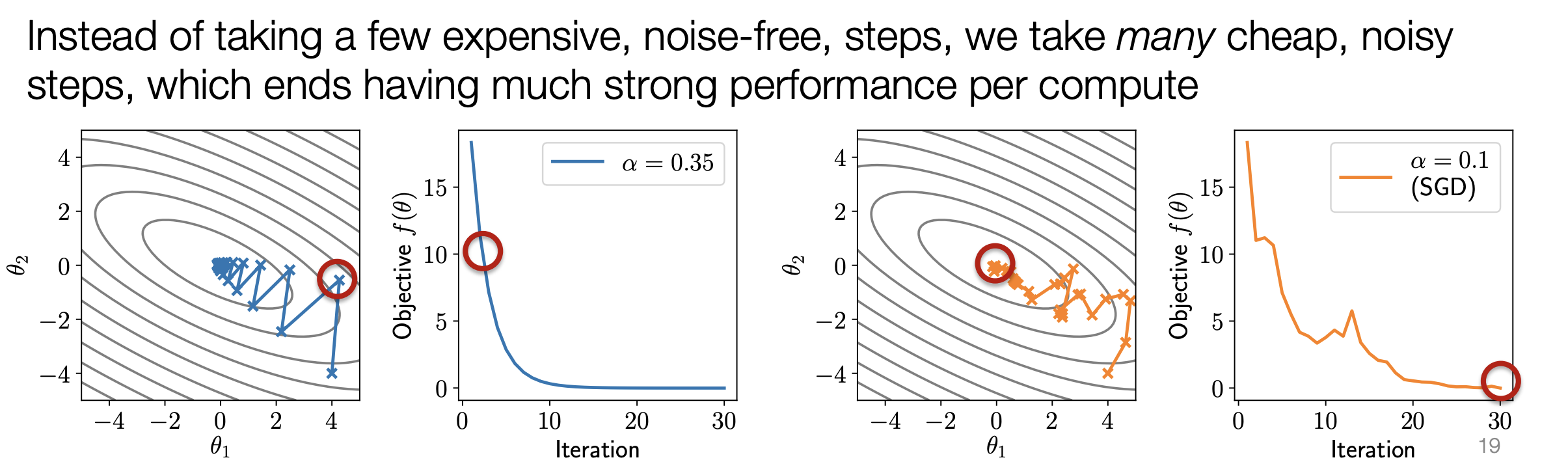

🟣 Stochastic Gradient Descent (SGD)

SGD updates parameters using a mini-batch of data, making it computationally efficient and introducing noise that can escape local optima.

🔹 SGD Update Rule

\(\theta_{t+1} = \theta_t - \alpha \frac{1}{|B|} \sum_{i \in B} \nabla f_i(\theta_t)\)

where $( |B| )$ is the batch size.

🔹 Benefits:

- Efficiency: Frequent updates using small batches.

- Noise: Helps escape saddle points and local minima.

⚙️ Comparison of Optimization Methods in Deep Learning

The following table summarizes first-order optimization methods commonly used in deep learning, highlighting their formulas, characteristics, and common usage scenarios:

| Method | Formula | Characteristics | Common Usage |

|---|---|---|---|

| Gradient Descent | \(\theta_{t+1} = \theta_t - \alpha \nabla f(\theta_t)\) | * Simple and widely applicable. * Might oscillate and converge slowly, especially with varying gradient scales. |

Often used as a baseline and for understanding basic optimization concepts. |

| Momentum | \(\mu_{t+1} = \beta \mu_t + (1 - \beta) \nabla f(\theta_t)\) \(\theta_{t+1} = \theta_t - \alpha \mu_{t+1}\) |

* Smooths out the descent trajectory by accumulating past gradients. * Potentially faster convergence compared to gradient descent. |

Widely used in deep learning, often with unbiasing and/or Nesterov acceleration. |

| Nesterov Momentum | \(\mu_{t+1} = \beta \mu_t + (1 - \beta) \nabla f(\theta_t - \alpha \mu_t)\) \(\theta_{t+1} = \theta_t - \alpha \mu_{t+1}\) |

* Similar to momentum but computes the gradient at a “look-ahead” point. * Can offer faster convergence and smoother trajectories. |

Used in some deep learning applications, although less common than regular momentum. |

| Adam | \(u_{t+1} = \beta_1 u_t + (1 - \beta_1) \nabla f(\theta_t)\) \(v_{t+1} = \beta_2 v_t + (1 - \beta_2)(\nabla f(\theta_t))^2\) \(\theta_{t+1} = \theta_t - \alpha \frac{u_{t+1}}{\sqrt{v_{t+1}} + \epsilon}\) |

* Adaptive gradient method that adjusts the update based on estimated gradient magnitudes. * Performs well across various deep learning tasks. |

One of the most popular optimizers in deep learning, known for robust performance across architectures. |

🧮 Second-Order Methods

Second-order methods like Newton’s Method utilize the Hessian matrix (second-order derivatives) to scale the gradient:

\(\theta_{t+1} = \theta_t - \alpha (\nabla^2 f(\theta_t))^{-1} \nabla f(\theta_t)\)

However, they are less common in deep learning due to the high computational cost of:

- Computing the Hessian matrix.

- Inverting it for large models with millions or billions of parameters.

🏆 Commonly Used Optimizers

- Adam 🧪

- A good starting point for most deep learning tasks due to its adaptive learning rate and momentum features.

- Provides generally robust performance across different architectures.

- SGD with Momentum 🚀

- Preferred for its simplicity and potential to find better solutions with careful tuning of hyperparameters like learning rate and momentum.

🔄 Importance of Stochastic Variants

In deep learning, the objective function involves a sum of losses over a large dataset. Computing exact gradients over the entire dataset is computationally expensive.

⚡ Stochastic Gradient Descent (SGD)

Instead of using the entire dataset, SGD approximates the gradient using a minibatch of data:

\(\theta_{t+1} = \theta_t - \alpha \frac{1}{|B|} \sum_{i \in B} \nabla L_i(\theta_t)\)

✅ Advantages of Stochastic Optimization

- Computational Efficiency: Faster updates by processing smaller batches of data.

- Noise Benefits: The noise introduced by minibatch sampling helps escape local optima and saddle points.

🔍 Note:

All optimization methods discussed are primarily used in their stochastic forms in deep learning practice.

🔧 Unbiasing Momentum Terms

Unbiasing momentum terms is a technique used to correct for the smaller magnitude of momentum terms in the initial iterations of an optimization algorithm. This bias arises when the momentum term (often denoted as $(u)$) is initialized to zero.

🧮 Momentum Update

A standard momentum update involves accumulating past gradients using an exponential moving average. The update rule for the momentum term is:

\[u_{t+1} = \beta u_t + (1 - \beta) \nabla f(\theta_t)\]Where:

- $( u_{t+1} )$: Momentum term at iteration $( t+1 )$.

- $( \beta )$: Momentum averaging parameter (typically between 0 and 1).

- $( \nabla f(\theta_t) )$: Gradient of the objective function at iteration $( t )$.

⚠️ Bias in Early Iterations

When $( u_0 )$ is initialized to zero, the momentum term in early iterations is smaller than expected. For example:

-

Iteration 1:

\(u_1 = \beta \cdot 0 + (1 - \beta) \nabla f(\theta_0) = (1 - \beta) \nabla f(\theta_0)\)

(Smaller than the gradient) -

Iteration 2:

\(u_2 = \beta u_1 + (1 - \beta) \nabla f(\theta_1)\)

(Closer to the gradient’s scale)

🔄 Unbiasing the Momentum Term

To correct this initial bias, an unbiasing factor is introduced. The updated rule becomes:

\[\hat{u}_{t+1} = \frac{u_{t+1}}{1 - \beta^{t+1}}\]Where $( \frac{1}{1 - \beta^{t+1}} )$ compensates for the smaller magnitude in early iterations.

- As $( t \to \infty )$, $( 1 - \beta^{t+1} \to 1 )$, and the unbiasing effect diminishes.

🚀 Effects of Unbiasing

-

Equal Expected Magnitude:

Ensures the momentum term has an expected magnitude comparable to the gradient at all iterations. -

Faster Convergence:

In some cases, unbiased momentum leads to faster convergence compared to biased momentum.

🛠️ Usage in Adam

The Adam optimizer, a popular adaptive gradient method, incorporates unbiased momentum estimates for both its momentum terms ($( u )$ and $( v )$):

\[\hat{u}_{t+1} = \frac{u_{t+1}}{1 - \beta_1^{t+1}} \quad \text{and} \quad \hat{v}_{t+1} = \frac{v_{t+1}}{1 - \beta_2^{t+1}}\]❓ Is Unbiasing Always Necessary?

While unbiasing is common, it’s not always essential:

- Adam: Incorporates multiple biased terms, and their relative scaling might not be significantly impacted.

- Other Optimizers: May not require unbiasing depending on specific use cases.

🔑 Key Points

- Corrects Initial Bias: Unbiasing addresses the bias introduced when initializing the momentum term to zero.

- Consistent Scale: Provides a momentum term with consistent scale across iterations, leading to smoother convergence.

- Common in Optimizers: Widely employed in optimizers like Adam, though its necessity varies.

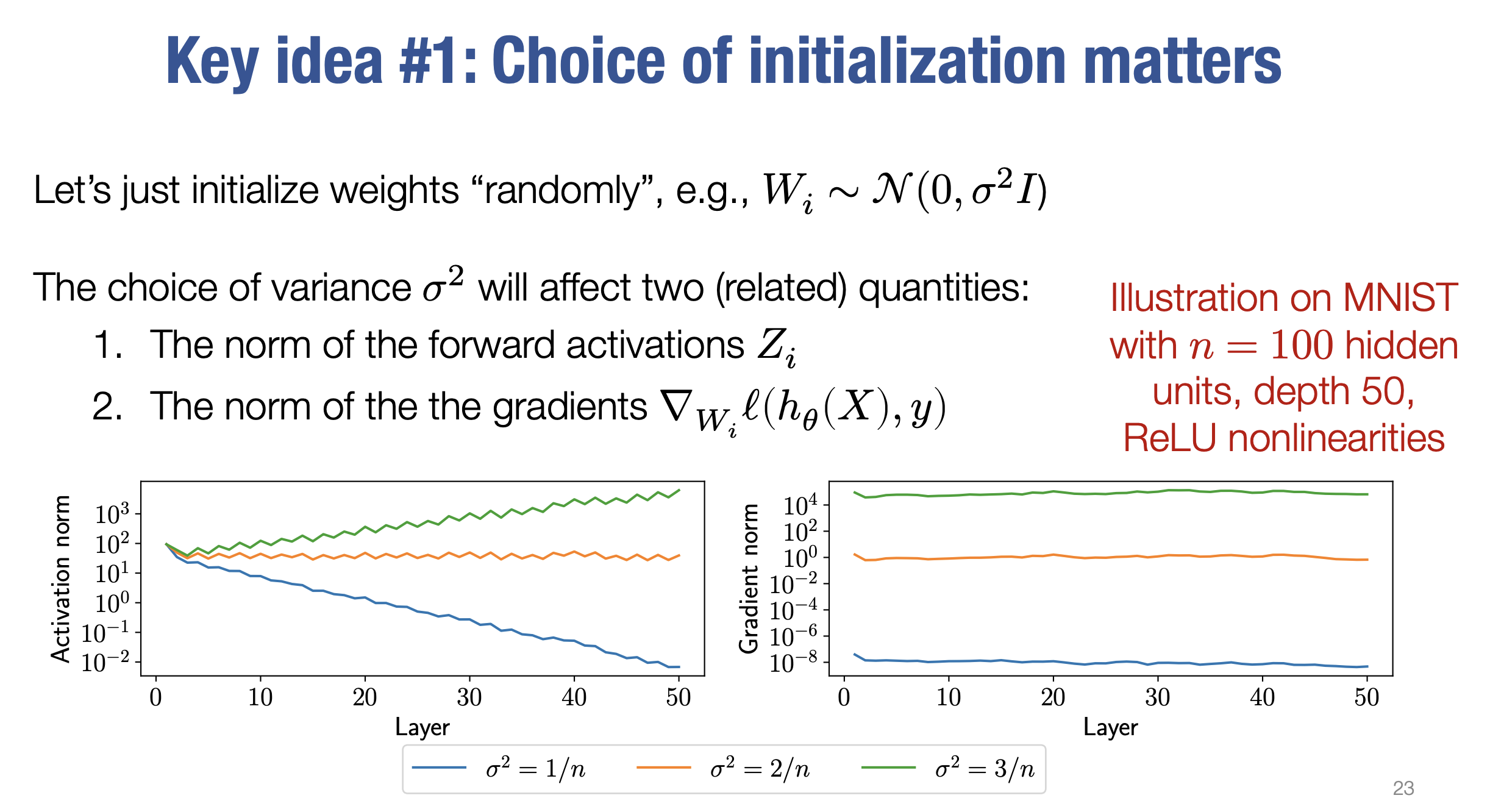

🧠 Weight Initialization in Neural Networks

Initializing weights in a neural network is crucial for successful training. Setting weights to zero is ineffective because it leads to zero gradients and stagnant learning. Instead, random initialization, where weights are drawn from a distribution (e.g., Gaussian), is a common practice. However, the choice of variance σ² for this distribution significantly impacts network performance.

🔍 Impact of Variance on Activations and Gradients

Let’s analyze how different variances affect network behavior, using a 50-layer network with ReLU activations trained on the MNIST dataset as an example:

- Variance σ² = 1/n

- 🔻 Effect:

Norms of activations decrease as we move deeper into the network.

🔻 Result:

Gradients shrink, leading to slow or stalled learning (vanishing gradients).

- 🔻 Effect:

- Variance σ² = 2/n

- ✅ Effect:

Maintains relatively stable norms of activations across layers.

🔹 Result:

Gradients have magnitudes around 1, promoting consistent learning.

This variance is highlighted as the “right” choice in this scenario.

- ✅ Effect:

- Variance σ² = 3/n

- 🔺 Effect:

Norms of activations increase as we move deeper.

🔻 Result:

Gradients explode, causing instability and hindering convergence.

- 🔺 Effect:

for σ² = 1/n and σ² = 3/n don’t change significantly across layers in the figure provided in the sources. However, the issue isn’t about fluctuating gradient norms, but rather about their persistently extreme values. Even though they remain relatively constant across layers, these extreme values (either too small or too large) disrupt the learning process in deep neural networks.

- σ² = 1/n (Vanishing Gradients): Even though the gradient norms are consistently small across layers, they are too small to effectively propagate information back through the network. As gradients flow from the output layer back towards the input layer, their magnitudes diminish with each layer. With a small variance initialization like σ² = 1/n, this diminishing effect is amplified, leading to vanishing gradients in the earlier layers. Consequently, the weights in those early layers receive extremely small updates, essentially stalling their learning process.

- σ² = 3/n (Exploding Gradients): On the other hand, when the gradient norms are consistently large, as seen with σ² = 3/n, the opposite problem occurs. Large gradients lead to exploding gradients, causing significant instability during training. The large updates to the weights can make the optimization process oscillate erratically, preventing the model from converging to a good solution.

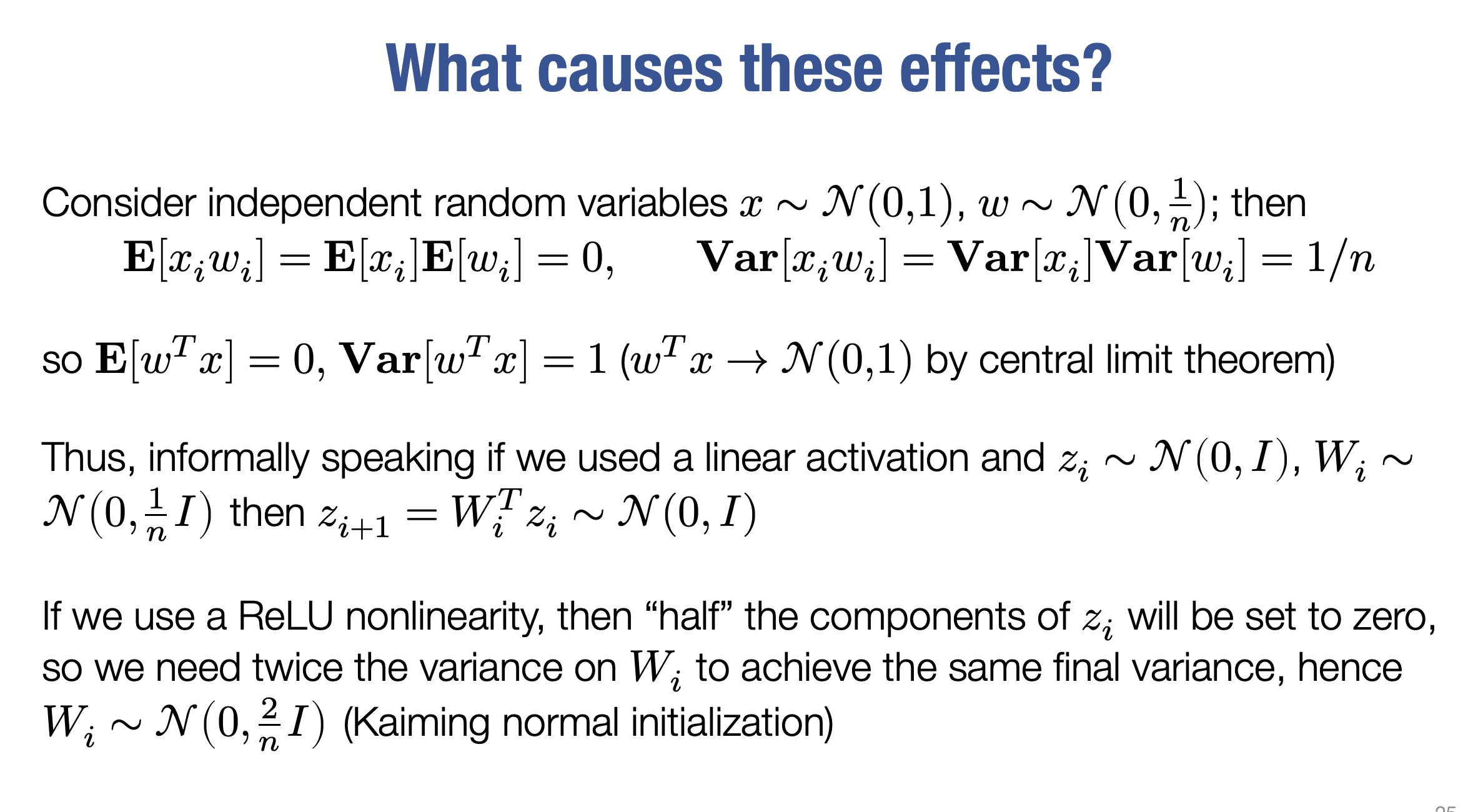

⚡ Connection to ReLU Activations and Kaiming Initialization

The effectiveness of \(( \sigma^2 = \frac{2}{n} )\) is closely linked to ReLU activations:

- ReLU sets all negative inputs to zero.

- When weights are initialized randomly from a Gaussian distribution with mean zero, approximately half of the activations in a layer become zero after ReLU.

To compensate for this halving of activations, a variance of 2/n is used. This is known as Kaiming Normal Initialization, which ensures stable variance of activations across layers, even with the ReLU non-linearity.

🧮 Central Limit Theorem (Informal Explanation)

An informal explanation using the Central Limit Theorem (CLT) helps build intuition:

- Weights w_i and inputs x_i are independent random variables with zero means and specific variances.

- The inner product \(( \sum (x_i \cdot w_i) )\) can be viewed as a sum of random variables.

- According to the CLT, the sum of many independent random variables tends towards a normal distribution.

- By carefully choosing the variance σ² for weight initialization, we can control the variance of this sum (i.e., the neuron activation).

- The goal is to maintain consistent activation variance across layers, preventing vanishing or exploding gradients.

📌 Key Takeaways

- The choice of variance during weight initialization significantly influences the behavior of activations and gradients.

- Too small variance σ² = 1/n → Vanishing gradients.

- Too large variance σ² = 3/n → Exploding gradients.

- Kaiming Initialization σ² = 2/n is commonly used with ReLU activations to maintain stable variance across layers.

- The Central Limit Theorem offers an intuitive explanation of how variance affects activation distributions.

📚 Glossary

- Activation Function: A non-linear function applied to a neuron’s output, introducing non-linearity.

- Adam: An adaptive optimization algorithm that combines momentum with gradient scaling.

- Backpropagation: An algorithm to compute gradients of the loss function w.r.t. network parameters.

- Bias: A parameter added to the weighted sum of inputs in a neuron.

- Broadcasting: A mechanism allowing operations between arrays of different shapes.

- Epoch: One complete pass through the entire training dataset.

- Exploding Gradients: When gradients become excessively large, causing instability.

- Fully Connected Network: A network where each neuron connects to every neuron in the previous layer.

- Gradient Descent: An iterative optimization algorithm that moves in the direction of the negative gradient.

- Hessian Matrix: A matrix of second-order partial derivatives.

- Kaiming Normal Initialization: A weight initialization strategy with variance $( \frac{2}{n} )$.

- Learning Rate: Controls the step size in gradient descent.

- Loss Function: Measures the difference between predictions and true labels.

- Momentum: Accumulates past gradients to smooth updates and accelerate convergence.

- Nesterov Momentum: Computes the gradient at a lookahead point for smoother convergence.

- Neuron: The basic computational unit in a neural network.

- ReLU: An activation function that outputs the input if positive, else zero.

- Saddle Point: A point where the gradient is zero but not a local minimum or maximum.

- Sigmoid: An activation function that squashes input to the range [0, 1].

- Stochastic Gradient Descent (SGD): Uses a random subset of data to update parameters.

- Vanishing Gradients: When gradients become very small, slowing learning.

- Weight: Determines the strength of the connection between two neurons.