📚 Sequence Modeling and Recurrent Networks

This document reviews the main themes and key takeaways from Deep Learning Systems: Algorithms and Implementation** at Carnegie Mellon University, taught by J. Zico Kolter and Tianqi Chen.

This lecture covers sequence modeling, RNNs, LSTMs, and their applications in complex prediction tasks. 🚀

🔄 Sequence Modeling

- What Makes Sequence Modeling Unique:

- Deals with ordered input-output pairs where order matters.

- Unlike standard ML problems, pairs are not IID (independent and identically distributed).

- Input-output pairs form a dependent sequence, e.g., $( x_1 \to y_1 \to x_2 \to y_2 )$, etc.

- Examples of Sequence-Dependent Tasks:

- Part-of-Speech Tagging: Predicts part of speech for each word in a sentence using context.

Example: “well” as an adverb vs. a noun. - Speech-to-Text: Translates audio sequences into text, preserving order and temporal relationships.

- Autoregressive Prediction: Predicts the next term in a sequence, e.g., “the quick brown” → “fox.”

- Part-of-Speech Tagging: Predicts part of speech for each word in a sentence using context.

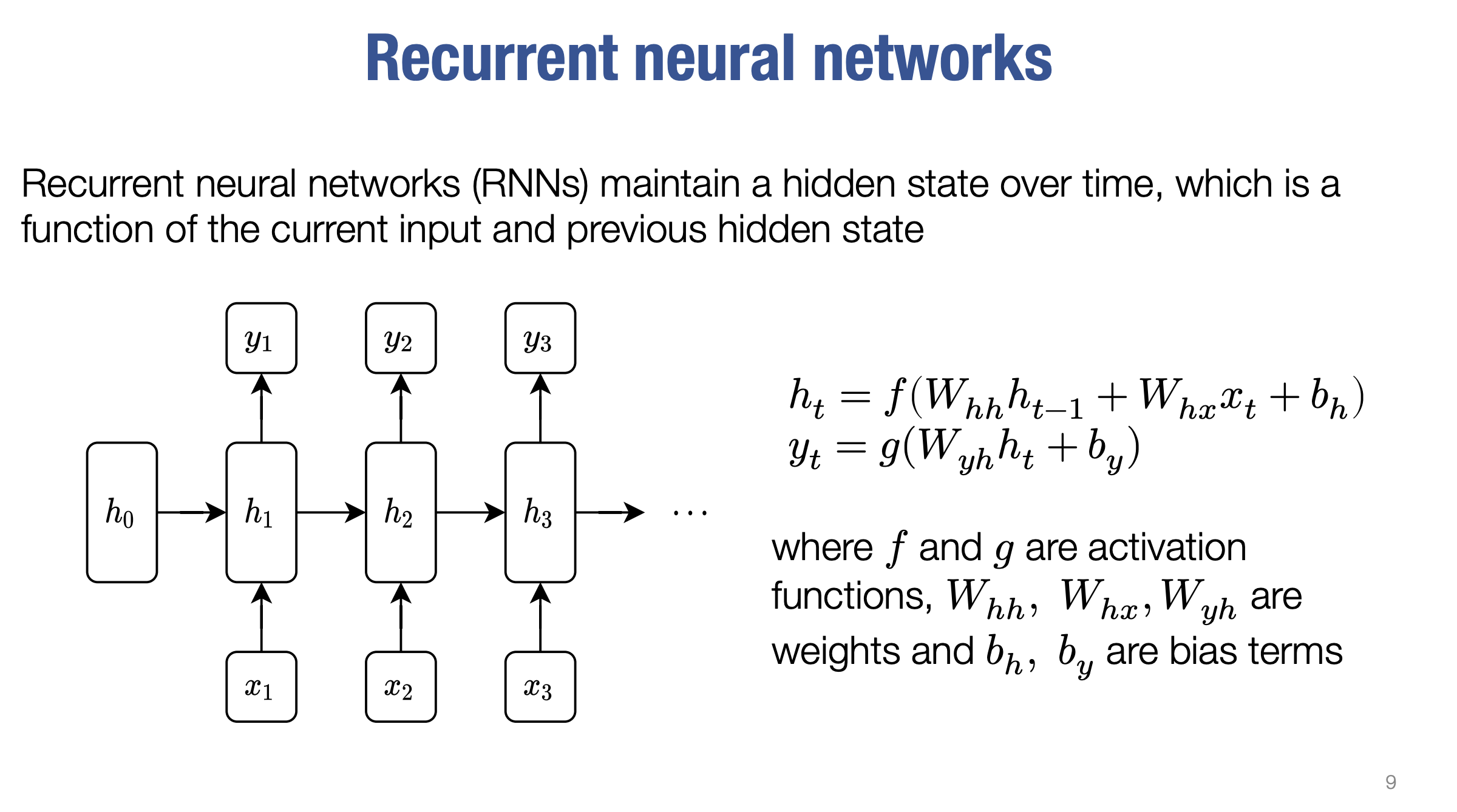

🤖 Recurrent Neural Networks (RNNs)

- Core Idea:

- Models temporal structure using a hidden state updated over time.

- At time $( t )$, hidden state $( h_t )$ is a function of:

- Current input $( x_t )$,

- Previous hidden state $( h_{t-1} )$.

- Formulas:

- Hidden state update:

$( h_t = f(W_{hh} \cdot h_{t-1} + W_{hx} \cdot x_t + b_h) )$

($( f )$: non-linear activation, $( W )$: weights, $( b )$: bias). - Output prediction:

$( y_t = g(W_{yh} \cdot h_t + b_y) )$

($( g )$: non-linear activation).

- Hidden state update:

- Hidden State ($( h_t )$): Vector in $( \mathbb{R}^d )$, where $( d )$ is the dimension of the hidden state.

- Input ($( x_t )$): Vector in $( \mathbb{R}^n )$, where $( n )$ is the dimension of the input vector.

- Output ($( y_t )$): Vector in $( \mathbb{F}^k )$, where $( k )$ is the dimension of the output.

- Weight Matrices:

- $( W_{hh} )$: Maps the previous hidden state to the current hidden state ($( d \times d )$).

- $( W_{hx} )$: Maps the input to the hidden state ($( d \times n )$).

- $( W_{yh} )$: Maps the hidden state to the output ($( k \times d )$).

- Bias Terms:

- $( b_h )$: Vector in $( \mathbb{R}^d )$.

- $( b_y )$: Vector in $( \mathbb{R}^k )$.

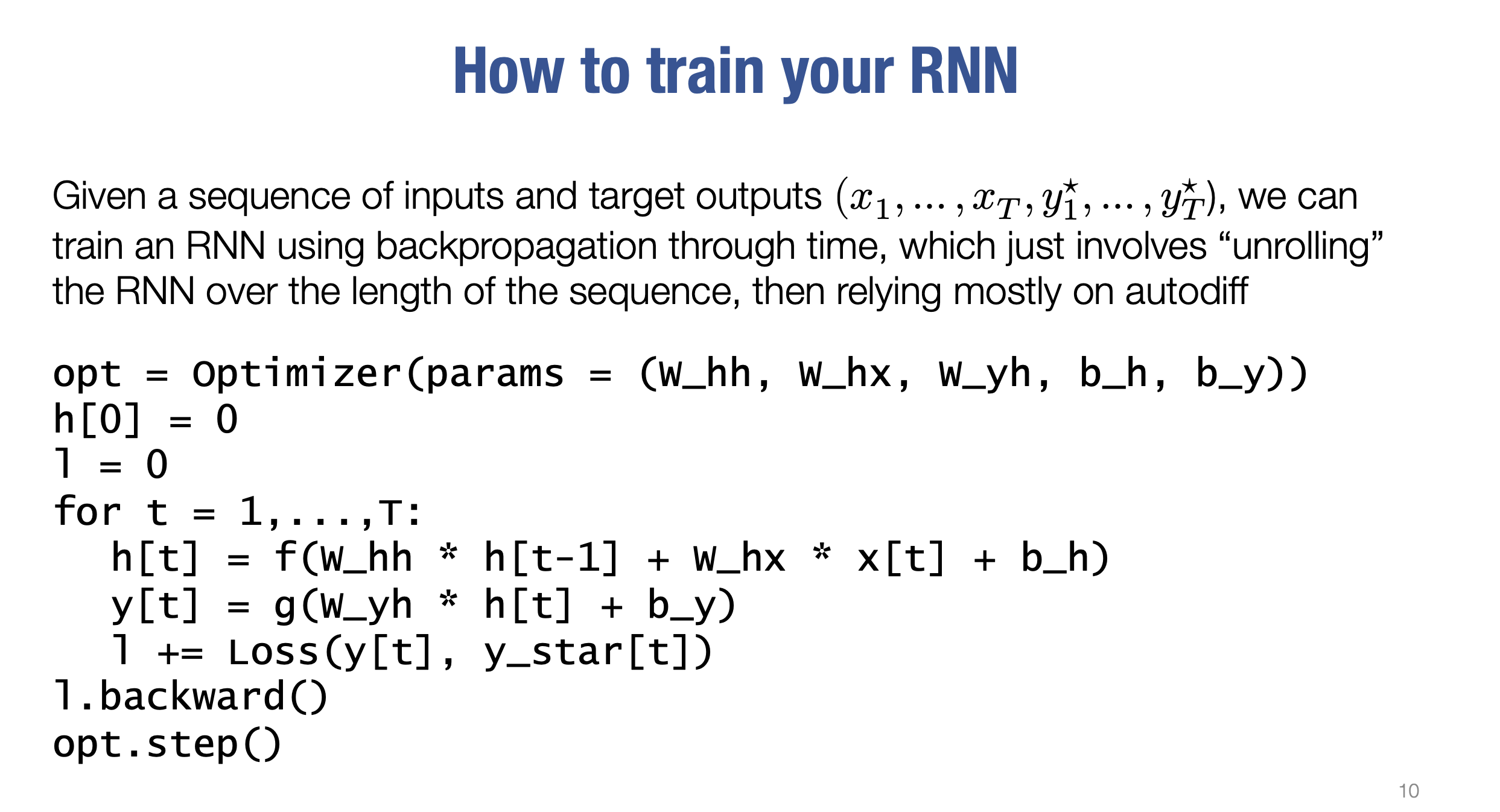

- Training RNNs:

- Adjust parameters ($( W_{hh}, W_{hx}, W_{yh}, b_h, b_y )$) using Backpropagation Through Time (BPTT).

- Gradients computed via automatic differentiation.

- First hidden state $( h_0 )$ initialized to zero.

- Loss computed at each time step, then backpropagated to update weights.

- Deep RNNs:

- RNN layers can be stacked for deeper models, passing outputs from one layer to the next.

⚠️ Challenges in Training RNNs

- Exploding Gradients:

- Weights too large → Gradients grow exponentially → Numerical instability.

- Vanishing Gradients:

- Weights too small → Information decays → Struggles with long-range dependencies.

- Activation Issues:

- ReLU: Can cause exploding or vanishing gradients.

- Sigmoid/Tanh: Saturates, leading to small gradients.

🚨 Exploding Activations/Gradients

- Caused by large weights, leading to exponentially growing activations and gradients.

- Results in numerical instability (e.g., NaN values).

- Example: Initializing $( W_{hh} )$ with variance $( 3/n )$ using ReLU causes rapid growth over time.

🌑 Vanishing Activations/Gradients

- Caused by small weights, leading to decaying information from earlier inputs.

- Example: Initializing $( W_{hh} )$ with variance $( 1.5/n )$ reduces the norm of hidden units over time.

🔍 Why ReLU Can Be Problematic for RNNs

- Effective in other networks but unstable in RNNs:

- Exploding Gradients: Activations can grow without bounds if weights are large.

- Vanishing Gradients: Activations decay when weights are small, especially with incorrect initialization.

🔄 Why Sigmoid and Tanh Improve ReLU but Have Limitations

- Benefits:

- Both activations are bounded:

- Sigmoid: $( [0, 1] )$,

- Tanh: $( [-1, 1] )$.

- Solve the exploding gradient issue.

- Both activations are bounded:

- Limitations:

- Gradients approach zero when activation values are near bounds (e.g., $( 0, 1 )$ for sigmoid or $( -1, 1 )$ for tanh).

- Causes difficulty in learning long-range dependencies.

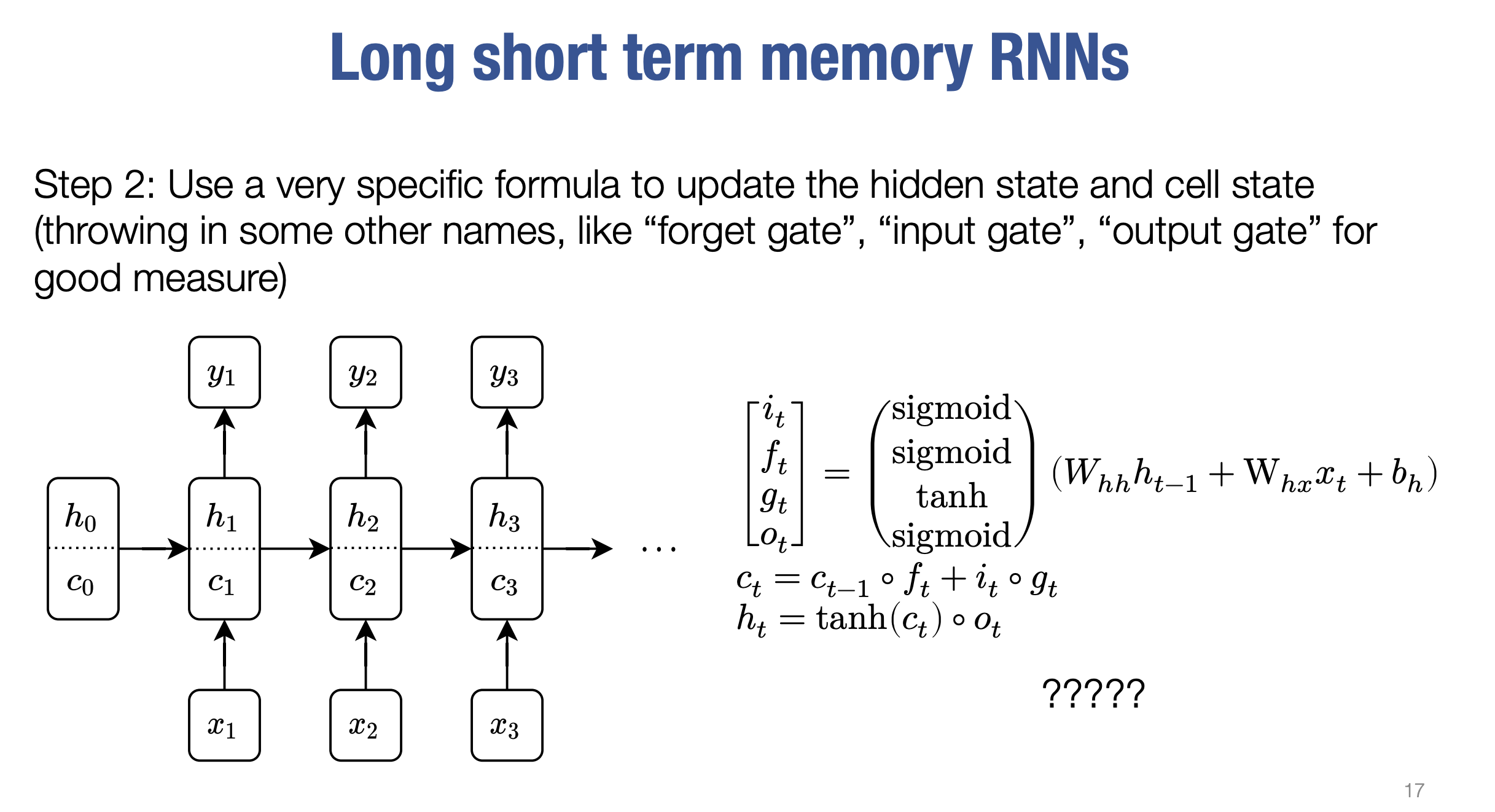

🧠 Long Short-Term Memory Networks (LSTMs)

- What Are LSTMs?

- Special RNNs designed to handle vanishing gradient problems.

- Split hidden state into two components:

- Hidden state ($( h_t )$).

- Cell state ($( c_t )$).

- Key Components:

- Forget Gate ($( f_t )$): Retains parts of the previous cell state.

- Input Gate ($( i_t )$) and Cell Gate ($( g_t )$): Add new information to the cell state.

- Output Gate ($( o_t )$): Controls output from the cell state.

- Cell State Update:

- Combines retained information and new terms: $( c_t = f_t \cdot c_{t-1} + i_t \cdot g_t )$.

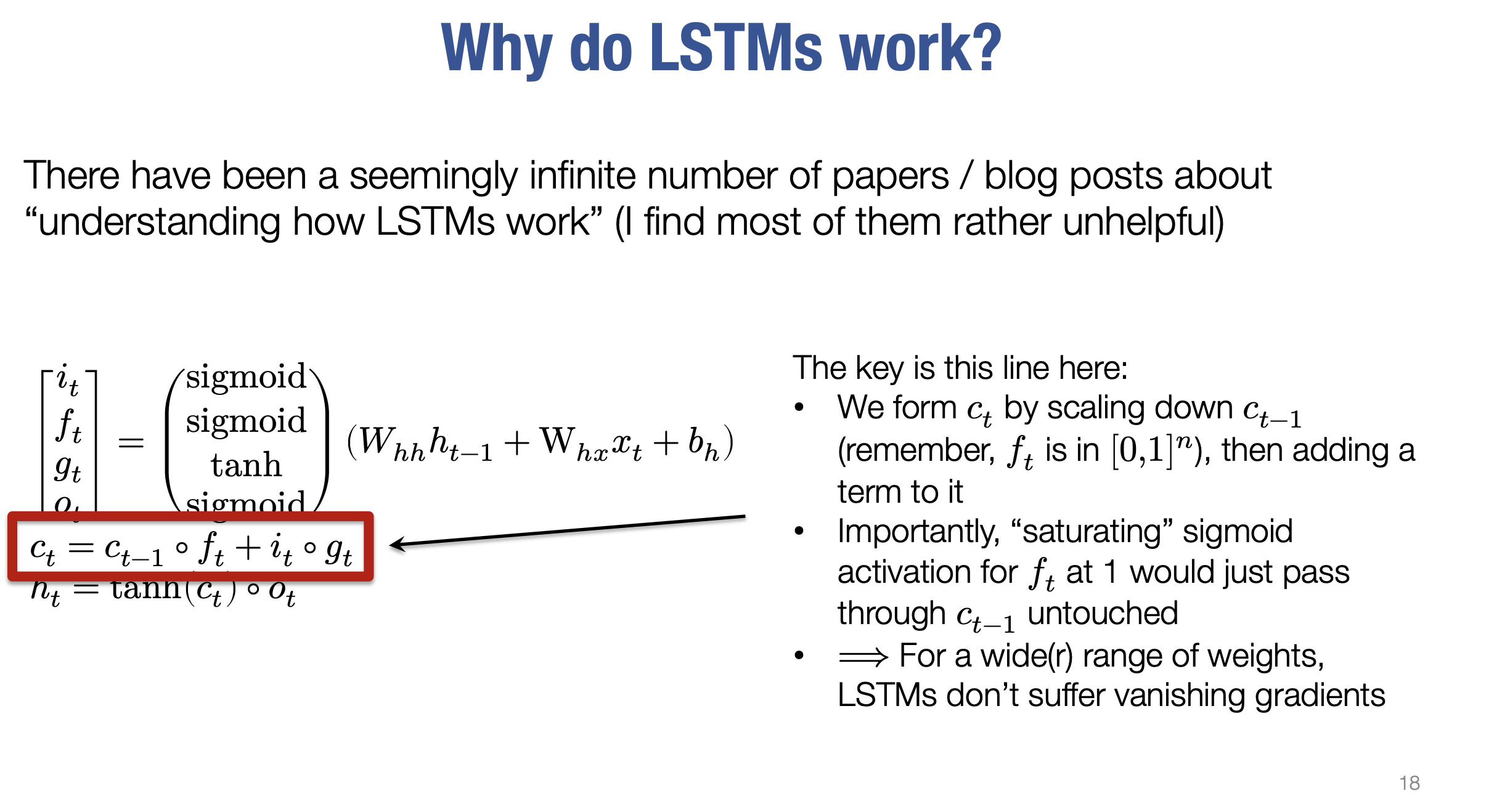

- Why LSTMs Work:

- Gates use sigmoid activations to control updates, preventing vanishing gradients.

- History:

- Introduced in 1997 by Hochreiter and Schmidhuber.

- Gained popularity after Andrej Karpathy’s 2015 blog post on LSTMs in text generation.

📐 Dimensions in Long Short-Term Memory Networks (LSTMs)

- Hidden and Cell States ($( h_t, c_t )$): Both are vectors in $( \mathbb{R}^d )$.

- Gate Vectors ($( i_t, f_t, g_t, o_t )$): Each has dimension $( d )$.

- Weight Matrices:

- $( W_{hh} )$: Maps hidden state to intermediate states ($( 4d \times d )$).

- $( W_{hx} )$: Maps input to intermediate states ($( 4d \times n )$).

- Bias Terms:

- $( b_h )$: Vector of size $( 4d )$.

- Intermediate States: Vectors $( i, f, g, o )$ in $( \mathbb{R}^d )$.

- Cell State ($( c_t )$): Vector of dimension $( d )$.

🧠 LSTM Formulas and Key Concepts

- Cell State Update:

$[

c_t = f_t \odot c_{t-1} + i_t \odot g_t

]$

- $( f_t )$: Forget gate scales $( c_{t-1} )$ ($( [0, 1] )$).

- $( i_t )$: Input gate controls new information.

- $( g_t )$: Cell gate adds bounded new terms ($( [-1, 1] )$).

- Hidden State Update:

$[

h_t = \text{tanh}(c_t) \odot o_t

]$

- Output gate ($( o_t )$) determines visible portions of $( c_t )$.

- Why LSTMs Work:

- Scales past cell states with $( f_t )$ and adds new terms via $( i_t )$.

- Mitigates vanishing gradients by preserving past information.

🔍 Lecturer’s Insights on LSTMs

- Gate names (e.g., input, forget, output) are somewhat arbitrary.

- Other structures can work fine, but LSTM equations are:

- Well-tuned and effective.

- A balance of preserving memory and avoiding vanishing gradients.

🌟 Beyond Simple Sequential Models

- Sequence-to-Sequence Models:

- Encode an input sequence into a hidden state.

- Use another RNN to decode the hidden state into the output sequence.

Example: Language translation.

- Bidirectional RNNs:

- Process input in both forward and backward directions.

- Useful for tasks like translation (not autoregressive tasks).

Example: Stacked forward and backward RNNs.

- Training Complex Models:

- Entire models (e.g., seq2seq or bidirectional RNNs) can be trained end-to-end using automatic differentiation.