🤖 Deep Reinforcement Learning

This briefing document reviews the main themes and key takeaways from a collection of sources focused on deep reinforcement learning (Deep RL), including insights from CS 285 lectures and supplementary materials.

📉 The Limitations of Data-Driven AI

Data-driven AI has achieved impressive results, primarily by mimicking human behavior using large datasets. However, this method relies on density estimation and is better at reproducing human-like outputs than surpassing or innovating beyond them.

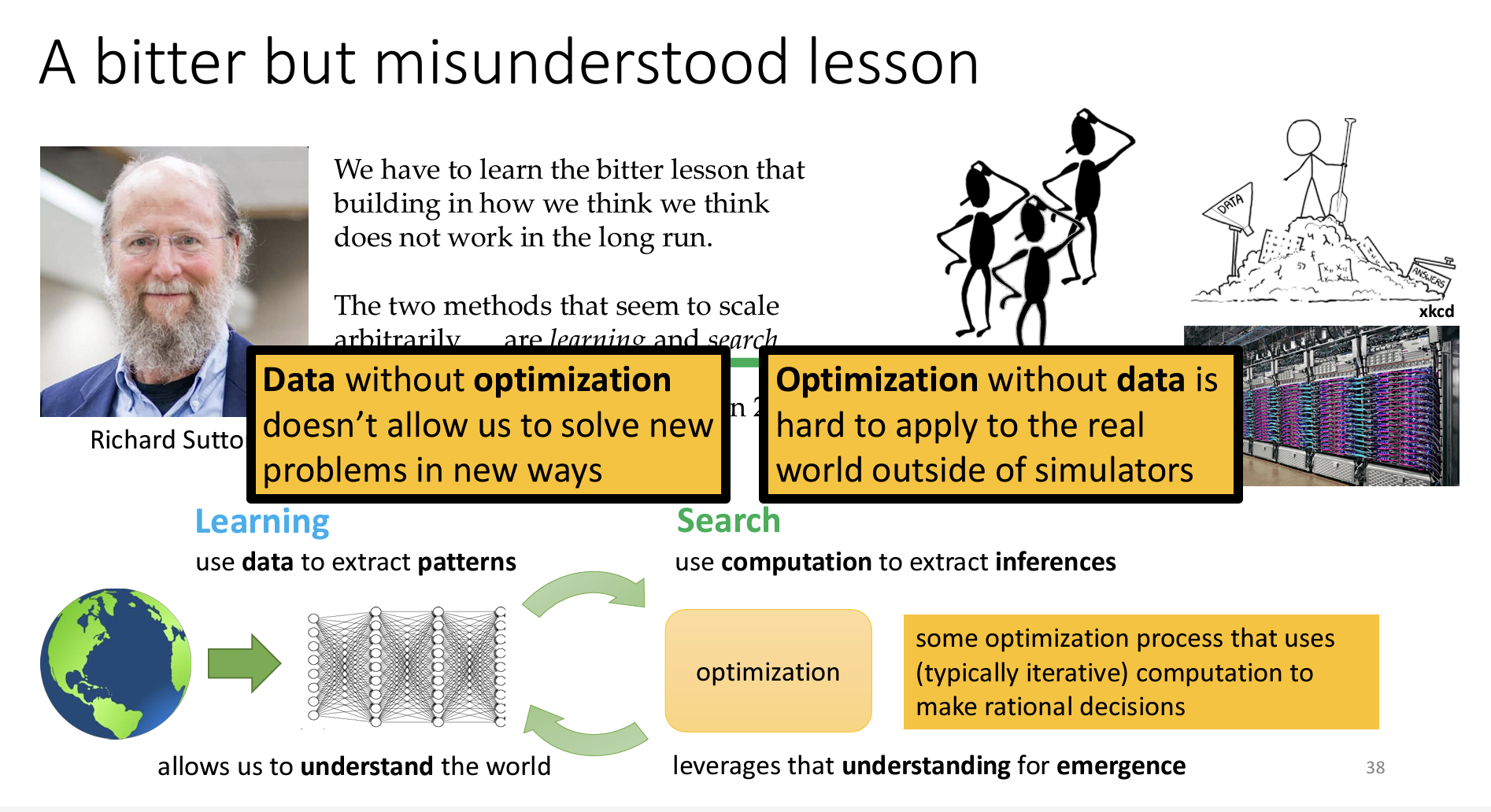

🔹 As Richard Sutton explains in “The Bitter Lesson,” scalable AI requires both learning and search:

“The two methods that seem to scale arbitrarily… are learning and search.”

- Learning enables AI to understand patterns in data.

- Search, in the RL context, allows AI to use this understanding to find new solutions.

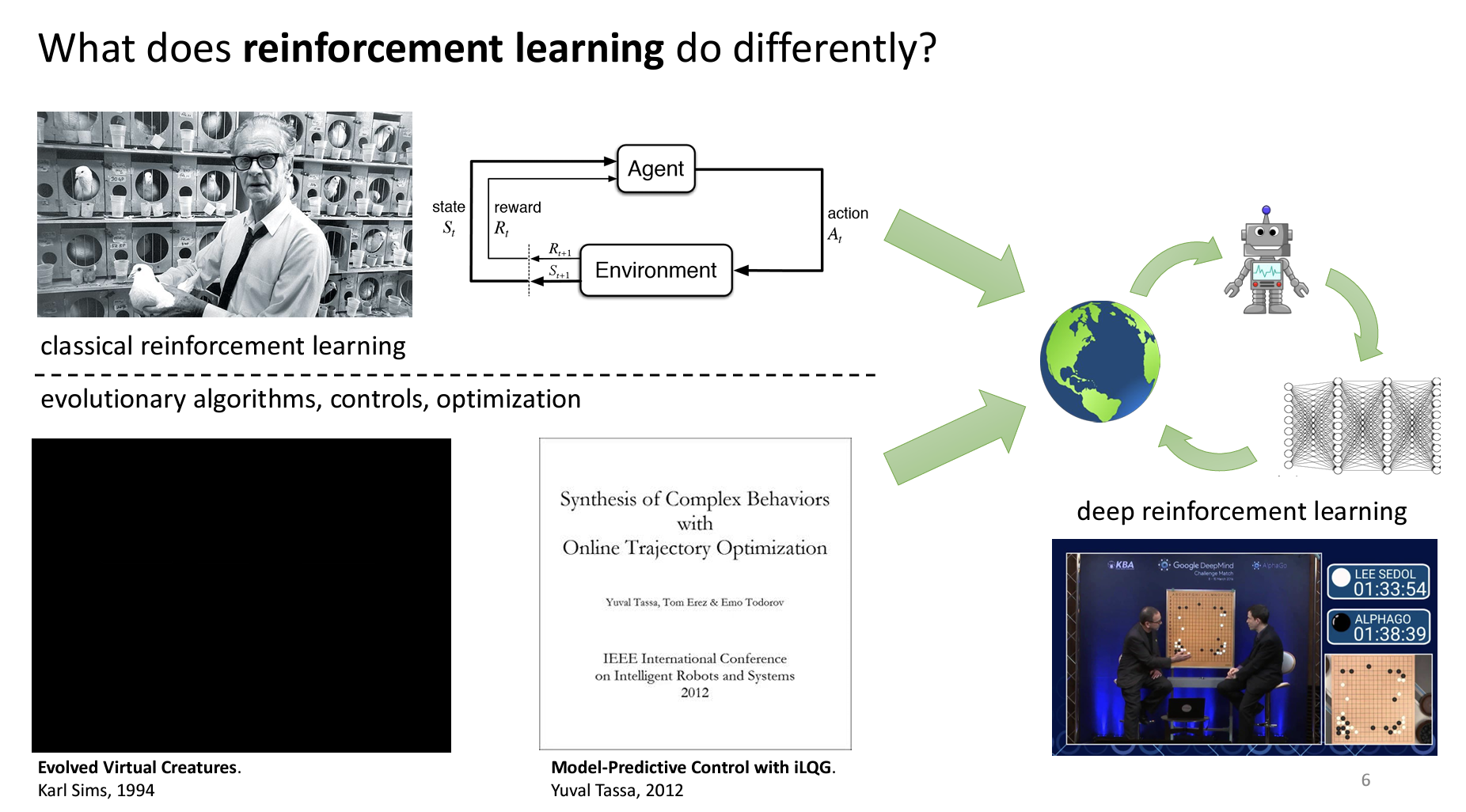

🧠 Reinforcement Learning vs. Supervised Learning

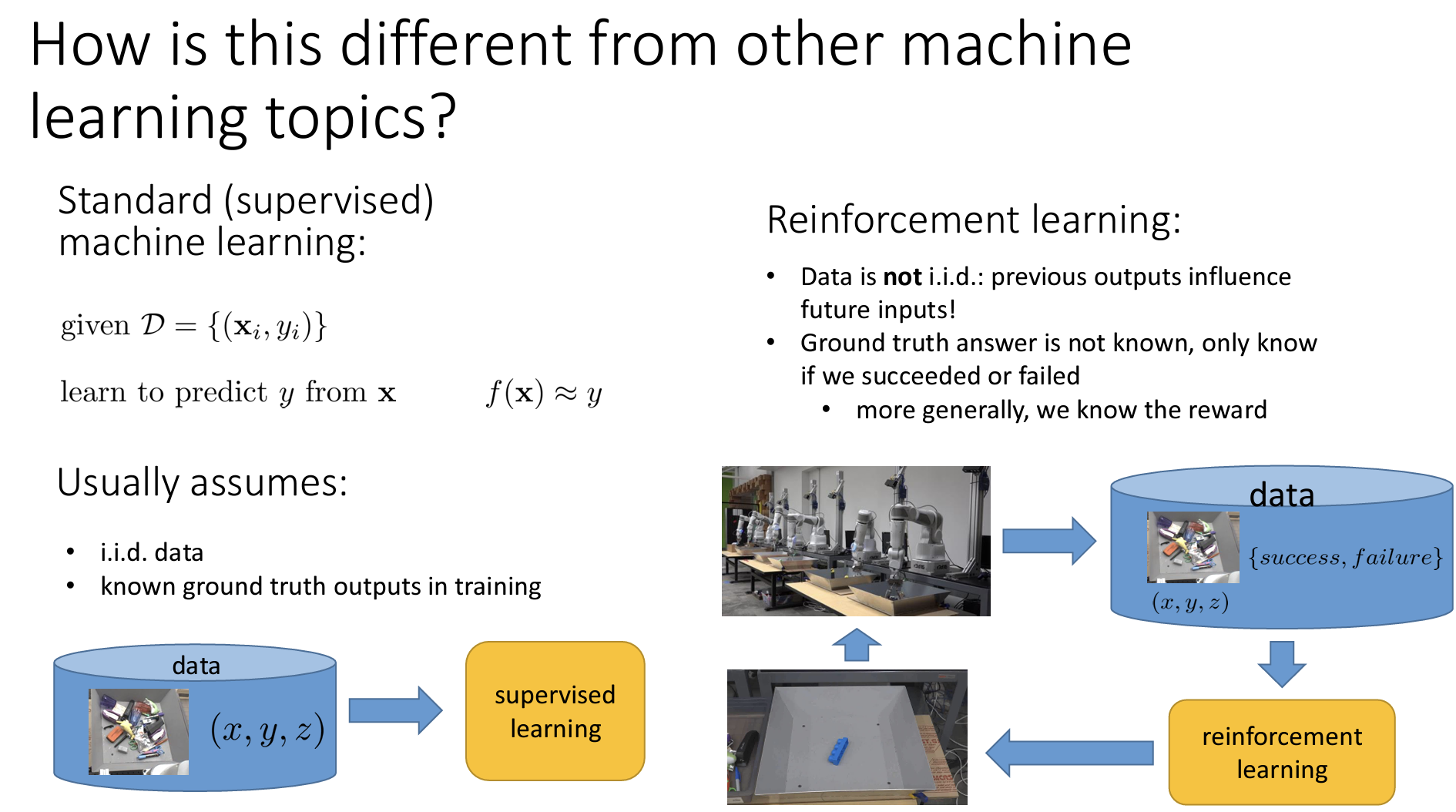

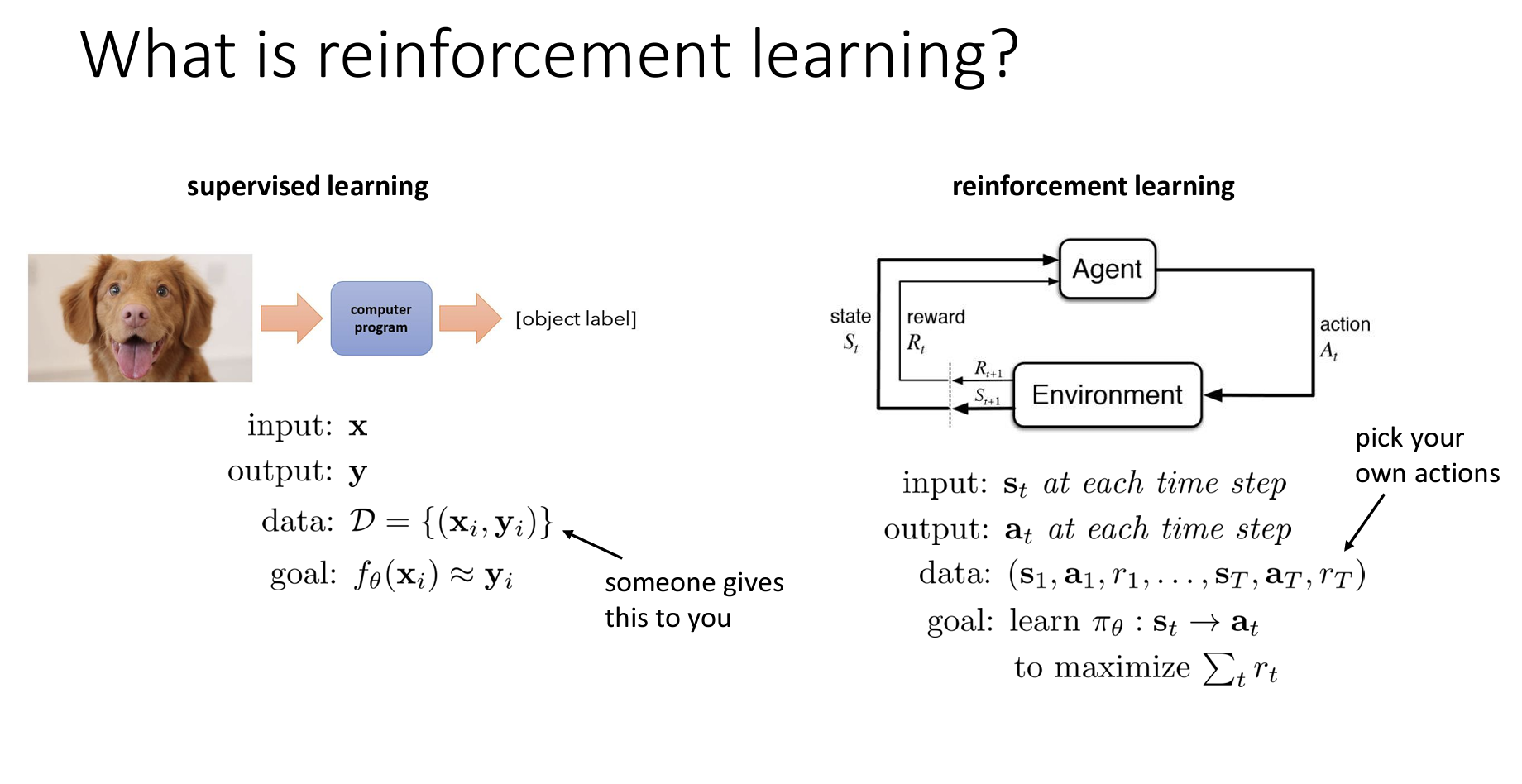

- Data:

- Supervised Learning: Uses a dataset of inputs (X) and outputs (Y) to learn a function that predicts Y from X. This function typically has parameters, like weights in a neural network, trained to match the given labels (Y).

- Reinforcement Learning: Involves an agent interacting with the environment to collect data sequences of states, actions, and rewards. The agent chooses actions based on states, and the environment responds with new states and rewards that indicate the success of those actions.

- Assumptions about Data:

- Supervised Learning: Assumes independent and identically distributed (i.i.d.) data:

- All X-Y pairs are independent of each other; one label does not affect another.

- A single function maps X to Y consistently across all samples.

- Each input (X) has a corresponding true label (Y).

- Reinforcement Learning: Does not assume i.i.d. data:

- Data is not independent; past actions influence future inputs.

- There’s no single ground truth answer, only rewards that signal success or failure.

- Supervised Learning: Assumes independent and identically distributed (i.i.d.) data:

- Goal:

- Supervised Learning: Aims to approximate a function that fits provided labels accurately.

- Reinforcement Learning: Aims to learn a policy that maximizes cumulative reward over time. This often involves strategic reasoning, where immediate actions might be sacrificed to gain higher rewards later. For instance, in a game, an agent may position itself to win rather than pursuing immediate points.

Supervised Learning is ideal when labeled data is available, and the task is to replicate patterns, such as in image classification where human-labeled data is abundant. In contrast, Reinforcement Learning is better suited to tasks requiring optimal decision-making without predefined labels, like robotic control or autonomous navigation, where the agent learns by trial and error to maximize rewards.

🤖 Deep Reinforcement Learning

Deep Reinforcement Learning combines:

- Reinforcement Learning: Provides a framework for learning optimal decision-making through trial and error and reward maximization.

- Deep Learning: Supports complex, high-dimensional data processing (e.g., images, text) through large-scale models.

Why Deep RL is Powerful:

- Data-driven learning: Enables systems to use extensive data to model real-world complexity.

- Goal-driven optimization: Helps agents optimize toward specific objectives, resulting in emergent behavior that may exceed human-designed solutions.

With deep reinforcement learning, agents are designed to adapt and innovate within complex environments, showing intelligent behavior that can generalize to new problems.

🌍 Applications and Potential of Deep RL

Deep RL holds significant promise in advancing AI, as it allows systems to learn from data and discover novel solutions. Notable applications and successes include:

- AlphaGo: Demonstrated strategic moves that surprised even expert human players.

- Traffic Control: Optimizes traffic flow for smoother transportation.

- Language Modeling: Enhances alignment of AI responses to human preferences.

- Image Generation: Advances image creation from text descriptions, as seen with tools like Stable Diffusion.

The sources highlight that deep reinforcement learning could pave the way for intelligent systems that learn from data but also use optimization to make decisions in real-world, complex environments. This fusion of data-driven insights with goal-driven optimization represents a balance that allows these systems to learn from existing patterns and apply knowledge to new challenges.

💪 The Power of Deep Reinforcement Learning

Deep RL combines deep learning’s data-processing prowess with RL’s optimization abilities, creating AI that can learn and innovate independently of human guidance.

🌟 Examples of Deep RL Applications:

- Robotics: Robots learning complex tasks like grasping, jumping, balancing, and sorting.

- Gaming: AI agents mastering games (Atari, Go) and developing strategies that even surprise human experts.

- Traffic Control: Optimizing traffic flow and reducing jams with autonomous systems.

- Language Models: Enhancing language models like ChatGPT to align better with human preferences.

- Image Generation: Improving relevance and accuracy in generated images from textual prompts.

- Chip Design: Optimizing chip layouts for cost and performance.

🚀 Beyond Reward Maximization

While reward maximization is central to RL, real-world decision-making involves additional challenges. This course covers advanced topics, including:

- Inverse Reinforcement Learning: Learning reward functions by observing behavior.

- Transfer Learning & Meta-Learning: Adapting knowledge across tasks for quicker learning.

- Prediction: Using predictive capabilities to inform better decision-making.

🌐 Deep RL as a Building Block for General Intelligence

There’s a compelling case for Deep RL as a foundation for general intelligence, supported by:

- Learning Power: Humans learn most skills, suggesting learning mechanisms could underpin general AI.

- Single Learning Algorithm Hypothesis: A flexible learning algorithm could potentially tackle various tasks.

- Deep Learning and Brain Similarities: Parallels between neural network representations and brain activity hint at a biological basis for deep learning.

- Neuroscience Support for RL: Evidence suggests RL as a mechanism for decision-making in humans and animals.

⚠️ Remaining Challenges and Future Directions

Despite its promise, Deep RL faces several hurdles:

- Data Efficiency: Deep RL needs vast data; humans learn much faster.

- Knowledge Reuse: Transfer learning in RL remains an open problem.

- Reward Function Design: Defining reward functions for complex tasks is challenging.

- Prediction: Integrating predictive models with RL methods is an ongoing area of research.

🏁 Conclusion

Deep RL offers a powerful approach to creating intelligent systems that could surpass human abilities. While challenges persist, research is paving the way toward adaptable, learning-based AI capable of solving complex problems in groundbreaking ways.

📝 Short Answer Questions

Instructions: Answer each question in 2-3 sentences.

- Why might supervised learning not be ideal for a robot learning to grasp objects?

- Supervised learning requires labeled data with optimal grasp points, but grasping depends on real-time physical interactions, which makes precise labeling challenging.

- What distinguishes reinforcement learning from supervised learning?

- Reinforcement learning learns from trial and error by interacting with an environment and adapting based on rewards, unlike supervised learning which relies on labeled data.

- How do “learning” and “search” relate to AI, as per Richard Sutton?

- Learning extracts data patterns, while search uses this knowledge to make decisions. Together, they allow AI to optimize and adapt intelligently.

- Key differences between data assumptions in supervised vs. reinforcement learning?

- Supervised learning assumes i.i.d. data with known labels; RL deals with sequential, dependent data where optimal actions are unknown.

- Role of the reward function in reinforcement learning?

- The reward function assigns value to states or actions, guiding the agent to maximize cumulative rewards over time.

- Explain “credit assignment” in RL.

- Credit assignment is about identifying which actions contributed most to an outcome, especially when rewards are delayed.

- Real-world RL applications beyond games and robotics?

- Applications include inventory management, traffic optimization, chip design, personalized recommendations, and language model training.

- Limitation of data-driven AI systems relying on density estimation?

- They replicate training data patterns but struggle with novel solutions or exceeding human behavior when data is suboptimal.

- How does imitation learning differ from learning from rewards?

- In imitation learning, agents learn from expert demonstrations without direct reward feedback, unlike reward-based learning.

- Benefits of predictive models in RL?

- Predictive models help agents anticipate consequences, plan effectively, and make sophisticated decisions.

🖊 Essay Questions

- Ethical considerations in designing reward functions for RL (e.g., autonomous driving or healthcare).

- How Deep RL bridges data-driven and optimization-based approaches; strengths and limitations.

- Model-based vs. model-free RL methods, with scenario examples for each.

- Analyze if learning, rather than explicit programming, is the essence of intelligence.

- Potential and challenges of Deep RL in creating general-purpose AI systems.

📖 Glossary of Key Terms

- Supervised Learning: Learning with labeled input-output pairs for prediction.

- Reinforcement Learning (RL): Learning by interacting with an environment to maximize rewards.

- Agent: The decision-maker in an RL system.

- Environment: The world the agent interacts with.

- State: The current situation in the environment.

- Action: Choices made by the agent that affect the environment.

- Reward: A measure of the desirability of a state or action.

- Policy: The agent’s strategy, mapping states to actions.

- Model-Free RL: Learning from experience without modeling the environment (e.g., Q-learning).

- Model-Based RL: Learning a model of the environment for planning (e.g., using state transitions).

- Deep Reinforcement Learning: RL with deep neural networks for function approximation.

- Imitation Learning: Learning from observing expert behavior.

- Inverse Reinforcement Learning: Learning reward functions from expert actions.

- Predictive Model: Anticipates future states based on current state and actions.

- Transfer Learning: Applying knowledge from one task to another related task.

- Meta-Learning: Learning to adapt quickly to new tasks using prior experience.

- Credit Assignment: Identifying which actions led to success or failure.

- Emergent Behavior: Complex outcomes from the interaction of simpler components in a system.

This document provides an in-depth look into Deep RL, from theoretical foundations to practical applications and challenges, shedding light on its potential for advancing AI.