📚 Convolutional Networks

This document reviews the main themes and key takeaways from Deep Learning Systems: Algorithms and Implementation at Carnegie Mellon University, taught by J. Zico Kolter and Tianqi Chen.

This document summarizes key concepts and practical considerations related to Convolutional Networks (CNNs) based on provided lecture excerpts and slides. CNNs are a fundamental deep learning architecture, particularly effective for spatially or temporally structured data such as images, audio, and sequences. By exploiting this structure, CNNs need far fewer parameters than fully connected networks on large inputs.

🔑 Key Themes and Ideas

🌟 Convolutional Networks as Structured Deep Networks

- CNNs are a cornerstone of deep learning and have historically been among the most impactful structured networks.

“It’s not too much of an exaggeration to say that convolutional networks are, in some sense, historically the most important structured deep network.”

- Applicable beyond images to sequences, audio, and other domains.

❌ The Problem with Fully Connected Networks for Image Data

- High Parameter Count: Flattening images leads to impractical parameter counts.

- A 256x256 RGB image has 256 × 256 × 3 = 196,608 (~200,000) inputs. Mapping it to a 1000-dimensional hidden layer takes roughly 200M parameters.

“That would require 200 million parameters.”

- Inefficient Use of Parameters: Fully connected layers don’t exploit spatial structure.

- Failure to Capture Image Invariances: Fully connected networks have no built-in notion that an image shifted by a few pixels shows the same content.
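The parameter count quoted above follows from simple arithmetic. A minimal sketch, where the helper name `fc_params` is illustrative and bias terms are ignored:

```python
def fc_params(height, width, channels, hidden_units):
    """Inputs and weights of one fully connected layer on a flattened image.

    Hypothetical helper for illustration; biases are not counted.
    """
    inputs = height * width * channels
    return inputs, inputs * hidden_units

inputs, params = fc_params(256, 256, 3, 1000)
print(inputs)  # 196608
print(params)  # 196608000
```

The exact figure is about 197 million, which the notes round to 200M.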

🛠️ Core Concepts of Convolutional Operations

👀 Local Interactions

- Hidden units are primarily influenced by nearby inputs in the previous layer.

“This hidden unit is influenced primarily by inputs near that spatial location.”

🔄 Weight Sharing

- The same weights (filter/kernel) are used across locations, reducing parameters.

“We use the same weight slid across the whole image to produce our next layer.”

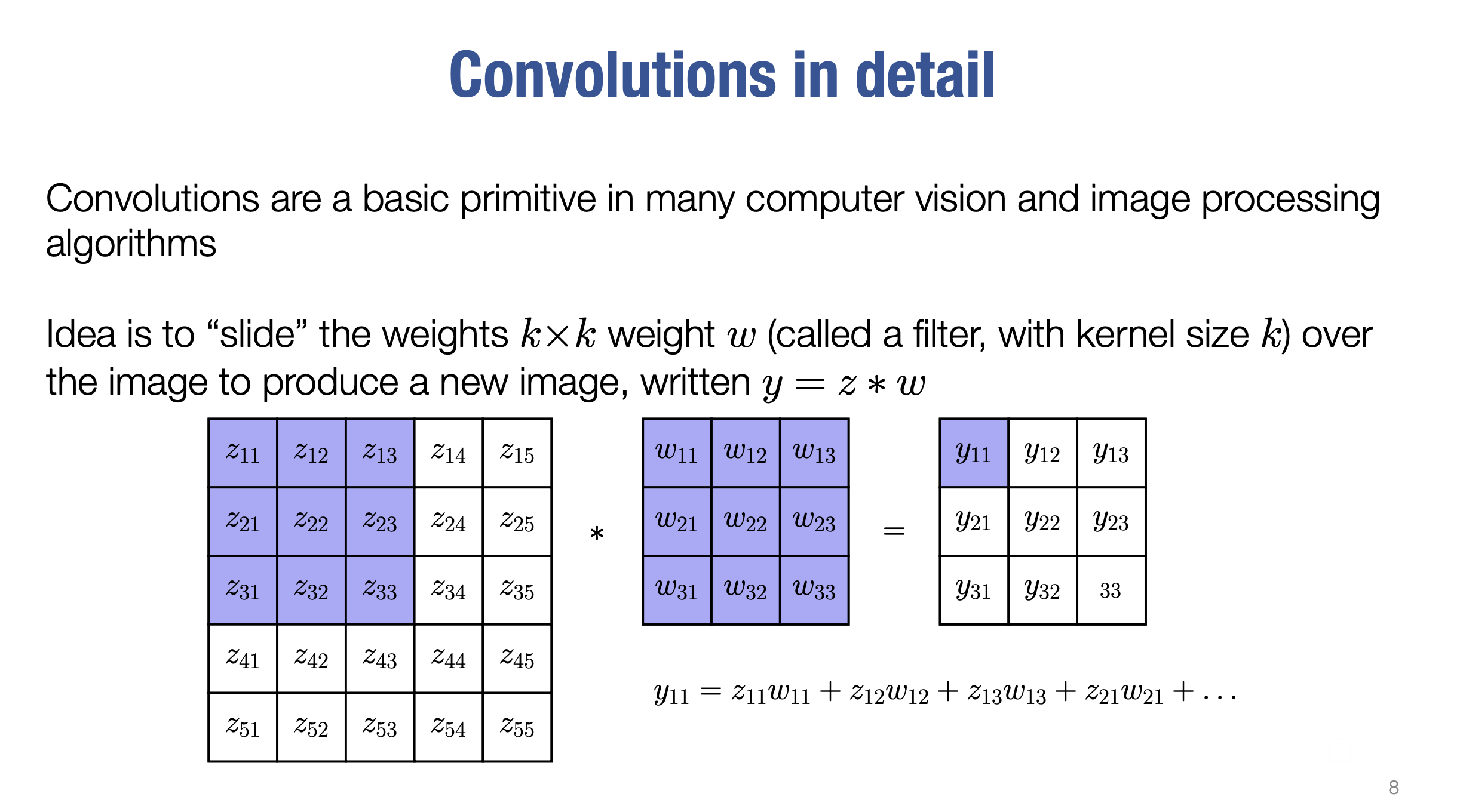

📖 Formal Definition

- A convolution slides the filter across the image; each output value is the inner product of the filter with the input block at that location.

“For the first output, we multiply the input block by the weights.”
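The definition can be written as a direct double loop. The following is a deliberately naive NumPy sketch for a single-channel image with no padding; the function name `conv2d_naive` and the square-filter assumption are illustrative choices, not taken from the lecture:

```python
import numpy as np

def conv2d_naive(x, w):
    """'Valid' single-channel convolution in the ML sense (no filter flip)."""
    H, W = x.shape
    k = w.shape[0]  # assumes a square k x k filter
    out = np.zeros((H - k + 1, W - k + 1))
    for i in range(out.shape[0]):
        for j in range(out.shape[1]):
            # Inner product of the k x k input block with the filter.
            out[i, j] = np.sum(x[i:i + k, j:j + k] * w)
    return out

x = np.arange(16, dtype=float).reshape(4, 4)
w = np.ones((3, 3))
print(conv2d_naive(x, w))
# [[45. 54.]
#  [81. 90.]]
```

The same nine weights in `w` are reused at every output location, which is the weight sharing described above.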

🤔 Convolution vs. Correlation

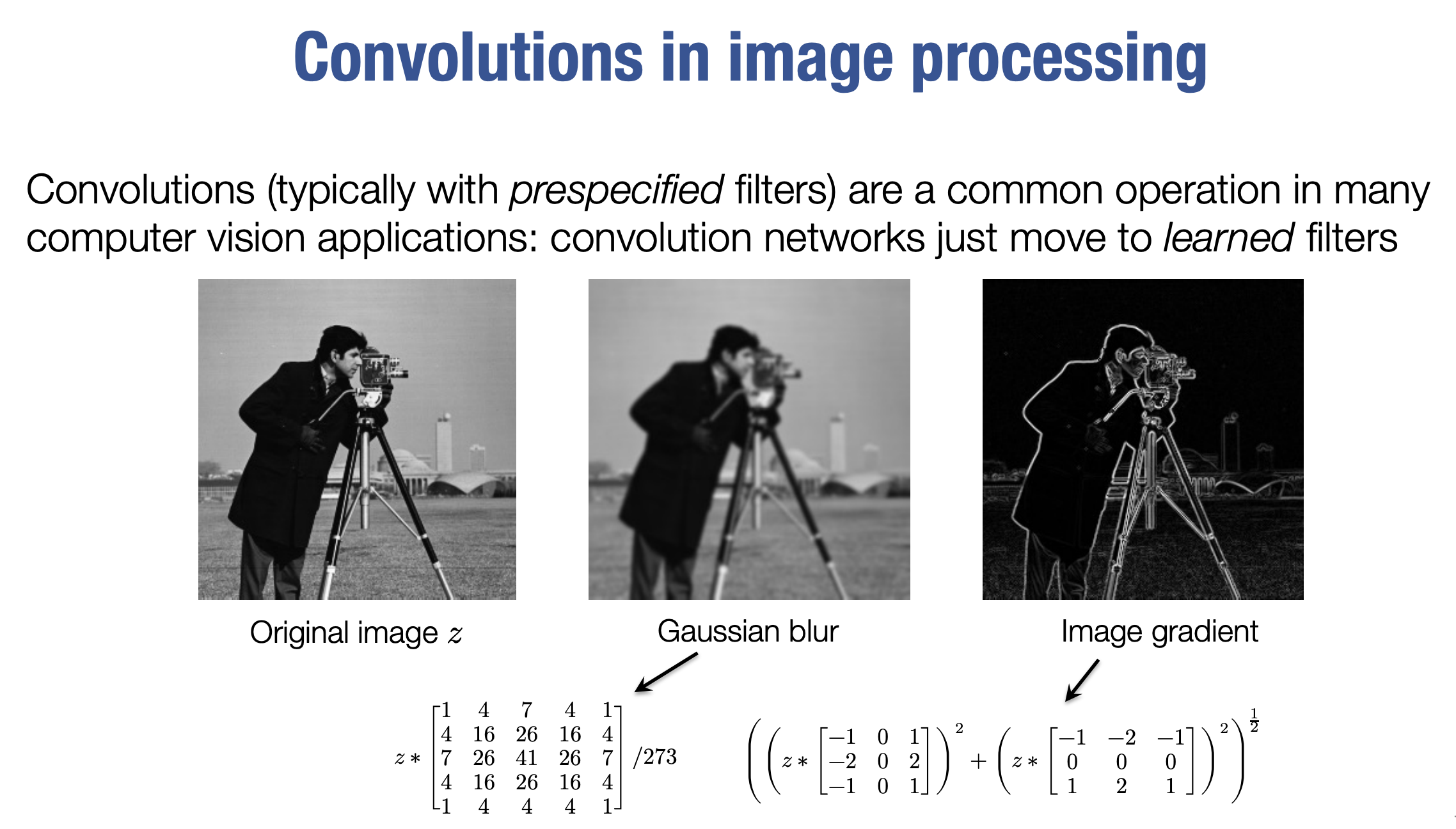

- In machine learning, “convolution” usually means cross-correlation: the filter is applied as-is, without the flip that the signal-processing definition requires.

“What we do in ML is technically a correlation.”
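The difference is easy to see in 1D with NumPy, which provides both operations. This is a small illustration with an arbitrary asymmetric filter; the values are made up:

```python
import numpy as np

x = np.array([1.0, 2.0, 3.0, 4.0, 5.0])
w = np.array([1.0, 0.0, -1.0])

# What ML frameworks call "convolution": slide the filter as-is (cross-correlation).
ml_conv = np.correlate(x, w, mode="valid")

# Signal-processing convolution: the filter is flipped before sliding.
true_conv = np.convolve(x, w, mode="valid")

print(ml_conv)    # [-2. -2. -2.]
print(true_conv)  # [2. 2. 2.]

# Flipping the filter converts one into the other.
assert np.allclose(true_conv, np.correlate(x, w[::-1], mode="valid"))
```

Because the filter weights are learned, the flip makes no practical difference: a network can simply learn the flipped filter.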

💡 Advantages of Convolutional Layers

- Reduced Parameter Count:

- A 256x256 grayscale image mapped to a hidden layer of the same size by a fully connected layer = 65,536² ≈ 4 billion parameters.

- With a 3x3 convolutional filter: only 9 parameters.

“That would require 4 billion parameters… with a convolutional structure, you need nine parameters.”

- Captures Invariances:

- Natural invariances like shift invariance are captured effectively.
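A quick way to see how a convolution treats shifts is to shift the input and compare outputs. Strictly, the convolution itself is shift-equivariant: the output moves along with the input. The sketch below checks this in 1D, assuming zero padding and a signal that stays away from the border; `conv1d_same` is an illustrative helper:

```python
import numpy as np

def conv1d_same(x, w):
    """1D 'same' convolution in the ML sense, zero padded (odd filter length)."""
    p = (len(w) - 1) // 2
    xp = np.pad(x, p)
    return np.array([xp[i:i + len(w)] @ w for i in range(len(x))])

w = np.array([1.0, 2.0, -1.0])
x = np.array([0.0, 0.0, 1.0, 2.0, 3.0, 0.0, 0.0, 0.0])
x_shifted = np.roll(x, 1)  # same pattern, moved one step to the right

y = conv1d_same(x, w)
y_shifted = conv1d_same(x_shifted, w)

# The response to the shifted input is the shifted response.
assert np.allclose(y_shifted, np.roll(y, 1))
```

A fully connected layer offers no such guarantee, since every input position has its own independent weights.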

🔧 Practical Elements of Convolutions

- Multi-Channel Convolutions:

- Input/hidden layers typically have multiple channels. Convolutions sum across input channels to produce output channels.

“Each channel is a 2D array, and we map multiple inputs to multiple outputs.”

- Filters as Matrices:

- In a multi-channel convolution, each spatial element of the filter is a matrix mapping input channels to output channels.

- Padding:

- Zeros are added around input borders to maintain image size. For a $( k \times k )$ filter with odd $( k )$, padding is $((k-1)/2)$ on each side.

“Padding ensures output is the same size as the input.”

- Downsampling (Pooling):

- Reduces resolution (and computation) via pooling (e.g., max or average pooling).

“Pooling combines a small block of values into one.”

- Group Convolutions:

- Limits input-output channel connections to reduce parameters. Depth-wise convolutions are the extreme case, where each output channel depends on a single input channel.

“Only some input channels lead to specific output channels.”

- Dilated Convolutions:

- Increases receptive field by spacing out the points a filter looks at, without adding parameters.

“Adding a dilation factor allows filters to consider a wider area.”

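The effect of these options on output size and parameter count can be summarized with two small formulas. The sketch below uses the standard output-size formula for convolutions; the function names are hypothetical, and the `stride` argument is an addition not discussed in these notes (a pooling window of size s behaves like a filter of size s applied with stride s):

```python
def conv_output_size(n, k, padding=0, stride=1, dilation=1):
    """Output length along one spatial axis for input length n and filter size k."""
    effective_k = dilation * (k - 1) + 1  # span covered by a dilated filter
    return (n + 2 * padding - effective_k) // stride + 1

def conv_weight_count(c_in, c_out, k, groups=1):
    """Number of filter weights (biases ignored) for a k x k convolution."""
    assert c_in % groups == 0 and c_out % groups == 0
    return c_out * (c_in // groups) * k * k

print(conv_output_size(32, 3))                         # 30: no padding shrinks the image
print(conv_output_size(32, 3, padding=1))              # 32: padding (k-1)/2 keeps the size
print(conv_output_size(32, 2, stride=2))               # 16: a 2x2 pooling window halves it
print(conv_output_size(32, 3, padding=2, dilation=2))  # 32: dilated filter spans 5 inputs
print(conv_weight_count(64, 64, 3))                    # 36864
print(conv_weight_count(64, 64, 3, groups=64))         # 576 (depth-wise)
```

With 64 groups, each output channel sees only one input channel, so the weight count drops by a factor of 64.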

🌈 Multi-Channel Convolutions

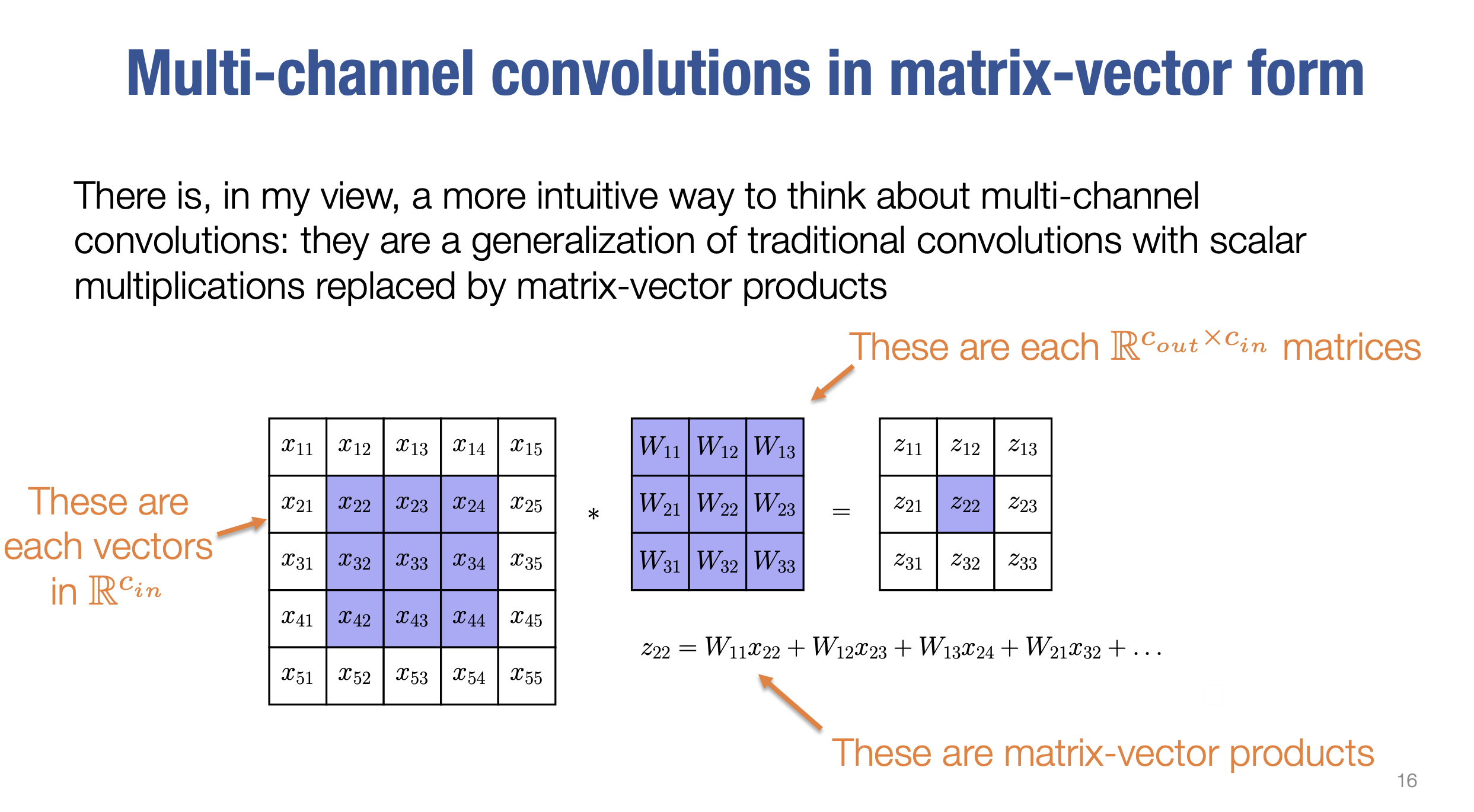

Multi-channel convolutions generalize traditional convolutions by replacing scalar multiplications with matrix-vector products. Here’s how it works step by step:

🧩 Breakdown of Multi-Channel Convolutions

- 📥 Inputs as Vectors:

Each spatial location in the input is treated as a vector, where components correspond to the values of input channels at that location.

- For $( c_{in} )$ input channels, each vector is in $( \mathbb{R}^{c_{in}} )$.

- 📤 Outputs as Vectors:

Similarly, the output at each spatial location is treated as a vector, where components correspond to the values of output channels at that location.

- For $( c_{out} )$ output channels, each vector is in $( \mathbb{R}^{c_{out}} )$.

- 🧮 Filters as Matrices:

Each element of the filter is a matrix that maps an input channel vector to an output channel vector.

- For $( c_{in} )$ input channels and $( c_{out} )$ output channels, each filter element is a matrix in $( \mathbb{R}^{c_{out} \times c_{in}} )$.

- 📊 Matrix-Vector Products:

At each output location, every filter matrix is multiplied by the input vector at the corresponding offset, and the resulting vectors are summed to produce the output vector for that location.

- 🔀 Multiple Filters:

There is a separate filter (set of weights) for every possible input-output channel pairing.

- ➕ Sum of Convolutions:

Each output channel is the sum of convolutions over all input channels.

📖 Example: Multi-Channel Convolution with a 3x3 Filter

Imagine a $( 3 \times 3 )$ filter:

- Each of the 9 elements in the filter is a matrix.

- The input at each spatial location is a vector.

- The output at each location is also a vector.

When the $( 3 \times 3 )$ filter slides across the image:

- Each matrix in the filter is multiplied by the input vector at its corresponding location.

- The nine products are summed to give the output vector for the corresponding location in the output.
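The matrix-vector view translates almost directly into code. Below is a minimal NumPy sketch with no padding; the array layouts, `(H, W, c_in)` for the input and `(k, k, c_out, c_in)` for the filter, are assumptions made here for readability and are not taken from the lecture:

```python
import numpy as np

def conv2d_multichannel(x, w):
    """'Valid' multi-channel convolution.

    x: input of shape (H, W, c_in)
    w: filter of shape (k, k, c_out, c_in); w[i, j] is a c_out x c_in matrix
    """
    H, W, c_in = x.shape
    k, _, c_out, _ = w.shape
    out_h, out_w = H - k + 1, W - k + 1
    out = np.zeros((out_h, out_w, c_out))
    for i in range(k):
        for j in range(k):
            # Input vectors seen by filter element (i, j) at every output location.
            patch = x[i:i + out_h, j:j + out_w, :]
            # Apply the matrix w[i, j] to each input vector and accumulate.
            out += patch @ w[i, j].T
    return out

rng = np.random.default_rng(0)
x = rng.standard_normal((5, 5, 3))     # c_in = 3
w = rng.standard_normal((3, 3, 4, 3))  # 3x3 filter, c_out = 4

z = conv2d_multichannel(x, w)
print(z.shape)  # (3, 3, 4)

# Cross-check one output vector against the definition: a sum of nine
# matrix-vector products.
expected = sum(w[i, j] @ x[1 + i, 2 + j] for i in range(3) for j in range(3))
assert np.allclose(z[1, 2], expected)
```

The loop runs over the nine filter elements only; each iteration handles all spatial locations at once with a single batched matrix product.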

🚀 Why Multi-Channel Convolutions Matter

This approach intuitively explains multi-channel convolutions and enables efficient implementation using matrix operations. It effectively handles data with multiple channels (e.g., RGB images, feature maps) while leveraging the power of matrix multiplications.

📉 Differentiating Convolutions

To integrate a convolution operation into a deep network, it’s essential to compute the partial derivatives (adjoints) of the operation. This includes derivatives with respect to both its inputs and weights.

- Adjoint Operations:

- Compute partial derivatives of convolutions w.r.t. inputs and weights.

“We need partial derivatives to integrate operations into a deep network.”

- Convolution as Matrix-Vector Product:

- Represent convolution as a matrix-vector product for efficient derivatives.

“Forward pass = matrix-vector product, backward pass = transpose multiplication.”

- Adjoint w.r.t. Input:

- Convolve incoming adjoint with a flipped filter.

“This equals the convolution of the adjoint term with the flipped filter.”

- Adjoint w.r.t. Weights:

- Represent derivatives as matrix-vector products, often using im2col.

“The derivative uses im2col for efficient computation.”

- im2col Operation:

- Rearranges input for efficient matrix-matrix operations.

“Efficient convolutions often duplicate memory to optimize matrix-matrix multiplication.”

- Efficiency:

- Despite memory duplication, matrix-matrix products are computationally efficient.

“Duplicating memory for matrix-matrix multiplication is often worthwhile.”

🧩 Key Concepts Breakdown

1️⃣ The Challenge

- Convolution operation: Represented as $( z = \text{conv}(x, w) )$, where:

- $( z )$: Output

- $( x )$: Input

- $( w )$: Filter weights

- Goal: Compute adjoints:

- Derivative w.r.t. $( x )$ (input)

- Derivative w.r.t. $( w )$ (weights)

- Why complex?: Derivatives involve rank-3 tensors w.r.t. rank-3 or rank-4 tensors.

2️⃣ Key Idea: Transpose of a Convolution

- Transpose concept:

- In forward pass: Multiply by an operator (e.g., matrix).

- In backward pass: Multiply by the transpose of the operator.

- Transpose of a convolution: Represented via matrix multiplication, making the operation more intuitive.

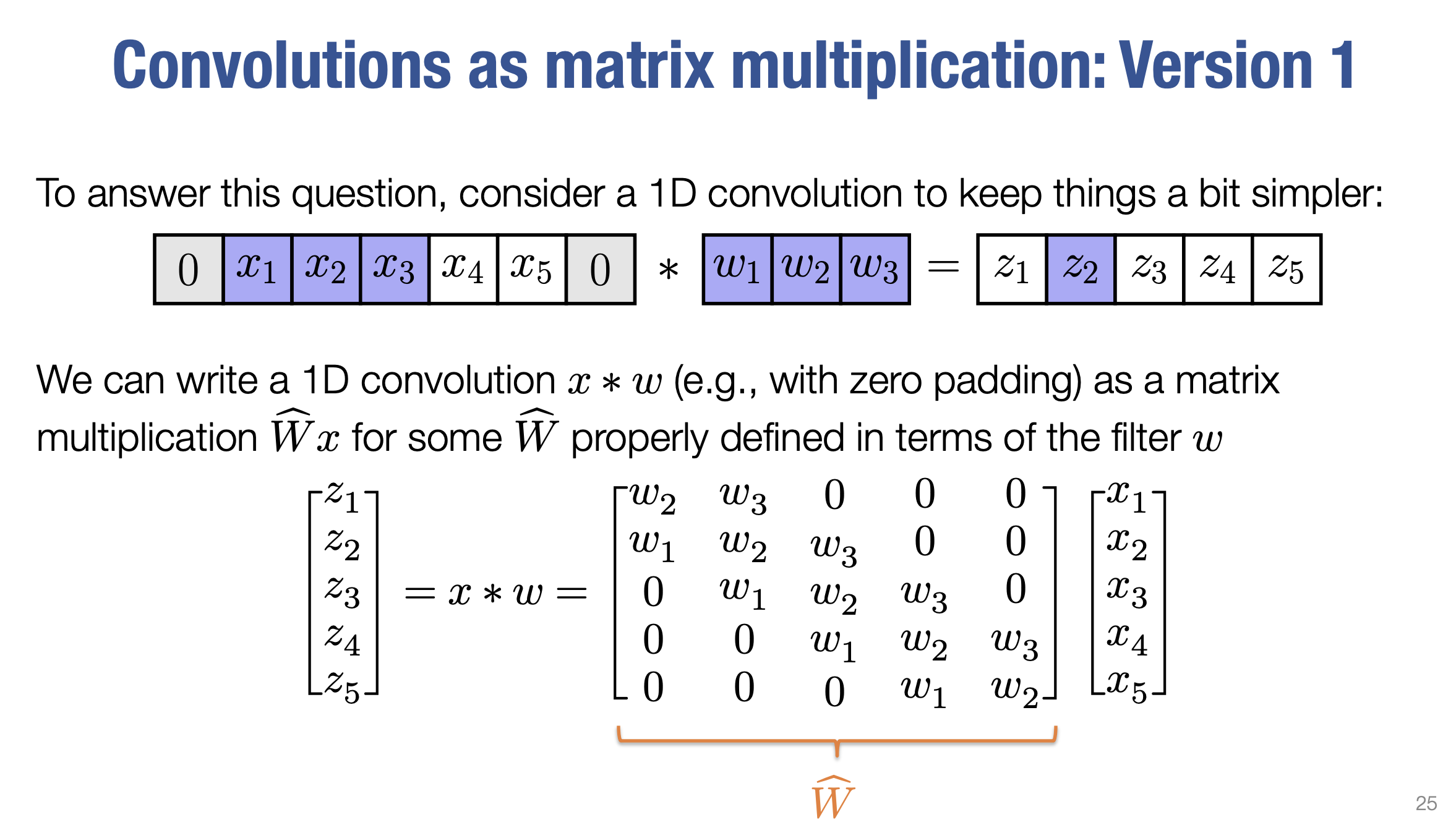

3️⃣ Convolution as Matrix Multiplication (Version 1)

- Matrix representation:

- A 1D convolution is represented as $( x \cdot W_{\text{hat}} )$, where $( W_{\text{hat}} )$ is a sparse, banded matrix created by sliding the filter weights across the input (with zero padding).

- Transpose insight:

- $( W_{\text{hat}}^T )$: Transpose corresponds to a convolution with a flipped filter.

- Example: Original filter = $([w_1, w_2, w_3])$, flipped filter = $([w_3, w_2, w_1])$.

- Adjoint computation:

- Derivative w.r.t. input ($( x )$) = Convolution of the adjoint with the flipped filter.

- Key advantage: No need to explicitly form large Jacobian matrices.
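The transpose claim can be checked numerically by building the banded matrix explicitly for a small input. In this sketch `x` is treated as a column vector, so the forward pass is written `W_hat @ x`; that orientation, the helper names, and the odd filter length are assumptions made for illustration:

```python
import numpy as np

def conv1d_same(x, w):
    """1D 'same' convolution in the ML sense, zero padded (odd filter length)."""
    p = (len(w) - 1) // 2
    xp = np.pad(x, p)
    return np.array([xp[i:i + len(w)] @ w for i in range(len(x))])

def conv_matrix(w, n):
    """Banded n x n matrix W_hat with W_hat @ x == conv1d_same(x, w)."""
    k = len(w)
    p = (k - 1) // 2
    W_hat = np.zeros((n, n))
    for i in range(n):
        for j in range(k):
            col = i + j - p
            if 0 <= col < n:  # entries that fall in the zero padding are dropped
                W_hat[i, col] = w[j]
    return W_hat

rng = np.random.default_rng(0)
w = np.array([1.0, 2.0, 3.0])
x = rng.standard_normal(6)
v = rng.standard_normal(6)  # incoming adjoint

W_hat = conv_matrix(w, len(x))
# Forward pass: multiplying by W_hat is the convolution.
assert np.allclose(W_hat @ x, conv1d_same(x, w))
# Backward pass: multiplying by the transpose is a convolution with the flipped filter.
assert np.allclose(W_hat.T @ v, conv1d_same(v, w[::-1]))
```

In practice `W_hat` is never materialized; the point of the identity is that the backward pass can reuse the convolution routine with `w[::-1]`.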

4️⃣ Convolution as Matrix Multiplication (Version 2)

- Alternative representation:

- Output = $( X_{\text{hat}} \cdot w_{\text{vec}} )$, where:

- $( X_{\text{hat}} )$: Matrix created using the im2col operation.

- $( w_{\text{vec}} )$: Vectorized filter weights.

- im2col:

- Expands the input into a matrix ($( X_{\text{hat}} )$) containing elements used in the convolution.

- Enables convolution to be performed as a matrix-matrix multiplication.

- Practicality:

- Efficient despite duplicating memory.

- $( X_{\text{hat}} )$ should be created inside the convolution operation to avoid excessive memory consumption.
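One possible way to build the im2col matrix in NumPy is with `sliding_window_view` (available in NumPy 1.20 and later). This is a single-channel sketch without padding; the helper names are illustrative and this is not necessarily how the course implements it:

```python
import numpy as np
from numpy.lib.stride_tricks import sliding_window_view

def im2col(x, k):
    """Rows of X_hat are the flattened k x k blocks of a single-channel image x."""
    windows = sliding_window_view(x, (k, k))  # (H-k+1, W-k+1, k, k), a view
    out_h, out_w = windows.shape[:2]
    # reshape copies the overlapping windows: this is the memory duplication.
    return windows.reshape(out_h * out_w, k * k), (out_h, out_w)

def conv2d_im2col(x, w):
    """Convolution as one matrix-vector product with the vectorized filter."""
    X_hat, out_shape = im2col(x, w.shape[0])
    return (X_hat @ w.reshape(-1)).reshape(out_shape)

x = np.arange(16, dtype=float).reshape(4, 4)
w = np.ones((3, 3))
X_hat, _ = im2col(x, 3)
print(X_hat.shape)          # (4, 9)
print(conv2d_im2col(x, w))
# [[45. 54.]
#  [81. 90.]]
```

Here 16 input values expand into 36 entries of `X_hat`. With several filters stacked as columns, the vectorized filter becomes a matrix and the product becomes a matrix-matrix multiplication.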

5️⃣ Practical Implementation

- Derivative w.r.t. input ($( x )$):

- Use the flipped convolution approach (transpose of $( W_{\text{hat}} )$).

- Derivative w.r.t. weights ($( w )$):

- Use the im2col approach (transpose of $( X_{\text{hat}} )$) as a matrix multiplication.

- Automatic Differentiation:

- Deep learning frameworks implement these methods to compute adjoints efficiently.
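Both adjoints can be verified against finite differences. The following is a 1D sketch under simplifying assumptions (single channel, zero padding, odd filter length); the function names are hypothetical and do not correspond to any framework's API:

```python
import numpy as np
from numpy.lib.stride_tricks import sliding_window_view

def conv1d_same(x, w):
    """1D 'same' convolution computed through an im2col-style matrix."""
    p = (len(w) - 1) // 2
    X_hat = sliding_window_view(np.pad(x, p), len(w))  # shape (n, k)
    return X_hat @ w

def conv1d_backward(x, w, v):
    """Adjoints of z = conv1d_same(x, w) given the incoming adjoint v = dL/dz."""
    p = (len(w) - 1) // 2
    grad_x = conv1d_same(v, w[::-1])                   # flipped-filter convolution
    X_hat = sliding_window_view(np.pad(x, p), len(w))
    grad_w = X_hat.T @ v                               # transpose of the im2col matrix
    return grad_x, grad_w

def numeric_grad(f, a, eps=1e-6):
    """Central finite differences of a scalar function f at a."""
    g = np.zeros_like(a)
    for i in range(a.size):
        d = np.zeros_like(a)
        d[i] = eps
        g[i] = (f(a + d) - f(a - d)) / (2 * eps)
    return g

rng = np.random.default_rng(0)
x, w, v = rng.standard_normal(7), rng.standard_normal(3), rng.standard_normal(7)

grad_x, grad_w = conv1d_backward(x, w, v)
loss = lambda x_, w_: v @ conv1d_same(x_, w_)  # scalar whose dL/dz equals v
assert np.allclose(grad_x, numeric_grad(lambda a: loss(a, w), x), atol=1e-6)
assert np.allclose(grad_w, numeric_grad(lambda a: loss(x, a), w), atol=1e-6)
```

Neither adjoint forms a Jacobian: the input gradient is another convolution, and the weight gradient is a single product with the transposed im2col matrix.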

🏁 Summary

- Differentiating convolutions involves:

- Using the transpose of the convolution to compute derivatives w.r.t. $( x )$.

- Representing convolutions as matrix multiplications to compute derivatives w.r.t. $( w )$.

- Efficiency: These methods eliminate the need for explicitly computing large, sparse Jacobian matrices.

- Essential for DL frameworks: Enable backpropagation and efficient optimization of convolution operations.