Neural Architecture Search

Modern AI models are becoming increasingly large, demanding substantial computational resources and memory. This creates a gap between the computational demands of these models and the capabilities of the available hardware. Neural architecture search (NAS) addresses this gap by automatically finding architectures that reach the required accuracy within a given budget for model size, memory footprint, latency, and energy consumption.

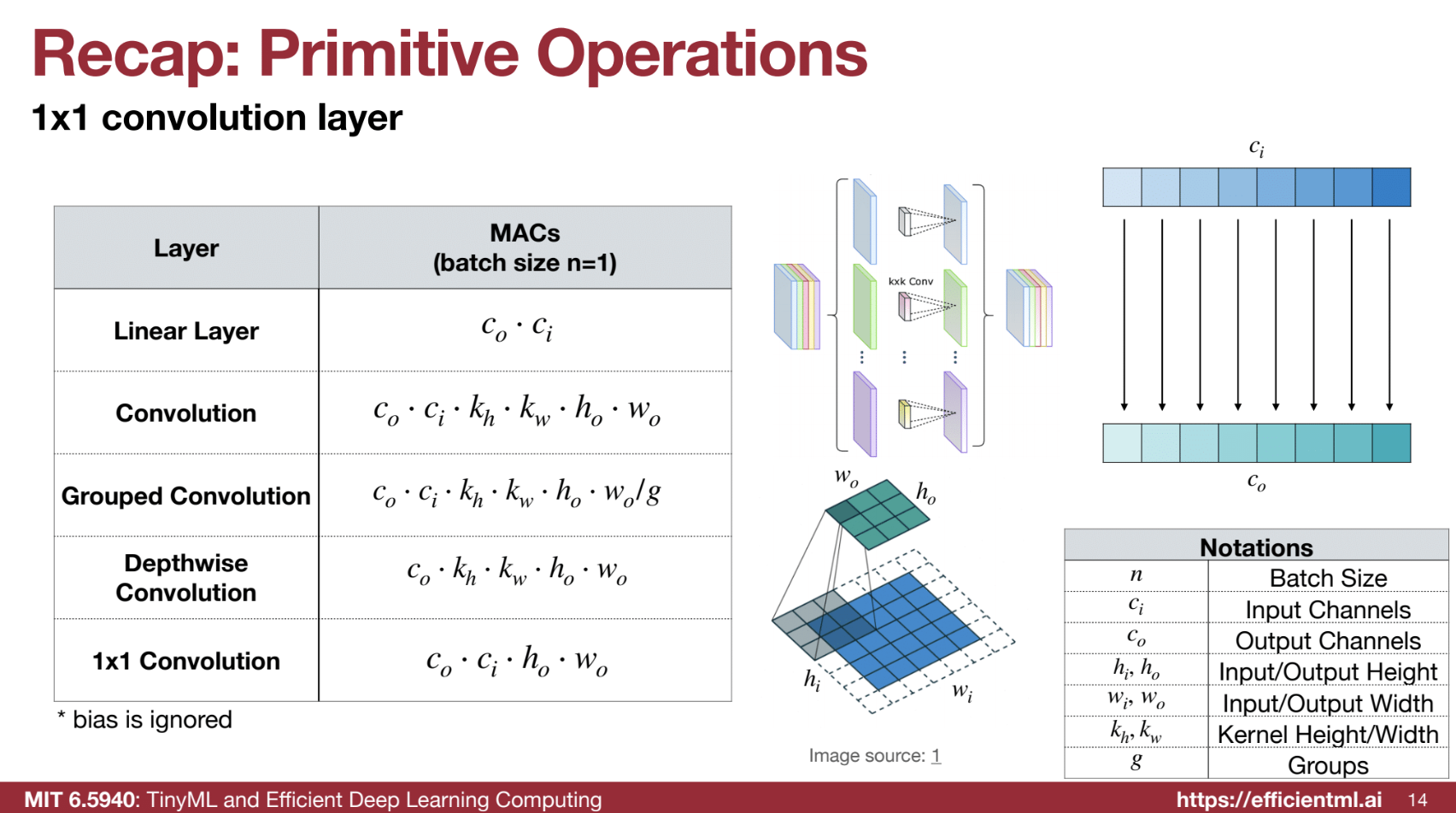

Primitive Operations 🔧

- Fully Connected Layers: Connect every neuron in the input to every neuron in the output, characterized by weight matrices and bias vectors. Example:

  - Input: $X$ with shape $(n, c_i)$ (batch size $n$, input channels $c_i$)

  - Output: $Y$ with shape $(n, c_o)$ (output channels $c_o$)

  - Weight: $W$ with shape $(c_o, c_i)$; bias: $b$ with shape $(c_o)$

- Convolutional Layers: Extract features using kernels (e.g., 2D convolutions with input $(C_{in}, H, W)$ and weight $(K_H, K_W, C_{in}, C_{out})$).

- Group Convolutions: Divide the input channels into groups and convolve each group independently.

- Depthwise Convolutions: Special case of group convolution in which each input channel is its own group.

- 1x1 Convolutions: Perform channel projection without using spatial information.
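To make the differences between these primitives concrete, the sketch below counts weights and multiply-accumulate operations (MACs). The helper names `linear_params` and `conv2d_cost`, the channel counts, and the 56x56 output size are assumptions chosen for illustration; bias terms are ignored for the convolutions.

```python
def linear_params(c_i, c_o):
    """Parameters of a fully connected layer: weight (c_o, c_i) plus bias (c_o)."""
    return c_o * c_i + c_o


def conv2d_cost(c_in, c_out, k_h, k_w, h_out, w_out, groups=1):
    """Return (weights, MACs) of a 2D convolution, ignoring bias."""
    if c_in % groups or c_out % groups:
        raise ValueError("channel counts must be divisible by groups")
    # Each output channel only sees the c_in / groups input channels of its group.
    weights = (c_in // groups) * c_out * k_h * k_w
    macs = weights * h_out * w_out
    return weights, macs


# Illustrative layer: 64 -> 64 channels on a 56x56 output feature map.
standard = conv2d_cost(64, 64, 3, 3, 56, 56)
grouped = conv2d_cost(64, 64, 3, 3, 56, 56, groups=4)
depthwise = conv2d_cost(64, 64, 3, 3, 56, 56, groups=64)
pointwise = conv2d_cost(64, 64, 1, 1, 56, 56)

for name, (weights, macs) in [
    ("standard 3x3", standard),
    ("grouped 3x3 (g=4)", grouped),
    ("depthwise 3x3", depthwise),
    ("1x1", pointwise),
]:
    print(f"{name:>18}: {weights:>6} weights, {macs:>10} MACs")
```

With `groups` equal to the number of channels, the grouped case reduces to the depthwise case, which is why depthwise convolution is so much cheaper than a standard convolution of the same kernel size.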

Building Blocks 🏗️

These blocks are constructed using the primitive operations and are commonly used in neural network architectures:

- ResNet50 Bottleneck Block 🏗️: This block consists of three convolutional layers. It uses a 1x1 convolution to reduce the number of channels by 4x, followed by a 3x3 convolution, and then another 1x1 convolution to expand the channels back. A bypass connection adds the input to the output of the three layers. This design reduces computation by feeding a reduced feature map to the 3x3 convolution.

- ResNeXt Block 🔀: This block replaces the 3x3 convolution in the ResNet block with a 3x3 grouped convolution. This is equivalent to having multiple paths in the block, increasing the capacity.

- MobileNet Depthwise Separable Block 📱: This block uses a depthwise convolution to capture spatial information and a 1x1 convolution to capture channel-wise interactions. This separates the modeling of spatial and channel information, reducing computation.

- MobileNetV2 Inverted Bottleneck Block 🔄: This block is similar to the bottleneck block but expands the number of channels before the depthwise convolution and then reduces them back. This is because depthwise convolution has low expressiveness, so expanding the channels compensates for this while keeping computation low. However, this design is not memory-efficient, especially for training.

- ShuffleNet Block 🔀: This block uses a 1x1 group convolution to further reduce costs and then uses channel shuffling to exchange information between different groups.

- Transformer Multi-Head Self-Attention (MHSA) 🔍: This block projects input tensors into query (Q), key (K), and value (V) tensors. It then calculates attention scores between each token using scaled dot-product attention and concatenates the output values of the heads. This allows the model to attend to all other tokens in the sequence using a single layer, providing a large receptive field.
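The savings claimed for the bottleneck and depthwise separable blocks can be checked with a small calculation. The sketch below counts MACs per output position, assuming stride 1, 256 channels, and no bias or skip-add cost; the function names are made up for this example.

```python
def conv_macs_per_pixel(c_in, c_out, k, groups=1):
    """MACs needed to produce one output position of a k x k convolution."""
    return (c_in // groups) * c_out * k * k


def bottleneck_macs(channels, reduction=4):
    """1x1 reduce -> 3x3 -> 1x1 expand, as in the ResNet50 bottleneck."""
    mid = channels // reduction
    return (
        conv_macs_per_pixel(channels, mid, 1)
        + conv_macs_per_pixel(mid, mid, 3)
        + conv_macs_per_pixel(mid, channels, 1)
    )


def depthwise_separable_macs(c_in, c_out, k=3):
    """Depthwise k x k followed by a 1x1 pointwise convolution."""
    depthwise = conv_macs_per_pixel(c_in, c_in, k, groups=c_in)
    pointwise = conv_macs_per_pixel(c_in, c_out, 1)
    return depthwise + pointwise


plain = conv_macs_per_pixel(256, 256, 3)  # a single full 3x3 convolution
print("plain 3x3:          ", plain)
print("bottleneck:         ", bottleneck_macs(256))
print("depthwise separable:", depthwise_separable_macs(256, 256))
```

Under these assumptions both blocks need roughly an eighth to a ninth of the MACs of a full 3x3 convolution at the same width.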

Comparison Table of Building Blocks 📊

| Building Block | Key Operations | Main Benefit | Drawbacks |
|---|---|---|---|
| ResNet50 Bottleneck | 1x1 conv (reduce), 3x3 conv, 1x1 conv (expand), bypass | Reduces computation by using 1x1 conv to shrink channels before the 3x3 conv | |
| ResNeXt | 1x1 conv (reduce), 3x3 grouped conv, 1x1 conv (expand) | Increases capacity by using grouped convolutions, which act like multiple paths | |
| MobileNet Depthwise Separable | Depthwise conv, 1x1 conv | Separates spatial and channel information, reducing computation | |
| MobileNetV2 Inverted Bottleneck | 1x1 conv (expand), depthwise conv, 1x1 conv (reduce) | Uses depthwise convolution, which has low computation cost, and expands channels to compensate for its low expressiveness | Not memory-efficient, especially during training, due to large activation size |
| ShuffleNet | 1x1 group conv, channel shuffle | Reduces cost by using group conv and lets different groups interact through channel shuffle | |
| Transformer MHSA | Linear projections for Q, K, V; scaled dot-product attention; concatenation | Allows each token to attend to all other tokens in one layer, providing a large receptive field | Computationally expensive; scales quadratically with the number of tokens |
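The quadratic cost of MHSA comes from the token-by-token score matrix. The NumPy sketch below is a minimal illustration, not a full Transformer layer: it omits the output projection, masking, and batching, and the shapes and random weights are assumptions.

```python
import numpy as np


def softmax(x, axis=-1):
    """Numerically stable softmax."""
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)


def multi_head_self_attention(x, w_q, w_k, w_v, num_heads):
    """x: (n_tokens, d_model). Returns (output, attention weights)."""
    n_tokens, d_model = x.shape
    d_head = d_model // num_heads

    def split_heads(t):
        # (n_tokens, d_model) -> (num_heads, n_tokens, d_head)
        return t.reshape(n_tokens, num_heads, d_head).transpose(1, 0, 2)

    q, k, v = split_heads(x @ w_q), split_heads(x @ w_k), split_heads(x @ w_v)
    # One (n_tokens x n_tokens) score matrix per head: the quadratic term.
    scores = q @ k.transpose(0, 2, 1) / np.sqrt(d_head)
    attn = softmax(scores, axis=-1)
    out = attn @ v  # (num_heads, n_tokens, d_head)
    # Concatenate the heads back into (n_tokens, d_model).
    out = out.transpose(1, 0, 2).reshape(n_tokens, d_model)
    return out, attn


rng = np.random.default_rng(0)
x = rng.standard_normal((6, 8))  # 6 tokens, model width 8
w_q, w_k, w_v = (rng.standard_normal((8, 8)) for _ in range(3))
out, attn = multi_head_self_attention(x, w_q, w_k, w_v, num_heads=2)
print(out.shape, attn.shape)
```

Doubling the number of tokens quadruples the size of `attn`, which is the scaling behavior listed in the Drawbacks column.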

Neural Architecture Search (NAS) 🤖

- Motivation: Trade-offs between latency, accuracy, and resource efficiency. Manual design is time-consuming and suboptimal for specific hardware constraints.

- Components:

  - Search Space: Candidate architectures.

  - Search Strategy: Methods include:

    - Grid Search: Exhaustive but expensive.

    - Random Search: Simpler but less directed.

    - Reinforcement Learning: Uses rewards to guide exploration.

    - Gradient Descent: Optimizes differentiable architectures.

    - Evolutionary Search: Mutates and selects best-performing architectures.

  - Performance Estimation: Predicts architecture performance efficiently.
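The three components fit together in a simple loop. The sketch below uses random search, the simplest strategy listed, over a toy search space. `SEARCH_SPACE` and especially `estimate_performance` are hypothetical stand-ins: a real estimator would train the candidate or use a learned predictor rather than a closed-form score.

```python
import random

# Search space: every combination of these choices is a candidate architecture.
SEARCH_SPACE = {
    "kernel_size": [3, 5, 7],
    "depth": [2, 3, 4],
    "width": [16, 32, 64],
}


def sample_architecture(rng):
    """Search strategy (random search): pick each choice uniformly at random."""
    return {name: rng.choice(choices) for name, choices in SEARCH_SPACE.items()}


def estimate_performance(arch):
    """Hypothetical performance estimator: rewards capacity, penalizes cost."""
    capacity = arch["kernel_size"] * arch["depth"] * arch["width"]
    return capacity ** 0.5 - 0.002 * capacity


def random_search(num_trials, seed=0):
    rng = random.Random(seed)
    best_arch, best_score = None, float("-inf")
    for _ in range(num_trials):
        arch = sample_architecture(rng)
        score = estimate_performance(arch)
        if score > best_score:
            best_arch, best_score = arch, score
    return best_arch, best_score


best_arch, best_score = random_search(num_trials=50)
print(best_arch, round(best_score, 3))
```

Swapping `sample_architecture` for a mutation step or a learned controller turns the same loop into evolutionary or reinforcement-learning search.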

Search Spaces 🧩

- Cell-Level Search: Searches for a single cell design, which is then repeated throughout the network.

- Network-Level Search: Searches over the entire architecture, which gives more flexibility.

Hardware-Aware NAS ⚙️

- Challenges: Searching directly on target tasks/hardware is expensive; proxies (like FLOPs) may be misleading.

- Frameworks:

  - ProxylessNAS:

    - Builds an over-parameterized network.

    - Learns probabilities for paths and selects the best.

  - Once-for-All (OFA):

    - Trains a super-network to derive sub-networks for various hardware.
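The path-probability idea in ProxylessNAS can be sketched as follows: each candidate operation in a layer has an architecture parameter, a softmax turns these parameters into probabilities, one path is sampled per training step, and the most probable path is kept at the end. The operation names and the `alpha` values below are made up for illustration; in practice `alpha` is learned rather than fixed.

```python
import numpy as np

# Hypothetical candidate operations for one layer of the over-parameterized network.
CANDIDATE_OPS = ["conv3x3", "conv5x5", "conv7x7", "skip"]


def path_probabilities(alpha):
    """Softmax over the architecture parameters of one layer."""
    z = alpha - alpha.max()
    p = np.exp(z)
    return p / p.sum()


def sample_path(alpha, rng):
    """Sample one active path so only that operation is computed this step."""
    return int(rng.choice(len(alpha), p=path_probabilities(alpha)))


def select_best_path(alpha):
    """After the search, keep the operation with the highest probability."""
    return CANDIDATE_OPS[int(np.argmax(alpha))]


alpha = np.array([0.1, 1.2, -0.3, 0.0])  # illustrative values, not learned ones
rng = np.random.default_rng(0)
probs = path_probabilities(alpha)
active = sample_path(alpha, rng)
print(dict(zip(CANDIDATE_OPS, probs.round(3))))
print("sampled:", CANDIDATE_OPS[active], "| selected:", select_best_path(alpha))
```

Activating a single sampled path per step keeps memory close to that of training one ordinary network, even though the over-parameterized network holds every candidate.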


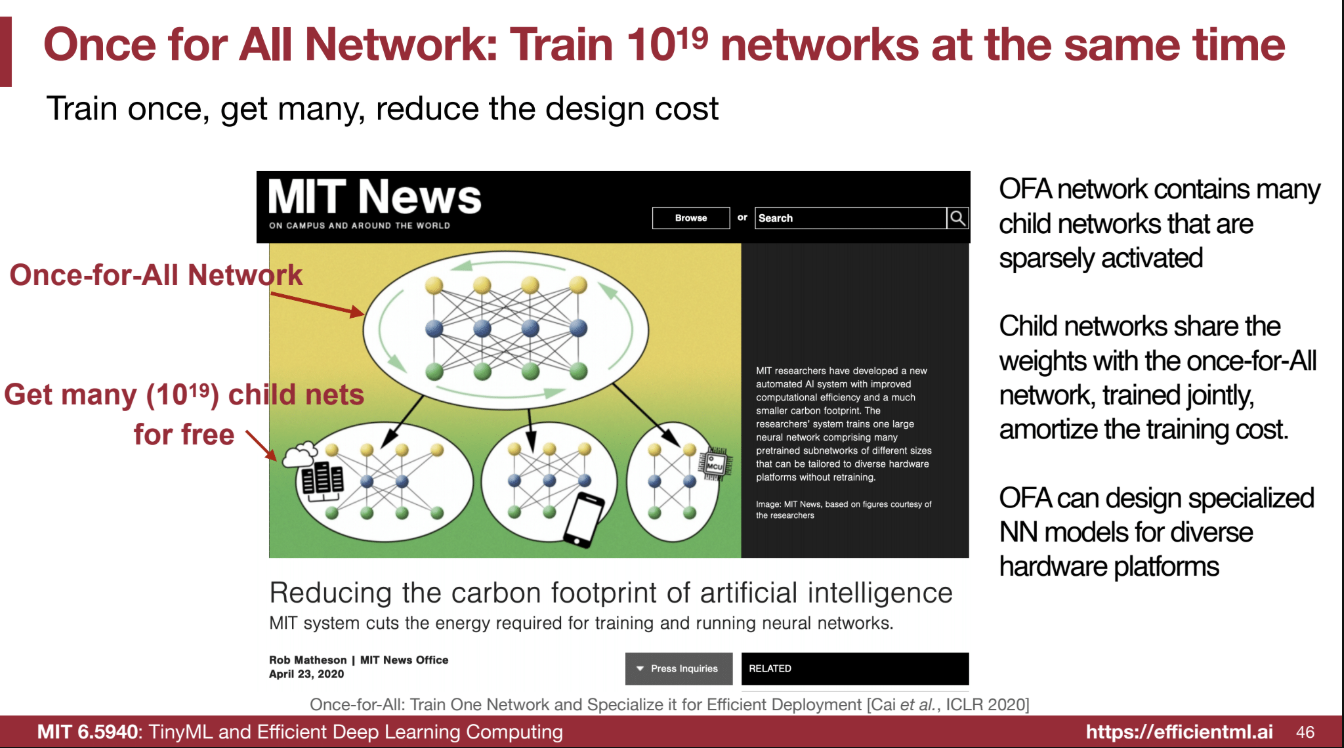

Once-for-All (OFA) Network Overview 🌐

The Once-for-All (OFA) network is a method for training a single neural network that can be specialized for various hardware platforms and performance requirements without retraining. The key idea is to train a large “supernetwork” that contains many sub-networks, which can then be extracted and deployed on different devices. This approach aims to reduce the design cost of creating specialized models for diverse hardware platforms.

Core Principles 🔑:

- Train Once, Get Many 🎯: Instead of training separate networks for each hardware platform, the OFA approach trains one large network, which can then be used to derive many sub-networks. This amortizes the training cost across multiple search iterations.

- Sparsely Activated Child Networks ⚡: The OFA network contains many child networks that are sparsely activated. This means that during inference, only a small portion of the network is active, which reduces computation and memory usage.

- Weight Sharing 🤝: The child networks share weights with the parent OFA network and are trained jointly. This ensures that even when training a smaller sub-network, the weights of the larger network are also updated.

Implementation Details ⚙️:

The OFA network is designed to be elastic across multiple dimensions, allowing for the creation of different sub-networks with varying sizes and complexities:

- Elastic Kernel Size 🔲:

  - The network starts with a large kernel size (e.g., 7x7).

  - Smaller kernels (e.g., 5x5, 3x3) are taken from the center of the larger kernel and passed through a transformation matrix, so every kernel size shares the larger kernel’s weights.

  - For example, a 5x5 kernel is the inner part of a 7x7 kernel, and a 3x3 kernel is the inner part of a 5x5 kernel.

- Elastic Depth 🔼:

  - The network supports varying depths by allowing layers to be skipped.

  - For instance, the depth can be 4, 3, or 2 layers; the later layers are the ones skipped when the depth is reduced.

- Elastic Width (Number of Channels) 📉:

  - The network begins with the full number of channels and progressively shrinks the width by sorting channels according to their importance.

  - The most important channels are kept when reducing the width, and less important channels are pruned away.

- Elastic Resolution 🖼️:

  - The network can handle different input resolutions by randomly sampling the input image size for each batch.

  - All resolutions share the same weights; only the amount of computation (MACs and FLOPs) changes with the input size.
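Two of these elastic dimensions are easy to show on raw weight tensors. The NumPy sketch below crops a smaller kernel from the center of a larger one, optionally applies a transformation matrix to the flattened crop, and keeps the most important output channels. The weight layout `(c_out, c_in, K, K)`, the use of the L1 norm as the importance score, and all function names are assumptions for illustration.

```python
import numpy as np


def center_crop_kernel(kernel, size):
    """Take the inner size x size part of a (c_out, c_in, K, K) kernel."""
    k = kernel.shape[-1]
    start = (k - size) // 2
    return kernel[..., start:start + size, start:start + size]


def shrink_kernel(kernel, size, transform=None):
    """Elastic kernel size: center crop, then an optional (size*size, size*size) transform."""
    sub = center_crop_kernel(kernel, size)
    if transform is None:
        return sub
    flat = sub.reshape(*sub.shape[:2], size * size)
    return (flat @ transform).reshape(sub.shape)


def keep_top_channels(weight, num_keep):
    """Elastic width: keep the output channels with the largest L1 norm."""
    importance = np.abs(weight).sum(axis=(1, 2, 3))
    order = np.argsort(-importance, kind="stable")[:num_keep]
    return weight[order], order


kernel7 = np.arange(49, dtype=float).reshape(1, 1, 7, 7)
kernel5 = shrink_kernel(kernel7, 5)   # inner part of the 7x7 kernel
kernel3 = shrink_kernel(kernel5, 3)   # inner part of the 5x5 kernel
print(kernel5.shape, kernel3.shape)

weight = np.array([1.0, -5.0, 3.0, 0.5]).reshape(4, 1, 1, 1)
kept, kept_idx = keep_top_channels(weight, num_keep=2)
print("kept channels:", kept_idx.tolist())
```

Passing an identity matrix as `transform` reproduces the plain center crop; a trained transform lets the smaller kernel deviate from the raw sub-weights while still sharing storage with the larger one.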

Training Process 🏋️‍♂️:

During training, the OFA network samples different sub-networks with varying kernel sizes, depths, widths, and resolutions. Because all of these sub-networks share weights, the network learns to support many architectures at once, and the training procedure helps ensure that the largest sub-networks still perform well after the smaller ones have been trained.
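The sampling step can be sketched as below. The choice lists, the number of units, and the use of an expand ratio to stand in for width are illustrative assumptions, and the actual weight update is left as a comment because it depends on the training framework.

```python
import random

# Illustrative elastic choices; real values depend on the super-network design.
KERNEL_SIZES = [3, 5, 7]
EXPAND_RATIOS = [3, 4, 6]   # used here as the width dimension
DEPTHS = [2, 3, 4]
RESOLUTIONS = [128, 160, 192, 224]
NUM_UNITS = 5               # hypothetical number of units (stages)
MAX_DEPTH = max(DEPTHS)


def sample_subnet(rng):
    """Draw one sub-network configuration from the elastic dimensions."""
    num_layers = NUM_UNITS * MAX_DEPTH
    return {
        "resolution": rng.choice(RESOLUTIONS),
        "depth": [rng.choice(DEPTHS) for _ in range(NUM_UNITS)],
        "kernel_size": [rng.choice(KERNEL_SIZES) for _ in range(num_layers)],
        "expand_ratio": [rng.choice(EXPAND_RATIOS) for _ in range(num_layers)],
    }


def active_layers(config):
    """Layer indices that run: the first d layers of each unit; later ones are skipped."""
    return [
        unit * MAX_DEPTH + i
        for unit, depth in enumerate(config["depth"])
        for i in range(depth)
    ]


rng = random.Random(0)
for step in range(3):
    cfg = sample_subnet(rng)
    # A real training loop would activate `cfg` in the super-network here,
    # resize the batch to cfg["resolution"], and take one optimizer step.
    print(step, cfg["resolution"], cfg["depth"], len(active_layers(cfg)))
```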

Examples of OFA in Use 🛠️:

- Diverse Hardware Platforms 💻📱: OFA allows for the creation of specialized models for various hardware platforms, such as mobile phones, CPUs, GPUs, and specialized accelerators, by sampling different sub-networks. For example, a larger sub-network can be deployed on a powerful GPU, while smaller sub-networks can be used on mobile devices or resource-constrained environments.

- Varying Memory Constraints 💾: OFA enables the deployment of models under different memory constraints. A larger sub-network can be used when more memory is available, while a smaller sub-network can be used when memory is limited.

- Dynamic Adaptation ⚙️: OFA can adapt to changes in resource availability. For example, a phone can switch to a smaller model when the battery is low.

- Specialized Models 🎨: OFA can be used to create models tailored to specific hardware, like tiny models for Raspberry Pi, high-parallelism models for GPUs, or low-energy models for mobile devices.

- Natural Language Processing (NLP) 📚: OFA can be used to design smaller Transformers for edge devices and larger Transformers for powerful TPUs. This is useful for saving computation costs and enabling NLP on resource-constrained devices.

- 3D Vision 🔍: OFA can also be used in point cloud processing to find fast architectures that can be used in self-driving applications.

- Generative Adversarial Networks (GANs) 🖼️: OFA supports interactive photo editing by allowing a quick prototype with a small sub-network and then running a large sub-network for high-quality outputs.

- Pose Estimation 🤖: OFA can be used to develop lightweight models for on-device pose estimation.

- Quantum AI ⚛️: OFA enables the search for quantum circuits that are robust to noise by training a super circuit and then searching for sub-circuits that have a smaller number of gates.

- Large Language Models (LLMs) 🧠: OFA can be used to create multiple models of varying sizes from one network, adapting to different compute and memory resources.

Benefits of OFA 🌟:

- Reduced Design Cost 💸: By training one network, the design cost is amortized over many different models, which reduces the need for retraining from scratch.

- Flexibility 🤸: OFA allows for the creation of models that can be adapted to different hardware platforms and resource constraints.

- Efficiency ⚡: The sub-networks are sparsely activated, which reduces computation and memory usage, leading to better performance.

- Improved Performance 🚀: OFA can lead to higher accuracy and lower latency compared to using models not specifically designed for the target hardware.

- Hardware-Aware 🖥️: OFA can be used with hardware-aware NAS techniques, enabling the design of models that are optimized for specific hardware platforms.

- Scalability 📈: OFA can be scaled to large models such as large language models.

🚀 Efficiency Predictor in Once-for-All (OFA) Networks

In the context of Once-for-All (OFA) networks, an efficiency predictor is crucial for estimating the performance of different sub-networks derived from the main OFA network without having to actually run them on the target hardware. The efficiency predictor allows for a quick and low-cost way of determining the latency and other performance metrics of a given sub-network.

📌 The Need for Efficiency Prediction:

- Training and evaluating a neural network on target hardware can be slow and expensive.

- Instead of training each sub-network from scratch or running each one on hardware to measure efficiency, the efficiency predictor provides an estimate of how a sub-network will perform.

📊 Latency Dataset:

- To create an efficiency predictor, a dataset of network architectures and their corresponding latencies on the target hardware is gathered.

- This dataset is used to train the predictor.

🧱 Layer-wise Latency Profiling (Lookup Table):

- 🟦 The simplest approach is to create a latency lookup table.

- In this method:

  - The latency of each operation or layer in the neural network is measured and stored in a table.

  - When estimating the latency of a sub-network, the latencies of its constituent layers are looked up in the table and summed.

- This method provides a good initial estimate of latency and is easy to implement.
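A lookup-table predictor amounts to a dictionary and a sum. In the sketch below the table keys `(op, kernel_size, channels)` and all latency values are hypothetical placeholders; a real table would be filled with measurements taken on the target device.

```python
# Hypothetical per-layer latencies in milliseconds, keyed by (op, kernel_size, channels).
# These numbers are placeholders, not measurements.
LATENCY_TABLE_MS = {
    ("conv", 3, 32): 0.5,
    ("conv", 5, 32): 1.0,
    ("conv", 7, 32): 2.0,
    ("dwconv", 3, 32): 0.25,
}


def estimate_latency_ms(layers, table=LATENCY_TABLE_MS):
    """Sum the profiled latency of every layer in the sub-network."""
    missing = [layer for layer in layers if layer not in table]
    if missing:
        raise KeyError(f"no profiled latency for: {missing}")
    return sum(table[layer] for layer in layers)


subnet = [("conv", 3, 32), ("dwconv", 3, 32), ("conv", 5, 32), ("conv", 3, 32)]
print(estimate_latency_ms(subnet), "ms")
```

Raising an error for unprofiled layers, rather than silently treating them as free, keeps the estimate from looking better than it is.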

📐 Network-wise Latency Profiling (Latency Prediction Model):

- 🔷 A more advanced approach involves training a machine learning model to predict the latency of a sub-network based on its architecture.

- The model takes features of the network architecture (e.g., the number of channels, kernel sizes, layer dimensions) as input.

- These features include:

  - Number of channels

  - Kernel size

  - Width

  - Resolution

- The model is trained using the latency dataset and can predict the latency of new, unseen architectures.

- A simple linear model can often perform well for this task.
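A linear latency model can be fitted with ordinary least squares. The sketch below builds a synthetic latency dataset from a known linear rule, so the numbers are not measurements, and then recovers that rule with `numpy.linalg.lstsq`. The feature order (channels, kernel size, depth, resolution) and the coefficients are assumptions for illustration.

```python
import itertools

import numpy as np


def fit_latency_model(features, latencies):
    """Least-squares fit of latency = features @ w + bias."""
    X = np.hstack([features, np.ones((features.shape[0], 1))])
    coef, *_ = np.linalg.lstsq(X, latencies, rcond=None)
    return coef


def predict_latency(coef, features):
    features = np.atleast_2d(np.asarray(features, dtype=float))
    X = np.hstack([features, np.ones((features.shape[0], 1))])
    return X @ coef


# Synthetic "latency dataset": every combination of a few architecture choices.
grid = itertools.product([16, 32, 64], [3, 5, 7], [2, 3, 4], [128, 160, 224])
features = np.array(list(grid), dtype=float)  # channels, kernel, depth, resolution
true_w = np.array([0.01, 0.5, 1.0, 0.02])     # made-up ground truth
latencies = features @ true_w + 2.0

coef = fit_latency_model(features, latencies)
unseen = [48, 5, 3, 192]                      # architecture not in the dataset
print(predict_latency(coef, unseen))
```

Real latency data is noisy and not perfectly linear, so the fit would only be approximate; the same code applies, with measured latencies in place of the synthetic ones.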

⚡ Using the Predictor:

- Once the efficiency predictor (either the lookup table or the model) is trained, it can quickly estimate the efficiency of different sub-networks within the OFA network.

- This enables the neural architecture search (NAS) algorithm to efficiently explore the design space and find the best sub-network for a given target platform.
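With cheap predictors in hand, the search itself becomes a loop over configurations that never touches the hardware. The sketch below runs a small evolutionary search under a latency budget. Both predictors are hypothetical closed-form stand-ins, the configuration uses a single value per dimension for brevity, and latency is expressed in integer microseconds to avoid floating-point edge cases at the budget boundary.

```python
import random

CHOICES = {"ks": [3, 5, 7], "e": [3, 4, 6], "d": [2, 3, 4]}


def predict_latency_us(cfg):
    """Hypothetical efficiency predictor (microseconds)."""
    return 300 * cfg["ks"] + 500 * cfg["e"] + 1000 * cfg["d"]


def predict_accuracy(cfg):
    """Hypothetical accuracy predictor (percent)."""
    return 60 + 1.0 * cfg["ks"] + 1.5 * cfg["e"] + 2.0 * cfg["d"]


def random_config(rng):
    return {name: rng.choice(values) for name, values in CHOICES.items()}


def mutate(cfg, rng, prob=0.3):
    """Resample each dimension with probability `prob`."""
    return {k: rng.choice(CHOICES[k]) if rng.random() < prob else v for k, v in cfg.items()}


def evolutionary_search(budget_us, rng, population=20, generations=10, num_parents=5):
    smallest = {name: min(values) for name, values in CHOICES.items()}
    if predict_latency_us(smallest) > budget_us:
        raise ValueError("no configuration fits the latency budget")
    pop = []
    while len(pop) < population:              # initial feasible population
        cfg = random_config(rng)
        if predict_latency_us(cfg) <= budget_us:
            pop.append(cfg)
    for _ in range(generations):
        pop.sort(key=predict_accuracy, reverse=True)
        parents = pop[:num_parents]           # select the best candidates
        children = []
        while len(children) < population - num_parents:
            child = mutate(rng.choice(parents), rng)
            if predict_latency_us(child) <= budget_us:
                children.append(child)        # keep only feasible children
        pop = parents + children
    return max(pop, key=predict_accuracy)


best = evolutionary_search(budget_us=6000, rng=random.Random(0))
print(best, predict_latency_us(best), predict_accuracy(best))
```

Every candidate is scored by the two predictors only, which is what makes thousands of evaluations per search affordable.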

🛠️ Hardware-Aware NAS:

- The efficiency predictor enables hardware-aware NAS by incorporating hardware feedback (like measured latency) into the search process.

- This allows the network architecture to be optimized directly for the target hardware.

🚧 Addressing Limitations:

- Relying solely on FLOPs or the number of parameters as a proxy for efficiency can be inaccurate.

- FLOPs do not directly translate to latency because factors like memory access and hardware parallelism impact efficiency.

- The predictor accounts for these factors.

- Simply summing up the latency of individual layers can be inaccurate on GPUs due to kernel fusion.

- The ML-based predictor learns these effects during training, providing a more accurate estimate.

🎯 Benefits of the Efficiency Predictor:

- ⚡ Speed: Quickly estimates latency, avoiding the time-consuming process of training and testing each sub-network.

- 💰 Cost: Reduces computational resources needed for evaluating different architectures during NAS.

- 🎯 Accuracy: Enables the search for models that are optimized for the specific target hardware.

Search Config Explained (HW)

The configuration of a sub-network is defined by several parameters. Here’s a breakdown with examples:

1. wid – Width of the Network 📏

- Represents the number of channels in convolutional layers.

- 1 – Full width (all channels).

- 0 – Pruned width (reduced channels).

2. ks – Kernel Sizes 🔲

- Defines the filter size for each layer.

- Example:

  - 3 – 3x3 kernel.

  - 7 – 7x7 kernel.

- Varies across layers for flexibility.

3. e – Expand Ratio 🚀

- Controls how much the number of channels expands in the inverted residual block.

- Example:

  - e = 6 – Channels expanded by 6x.

  - e = 3 – Channels expanded by 3x.

4. d – Depth of the Block 📚

- Refers to how many layers each block has.

- Example:

  - d = 2 – Two layers in the block.

  - d = 0 – Block is skipped or minimal.

5. image_size – Input Resolution 🖼️

- Represents the input image size.

- Example:

  - image_size = 96 – 96x96 pixel input.
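Put together, a sub-network configuration might look like the dictionary below. This is only a sketch: the list lengths, the allowed value sets in `ALLOWED`, and the `validate_config` helper are assumptions inferred from the examples above, not the exact format used by any particular codebase.

```python
# Hypothetical allowed values, inferred from the examples in this section.
ALLOWED = {"wid": {0, 1}, "ks": {3, 5, 7}, "e": {3, 4, 6}, "d": {0, 1, 2}}

config = {
    "wid": 1,              # full width
    "ks": [3, 7, 5, 3],    # kernel size per layer
    "e": [6, 3, 4, 6],     # expand ratio per layer
    "d": [2, 0],           # depth per block
    "image_size": 96,      # 96x96 input
}


def validate_config(cfg):
    """Check that every field holds a value this sketch considers legal."""
    if cfg["wid"] not in ALLOWED["wid"]:
        raise ValueError(f"unsupported wid: {cfg['wid']}")
    for key in ("ks", "e", "d"):
        bad = [v for v in cfg[key] if v not in ALLOWED[key]]
        if bad:
            raise ValueError(f"unsupported {key} values: {bad}")
    if len(cfg["ks"]) != len(cfg["e"]):
        raise ValueError("ks and e must have one entry per layer")
    if not isinstance(cfg["image_size"], int) or cfg["image_size"] <= 0:
        raise ValueError("image_size must be a positive integer")
    return True


print(validate_config(config))
```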

🧪 NAS Applications Overview

🌟 Once-for-All (OFA) in Different Domains

📖 Natural Language Processing (NLP)

- 🌀 Once-for-All Transformers (OFA-Transformers): Design a single transformer model that can specialize into sub-networks for various platforms, from resource-constrained devices to powerful servers.

- ⚙️ Hardware-Aware Transformers (HAT): Search for transformer architectures optimized for specific hardware platforms, achieving significant speed-ups and model size reductions.

- 🔩 SpAtten Transformer Chip: A specialized chip for efficient NLP, showcasing the practical feasibility of hardware-aware NAS.

🏔️ 3D Vision

- 🛻 Sparse Point Voxel (SPV) for LiDAR Segmentation: Efficient architectures designed to process point cloud data from LiDAR sensors, enabling real-time performance for self-driving applications.

🖼️ Image Editing (Generative Adversarial Networks - GANs)

- 🎨 Once-for-All GANs: Train a single GAN capable of generating images at various resolutions and quality levels, supporting fast prototyping and high-quality image finalization.

🕺 Human Pose Estimation

- 📱 Hardware-aware NAS for Pose Estimation: Design efficient architectures for real-time pose estimation on mobile devices.

🧑‍🔬 Quantum AI

- 🔬 OFA for Quantum Circuits: Search for robust sub-circuits within a larger super-circuit to mitigate noise and enhance accuracy in quantum computing applications.

💬 Large Language Models (LLMs)

- ⚡ Inference Adaptive LLMs: Apply OFA principles to LLMs, creating multiple models of varying sizes from a single trained super-network, enabling deployment across diverse hardware platforms.