TinyML Quantization II

Modern AI models are becoming increasingly large, demanding substantial computational resources and memory. This creates a gap between the computational demands of these models and the available hardware capabilities. Quantization addresses this gap by reducing model size, memory footprint, and ultimately energy consumption.

1️⃣ Recap of Quantization Concepts

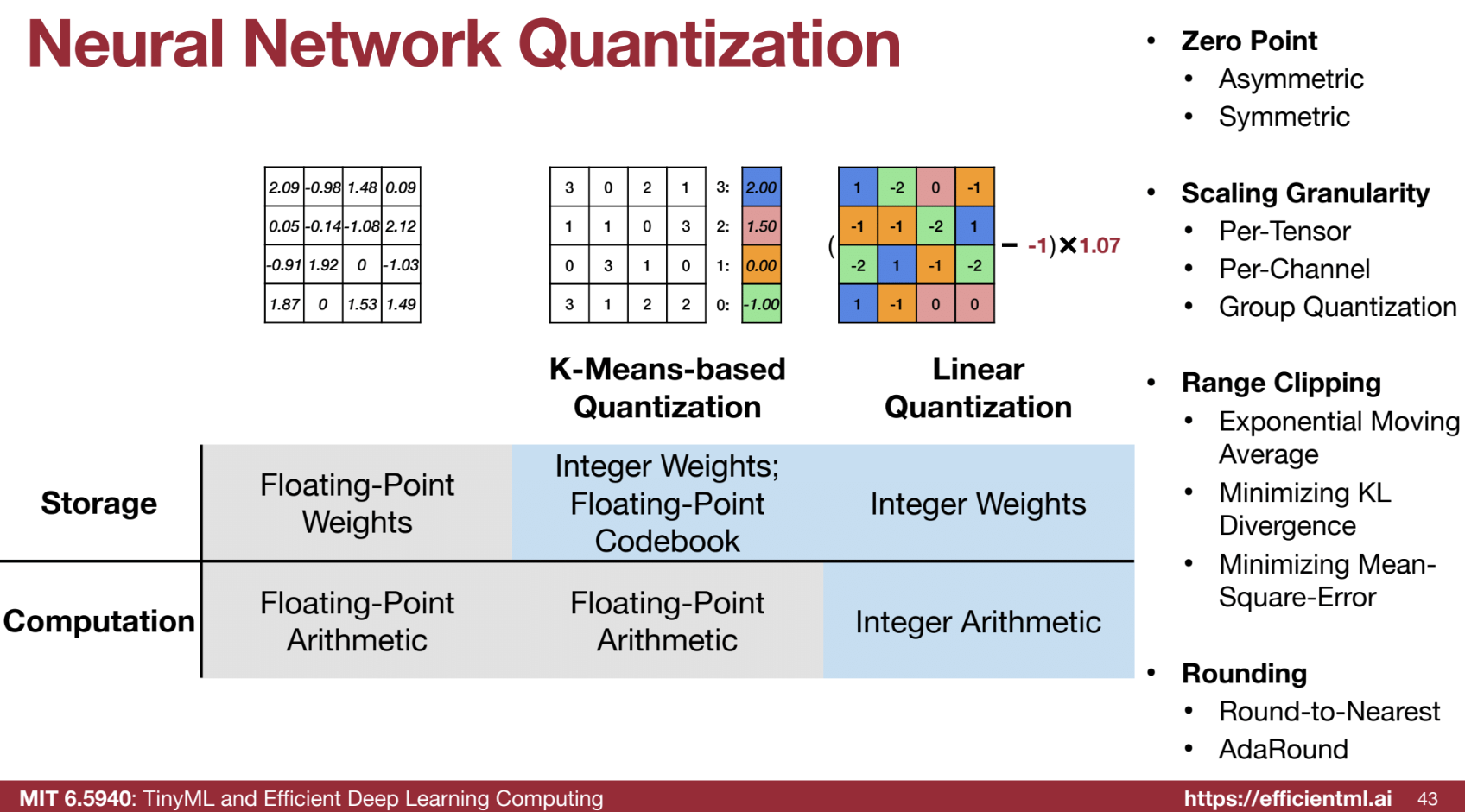

- 📊 K-means and Linear Quantization:

- K-means-based quantization stores a codebook of centroids plus a per-weight index; linear quantization maps floating-point values to integers using a scaling factor and a zero point.

- ⚖️ Benefits and Trade-offs:

- Pros: Reduced storage and computation costs.

- Cons: Potential accuracy degradation.
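As a reminder of how linear quantization works, here is a minimal NumPy sketch. The function names `linear_quantize` and `linear_dequantize` are illustrative, and extending the range to include zero is one common convention assumed here, not something the notes prescribe.

```python
import numpy as np

def linear_quantize(x, num_bits=8):
    """Asymmetric linear quantization: x is approximated by scale * (q - zero_point)."""
    q_min, q_max = -(2 ** (num_bits - 1)), 2 ** (num_bits - 1) - 1
    # Assumption: extend the range to include 0 so zero_point stays in [q_min, q_max].
    x_min = min(float(x.min()), 0.0)
    x_max = max(float(x.max()), 0.0)
    scale = (x_max - x_min) / (q_max - q_min)
    if scale == 0.0:  # all-zero tensor
        scale = 1.0
    zero_point = int(round(q_min - x_min / scale))
    q = np.clip(np.round(x / scale) + zero_point, q_min, q_max).astype(np.int32)
    return q, scale, zero_point

def linear_dequantize(q, scale, zero_point):
    """Map integers back to approximate floating-point values."""
    return scale * (q.astype(np.float64) - zero_point)

rng = np.random.default_rng(0)
weights = rng.normal(size=1000)
q, scale, zero_point = linear_quantize(weights, num_bits=8)
recon = linear_dequantize(q, scale, zero_point)
print("step size:", scale, "max abs error:", np.abs(weights - recon).max())
```

The reconstruction error of each value is bounded by roughly half a quantization step, which is the accuracy cost traded for the smaller integer representation.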

2️⃣ Quantization Granularity

- 📦 Per-Tensor Quantization:

- Single scaling factor for the entire tensor.

- Pros: Simplicity for large models.

- Cons: Accuracy issues in small models due to varying ranges across channels.

- 🧱 Per-Channel Quantization:

- Individual scaling factors for each channel.

- Pros: Better accuracy for small models.

- Cons: Higher storage requirements for scaling factors.

- 👥 Group Quantization:

- Splits each channel into smaller groups, each with its own scaling factor, for finer scaling and improved accuracy at low precision.

- Important for low-bit quantization on architectures like Blackwell.

- 📐 Per-Vector Quantization (VSQuant):

- Combines a global floating-point scaling factor with integer per-vector scaling.

- Balances accuracy and hardware efficiency.

- ⚙️ Shared Micro-exponent (MX) Data Type:

- Combines shared exponent bits with per-channel/group scaling factors.

- Examples: MX4, MX6, MX9 with varying effective bit widths.
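The granularities listed above can be compared numerically. The following is a minimal sketch, assuming symmetric absmax scaling, 4-bit values, and a synthetic weight matrix whose channels have very different ranges. The helper `fake_quant_absmax` is hypothetical and only changes how many consecutive elements share one scaling factor.

```python
import numpy as np

def fake_quant_absmax(w, group_size, num_bits=4):
    """Quantize-dequantize with one symmetric scale per `group_size` consecutive elements."""
    q_max = 2 ** (num_bits - 1) - 1
    groups = w.reshape(-1, group_size)
    absmax = np.abs(groups).max(axis=1, keepdims=True)
    scale = np.maximum(absmax, 1e-12) / q_max
    q = np.clip(np.round(groups / scale), -q_max, q_max)
    return (q * scale).reshape(w.shape)

def mse(a, b):
    return float(np.mean((a - b) ** 2))

rng = np.random.default_rng(0)
out_ch, in_ch = 16, 128
# Synthetic weights: each output channel (row) has a different dynamic range.
channel_ranges = np.logspace(-2, 0, out_ch)[:, None]
w = rng.normal(size=(out_ch, in_ch)) * channel_ranges

err_tensor = mse(w, fake_quant_absmax(w, group_size=w.size))   # one scale overall
err_channel = mse(w, fake_quant_absmax(w, group_size=in_ch))   # one scale per row
err_group = mse(w, fake_quant_absmax(w, group_size=16))        # one scale per 16 values
print("per-tensor:", err_tensor, "per-channel:", err_channel, "per-group:", err_group)
```

On data like this, the error shrinks as the granularity gets finer, at the cost of storing more scaling factors (1, 16, and 128 of them respectively in this example).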

| Granularity | Description | Advantages | Disadvantages | Use Cases |
|---|---|---|---|---|
| Per-Tensor | A single scaling factor and zero point are used for the entire tensor. | Simplest to implement; requires storing only one scaling factor and zero point for the entire tensor. | May lead to significant accuracy degradation, especially in smaller models, as it doesn’t account for different channel ranges. | Works reasonably well for large models where variations across channels are less significant. |
| Per-Channel | Each channel has its own scaling factor and zero point. | More accurate than per-tensor quantization, especially for smaller models. Allows for different scaling factors per channel, capturing variations across output channels. | Requires storing a scaling factor and zero point for each channel, increasing memory overhead. | Ideal for models with significantly different weight ranges across channels, which is common. It’s the preferred granularity for many models. |
| Group Quantization | The tensor is divided into groups, each sharing a scaling factor and zero point. | Balances accuracy and memory usage. Fine-grained scaling factors compared to per-tensor quantization. Crucial for optimal performance in lower bit-width scenarios. | More complex to implement than per-tensor or per-channel quantization. Requires more scaling factors than per-tensor. | Useful for very low-bit quantization (4-bit or below), such as in the Blackwell architecture. Helps balance accuracy and hardware efficiency for FP4 AI. |
| Per-Vector | A scaling factor is applied to each sub-vector within a tensor. Uses both a floating-point coarse-grained scale for the entire tensor and integer scaling factors per sub-vector. | Finer granularity than per-channel and group quantization. Achieves a balance between accuracy and hardware efficiency. Less expensive integer scaling factors at finer granularity. | Requires additional memory for storing scaling factors for each sub-vector. | Suitable for fine-grained control in lower precision quantization (e.g., 4-bit), often used with micro-exponent data types. |
| Shared Micro-exponent (MX) Data Type | Combines shared exponent bits and mantissa bits, along with scaling factors (e.g., MX4 with 1 sign bit, 2 mantissa bits, 1 exponent bit shared by 2 values). | Useful in very low precision quantization (e.g., FP4), providing a fine-grained scaling factor for better accuracy. Popular with Blackwell architecture. | More complex to implement due to the unique combination of mantissa and exponent bits. | Gaining popularity in very low-bit quantization (4-bit and below). Used in Blackwell GPUs to maintain accuracy for FP4 models. |

Key Takeaways 📝

- Trade-offs: There is a trade-off between the granularity of quantization and the memory overhead. Finer granularity (e.g., per-channel, group, per-vector) results in better accuracy but increases storage requirements for scaling factors.

- Model Size: Per-tensor quantization works well for very large models where differences across channels are less noticeable. However, for smaller models, per-channel quantization is preferable due to the significant variations in weight ranges.

- Hardware: Certain granularities are tailored for specialized hardware. For example, Blackwell GPUs support group quantization using micro-tensor scaling.

- Low Precision: For very low-bit quantization (e.g., 4-bit or below), group quantization, per-vector quantization, and the MX data type become increasingly important for preserving accuracy.

- Accuracy: Per-channel quantization offers better accuracy than per-tensor quantization, as it uses unique scaling factors for each channel. Per-vector quantization provides even finer-grained scaling, leading to reduced quantization error.
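The two-level scaling idea behind per-vector quantization (a coarse floating-point scale combined with cheap integer scales per sub-vector) can be sketched as follows. This is a simplified illustration and not the VSQuant reference implementation: the function names, the 16-element vectors, the 4-bit integer scales, and the choice to round the integer scale up are all assumptions made for the example.

```python
import numpy as np

def two_level_quantize(w, vector_size=16, num_bits=4, scale_bits=4):
    """Quantize with an integer scale per vector and one float scale per tensor."""
    q_max = 2 ** (num_bits - 1) - 1      # largest quantized magnitude
    s_max = 2 ** scale_bits - 1          # largest integer scale
    vectors = w.reshape(-1, vector_size)
    # Ideal floating-point scale for each vector.
    fp_scale = np.maximum(np.abs(vectors).max(axis=1, keepdims=True), 1e-12) / q_max
    # Coarse scale: a single float for the whole tensor.
    coarse = float(fp_scale.max()) / s_max
    # Fine scale: unsigned integer per vector (rounded up so values are not clipped).
    int_scale = np.clip(np.ceil(fp_scale / coarse), 1, s_max).astype(np.int32)
    q = np.clip(np.round(vectors / (coarse * int_scale)), -q_max, q_max).astype(np.int32)
    return q, int_scale, coarse

def two_level_dequantize(q, int_scale, coarse, shape):
    """Effective scale of each vector is coarse * int_scale."""
    return (q * int_scale * coarse).reshape(shape)

rng = np.random.default_rng(0)
w = rng.normal(size=(16, 128)) * np.logspace(-2, 0, 16)[:, None]
q, int_scale, coarse = two_level_quantize(w)
w_hat = two_level_dequantize(q, int_scale, coarse, w.shape)
print("two-level MSE:", float(np.mean((w - w_hat) ** 2)))
```

Only one floating-point number is stored per tensor here; every sub-vector adds just a small integer, which is what keeps the fine granularity cheap in hardware.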

3️⃣ Dynamic Range Clipping

- ✂️ Motivation for Clipping:

- Clipping minimizes quantization noise, especially for distributions with outliers.

- 📐 Methods for Clipping:

- Techniques include exponential moving averages of activation ranges collected during training, KL divergence minimization on calibration data, and MSE minimization.

- 🔍 OCTAV Technique:

- Automatically finds optimal clipping ranges.

- Keeps accuracy close to the FP32 baseline.
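To make the MSE-minimization idea concrete, the sketch below searches a grid of candidate clipping thresholds and keeps the one with the lowest quantization error. It is a plain grid search, not the iterative method OCTAV uses; the function names and the 100-point grid are assumptions for illustration.

```python
import numpy as np

def quant_mse(x, clip, num_bits=4):
    """MSE after symmetric quantization with values clipped to [-clip, clip]."""
    q_max = 2 ** (num_bits - 1) - 1
    scale = clip / q_max
    x_hat = np.clip(np.round(x / scale), -q_max, q_max) * scale
    return float(np.mean((x - x_hat) ** 2))

def search_clip(x, num_bits=4, num_candidates=100):
    """Return the candidate threshold with the lowest MSE, and that MSE."""
    abs_max = float(np.abs(x).max())
    candidates = np.linspace(abs_max / num_candidates, abs_max, num_candidates)
    errors = [quant_mse(x, float(c), num_bits) for c in candidates]
    best = int(np.argmin(errors))
    return float(candidates[best]), errors[best]

rng = np.random.default_rng(0)
# Mostly well-behaved values plus two large outliers.
x = np.concatenate([rng.standard_normal(10000), [25.0, -30.0]])
best_clip, best_mse = search_clip(x)
no_clip_mse = quant_mse(x, float(np.abs(x).max()))
print("best clip:", best_clip, "MSE:", best_mse, "MSE without clipping:", no_clip_mse)
```

With outliers present, the selected threshold sits well below the absolute maximum: a few values are clipped, but the step size for the bulk of the distribution becomes much smaller.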

4️⃣ Rounding in Quantization

- 🔄 Adaptive Rounding:

- Challenges traditional “round-to-nearest” methods.

- Adaptive rounding considers correlated weights.

- 🧠 AdaRound Algorithm:

- Learns optimal rounding decisions for weights.

- Minimizes reconstruction error while considering weight correlations.
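A small experiment shows why round-to-nearest is not always the best choice. The sketch below brute-forces every round-up/round-down combination for a tiny weight vector and picks the one that minimizes the error of the layer output. AdaRound itself learns the rounding through a continuous relaxation; the exhaustive search here is only a stand-in that is feasible for a handful of weights, and all names are illustrative.

```python
import itertools
import numpy as np

def output_error(x, w, w_hat):
    """Squared error between the layer outputs with original and rounded weights."""
    return float(np.sum((x @ w - x @ w_hat) ** 2))

def best_rounding(x, w, scale):
    """Try rounding each weight down or up; keep the combination with lowest output error."""
    floor = np.floor(w / scale)
    best_w, best_err = None, np.inf
    for ups in itertools.product([0, 1], repeat=w.size):
        w_hat = (floor + np.array(ups)) * scale
        err = output_error(x, w, w_hat)
        if err < best_err:
            best_w, best_err = w_hat, err
    return best_w, best_err

rng = np.random.default_rng(0)
# Strongly correlated input features, so rounding errors of different weights interact.
x = rng.normal(size=(64, 1)) + 0.1 * rng.normal(size=(64, 8))
w = 0.1 * rng.normal(size=8)
scale = 0.05

w_nearest = np.round(w / scale) * scale
nearest_err = output_error(x, w, w_nearest)
w_best, best_err = best_rounding(x, w, scale)
print("round-to-nearest error:", nearest_err, "best rounding error:", best_err)
```

Because round-to-nearest is one of the candidates, the searched rounding can never be worse; with correlated inputs it is usually strictly better, since errors on different weights can cancel in the output.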

5️⃣ Quantization-Aware Training (QAT)

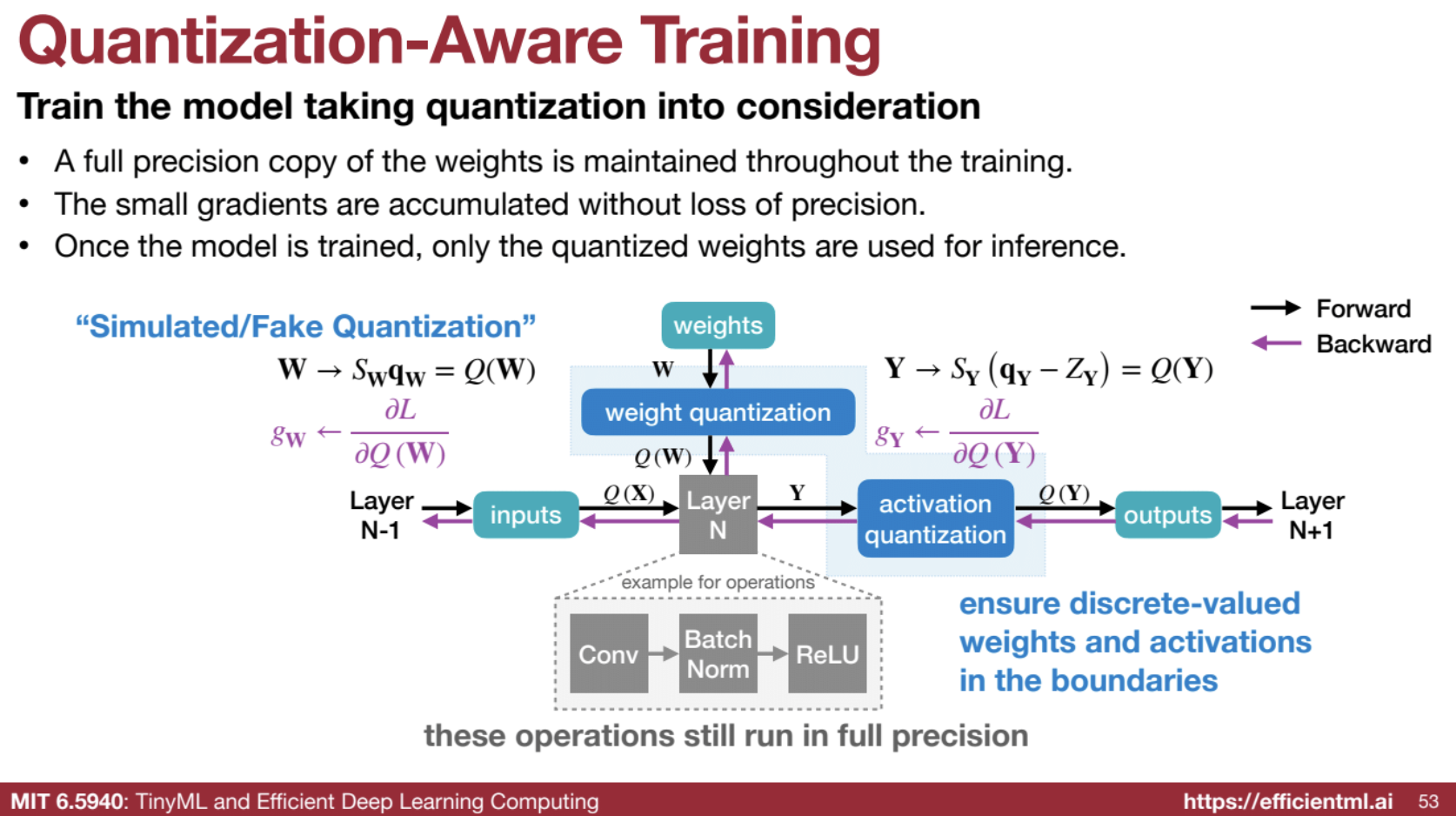

Quantization-Aware Training (QAT) is a fine-tuning technique that emulates the effects of quantization during the training process to recover accuracy that might be lost when quantizing a neural network. The goal is to make the model more robust to the effects of quantization, especially at lower bit-widths. 🧠💡

1. Maintaining a Full Precision Copy of Weights 🏋️‍♂️

- During QAT, a full-precision (e.g., 32-bit floating-point) copy of the weights is maintained in the background. This is crucial because it allows for the accumulation of small gradient changes without loss of precision. 🔢

- The quantized weights are used for the forward pass, but the full precision weights are updated during the backward pass. 🔄

- The full precision copy is only used during training; at deployment time, only the quantized weights are used for inference. 🚀

2. Simulating Quantization 🎛️

- During the forward pass, both the weights and activations are quantized to simulate the inference-time quantization. This is also known as “fake quantization”. 👾

- This simulation is done by adding “quantization nodes” in the network that quantize the weights and activations.

- For example, a weight ‘W’ is quantized to ‘qW’ using a scaling factor and zero point, as in linear quantization. The full precision weight ‘W’ is maintained and updated during training. 🏋️‍♂️

- Similarly, activations are also quantized. 🎚️

- This ensures that the network is trained with discrete-valued weights and activations as they would be during inference. The network learns to adapt to the reduced precision of the quantized values. 🧑‍🏫

3. Straight-Through Estimator (STE) 🔄

- The quantization process is discrete and non-differentiable, so the gradient of the quantization function would be zero almost everywhere. ❌

- To enable backpropagation, a straight-through estimator (STE) is used. This means that the gradient is passed through the quantization function as if it were an identity function. ➡️

- For example, if Q(W) is the quantized weight, the gradient ∂L/∂W is approximated by ∂L/∂Q(W); that is, ∂Q(W)/∂W is treated as 1. 🔄

- In essence, the gradient with respect to the quantized value is passed directly back to the original full-precision value. 💬
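In symbols, the STE described above amounts to the following approximation, where $L$ denotes the loss and $Q$ the quantization function (a restatement of the bullets, not an additional assumption):

$$
\frac{\partial L}{\partial W}
= \frac{\partial L}{\partial Q(W)} \cdot \frac{\partial Q(W)}{\partial W}
\approx \frac{\partial L}{\partial Q(W)}
$$

The exact factor $\partial Q(W)/\partial W$ is zero almost everywhere, which is why it is replaced by 1 rather than computed.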

4. Backward Pass and Weight Update 🔁

- During the backward pass, gradients are computed from the quantized forward pass, with each quantization node treated as an identity function (the STE). 📉

- The gradients are used to update the full-precision weights. 📈

- The full-precision weights are updated to minimize the loss function, effectively fine-tuning the network to be more robust to quantization. 🎯

5. Fine-tuning 🔧

- QAT usually involves fine-tuning a pre-trained floating-point model. This helps in achieving better accuracy compared to training from scratch using quantized weights and activations. 🏁

- The training process involves multiple epochs. During each epoch, forward and backward passes are performed, which gradually updates the full precision weights to converge to a new set of weights. 🔁

Example: Let’s consider a simple example of a weight ‘W’ in a neural network. 🎓

- Initially, ‘W’ is a full-precision floating-point number. 🧮

- During the forward pass:

- ‘W’ is quantized to a lower precision, resulting in ‘qW’ by using the scaling factor and the zero point. 📏

- The quantized weight qW is used for the forward computation. 🔀

- The activations of the layer are also quantized in a similar manner. 🖥️

- During the backward pass:

- The gradients are calculated with respect to the output of the layer. 📊

- The gradients are passed back through the quantization node using the STE. 🔄

- The full-precision weight ‘W’ is updated by the optimizer. 🛠️

- After the training is complete, the full-precision weights are discarded, and the quantized weights are used for inference. 💡
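The walkthrough above can be condensed into a short NumPy sketch for a single linear layer. It is a toy setup under stated assumptions: symmetric 4-bit per-tensor weight quantization, a mean-squared-error loss, plain gradient descent, and no activation quantization. The names `fake_quantize` and `qat_linear_regression` are hypothetical.

```python
import numpy as np

def fake_quantize(w, num_bits=4):
    """Quantize then dequantize, so the result is a float tensor on the quantization grid."""
    q_max = 2 ** (num_bits - 1) - 1
    scale = max(float(np.abs(w).max()), 1e-8) / q_max
    return np.clip(np.round(w / scale), -q_max, q_max) * scale

def qat_linear_regression(x, y, w_init, num_bits=4, lr=0.05, steps=200):
    w = w_init.copy()                              # full-precision copy of the weights
    for _ in range(steps):
        w_q = fake_quantize(w, num_bits)           # forward pass uses quantized weights
        residual = x @ w_q - y
        grad_wq = 2.0 * x.T @ residual / len(x)    # dL/dQ(W) for the MSE loss
        w -= lr * grad_wq                          # STE: apply it to W as if dQ(W)/dW = 1
    return w, fake_quantize(w, num_bits)           # only the quantized weights are deployed

def loss(x, y, w):
    return float(np.mean((x @ w - y) ** 2))

rng = np.random.default_rng(0)
x = rng.standard_normal((256, 8))
y = x @ rng.standard_normal(8)
# "Pre-trained" floating-point weights: the least-squares solution.
w_pretrained = np.linalg.lstsq(x, y, rcond=None)[0]
w_latent, w_q = qat_linear_regression(x, y, w_pretrained)
print("loss with quantized weights after QAT:", loss(x, y, w_q))
```

Note that the optimizer only ever touches `w`, the full-precision copy, while the loss is always evaluated on `w_q`; that split is the core of QAT.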

Benefits of QAT 🚀

- Improved Accuracy: QAT significantly improves the accuracy of quantized models compared to post-training quantization, especially for smaller models. 🎯

- Robustness to Low Bit-widths: It makes the model more resilient to the effects of quantization, allowing for the use of lower bit-widths (e.g., 4-bit or 8-bit) with less accuracy loss. 🛡️

Comparison with Post-Training Quantization (PTQ), Including LLM Settings 📚🤖

- PTQ quantizes a pre-trained model without any fine-tuning. ❌

- QAT, on the other hand, fine-tunes the model while taking quantization into account. ✅

- QAT typically achieves much better accuracy than PTQ, especially when the bit-width is reduced aggressively. 🔝

● PTQ Can Be Sufficient for LLMs 📉

- Due to their large size, LLMs (Large Language Models) are less susceptible to accuracy loss from quantization. 💡

- Post-Training Quantization (PTQ) can be a practical approach for LLMs and often achieves surprisingly good results without the need for fine-tuning. 🛠️

● QAT Provides Further Gains 📈

- While PTQ may be sufficient in many cases, Quantization-Aware Training (QAT) can provide a boost in accuracy. 🚀

- If the performance of a quantized LLM is not satisfactory after PTQ, QAT should be considered to fine-tune the model and improve results. 🔧

● QAT Is Beneficial for Lower Bit-widths 🔥

- When using aggressive quantization with lower bit-widths (e.g., 4-bit or below), QAT becomes more valuable. 🏆

- The accuracy loss from PTQ may be too significant, so QAT helps recover accuracy that might be lost during extreme quantization. ⚡

● QAT Offers Fine-tuning 🎯

- QAT also fine-tunes the model while being aware of the quantization process. 🧑‍🏫

- This allows the model to adapt to the constraints of quantized computations, improving accuracy and making it more robust to quantization effects. 💪

6️⃣ Binary and Ternary Quantization

- ⚙️ Motivation for Low-Precision Quantization:

- Extreme savings in storage and computation using binary (1-bit) and ternary (2-bit) representations.

- 🔢 Binarization Techniques:

- Deterministic (sign function) and stochastic binarization.

- 📉 Accuracy Impact:

- Scaling factors mitigate accuracy loss in binarized models.

- 🤖 Binary Weight Networks (BWN):

- Examples of BWN in tasks like image classification.

- Highlights trade-offs between accuracy and efficiency.

- ➕ Ternary Quantization:

- Adds a zero value for more representational power.

- Threshold-based and trained ternary quantization (TTQ) with learnable scaling factors.

- 🔧 XNOR Operation and Popcount:

- Efficient hardware implementation of binarized operations.
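Two of the ideas above are easy to check in code: a scaling factor reduces the error of deterministic binarization, and a dot product of ±1 vectors can be computed with XNOR and popcount. The sketch below uses Python integers as bit vectors; the function names and the bit convention (bit 1 means +1, bit 0 means -1) are assumptions for illustration.

```python
import numpy as np

def binarize_with_scale(w):
    """Deterministic binarization: W is approximated by alpha * sign(W), alpha = mean(|W|)."""
    alpha = float(np.mean(np.abs(w)))
    w_b = np.where(w >= 0, 1, -1).astype(np.int8)
    return alpha, w_b

def pack_bits(v):
    """Pack a vector of +1/-1 values into an integer (bit i is 1 when v[i] is +1)."""
    bits = 0
    for i, s in enumerate(v):
        if s > 0:
            bits |= 1 << i
    return bits

def xnor_popcount_dot(a_bits, b_bits, n):
    """Dot product of two n-element +1/-1 vectors given in packed form."""
    mask = (1 << n) - 1
    xnor = ~(a_bits ^ b_bits) & mask       # 1 wherever the two signs agree
    matches = bin(xnor).count("1")         # popcount
    return 2 * matches - n                 # agreements minus disagreements

rng = np.random.default_rng(0)
w = rng.normal(size=256)
alpha, w_b = binarize_with_scale(w)
print("error without scale:", float(np.sum((w - w_b) ** 2)))
print("error with scale:   ", float(np.sum((w - alpha * w_b) ** 2)))

a = rng.choice([-1, 1], size=64)
b = rng.choice([-1, 1], size=64)
print("XNOR-popcount dot:", xnor_popcount_dot(pack_bits(a), pack_bits(b), 64))
```

Each agreeing bit pair contributes +1 and each disagreeing pair contributes -1, so the result is `2 * matches - n`; this replaces 64 multiply-accumulates with one XNOR and one popcount on a 64-bit word.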

7️⃣ Mixed-Precision Quantization

- 🌈 Concept and Benefits:

- Different layers/operations use varying bit widths.

- Optimizes the balance between accuracy and efficiency.

- 🕵️ Design Space Exploration:

- Challenges due to the vast design space.

- Automated solutions are crucial.

- 🤖 Reinforcement Learning for Quantization:

- Actor-critic frameworks optimize layer-specific bit widths.

- 🔧 Hardware Considerations:

- Specialized accelerators improve performance for mixed-precision models.

- 💡 HAQ (Hardware-Aware Quantization):

- Example of a hardware-aware mixed-precision method.

- Outperforms uniform quantization.
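To illustrate the design-space problem, the sketch below exhaustively searches per-layer bit widths under an average-bit budget, using weight quantization error as a stand-in for accuracy. This is not HAQ: HAQ uses reinforcement learning with hardware feedback, whereas this brute-force search only works for a few layers, and every name, layer shape, and the error proxy are assumptions for illustration.

```python
import itertools
import numpy as np

def quant_mse(w, num_bits):
    """MSE of symmetric absmax quantization at the given bit width."""
    q_max = 2 ** (num_bits - 1) - 1
    scale = max(float(np.abs(w).max()), 1e-12) / q_max
    w_hat = np.clip(np.round(w / scale), -q_max, q_max) * scale
    return float(np.mean((w - w_hat) ** 2))

def search_bit_widths(layers, bit_choices=(2, 4, 8), avg_bits_budget=4.0):
    """Pick one bit width per layer, minimizing total squared error within the size budget."""
    total_params = sum(w.size for w in layers)
    best = None
    for bits in itertools.product(bit_choices, repeat=len(layers)):
        size_bits = sum(b * w.size for b, w in zip(bits, layers))
        if size_bits > avg_bits_budget * total_params:
            continue  # over the storage budget
        err = sum(quant_mse(w, b) * w.size for w, b in zip(layers, bits))
        if best is None or err < best[1]:
            best = (bits, err)
    return best

rng = np.random.default_rng(0)
# Hypothetical layers with different sizes and weight ranges.
layers = [
    rng.normal(size=(64, 64)),
    0.1 * rng.normal(size=(64, 256)),
    rng.normal(size=(256, 10)),
]
bits, err = search_bit_widths(layers)
uniform_err = sum(quant_mse(w, 4) * w.size for w in layers)
print("chosen bit widths:", bits, "error:", err, "uniform 4-bit error:", uniform_err)
```

With three layers and three bit choices there are only 27 combinations, but the count grows exponentially with depth, which is the reason automated search methods are needed for real networks.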