📚 GPU Acceleration

This document reviews the main themes and key takeaways from Deep Learning Systems: Algorithms and Implementation** at Carnegie Mellon University, taught by J. Zico Kolter and Tianqi Chen.

This lecture summarizes key concepts and techniques related to GPU (Graphics Processing Unit) acceleration, particularly within the context of deep learning and linear algebra operations. The information is drawn from two primary sources: a lecture transcript (“Lecture - 12 GPU Acceleration”) and a corresponding slide deck (“https://dlsyscourse.org/slides/12-gpu-acceleration.pdf”).

I. Core Concepts: GPU Architecture & Programming Model

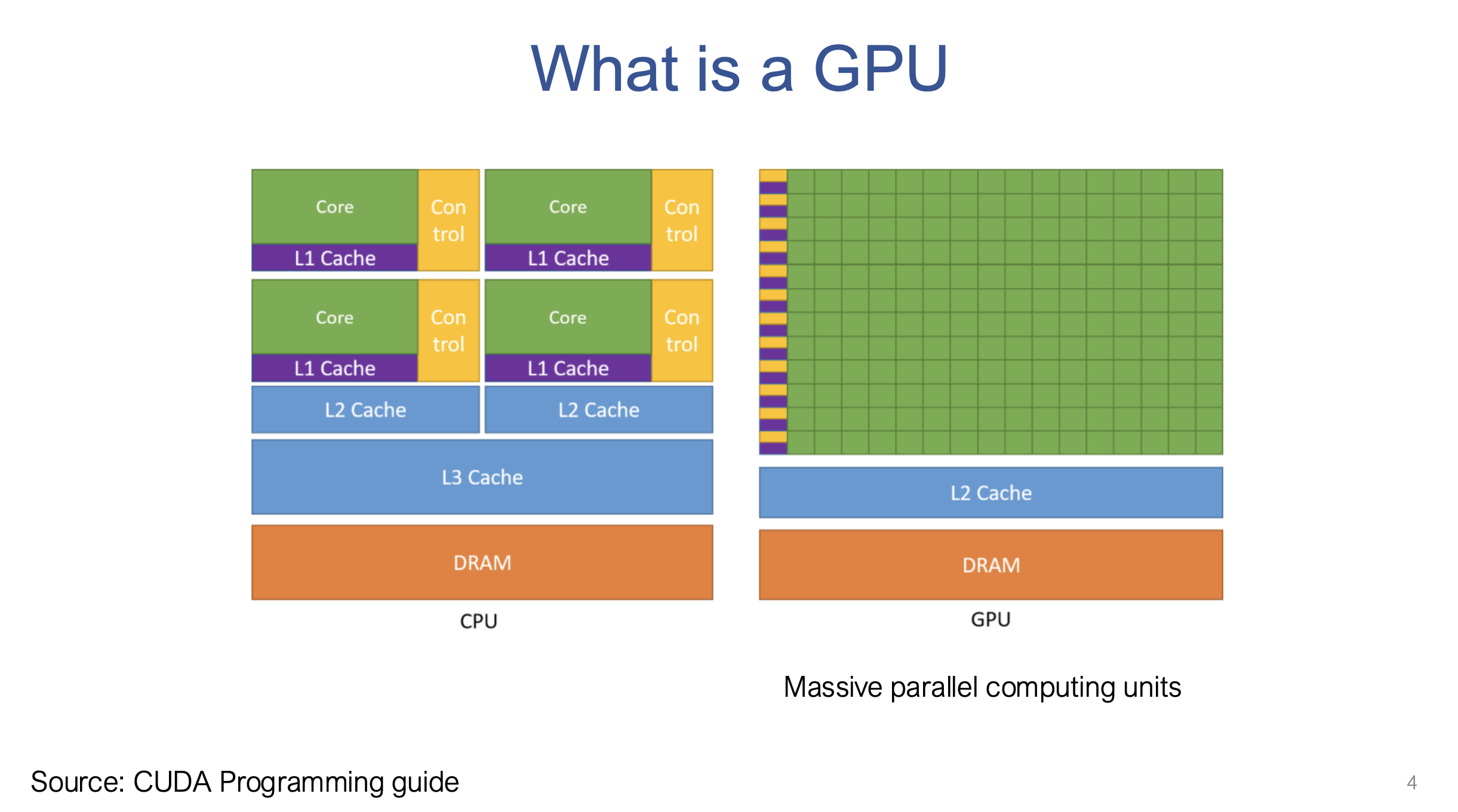

CPU vs. GPU Architecture

- CPU (Central Processing Unit): Designed for flexibility with a strong “commander unit” (control unit) capable of handling diverse tasks, including branching, context switching. Emphasizes flexible control.

- GPU (Graphics Processing Unit): Employs a large number of “soldiers” (compute cores) under the control of fewer “commanders” (control units). Optimized for highly parallel, arithmetic-intensive tasks, where the same operation is performed on large datasets.

“A typical CPU will have a very strong Commander unit…that allows us to do different kind of tasks…On other hand…we are doing a lot of similar things…you want a huge amount of soldier that go ahead and perform a task…” 💡

Massive Parallelism

GPUs provide significant speedups in specific tasks compared to CPUs, often in the range of 10x to 100x.

“By leveraging your GPU you can usually observe more than 10 X or sometimes 100 X speedup versus traditional computing…GPU itself is kind of indispensable nowadays in order to run deep learning works” 🚀

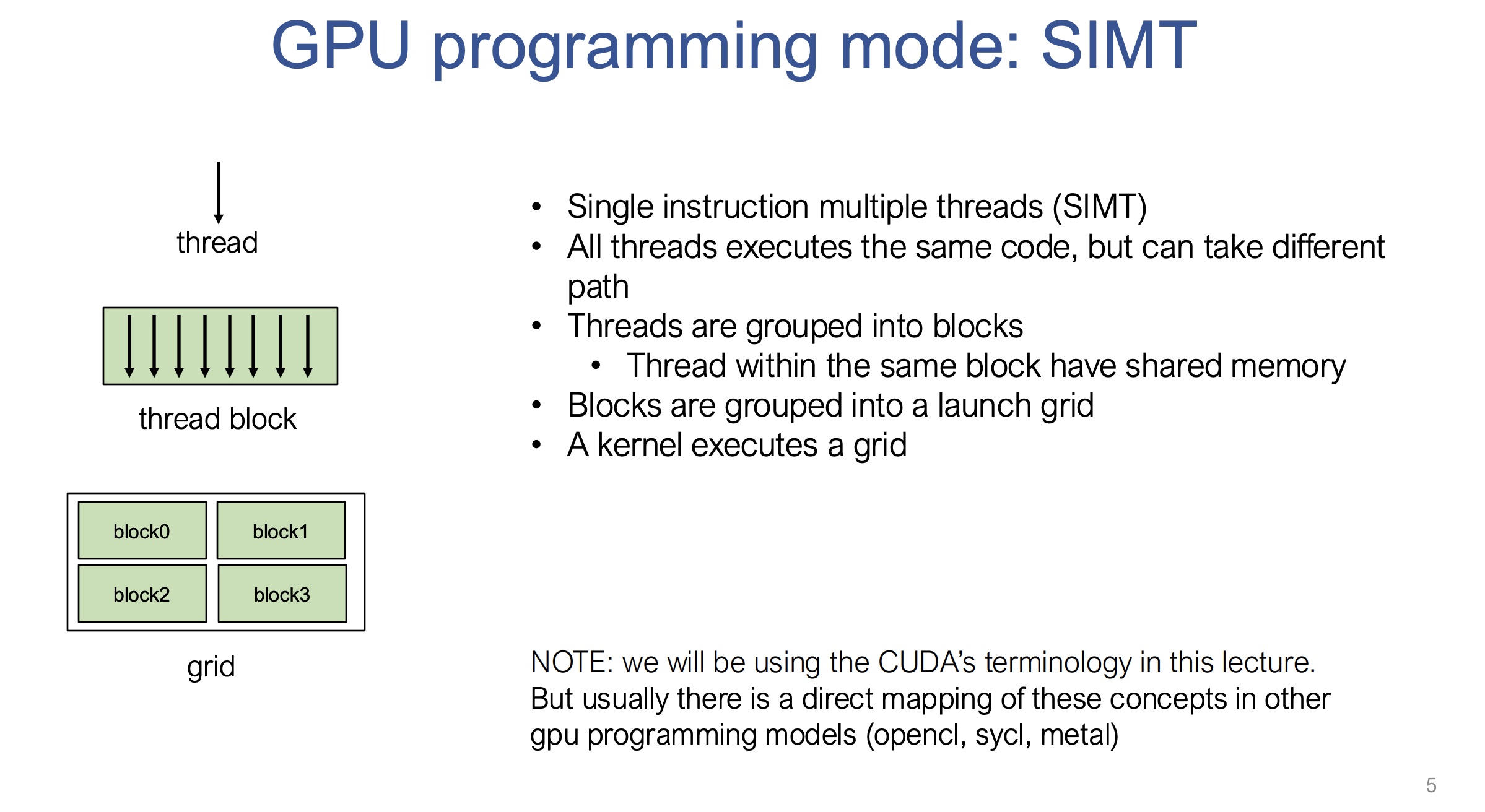

SIMT (Single Instruction, Multiple Threads) Programming Model

GPU programming employs SIMT, where all threads execute the same code (kernel) but on different data.

“All threads executes the same code, but can take different path” 🔄

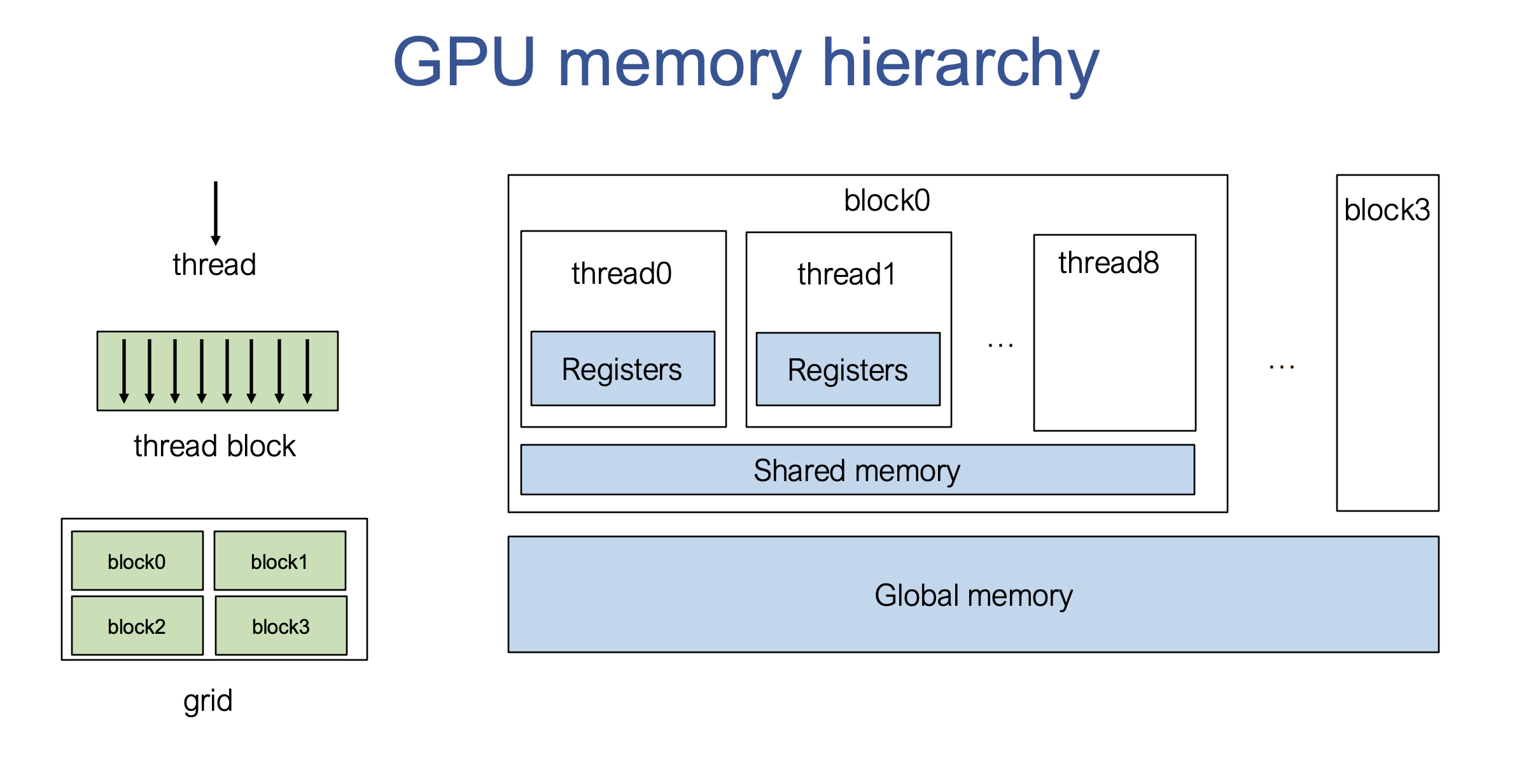

Threads, Thread Blocks, and Grids

- Threads: Individual execution units that perform computations.

- Thread Blocks: Threads are grouped into blocks, which share common resources such as shared memory.

- Grid: Thread blocks are further grouped into a launching grid when a GPU kernel is executed.

“we’re going to group the thread onto what we call blocks and the threads within the same blocks also share uh some kind of common resources…finally all the blocks are then grouped into what we call a launching grid…” 🎯

Context

Each thread has its own context, especially its unique locations (thread ID and block ID), allowing for varied data access and storage even though the same code is executed.

CUDA (Compute Unified Device Architecture)

NVIDIA’s programming model for GPUs, the primary focus of the lecture, but similar models exist (OpenCL, SYCL, Metal).

Data Parallelism

The ability to execute tasks on each data element independently is crucial for efficient GPU utilization.

“The ability to parallelize depends on how much independence we have across each element of computation…” 🔢

Operations with data dependencies require more complex parallel implementations such as parallel scan, instead of simple data parallelism.

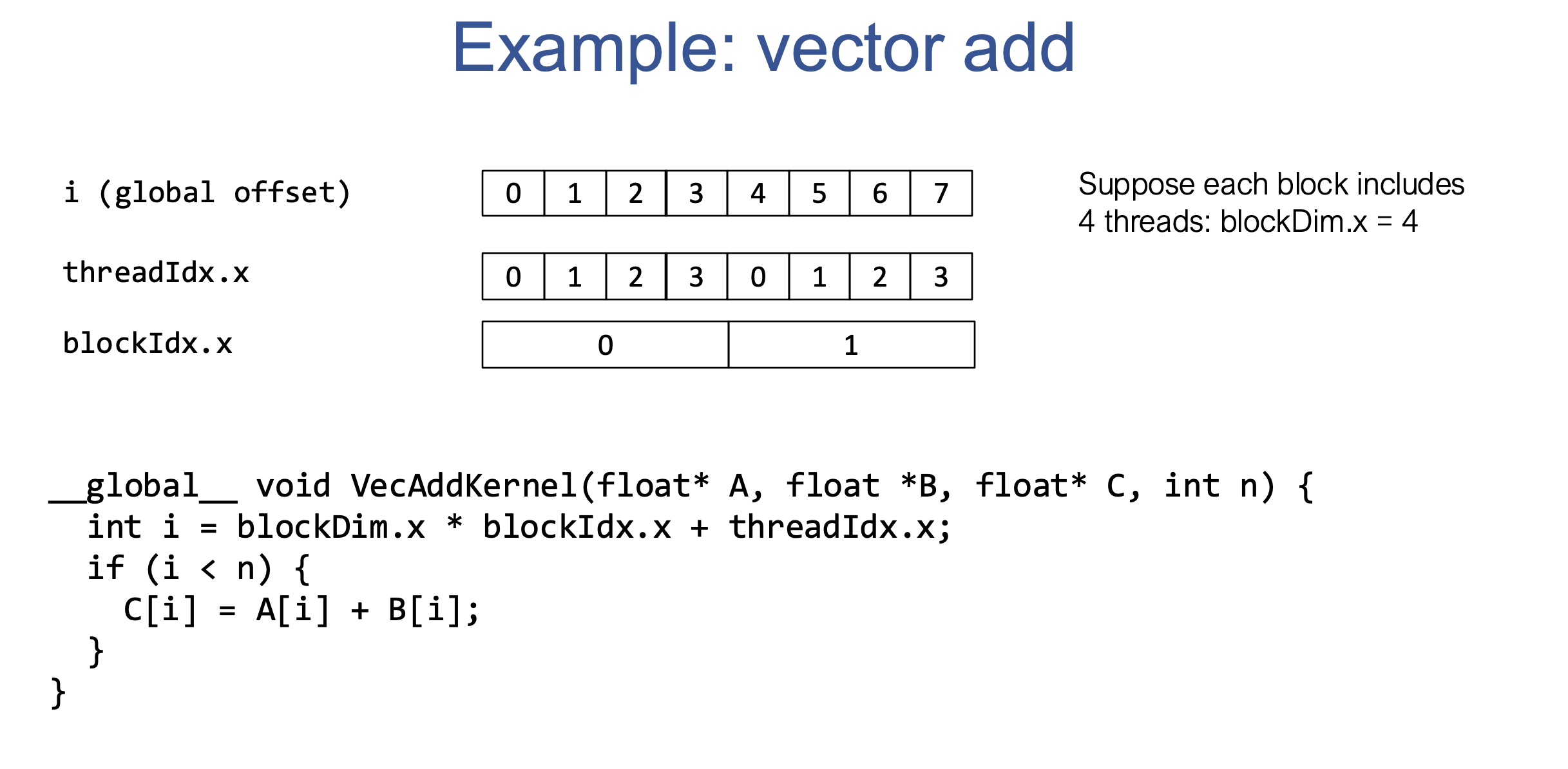

II. GPU Programming Example: Vector Addition

CPU Implementation

A simple for loop iterates over the array elements to perform the addition.

GPU (CUDA) Implementation

- A kernel function (

__global__ void VecAddKernel(...)) executes on the GPU. - Each thread calculates a global index based on its block and thread ID (

int i = blockDim.x * blockIdx.x + threadIdx.x;). - Threads perform additions on specific array elements concurrently.

“Each of Str it’s only doing one computation right…load from A, load from B, do the addition, and then write result back on C…” 🧮

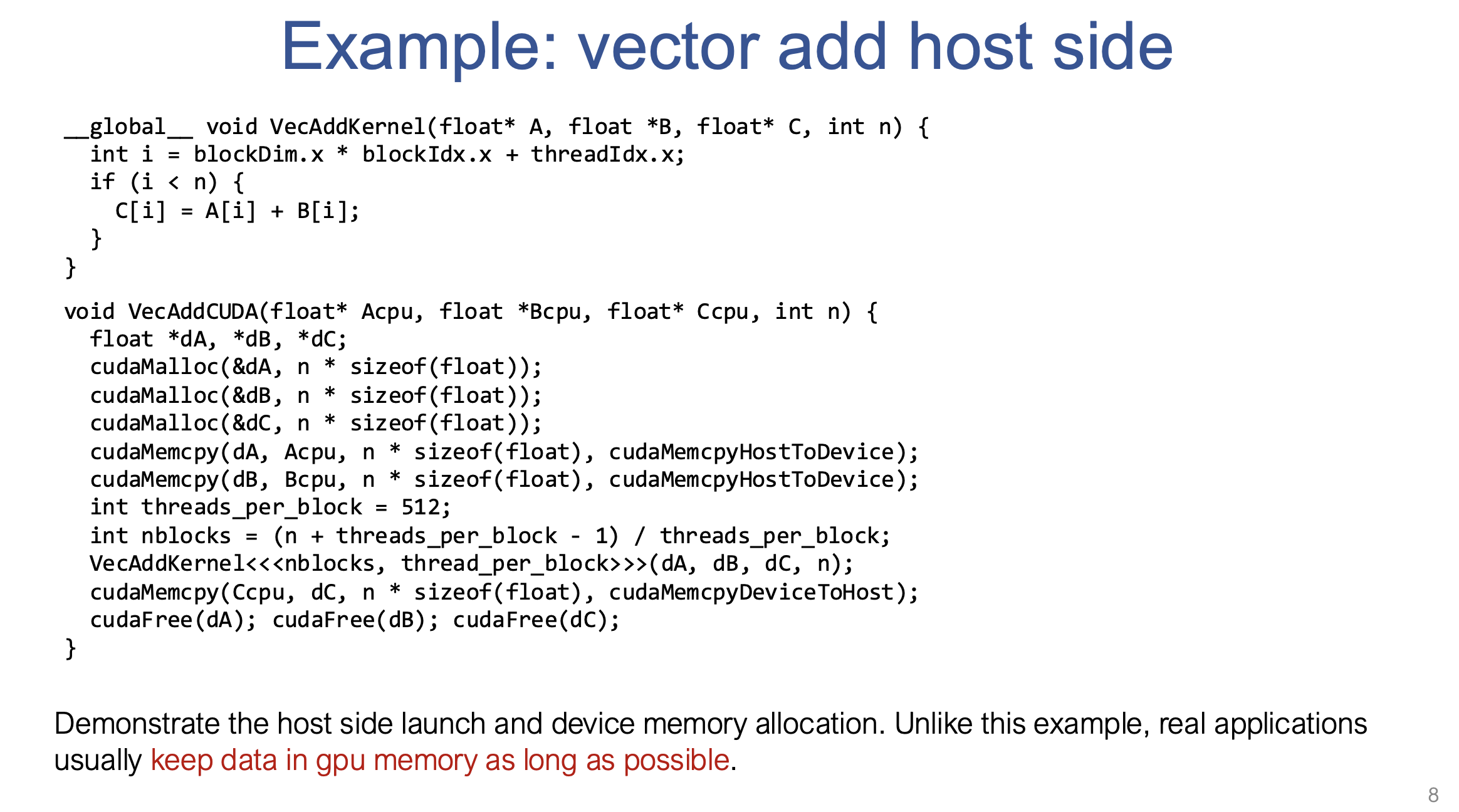

Host-Side Code

- Allocates memory on the GPU using

cudaMalloc. - Copies data from CPU (host) memory to GPU (device) memory using

cudaMemcpy. - Launches the kernel with specified numbers of threads and blocks.

- Copies results back to the CPU using

cudaMemcpy. - Frees allocated GPU memory using

cudaFree.

“when you call CA memory copy these operations help us to be able to take uh original memory and copy the data onto the corresponding regions on GPU…we’re going to launch a CUDA kernel with you know so many threads per block and number of blocks by passing in the GPU pointer” 🎯

Key Point

Real-world GPU applications minimize CPU-GPU data transfers, instead aiming to keep data in GPU memory as long as possible to reduce bottlenecks.

“Real world applications really try to keep data in GPU memory as long as possible” 🏃♂️

III. GPU Memory Hierarchy

Two-Level Hierarchy

- Global Memory: Main memory of the GPU, allocated via functions like

cudaMalloc. Slower, shared across the entire GPU. - Shared Memory: Fast, on-chip memory shared by threads within the same block. Used for data reuse and optimization.

- Registers: Local memory for each thread.

- Stream Multiprocessors (SM): Blocks are mapped to SMs, which contain multiple computing cores. Shared memory is associated with each SM.

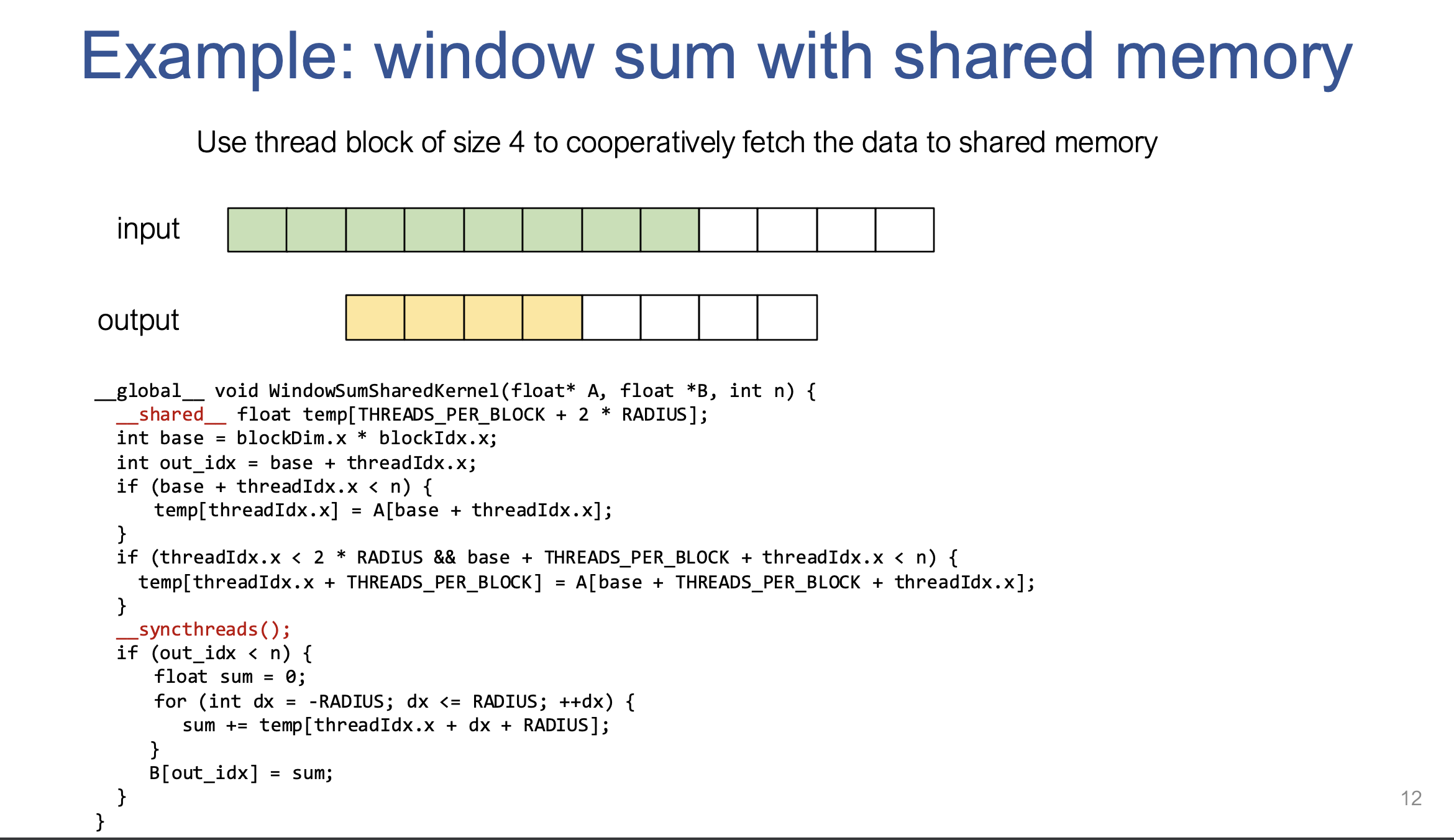

IV. Optimization: Shared Memory & Window Sum

The window sum example demonstrates how shared memory can be used to optimize memory access and improve the performance of GPU computations. The goal is to compute the sum of elements within a sliding window of a given radius. 🌐

Imagine you are trying to compute the sum of elements in a sliding window over an array of numbers. Let’s assume the array A contains n elements, and we have a window of size 5 (radius 2). For each output element B[i], we need to calculate the sum of 5 consecutive elements from A, centered at A[i].

For simplicity, assume:

- The window radius is

2(so 5 elements in the window: 2 elements before, 2 after, and the current element). - We have 4 threads per block.

1. Naive Approach 🛠️

In the naive implementation, each thread would independently load the required data from global memory and compute the sum for its corresponding output element.

- Problem: Threads load overlapping data. For example, Thread 0 will load the elements

A[0], A[1], A[2], A[3], A[4], while Thread 1 will loadA[1], A[2], A[3], A[4], A[5]. This leads to redundant memory accesses and unnecessary overhead.

2. Optimized Approach Using Shared Memory 🔥

In the optimized version, we use shared memory to load the data once per block, and then allow all threads to access it. This minimizes redundant global memory accesses and improves performance.

Key Steps:

-

Shared Memory Allocation: Each block of threads has access to a section of shared memory. We allocate enough space in shared memory to hold all the elements required by the threads in the block. For a radius of

2and4threads per block, we need a total of4 threads + 2 * radius = 4 + 4 = 8 elementsin shared memory. - Loading Data into Shared Memory:

- Each thread loads its corresponding element into shared memory.

- To ensure all threads can access data from neighboring threads (to cover the full window), threads at the beginning of the block will also load data beyond their own index.

-

Synchronizing Threads: The function

__syncthreads()ensures that all threads have finished loading their data into shared memory before any of them begin computing the sum. - Computing the Sum: After loading the data, each thread calculates the sum of elements within its sliding window using the data from shared memory. Since all threads have access to the same shared memory, they can work together without redundant memory accesses.

Example with 4 Threads:

Let’s say the array A = [1, 2, 3, 4, 5, 6, 7, 8, 9] and we have 4 threads per block with a radius of 2.

-

The shared memory will hold the data

A[0], A[1], A[2], A[3], A[4], A[5], A[6], A[7]. This way, each thread can access its own element and also the elements required by its neighboring threads. - Thread 0 calculates the sum of

A[0] + A[1] + A[2] + A[3] + A[4]. - Thread 1 calculates the sum of

A[1] + A[2] + A[3] + A[4] + A[5]. - Thread 2 calculates the sum of

A[2] + A[3] + A[4] + A[5] + A[6]. - Thread 3 calculates the sum of

A[3] + A[4] + A[5] + A[6] + A[7].

Benefits:

- Memory Efficiency: Each thread only loads the data it needs into shared memory once, instead of repeatedly loading the same data from global memory.

- Faster Access: Shared memory is much faster to access than global memory, leading to a significant speedup in computation.

- Cooperation Between Threads: Threads within a block cooperate to load the data into shared memory, making the computation more efficient.

3. How It Works in Shared Memory

In the GPU architecture:

- Global Memory: Is slower and shared across all threads of all blocks.

- Shared Memory: Is much faster but only accessible by threads within the same block. It’s ideal for situations where threads need to perform similar operations on neighboring elements of large datasets.

Final Thoughts:

- By using shared memory, we avoid the overhead of redundant global memory accesses, and the threads in a block work together more efficiently.

- This approach is especially helpful for tasks like convolution operations, matrix multiplications, and other tasks where threads need to perform similar operations on neighboring elements of large datasets.

This process greatly speeds up computations, particularly in deep learning or scientific computing tasks that require processing large arrays of data. 🎯

4. Step-by-Step Code Explanation 🔍

- Shared Memory Allocation: The shared memory array

tempholds data for the entire block of threads. It’s sized based on the number of threads per block and the radius of the window. 🏗️ - Index Calculations: The

basevariable calculates the starting index for the block, andout_idxis the output index for the current thread. ⏱️ - Loading Data into Shared Memory: Each thread loads its corresponding element from global memory into shared memory (

temp). 🌍 - Handling Edge Elements: Threads at the beginning of the block also load additional data into shared memory to ensure the entire window is covered. 🛠️

- Synchronization: This ensures all threads have finished loading data into shared memory before moving forward. 🔒

- Sum Calculation: Each thread sums the values within the sliding window using data from shared memory. 🧮

5. Advantages of Shared Memory 🚀

- Reduced Global Memory Access: Shared memory significantly reduces the number of accesses to global memory. Threads load only a minimal number of elements. 🚫

- Increased Memory Reuse: Data in shared memory can be reused by all threads in the block, eliminating redundant loads. 🔁

- Faster Memory Access: Shared memory is much faster to access than global memory, leading to performance improvements. ⚡

V. Case Study: Matrix Multiplication on GPU

Tiling

Dividing large problems into smaller subproblems (tiles) to improve data locality and reuse.

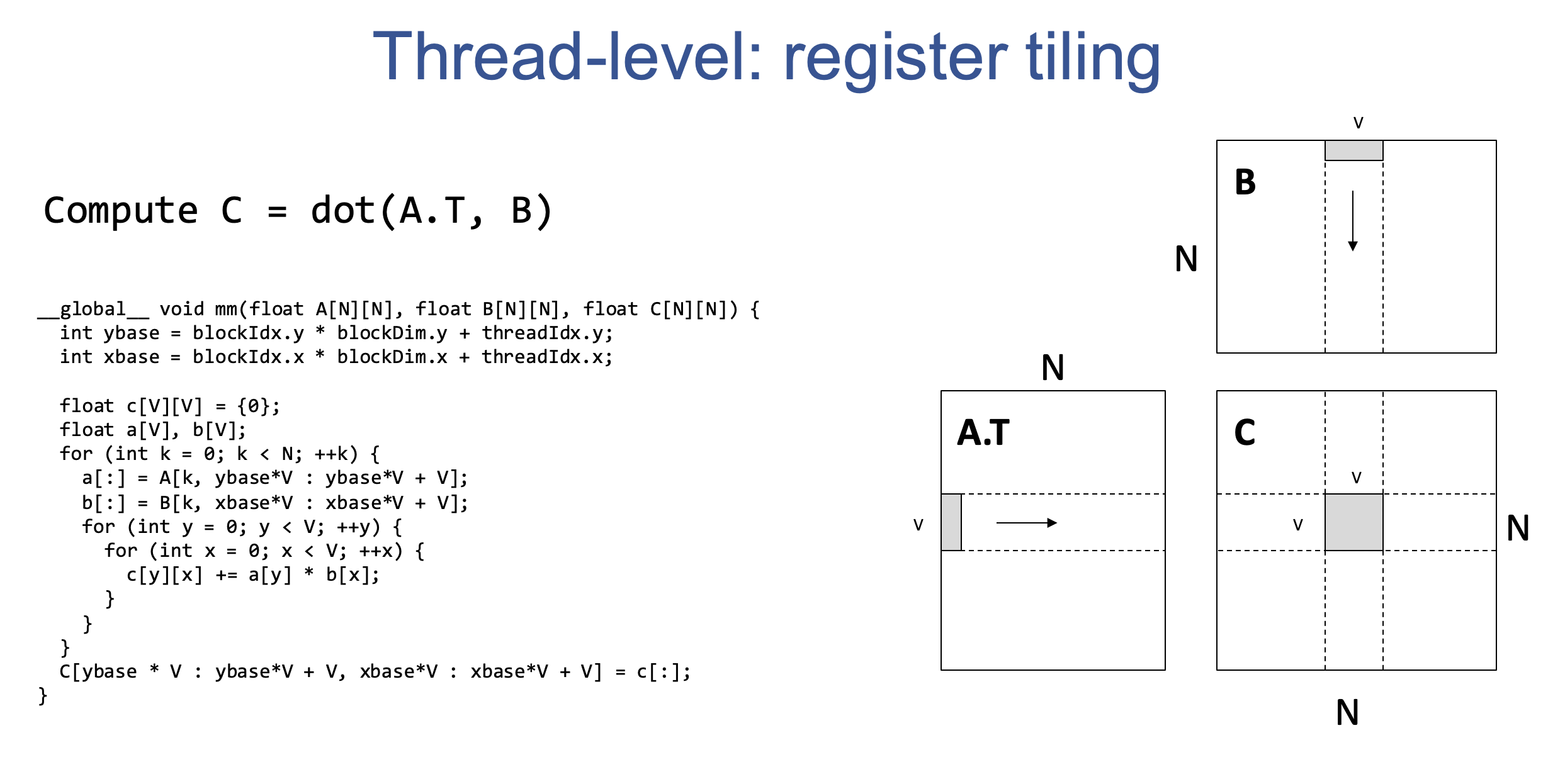

Register Tiling

- Each thread computes on a submatrix (V x V) of the output matrix.

- Input data (A and B) is loaded into registers for fast access.

- Increases data reuse within a single thread.

Shared Memory Tiling

- Each block computes a submatrix (L x L), and each thread computes a submatrix of V x V.

- Input blocks of A and B are first loaded into shared memory for reuse across all threads in the block.

- Threads load from the shared memory into registers to perform computations.

“We are going to first iterate over the reduction dimension in each case we want to be able to fetch the corresponding data first onto the shared memory” 🔄

Cooperative Fetching

Individual threads cooperatively fetch data from global memory into shared memory in an efficient way.

“Each of the threads in here is going to correspond to a cooperative fetching among multiple threads where each of the threads is going to perform one part of the job” 🔗

Memory Reuse Analysis

- Global memory to shared memory reuse.

- Shared memory to register reuse.

Careful selection of tiling factors (L and V) is crucial for performance and is often done through auto-tuning.

Trade-offs

Choosing the correct values of L and V is difficult due to multiple factors, including:

- Number of registers available for each thread vs total number of threads in a single Stream Multiprocessor (SM).

- Size of shared memory that affects how many thread blocks can fit into each SM.

- Data loading speed due to memory access patterns.

“When we are trying to pick for example the values of L and V, it is something that is affected by a lot of factors” 🧠

Details

🚀 GPU Matrix Multiplication: Register and Shared Memory Tiling

Matrix multiplication on GPUs can be significantly accelerated using techniques like register tiling and shared memory tiling. These methods optimize memory access and data reuse, crucial for maximizing performance on GPUs. Let’s break it down step by step! 🔧

1. The Goal: Matrix Multiplication 🎯

-

We want to compute matrix multiplication:

C = A * B

More specifically:

Cᵢⱼ = Σₖ Aᵢₖ * Bₖⱼ

This can also be written as:

C = dot(Aᵀ, B), meaning:

Cᵢⱼ = Σₖ Aₖᵢ * Bₖⱼ -

The goal is to perform these calculations in parallel on the GPU for speedup.

2. Register Tiling (Thread-Level Tiling) 🧱

📌 Concept:

- Register tiling divides matrices into smaller submatrices that fit into the GPU’s registers.

🔧 Mechanism:

- Submatrix of size V x V (where V is the tiling factor) is allocated per thread.

- Each thread computes one V x V submatrix of the output matrix C.

- Two temporary arrays, a and b, of size V are created in each thread’s registers.

- Strips of data from matrices A and B are loaded into a and b.

- The dot product is computed, and results accumulate in the register array c.

- Finally, the computed submatrix is written back to global memory.

🚀 Advantages:

- Memory Reuse: Each element of A and B is reused V times within the registers.

- Speed: Register access is very fast, boosting performance.

3. Shared Memory Tiling (Block-Level Tiling) 🧩

📌 Concept:

- This technique leverages shared memory for further optimization, enabling threads in a block to share data.

🔧 Mechanism:

- Each thread block computes a L x L submatrix of C (L = tiling factor).

- Each thread performs a V x V computation inside this block.

- Shared memory arrays, sA and sB, of size S x L are allocated.

- Threads cooperate to load data from global memory into shared memory.

- After loading, threads access data from shared memory into registers (a and b) for computation.

- Results are stored in the register array (c) and written back to global memory.

🚀 Advantages:

- Increased Reuse: Data loaded into shared memory is reused across threads.

- Cooperative Loading: Threads load data together, reducing redundant loads.

- Higher Performance: Combining register tiling with shared memory tiling maximizes efficiency.

4. Memory Reuse Analysis 📊

- Global → Shared Memory: Data reuse factor of L. Memory loads reduced by L times.

- Shared → Registers: Data reuse factor of V. Memory loads reduced by V times.

🔹 Total Reduction:

- Global to Shared:

2 * N³ / L - Shared to Registers:

2 * N³ / V

5. Cooperative Fetching 🤝

- Threads work together to load data into shared memory, avoiding redundancy.

🔧 How It Works:

- nthreads = blockDim.y * blockDim.x (total threads in a block).

- Each thread has a unique ID:

tid = threadIdx.y * blockDim.x + threadIdx.x - A loop (j) distributes the load:

Threads calculate the coordinates:

y = (j * nthreads + tid) / L

x = (j * nthreads + tid) % L - Data is loaded as:

s[y, x] = A[k + y, yblock * L + x]

6. Choosing L and V ⚖️

Choosing the best values for L and V involves balancing several factors:

- Register Usage:

- Larger V → More reuse within threads but fewer concurrent threads.

- Shared Memory Size:

- Larger L → More reuse but limits blocks per Streaming Multiprocessor (SM).

- Thread Parallelism:

- More threads → Better performance (hides latency).

🔹 Trade-off:

- Fewer threads with large registers OR

- More threads with smaller registers.

🔹 Auto-Tuning:

- Test various L and V values to find the optimal combination.

VI. Further GPU Optimization Techniques

- Continuous Memory Access: Ensuring threads read from contiguous regions of memory to optimize memory access.

- Shared Memory Bank Conflict: Avoid conflicts when multiple threads in a warp (subgroup of threads) access the same memory banks.

- Software Pipelining: Overlapping data loading with computation for increased throughput.

- Warp Level Optimizations: Exploiting the smaller granularity of warps for collective computations.

- Tensor Cores: Using specialized hardware units on modern GPUs for accelerated matrix operations.

“All those techniques are important to really get us the maximum benefit of a GPU accelerator” 🎯